"Google has the entire chain in its hands. It does not rely on Nvidia and possesses efficient, low-cost computing sovereignty."

Author: Ma Leilei

Source: Wu Xiaobo Channel CHANNELWU

Warren Buffett once said, "Never invest in a business you cannot understand." Yet as the "Oracle of Omaha" era draws to a close, Buffett made a decision that breaks his own house rule: he bought Google stock at a steep valuation of about 40 times free cash flow.

Yes, Buffett bought "AI-themed stocks" for the first time, and it was not OpenAI or Nvidia. All investors are asking one question: Why Google?

Go back to the end of 2022. ChatGPT had just burst onto the scene, and Google's executives sounded a "red alert." They held numerous meetings and even urgently called back the two founders. Yet at the time, Google looked like a slow-moving, bureaucratic dinosaur.

It hurriedly launched the chatbot Bard, but the chatbot made a factual error during its demonstration. The company's stock plunged, wiping out over a hundred billion dollars in market value in a single day. Google then consolidated its AI teams and launched the multimodal Gemini 1.5.

However, this product, seen as a trump card, only sparked a few hours of discussion in the tech community before being overshadowed by OpenAI's subsequent release of the video generation model Sora, quickly becoming irrelevant.

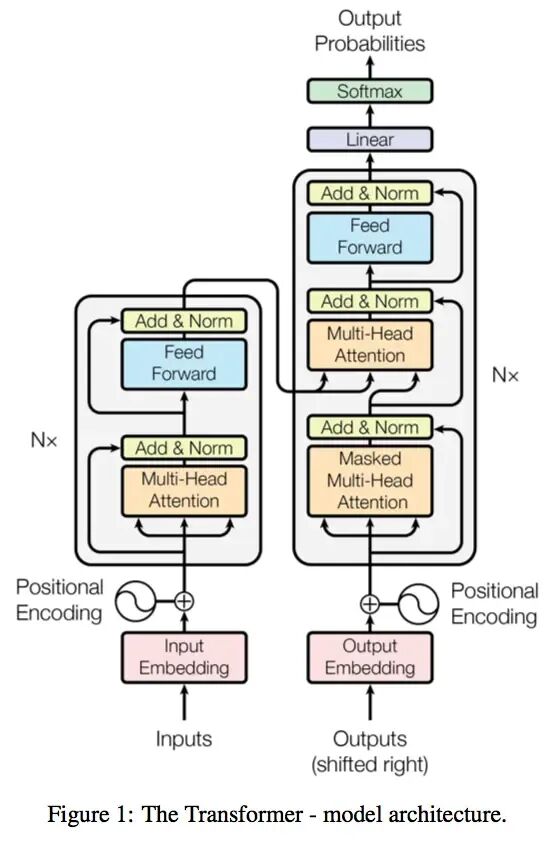

Somewhat awkwardly, it was Google's researchers who published a groundbreaking academic paper in 2017, laying a solid theoretical foundation for this round of AI revolution.

The paper "Attention Is All You Need," which proposed the Transformer model

Competitors mocked Google. OpenAI's CEO Altman looked down on Google's taste, saying, "I can't help but think about the aesthetic differences between OpenAI and Google."

Google's former CEO was also dissatisfied with the company's laziness, stating, "Google has always believed that work-life balance is more important than winning the competition."

This series of predicaments has led to doubts about whether Google has fallen behind in the AI competition.

But change finally came. In November, Google launched Gemini 3, which surpassed competitors, including OpenAI, on most benchmarks. More crucially, Gemini 3 was trained entirely on Google's self-developed TPU chips, which Google now positions as a low-cost alternative to Nvidia's GPUs and has officially begun selling to external customers.

Google has shown its strength on two fronts: on the software side, it answers OpenAI directly with the Gemini 3 series; on the hardware side, it challenges Nvidia's long-standing dominance with its TPU chips.

Kicking OpenAI and punching Nvidia.

Altman felt the pressure as early as last month, stating in an internal letter that Google "may bring some temporary economic headwinds to our company." This week, after reports that major companies were buying TPU chips, Nvidia's stock fell as much as 7% in intraday trading, prompting Nvidia to issue a public statement to reassure the market.

Google CEO Sundar Pichai said in a recent podcast that Google employees should catch up on sleep. "From an external perspective, we may have seemed quiet or behind during that time, but in reality, we were solidifying all the foundational components and pushing forward on that basis."

Now the situation has reversed. Pichai stated, "We have now reached a turning point."

Exactly three years have now passed since the release of ChatGPT. Over those three years, AI has set off a feast of capital and alliances in Silicon Valley; yet beneath the feast, worries about a bubble have emerged. Has the industry reached a turning point?

Overtaking

On November 19, Google released its latest AI model, Gemini 3.

One evaluation showed that across most tests covering expert knowledge, logical reasoning, mathematics, and image recognition, Gemini 3 scored significantly higher than the latest models from other companies, including ChatGPT. Only in a programming ability test did it perform slightly worse, ranking second.

The Wall Street Journal stated, "Let's call it the next generation of top models in the U.S." Bloomberg reported that Google has finally awakened. Musk and Altman praised it. Some netizens joked that this is the GPT-5 Altman envisioned.

The CEO of the cloud content management platform Box, after trying Gemini 3 in advance, stated that the performance improvement was so incredible that they initially doubted their evaluation methods. However, repeated tests confirmed that the model won in all internal assessments by a double-digit margin.

The CEO of Salesforce said he used ChatGPT for three years, but Gemini 3 changed his perception in just two hours: "Holy shit… there's no going back. This is a qualitative leap; reasoning, speed, image and video processing… all sharper and faster. It feels like the world has turned upside down again."

Gemini 3

Why is Gemini 3 performing so outstandingly, and what has Google done?

The head of the Gemini project posted, "Simply put: improved pre-training and post-training." Some analysts say that the model's pre-training still follows the logic of scaling laws: improving pre-training (larger datasets, more efficient training methods, more parameters, and so on) continues to enhance model capabilities.

The person most eager to understand the secrets of Gemini 3 is undoubtedly Altman.

Last month, before the release of Gemini 3, he sent a warning in an internal letter to OpenAI employees, stating, "From any perspective, Google's recent work has been outstanding," especially in pre-training, where Google's progress may bring "some temporary economic headwinds" to the company, and "the atmosphere from the outside will be quite severe for a while."

Although in terms of user numbers, ChatGPT still has a significant advantage over Gemini, the gap is narrowing.

Over these three years, ChatGPT's user base has grown rapidly. In February of this year, it had 400 million weekly active users; by this month, that figure had surged to 800 million. Gemini reports monthly rather than weekly figures: it had 450 million monthly active users in July, a number that has now risen to 650 million.
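To put the two growth figures on a comparable footing, the sketch below converts each into an implied compound monthly growth rate. It is only a rough illustration: it assumes "this month" and "now" both mean November, and it compares weekly actives for ChatGPT with monthly actives for Gemini, which are different metrics. The function name `monthly_growth_rate` is introduced here for illustration.

```python
def monthly_growth_rate(start_users: float, end_users: float, months: int) -> float:
    """Compound monthly growth rate implied by two user counts."""
    if start_users <= 0 or end_users <= 0 or months <= 0:
        raise ValueError("user counts and months must be positive")
    return (end_users / start_users) ** (1.0 / months) - 1.0


# Figures from the article, in millions of users.
# Assumption: both end points fall in November of the same year.
chatgpt_rate = monthly_growth_rate(400, 800, months=9)  # February -> November, weekly actives
gemini_rate = monthly_growth_rate(450, 650, months=4)   # July -> November, monthly actives

print(f"ChatGPT implied growth: {chatgpt_rate:.1%} per month")
print(f"Gemini implied growth:  {gemini_rate:.1%} per month")
```

Under these assumptions, Gemini's implied monthly growth rate comes out somewhat higher than ChatGPT's, which is consistent with the claim that the gap is narrowing, although ChatGPT's absolute user base remains larger.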

With about 90% of the global search market share, Google naturally controls the core channel for promoting its AI models, allowing it to directly reach a vast number of users.

OpenAI is currently valued at $500 billion, making it the highest-valued startup in the world. It is also one of the fastest-growing companies in history, with revenue skyrocketing from nearly zero in 2022 to an estimated $13 billion this year. However, it also expects to burn over $100 billion in the coming years in pursuit of artificial general intelligence, while needing to spend hundreds of billions on server rentals. In other words, it still needs to raise money.

Google has an undeniable advantage: a thicker wallet.

Google's latest quarterly financial report shows that its revenue has surpassed $100 billion for the first time, reaching $102.3 billion, a year-on-year increase of 16%, with profits of $35 billion, a year-on-year increase of 33%. The company's free cash flow is $73 billion, and capital expenditures related to AI are expected to reach $90 billion this year.

It also does not need to worry about its search business being eroded by AI, as its search and advertising still show double-digit growth. Its cloud business is thriving, and even OpenAI rents its servers.

In addition to having self-sustaining cash flow, Google also possesses resources that OpenAI cannot match, such as vast amounts of ready-made data for training and optimizing models, as well as its own computing infrastructure.

On November 14, Google announced a $40 billion investment to build new data centers.

OpenAI, meanwhile, has been deftly striking deals, signing computing power agreements worth over $1 trillion with various parties. So as Google closes in rapidly with Gemini, investors' doubts grow stronger: can the growth OpenAI has promised really cover the gap?

Cracks

A month ago, Nvidia's market value surpassed $5 trillion, and the market's enthusiasm for artificial intelligence pushed this "AI arms dealer" to new heights. However, the TPU chips used by Google's Gemini 3 have opened a crack in Nvidia's solid fortress.

The Economist cited data from investment research firm Bernstein, stating that Nvidia's GPUs account for more than two-thirds of the total cost of a typical AI server rack. In contrast, Google's TPU chips cost only 10% to 50% as much as Nvidia chips of equivalent performance. These savings add up to a considerable amount. Investment bank Jefferies estimates that Google will produce about 3 million of these chips next year, nearly half of Nvidia's output.
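A back-of-the-envelope sketch shows how those two figures combine into rack-level savings. It assumes that accelerators make up exactly two-thirds of rack cost (the source says "more than"), that every other rack component costs the same, and that one TPU replaces one GPU of equivalent performance. The function name `rack_cost_ratio` is introduced here for illustration.

```python
def rack_cost_ratio(accel_share: float, price_ratio: float) -> float:
    """Cost of a rack after swapping accelerators, relative to the original rack.

    accel_share: fraction of the original rack cost spent on accelerator chips
    price_ratio: replacement chip price as a fraction of the original chip price
    """
    if not 0.0 <= accel_share <= 1.0:
        raise ValueError("accel_share must be between 0 and 1")
    if price_ratio < 0.0:
        raise ValueError("price_ratio must be non-negative")
    # The non-accelerator part is unchanged; the accelerator part is rescaled.
    return (1.0 - accel_share) + accel_share * price_ratio


GPU_SHARE = 2.0 / 3.0  # assumption: lower bound of "more than two-thirds"

for price_ratio in (0.10, 0.50):  # the 10% to 50% range cited in the article
    ratio = rack_cost_ratio(GPU_SHARE, price_ratio)
    print(f"TPU at {price_ratio:.0%} of GPU price -> rack costs {ratio:.0%} of original")
```

Under these simplifying assumptions, the rack would cost roughly 40% to 67% of the original, that is, savings of about one-third to three-fifths per rack.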

Last month, the well-known AI startup Anthropic announced plans to adopt Google's TPU chips on a large scale, in a deal reportedly worth tens of billions of dollars. Reports on November 25 indicated that tech giant Meta is also in talks to use TPU chips in its data centers by 2027, in a deal valued at several billion dollars.

Google CEO Sundar Pichai introduces TPU chips

Silicon Valley's other internet giants are also betting on chips, either developing them in-house or partnering with chip companies, but none has progressed as far as Google.

The history of TPU dates back more than a decade. At that time, Google began developing a dedicated acceleration chip for internal use to improve the efficiency of search, maps, and translation. Starting in 2018, it began selling TPUs to cloud computing customers.

Since then, TPUs have also been used to support Google's internal AI development. During the development of models like Gemini, the AI team and the chip team interacted: the former provided actual needs and feedback, and the latter customized and optimized the TPU accordingly, which in turn improved AI development efficiency.

Nvidia currently holds over 90% of the AI chip market. Its GPUs were originally built for realistic rendering of game graphics, relying on thousands of computing cores to process tasks in parallel, an architecture that has also put it far ahead in AI workloads.

In contrast, Google's TPUs are application-specific integrated circuits (ASICs), designed specifically for certain computing tasks. They give up some flexibility and general applicability in exchange for higher energy efficiency. Nvidia's GPUs, on the other hand, are "generalists": flexible in function and highly programmable, but more expensive.

However, at the current stage, no company, including Google, is able to replace Nvidia completely. Although TPUs are now in their seventh generation, Google remains a major customer of Nvidia. One obvious reason is that Google's cloud business serves thousands of customers worldwide, and offering GPU computing power keeps it attractive to those clients.

Even companies purchasing TPUs must embrace Nvidia. Shortly after Anthropic announced its collaboration with Google TPU, it also announced a significant deal with Nvidia.

The Wall Street Journal stated, "Investors, analysts, and data center operators say that Google's TPU is one of the biggest threats to Nvidia's dominance in the AI computing market, but to challenge Nvidia, Google must start selling these chips more widely to external customers."

Google's AI chips have become one of the few alternatives to Nvidia's chips, and the news directly pushed down Nvidia's stock price. Nvidia quickly posted a statement to calm the market panic triggered by the TPU. It said it was delighted by "Google's success" but emphasized that Nvidia is a generation ahead of the industry and that its hardware is more versatile than TPUs and other chips designed for specific tasks.

Nvidia is also under pressure from market concerns about a bubble, with investors fearing that massive capital investments do not match profit prospects. Sentiment can flip at any time: investors fear that Nvidia's business will be taken away, while also worrying that AI chips won't sell.

The well-known American short-seller Michael Burry said he has bet over $1 billion against Nvidia and other tech companies. He rose to fame for shorting the U.S. housing market ahead of the 2008 financial crisis, and his story was later adapted into the critically acclaimed film "The Big Short." He stated that today's AI frenzy resembles the dot-com bubble at the turn of the century.

Michael Burry

Nvidia distributed a seven-page document to analysts, rebutting criticisms from Burry and others. However, this document did not quell the controversy.

Model

Google is enjoying a sweet spot, with its stock price rising against the trend amid fears of an AI bubble. Buffett's company bought its stock in the third quarter, Gemini 3 received positive feedback, and the TPU chips have investors excited, all pushing Google to new heights.

In the past month, AI concept stocks like Nvidia and Microsoft have fallen over 10%, while Google's stock price has risen about 16%. Currently, it ranks third in the world with a market value of $3.86 trillion, only behind Nvidia and Apple.

Analysts describe Google's approach to artificial intelligence as vertical integration.

As a rare "full-stack self-manufacturing" player in the tech circle, Google has the entire chain in its hands: deploying self-developed TPU chips on Google Cloud, training its own AI large models, and seamlessly embedding these models into core businesses like search and YouTube. The advantages of this model are evident; it does not rely on Nvidia and possesses efficient, low-cost computing sovereignty.

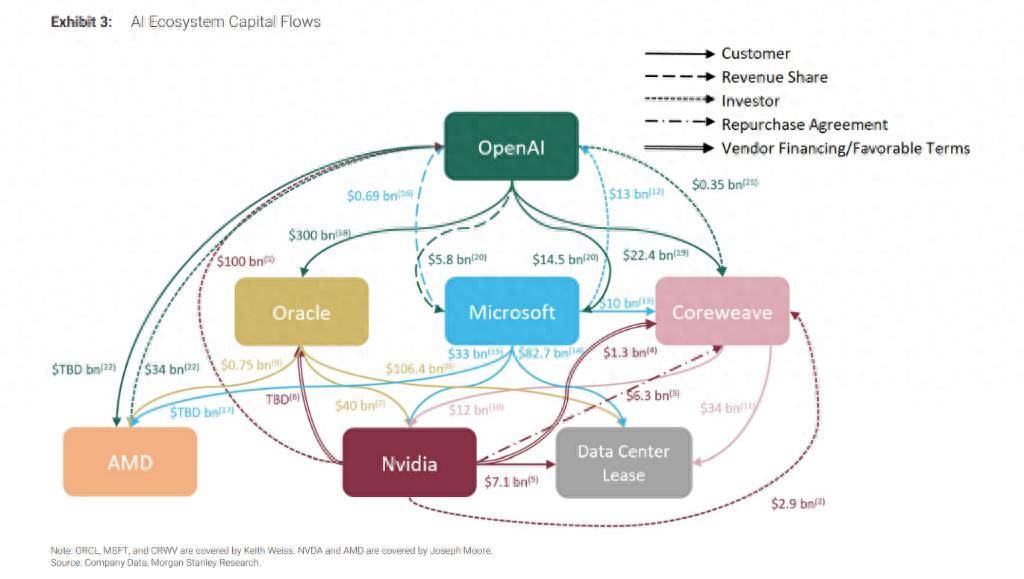

Another model is the more common loose alliance model. Giants perform their respective roles, with Nvidia responsible for GPUs, OpenAI, Anthropic, and others responsible for developing AI models, and cloud giants like Microsoft purchasing GPUs from chip manufacturers to host these AI labs' models. In this network, there are no absolute allies or opponents: when collaboration is possible, they work together for mutual benefit, and when competition arises, they do not hold back.

Players have formed a "circular structure," with funds circulating in a closed loop among a few tech giants.

Generally speaking, the circular financing model works like this: Company A first pays Company B a sum of money (such as investment, loans, or leasing), and then Company B uses that money to purchase products or services from Company A. Without this "startup capital," B might not be able to afford the purchase at all.

One example is OpenAI spending $300 billion to buy computing power from Oracle, which then spends billions to purchase Nvidia chips to build data centers, while Nvidia invests up to $100 billion back into OpenAI—on the condition that OpenAI continues to use its chips. (OpenAI pays $300 billion to Oracle → Oracle uses that money to buy Nvidia chips → Nvidia invests the profits back into OpenAI.)
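The loop described above can be pictured as a small directed graph of payments. The sketch below is only an illustration: it encodes the three flows named in the example as (payer, payee) pairs, deliberately leaves out the dollar amounts, and searches for a closed loop. The function name `find_cycle` is introduced here and is not from any report cited in the article.

```python
def find_cycle(flows):
    """Return one closed loop of payers as a list of names, or None if there is none.

    flows: iterable of (payer, payee) pairs.
    Uses a simple depth-first search, adequate for a handful of companies.
    """
    graph = {}
    for payer, payee in flows:
        graph.setdefault(payer, []).append(payee)

    def visit(node, path):
        if node in path:
            # Money has returned to a company already on the path: loop found.
            return path[path.index(node):] + [node]
        for next_node in graph.get(node, []):
            found = visit(next_node, path + [node])
            if found:
                return found
        return None

    for start in graph:
        loop = visit(start, [])
        if loop:
            return loop
    return None


# The three flows named in the article's example (amounts omitted).
ai_flows = [
    ("OpenAI", "Oracle"),   # OpenAI buys computing power from Oracle
    ("Oracle", "Nvidia"),   # Oracle buys Nvidia chips for its data centers
    ("Nvidia", "OpenAI"),   # Nvidia invests in OpenAI
]

print(" -> ".join(find_cycle(ai_flows)))
```

A chain of payments that never returns to its starting company, such as `[("A", "B"), ("B", "C")]`, yields `None`, which is the difference between ordinary supplier relationships and the circular structure described here.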

Such cases have given rise to a maze-like map of funding. In a report on October 8, Morgan Stanley analysts used a diagram to depict the capital flows in Silicon Valley's AI ecosystem. The analysts warned that the lack of transparency makes it difficult for investors to assess the real risks and returns.

The Wall Street Journal commented on the diagram, saying, "The arrows connecting them are as tangled as a plate of spaghetti."

Fueled by capital, the outline of a giant is still taking shape, and no one knows its true form. Some are alarmed, while others are amazed.
