Special thanks to Devansh Mehta, Davide Crapis and Julian Zawistowski for feedback and review, Tina Zhen, Shaw Walters and others for discussion.

If you ask people what they like about democratic structures, whether governments, workplaces, or blockchain-based DAOs, you will often hear the same arguments: they avoid concentration of power, they give their users strong guarantees because there isn't a single person who can completely change the system's direction on a whim, and they can make higher-quality decisions by gathering the perspectives and wisdom of many people.

If you ask people what they dislike about democratic structures, they will often give the same complaints: average voters are not sophisticated, because each voter only has a small chance of affecting the outcome, few voters put high-quality thought into their decisions, and you often get either low participation (making the system easy to attack) or de-facto centralization because everyone just defaults to trusting and copying the views of some influencer.

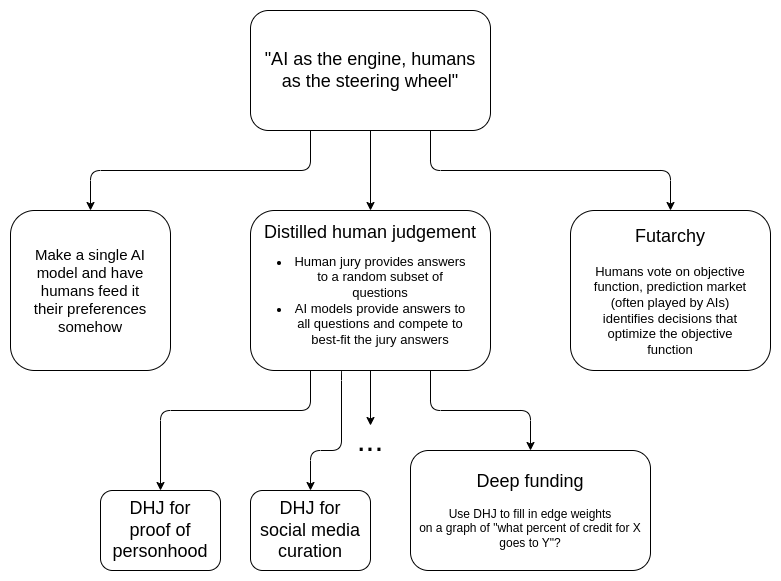

The goal of this post will be to explore a paradigm that could perhaps use AI to get us the benefits of democratic structures without the downsides. "AI as the engine, humans as the steering wheel". Humans provide only a small amount of information into the system, perhaps only a few hundred bits, but each of those bits is a well-considered and very high-quality bit. AI treats this data as an "objective function", and tirelessly makes a very large number of decisions doing a best-effort at fitting these objectives. In particular, this post will explore an interesting question: can we do this without enshrining a single AI at the center, instead relying on a competitive open market that any AI (or human-AI hybrid) is free to participate in?

Table of contents

- Why not just put a single AI in charge?

- Futarchy

- Distilled human judgement

- Deep funding

- Adding privacy

- Benefits of engine + steering wheel designs

Why not just put a single AI in charge?

The easiest way to insert human preferences into an AI-based mechanism is to make a single AI model, and have humans feed their preferences into it somehow. There are easy ways to do this: you can just put a text file containing a list of people's instructions into the system prompt. Then you use one of many "agentic AI frameworks" to give the AI the ability to access the internet, hand it the keys to your organization's assets and social media profiles, and you're done.

After a few iterations, this may end up good enough for many use cases, and I fully expect that in the near future we are going to see many structures involving AIs reading instructions given by a group (or even real-time reading a group chat) and taking actions as a result.

Where this structure is not ideal is as a governing mechanism for long-lasting institutions. One valuable property for long-lasting institutions to have is credible neutrality. In my post introducing this concept, I listed four properties that are valuable for credible neutrality:

- Don't write specific people or specific outcomes into the mechanism

- Open source and publicly verifiable execution

- Keep it simple

- Don't change it too often

An LLM (or AI agent) satisfies 0/4. The model inevitably has a huge amount of specific people and outcome preferences encoded through its training process. Sometimes this leads to the AI having preferences in surprising directions, eg. see this recent research suggesting that major LLMs value lives in Pakistan far more highly than lives in the USA (!!). It can be open-weights, but that's far from open-source; we really don't know what devils are hiding in the depths of a model. It's the opposite of simple: the Kolmogorov complexity of an LLM is in the tens of billions of bits, about the same as that of all US law (federal + state + local) put together. And because of how rapidly AI is evolving, you'll have to change it every three months.

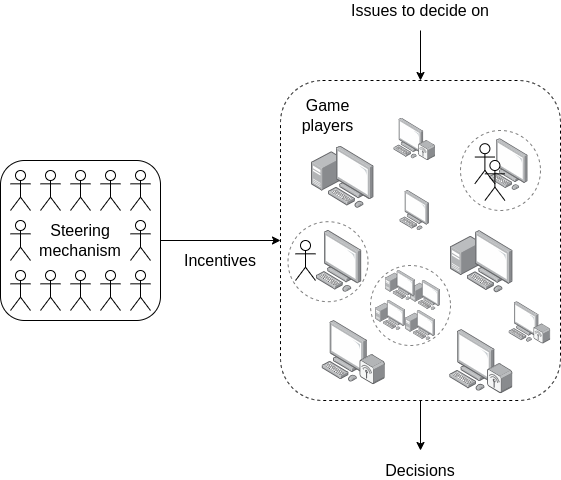

For this reason, an alternative approach that I favor exploring for many use cases is to make a simple mechanism be the rules of the game, and let AIs be the players. This is the same insight that makes markets so effective: the rules are a relatively dumb system of property rights, with edge cases decided by a court system that slowly accumulates and adjusts precedents, and all of the intelligence comes from entrepreneurs operating "at the edge".

The individual "game players" can be LLMs, swarms of LLMs interacting with each other and calling into various internet services, various AI + human combinations, and many other constructions; as a mechanism designer, you do not need to know. The ideal goal is to have a mechanism that functions as an automaton - if the goal of the mechanism is choosing what to fund, then it should feel as much as possible like Bitcoin or Ethereum block rewards.

The benefits of this approach are:

- It avoids enshrining any single model into the mechanism; instead, you get an open market of many different participants and architectures, all with their own different biases. Open models, closed models, agent swarms, human + AI hybrids, cyborgs, infinite monkeys, etc, are all fair game; the mechanism does not discriminate.

- The mechanism is open source. While the players are not, the game is - and this is a pattern that is already reasonably well-understood (eg. political parties and markets both work this way)

- The mechanism is simple, and so there are relatively few routes for a mechanism designer to encode their own biases into the design

- The mechanism does not change, even if the architecture of the underlying players will need to be redesigned every three months from here until the singularity.

The goal of the steering mechanism is to provide a faithful representation of the participants' underlying goals. It only needs to provide a small amount of information, but it should be high-quality information.

You can think of the mechanism as exploiting an asymmetry between coming up with an answer and verifying the answer. This is similar to how a sudoku is difficult to solve, but it's easy to verify that a solution is correct. You (i) create an open market of players to act as "solvers", and then (ii) maintain a human-run mechanism that performs the much simpler task of verifying solutions that have been presented.

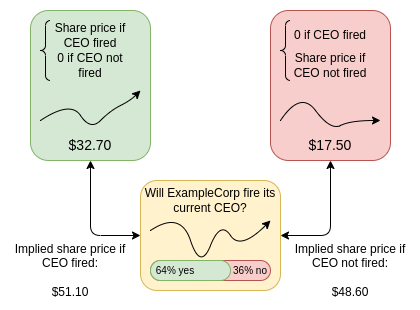

Futarchy

Futarchy was originally introduced by Robin Hanson as "vote values, but bet beliefs". A voting mechanism chooses a set of goals (which can be anything, with the caveat that they need to be measurable) which get combined into a metric M. When you need to make a decision (for simplicity, let's say it's YES/NO), you set up conditional markets: you ask people to bet on (i) whether YES or NO will be chosen, (ii) value of M if YES is chosen, otherwise zero, (iii) value of M if NO is chosen, otherwise zero. Given these three variables, you can figure out if the market thinks YES or NO is more bullish for the value of M.

"Price of the company share" (or, for a cryptocurrency, a token) is the most commonly cited metric, because it's so easy to understand and measure, but the mechanism can support many kinds of metrics: monthly active users, median self-reported happiness of some group of constituents, some quantifiable measure of decentralization, etc.

Futarchy was originally invented in the pre-AI era. However, futarchy fits very naturally in the "sophisticated solver, easy verifier" paradigm described in the previous section, and traders in a futarchy can be AI (or human+AI combinations) too. The role of the "solvers" (prediction market traders) is to determine how each proposed plan will affect the value of a metric in the future. This is hard. The solvers make money if they are right, and lose money if they are wrong. The verifiers (the people voting on the metric, adjusting the metric if they notice that it is being "gamed" or is otherwise becoming outdated, and determining the actual value of the metric at some future time) need only answer the simpler question "what is the value of the metric now?"
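To make the arithmetic behind the three conditional markets concrete, here is a minimal Python sketch. It assumes market prices can be read directly as probabilities or expected values. The function names and example prices are hypothetical and do not come from any real futarchy implementation. Since market (ii) pays M only if YES is chosen, its price is roughly P(YES) * E[M | YES], so dividing by the price of market (i) recovers the market's estimate of M conditional on YES; the NO side works the same way.

```python
def conditional_estimates(p_yes, price_m_if_yes, price_m_if_no):
    """Recover the market's estimate of M under each decision.

    p_yes:          price of market (i), read as the probability of YES
    price_m_if_yes: price of market (ii), "M if YES is chosen, otherwise zero"
    price_m_if_no:  price of market (iii), "M if NO is chosen, otherwise zero"
    """
    if not 0.0 < p_yes < 1.0:
        raise ValueError("p_yes must be strictly between 0 and 1")
    m_given_yes = price_m_if_yes / p_yes
    m_given_no = price_m_if_no / (1.0 - p_yes)
    return m_given_yes, m_given_no


def market_preference(p_yes, price_m_if_yes, price_m_if_no):
    """Return the decision the market considers more bullish for M."""
    m_given_yes, m_given_no = conditional_estimates(
        p_yes, price_m_if_yes, price_m_if_no
    )
    return "YES" if m_given_yes > m_given_no else "NO"


# Hypothetical prices: YES is 60% likely, market (ii) trades at 66,
# market (iii) trades at 40.
print(conditional_estimates(0.6, 66.0, 40.0))
print(market_preference(0.6, 66.0, 40.0))
```

Note that the estimates become unreliable when one outcome is priced as nearly certain, because the division amplifies small pricing errors in the other market.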

Distilled human judgement

Distilled human judgement is a class of mechanisms that works as follows. There is a very large number (think: 1 million) of questions that need to be answered. Natural examples include:

- How much credit does each person in this list deserve for their contributions to some project or task?

- Which of these comments violate the rules of a social media platform (or sub-community)?

- Which of these given Ethereum addresses represent a real and unique human being?

- Which of these physical objects contributes positively or negatively to the aesthetics of its environment?

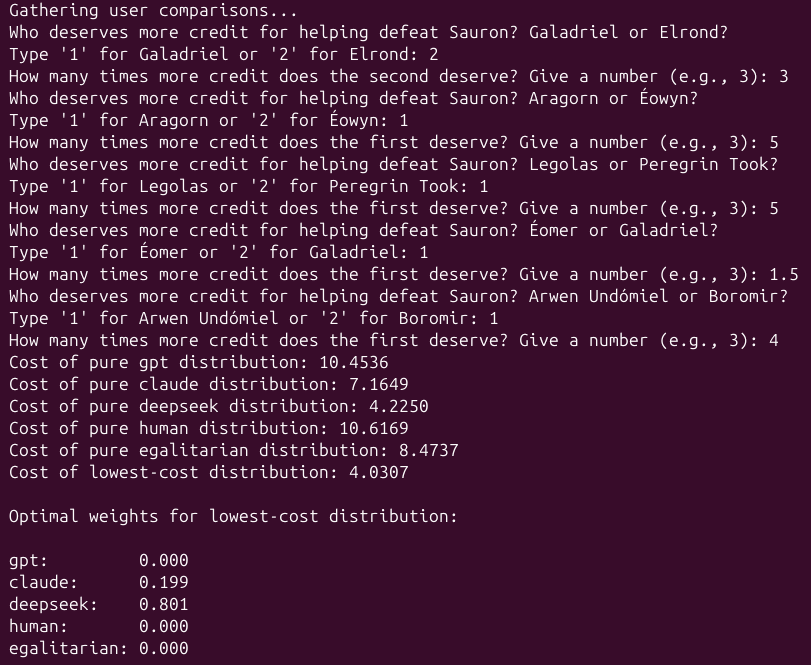

You have a jury that can answer such questions, though at the cost of spending a lot of effort on each answer. You ask the jury to answer only a small number of the questions (eg. if the total list has 1 million items, the jury perhaps only provides answers on 100 of them). You can even ask the jury indirect questions: instead of asking "what percent of total credit does Alice deserve?", you can ask "does Alice or Bob deserve more credit, and how many times more?". When designing the jury mechanism, you can reuse time-tested mechanisms from the real world like grants committees, courts (determining value of a judgement), appraisals, etc, though of course the jury participants are themselves welcome to use new-fangled AI research tools to help them come to an answer.

You then allow anyone to submit a list of numerical responses to the entire set of questions (eg. providing an estimate for how much credit each participant in the entire list deserves). Participants are encouraged to use AI to do this, though they can use any technique: AI, human-AI hybrid, AI with access to internet search and the ability to autonomously hire other human or AI workers, cybernetically enhanced monkeys, etc.

Once the full-list providers and the jurors have both submitted their answers, the full lists are checked against the jury answers, and some combination of the full lists that are most compatible with the jury answers is taken as the final answer.

The distilled human judgement mechanism is different from futarchy, but has some important similarities:

- In futarchy, the "solvers" are making predictions, and the "ground-truth data" that their predictions get checked against (to reward or penalize solvers) is the oracle that outputs the value of the metric, which is run by the jury.

- In distilled human judgement, the "solvers" are providing answers to a very large quantity of questions, and the "ground-truth data" that their predictions get checked against is high-quality answers to a small subset of those questions, provided by a jury.

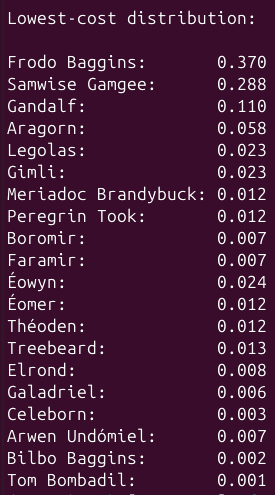

Toy example of distilled human judgement for credit assignment, see Python code here. The script asks you to be the jury, and contains some AI-generated (and human-generated) full lists pre-included in the code. The mechanism identifies the linear combination of full lists that best-fits the jury answers. In this case, the winning combination is 0.199 * Claude's answer + 0.801 * Deepseek's answer; this combination matches the jury answers better than any single model does. These coefficients would also be the rewards given to the submitters.

The "humans as a steering wheel" aspect in this "defeating Sauron" example is reflected in two places. First, there is high-quality human judgement being applied on each individual question, though this is still leveraging the jury as "technocratic" evaluators of performance. Second, there is an implied voting mechanism that determines if "defeating Sauron" is even the right goal (as opposed to, say, trying to ally with him, or offering him all the territory east of some critical river as a concession for peace). There are other distilled human judgement use cases where the jury task is more directly values-laden: for example, imagine a decentralized social media platform (or sub-community) where the jury's job is to label randomly selected forum posts as following or not following the community's rules.

There are a few open variables within the distilled human judgement paradigm:

- How do you do the sampling? The role of the full list submitters is to provide a large quantity of answers; the role of the jurors is to provide high-quality answers. We need to choose jurors, and choose questions for jurors, in such a way that a model's ability to match jurors' answers is maximally indicative of its performance in general. Some considerations include:

  - Expertise vs bias tradeoff: skilled jurors are typically specialized in their domain of expertise, so you will get higher quality input by letting them choose what to rate. On the other hand, too much choice could lead to bias (jurors favoring content from people they are connected to), or weaknesses in sampling (some content is systematically left unrated)

  - Anti-Goodharting: there will be content that tries to "game" AI mechanisms, eg. contributors that generate large amounts of impressive-looking but useless code. The implication is that the jury can detect this, but static AI models do not unless they try hard. One possible way to catch such behavior is to add a challenge mechanism by which individuals can flag such attempts, guaranteeing that the jury judges them (and thus motivating AI developers to make sure to correctly catch them). The flagger gets a reward if the jury agrees with them or pays a penalty if the jury disagrees.

- What scoring function do you use? One idea that is being used in the current deep funding pilots is to ask jurors "does A or B deserve more credit, and how much more?". The scoring function is `score(x) = sum((log(x[B]) - log(x[A]) - log(juror_ratio)) ** 2 for (A, B, juror_ratio) in jury_answers)`: that is, for each jury answer, it asks how far away the ratio in the full list is from the ratio provided by the juror, and adds a penalty proportional to the square of the distance (in log space). This is to show that there is a rich design space of scoring functions, and the choice of scoring functions is connected to the choice of which questions you ask the jurors.

- How do you reward the full list submitters? Ideally, you want to often give multiple participants a nonzero reward, to avoid monopolization of the mechanism, but you also want to satisfy the property that an actor cannot increase their reward by submitting the same (or slightly modified) set of answers many times. One promising approach is to directly compute the linear combination (with coefficients non-negative and summing to 1) of full lists that best fits the jury answers, and use those same coefficients to split rewards. There could also be other approaches.
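The scoring function and the "best-fitting linear combination" idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the code used in the deep funding pilots: the names `blend` and `best_two_way_blend` are hypothetical, the example data is invented, the blend is a plain weighted average of credit values, and the search is a simple grid over the weight for just two submissions. A real round with many submissions would need a constrained optimizer.

```python
import math


def score(full_list, jury_answers):
    """Sum of squared log-space errors against the jury; lower is better.

    Each jury answer is (A, B, juror_ratio), read as "B deserves
    juror_ratio times as much credit as A".
    """
    return sum(
        (math.log(full_list[b]) - math.log(full_list[a]) - math.log(ratio)) ** 2
        for a, b, ratio in jury_answers
    )


def blend(list_1, list_2, weight_1):
    """Convex combination of two full lists (assumed to share the same keys)."""
    return {
        key: weight_1 * list_1[key] + (1.0 - weight_1) * list_2[key]
        for key in list_1
    }


def best_two_way_blend(list_1, list_2, jury_answers, steps=100):
    """Grid-search the weight on list_1 that minimizes the jury score."""
    candidates = [i / steps for i in range(steps + 1)]
    best_weight = min(
        candidates,
        key=lambda w: score(blend(list_1, list_2, w), jury_answers),
    )
    return best_weight, score(blend(list_1, list_2, best_weight), jury_answers)


# Hypothetical submissions and jury answers, for illustration only.
model_a = {"alice": 0.5, "bob": 0.3, "carol": 0.2}
model_b = {"alice": 0.2, "bob": 0.3, "carol": 0.5}
jury_answers = [("alice", "bob", 1.0), ("bob", "carol", 1.0)]

weight_a, blended_score = best_two_way_blend(model_a, model_b, jury_answers)
rewards = {"model_a": weight_a, "model_b": 1.0 - weight_a}
print(rewards, blended_score)
```

Because the coefficients are non-negative and sum to 1, submitting the same list twice does not enlarge the set of achievable blends: the duplicate's weight can only be split between the copies, so the combined reward stays the same.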

In general, the goal is to take human judgement mechanisms that are known to be effective and bias-minimizing and have stood the test of time (eg. think of how the adversarial structure of a court system includes both the two parties to a dispute, who have high information but are biased, and a judge, who has low information but is probably unbiased), and use an open market of AIs as a reasonably high-fidelity and very low-cost predictor of these mechanisms (this is similar to how "distillation" of LLMs works).

Deep funding

Deep funding is the application of distilled human judgement to the problem of filling in the weights of edges on a graph representing "what percent of the credit for X belongs to Y?"

It's easiest to show this directly with an example:

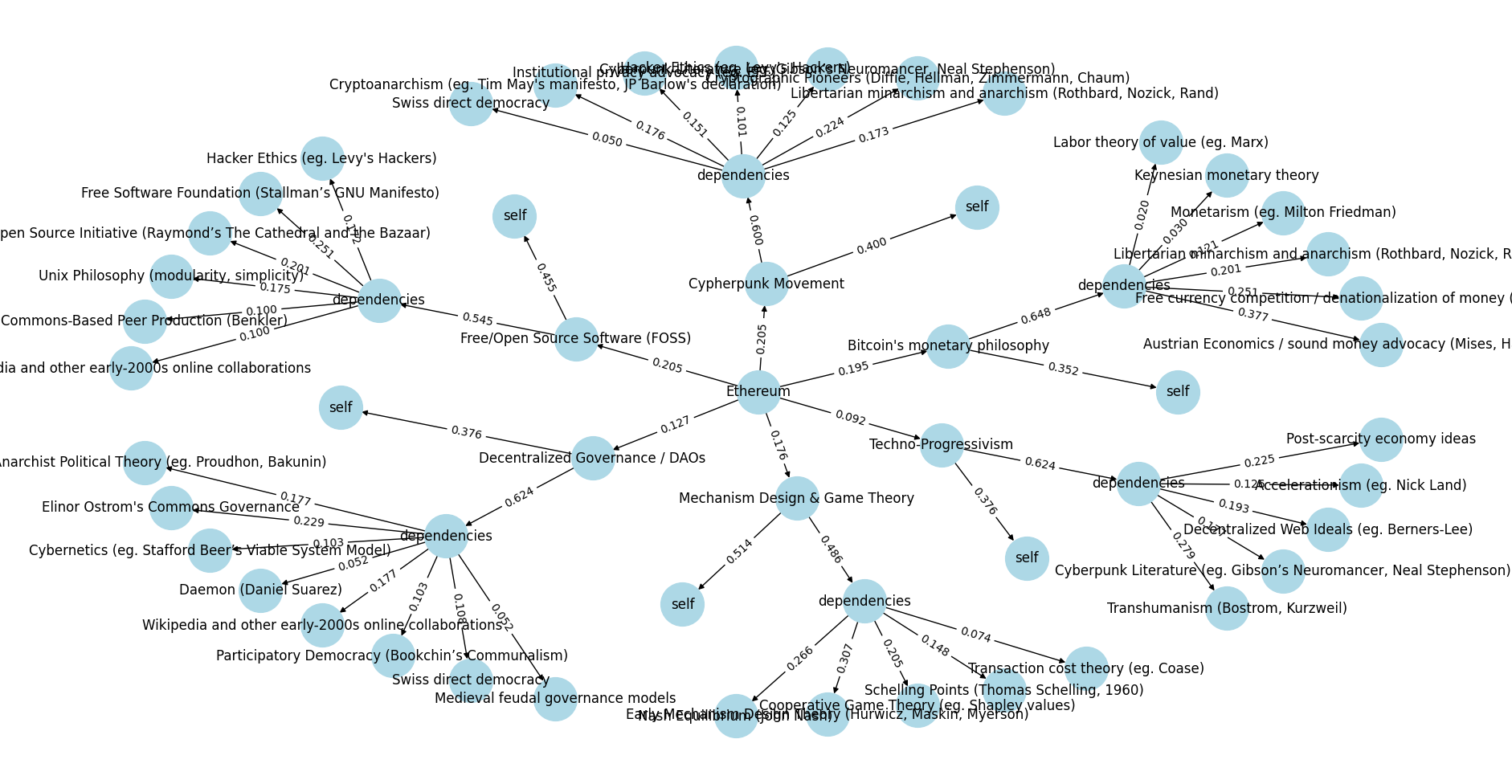

Output of two-level deep funding example: the ideological origins of Ethereum. See Python code here.

Here, the goal is to distribute the credit for philosophical contributions that led to Ethereum. Let's look at an example:

- The simulated deep funding round shown here has assigned 20.5% of the credit to the Cypherpunk Movement and 9.2% to Techno-Progressivism.

- Within each of those nodes, you ask the question: to what extent is it an original contribution (so it deserves credit for itself), and to what extent is it a recombination of other upstream influences? For the Cypherpunk Movement, it's 40% new and 60% dependencies.

- You can then look at influences further upstream of those nodes: Libertarian minarchism and anarchism gets 17.3% of the credit for the Cypherpunk Movement but Swiss direct democracy only gets 5%.

- But note that Libertarian minarchism and anarchism also inspired Bitcoin's monetary philosophy, so there are two pathways by which it influenced Ethereum's philosophy.

- To compute the total share of contribution of Libertarian minarchism and anarchism to Ethereum, you would multiply up the edges along each path, and add the paths: `0.205 * 0.6 * 0.173 + 0.195 * 0.648 * 0.201 ~= 0.0466`. And so if you had to donate $100 to reward everyone who contributes to the philosophies that motivated Ethereum, according to this simulated deep funding round, Libertarian minarchists and anarchists would get $4.66.
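The "multiply along each path, then add the paths" computation can be written out as a short Python sketch. The dictionary names are hypothetical. The figures for the first path come directly from the bullets above; attributing the second path's figures (0.195, 0.648, 0.201) to Bitcoin's monetary philosophy is an inference from the formula and the surrounding text, not something the example states explicitly.

```python
# Share of Ethereum's credit assigned to each first-level node.
root_weights = {
    "Cypherpunk Movement": 0.205,
    "Bitcoin's monetary philosophy": 0.195,  # assumed label for the second path
}

# Fraction of each node's credit that is passed on to its dependencies.
dependency_share = {
    "Cypherpunk Movement": 0.6,
    "Bitcoin's monetary philosophy": 0.648,
}

# Split of the passed-on credit among upstream influences (only the
# entries needed for this example are listed).
upstream_weights = {
    "Cypherpunk Movement": {"Libertarian minarchism and anarchism": 0.173},
    "Bitcoin's monetary philosophy": {"Libertarian minarchism and anarchism": 0.201},
}


def total_share(target):
    """Sum, over all two-hop paths, of the product of the weights on the path."""
    return sum(
        root_weights[node]
        * dependency_share[node]
        * upstream_weights[node].get(target, 0.0)
        for node in root_weights
    )


share = total_share("Libertarian minarchism and anarchism")
print(f"share of credit: {share:.4f}, payout on a $100 donation: ${100 * share:.2f}")
```

For graphs deeper than two levels, the same idea generalizes to a recursive sum over all paths from the root to the target node.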

This approach is designed to work in domains where work is built on top of previous work and the structure of this is highly legible. Academia (think: citation graphs) and open source software (think: library dependencies and forking) are two natural examples.

The goal of a well-functioning deep funding system would be to create and maintain a global graph, where any funder that is interested in supporting one particular project would be able to send funds to an address representing that node, and funds would automatically propagate to its dependencies (and recursively to their dependencies etc) based on the weights on the edges of the graph.
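As a rough illustration of how funds sent to one node could flow to its dependencies, here is a minimal Python sketch. Everything in it is an assumption made for the example: the node names, the `own_share` and `deps` structures, the rule that a node with no listed dependencies keeps everything it receives, and the requirement that the graph is acyclic with each node's dependency weights summing to 1.

```python
from collections import defaultdict


def propagate(node, amount, own_share, deps, payouts=None):
    """Recursively split `amount` between `node` and its dependencies.

    own_share[node]: fraction of incoming funds the node keeps for itself
    deps[node]:      {dependency: weight}, weights summing to 1
    Assumes the dependency graph has no cycles.
    """
    if payouts is None:
        payouts = defaultdict(float)
    children = deps.get(node, {})
    if not children:
        # Leaf node: nothing upstream to pass funds to.
        payouts[node] += amount
        return payouts
    kept = amount * own_share[node]
    payouts[node] += kept
    passed_on = amount - kept
    for child, weight in children.items():
        propagate(child, passed_on * weight, own_share, deps, payouts)
    return payouts


# Hypothetical three-node graph: an app built on a library, both citing a paper.
own_share = {"app": 0.4, "lib": 0.5}
deps = {
    "app": {"lib": 0.75, "paper": 0.25},
    "lib": {"paper": 1.0},
}

payouts = propagate("app", 100.0, own_share, deps)
print(dict(payouts))
```

In this sketch a node reachable by several paths, such as `paper`, simply accumulates the funds arriving along each of them, which mirrors the path-summing logic used for credit shares.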

You could imagine a decentralized protocol using a built-in deep funding gadget to issue its token: some in-protocol decentralized governance would choose a jury, and the jury would run the deep funding mechanism, as the protocol automatically issues tokens and deposits them into the node corresponding to itself. By doing so, the protocol rewards all of its direct and indirect contributors in a programmatic way reminiscent of how Bitcoin or Ethereum block rewards rewarded one specific type of contributor (miners). By influencing the weights of the edges, the jury gets a way to continuously define what types of contributions it values. This mechanism could function as a decentralized and long-term-sustainable alternative to mining, sales or one-time airdrops.

Adding privacy

Often, making good judgements on questions like those in the examples above requires having access to private information: an organization's internal chat logs, information confidentially submitted by community members, etc. One benefit of "just using a single AI", especially for smaller-scale contexts, is that it's much more acceptable to give one AI access to the information than to make it public for everyone.

To make distilled human judgement or deep funding work in these contexts, we could try to use cryptographic techniques to securely give AIs access to private information. The idea is to use multi-party computation (MPC), fully homomorphic encryption (FHE), trusted execution environments (TEEs) or similar mechanisms to make the private information available, but only to mechanisms whose only output is a "full list submission" that gets directly put into the mechanism.

If you do this, then you would have to restrict the set of mechanisms to just being AI models (as opposed to humans or AI + human combinations, as you can't let humans see the data), and in particular models running in some specific substrate (eg. MPC, FHE, trusted hardware). A major research direction is figuring out near-term practical versions of this that are efficient enough to make sense.

Benefits of engine + steering wheel designs

Designs like this have a number of promising benefits. By far the most important one is that they allow for the construction of DAOs where human voters are in control of setting the direction, but they are not overwhelmed with an excessively large number of decisions to make. They hit the happy medium where each person doesn't have to make N decisions, but they have more power than just making one decision (how delegation typically works), and in a way that is more capable of eliciting rich preferences that are difficult to express directly.

Additionally, mechanisms like this seem to have an incentive smoothing property. What I mean here by "incentive smoothing" is a combination of two factors:

- Diffusion: no single action that the voting mechanism takes has an overly large impact on the interests of any one single actor.

- Confusion: the connection between voting decisions and how they affect actors' interests is more complex and difficult to compute.

The terms confusion and diffusion here are taken from cryptography, where they are key properties of what makes ciphers and hash functions secure.

A good example of incentive smoothing in the real world today is the rule of law: the top level of the government does not regularly take actions of the form "give Alice's company $200M", "fine Bob's company $100M", etc, rather it passes rules that are intended to apply evenly to large sets of actors, which then get interpreted by a separate class of actors. When this works, the benefit is that it greatly reduces the benefits of bribery and other forms of corruption. And when it's violated (as it often is in practice), those issues quickly become greatly magnified.

AI is clearly going to be a very large part of the future, and this will inevitably include being a large part of the future of governance. However, if you are involving AI in governance, this has obvious risks: AI has biases, it could be intentionally corrupted during the training process, and AI technology is evolving so quickly that "putting an AI in charge" may well realistically mean "putting whoever is responsible for upgrading the AI in charge". Distilled human judgement offers an alternative path forward, which lets us harness the power of AI in an open free-market way while keeping a human-run democracy in control.

Anyone interested in more deeply exploring and participating in these mechanisms today is highly encouraged to check out the currently active deep funding round at https://cryptopond.xyz/modelfactory/detail/2564617.
