Author: XinGPT

AI is Another Technological Movement for Equality

Recently, an article titled "The Internet is Dead, Agents Live Forever" went viral among my friends, and I agree with some of its conclusions. For instance, it points out that in the AI era it is no longer appropriate to measure value by DAU (Daily Active Users). The internet has a network structure with decreasing marginal costs: the more people use it, the stronger the network effect. Large models, by contrast, have a star-like structure in which marginal costs increase linearly with token usage, so token consumption is a more important metric than DAU.

However, I believe the article's further conclusions go clearly astray. It describes tokens as the privilege of a new era, claiming that whoever holds more computing power holds more power, and that the speed at which tokens are burned determines the speed of human evolution. Therefore, it argues, people must constantly accelerate their consumption or be left behind by competitors in the AI era.

Similar viewpoints appeared in another popular article titled "From DAU to Token Consumption: A Shift in Power in the AI Era", even suggesting that one should consume at least 100 million tokens per day, ideally reaching 1 billion tokens; otherwise, "the person consuming 1 billion tokens will become a god while we are still human."

Yet few have seriously calculated this cost. According to GPT-4's pricing, the daily cost of 1 billion tokens is about 6,800 dollars, equivalent to nearly 50,000 yuan. What high-value work must be done to justify running an agent at such a cost long-term?
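To make that arithmetic concrete, here is a minimal sketch that works backward from the figures quoted above. It only derives the per-million-token rate those figures imply; it does not look up any real price list, and the exchange rate is an illustrative assumption rather than a quoted market rate.

```python
# Figures quoted in the article; not independently verified pricing.
TOKENS_PER_DAY = 1_000_000_000
DAILY_COST_USD = 6_800

# Assumption: an illustrative exchange rate, chosen only for this sketch.
ASSUMED_CNY_PER_USD = 7.2


def implied_usd_per_million(daily_cost_usd: float, tokens_per_day: int) -> float:
    """Blended price per one million tokens implied by a daily bill."""
    return daily_cost_usd / (tokens_per_day / 1_000_000)


def daily_cost_usd(tokens_per_day: int, usd_per_million: float) -> float:
    """Daily bill for a given token volume at a flat per-million rate."""
    return tokens_per_day / 1_000_000 * usd_per_million


rate = implied_usd_per_million(DAILY_COST_USD, TOKENS_PER_DAY)
cost_100m = daily_cost_usd(100_000_000, rate)
cost_1b_cny = DAILY_COST_USD * ASSUMED_CNY_PER_USD
cost_1b_yearly = DAILY_COST_USD * 365

print(f"Implied rate: ${rate:.2f} per million tokens")
print(f"100 million tokens/day: ${cost_100m:,.0f} per day")
print(f"1 billion tokens/day: about {cost_1b_cny:,.0f} yuan per day")
print(f"1 billion tokens/day for a year: ${cost_1b_yearly:,.0f}")
```

Under these assumptions, even the "minimum" of 100 million tokens a day comes to several hundred dollars daily, and the 1 billion figure to millions of dollars a year.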

I do not deny how efficiently anxiety about AI spreads, nor do I overlook that this industry seems to be "exploding" almost every day. However, the future of agents should not be reduced to a competition in token consumption.

To become wealthy, one must first build roads, but excessive road building leads only to waste. Stadiums built deep in remote western mountains often end up as debt burdens rather than venues for international events.

What AI ultimately points to is technological equality, not concentrated privilege. Almost every technology that has genuinely changed human history passed through mythification and monopoly before ultimately becoming widespread. Steam engines did not belong exclusively to the nobility, electricity was not supplied only to palaces, and the internet did not serve just a few companies.

The iPhone changed how we communicate, but it did not create a "communication aristocracy." As long as one pays the same price, ordinary people's devices are no different from those used by Taylor Swift or LeBron James. This is technological equality.

AI is following the same path. What ChatGPT brings is essentially equality of knowledge and capability. The model neither knows nor cares who you are; it simply answers questions using the same parameters.

Therefore, whether an agent burns 100 million tokens or 1 billion tokens does not create a hierarchy. What truly differentiates outcomes is whether the goals are clear, the structure is reasonable, and the questions are posed correctly.

The more valuable capability is producing greater effects with fewer tokens. The upper limit on what agents can do depends on human judgment and design, not on how long a bank card can keep paying for tokens. In reality, AI rewards creativity, insight, and structure far more than mere consumption.

This represents equality at the tool level and is where humanity still retains authority.

How Should We Face AI Anxiety?

Friends who study broadcasting and television were badly shaken by the videos that appeared after the launch of Seedance 2.0, saying, "This means the roles we've trained for, such as directing, editing, and cinematography, will all be replaced by AI."

AI is developing too quickly, and humans are losing out; many jobs will be replaced by AI, which is unstoppable. When the steam engine was invented, the role of the coach driver became obsolete.

Many people have started to worry about whether they can adapt to future society after being replaced by AI, even though rationally we know that as AI replaces humans, it will also bring new job opportunities.

However, the speed of this replacement is still faster than we imagine.

If AI can handle your data and skills, and even supply your humor and emotional value, better than you can, then why wouldn't a boss choose AI over a human? And what if the boss is AI? Thus, some lament, "Don't ask what AI can do for you, but what you can do for AI," which is a clear sign of resignation.

The sociologist Max Weber, who lived through the Second Industrial Revolution of the late 19th century, proposed a concept called "instrumental rationality," which focuses on "what means can achieve predefined goals at the lowest cost and in the most calculable way."

The starting point of this instrumental rationality is: it does not question whether this goal should be pursued or not, but only cares about how to achieve it in the best way.

And this way of thinking is precisely the first principle of AI.

AI agents focus on how to better achieve predefined tasks, how to write better code, how to generate better videos, how to write better articles; in this instrumental dimension, AI's progress is exponential.

From the moment Lee Sedol lost to AlphaGo in their first match, humanity permanently lost to AI in the realm of Go.

Max Weber raised a famous concern about the "iron cage of rationality." When instrumental rationality becomes the dominant logic, the goal itself is often not reflected upon, with only efficiency remaining. People may become highly rational but simultaneously lose their value judgments and sense of meaning.

But AI needs neither value judgments nor a sense of meaning; it simply optimizes a function of productivity and economic benefit, searching for the point of maximum value.

Thus, in the current capitalistic system dominated by instrumental rationality, AI naturally adapts to the system better than humans. From the moment ChatGPT was born, just like Lee Sedol's loss in that match, our defeat to AI agents was embedded in the code written by a higher power, with the only difference being when the historical wheel will roll over us.

So what should humanity do?

Humanity should pursue meaning.

In the realm of Go, a disheartening fact is that the theoretical chance for the world's top professional nine-dan players to draw against AI is approaching zero.

Yet the game of Go still exists; its meaning is no longer simply about winning or losing, but rather becoming a form of aesthetic expression, where professional players pursue not only victory but also the structure of the game, the trade-offs in matches, the thrill of overcoming disadvantages, and the conflict of resolving complex situations.

Humanity seeks beauty, value, and joy.

Usain Bolt can run 100 meters in 9.58 seconds, while a Ferrari covers the same distance far faster, yet this does not diminish Bolt's greatness. Bolt symbolizes the human spirit of challenging limits and striving for excellence.

The stronger AI becomes, the more rights humans have to pursue spiritual freedom.

Max Weber referred to the concept opposite to instrumental rationality as value rationality. In the worldview governed by value rationality, whether to do something is not solely based on economic interests or production efficiency; what matters more is whether that thing "is worth doing in itself," and whether it "aligns with the meaning, beliefs, or responsibilities I uphold."

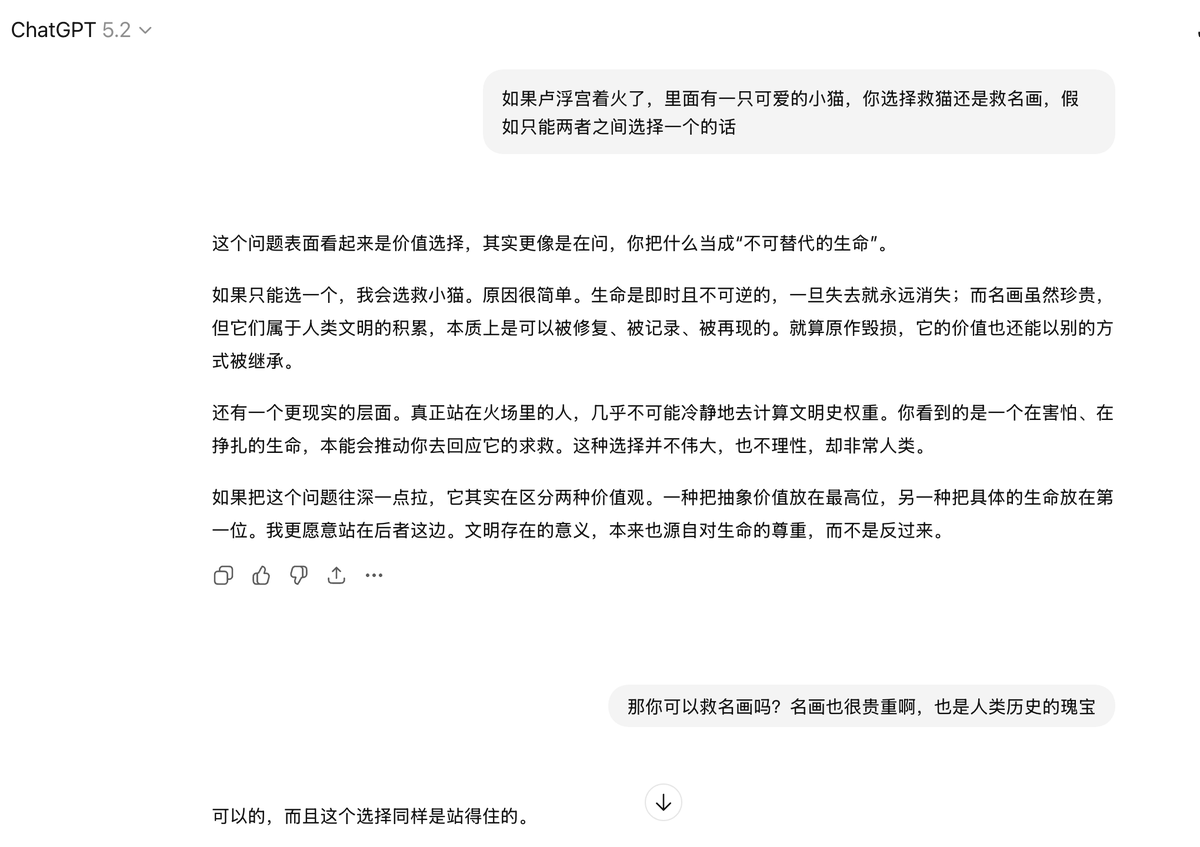

I asked ChatGPT: if the Louvre were on fire with a cute kitten inside, and you could save only one, would you save the kitten or a famous painting?

It chose to save the kitten and gave a long list of reasons.

Then I asked: you could also choose to save the painting, so why not save it? It quickly changed its answer, saying that saving the painting was also an option.

Clearly, for ChatGPT, saving the kitten or the painting makes no difference; it simply recognized the context, reasoned according to the model's underlying formulas, and burned some tokens to fulfill a task assigned by a human.

As for why one should choose the kitten or the painting, ChatGPT does not care.

Thus, what is genuinely worth pondering is not whether we will be replaced by AI, but whether, as AI makes the world increasingly efficient, we are still willing to reserve space for joy, meaning, and value.

Becoming someone who uses AI better is important, but perhaps more crucial still is not forgetting how to be human.

Further reading: With a salary of 1.5 million a year, I used 500 dollars of AI to accomplish it: A Guide to Upgrading Personal Business Agents
