Original Title: "The job with an annual salary of 1.5 million, I completed with AI worth 500 dollars: A guide to upgrading personal business agents."

Original Author: XinGPT, cryptocurrency researcher

During the Spring Festival of 2026, I made a decision: to turn all of my business processes into agents.

Today, one week later, the system already handles nearly a third of my operations. It is still being improved, but my daily routine work has dropped from 6 hours to 2 hours, while business output has increased by 300%.

More importantly, I have validated a hypothesis: The transformation of personal business into agents is feasible, and I believe everyone should build such an operating system.

Having an agent system means a complete shift in your thinking, from "How do I complete this work?" to "What kind of agent should I build to complete this work?" The impact of this shift from passive to proactive thought is profound.

In this article, I will not serve up feel-good, AI-generated content, nor will I deliberately stoke anxiety about being replaced by AI. Instead, I will break down step by step how I carried out this transformation, and how you can replicate the method for free.

This is the first article in a series on building an agent productivity system. Save it and follow along so you don't miss future updates.

Why Agentization is a Necessity, Not an Option

Let's first discuss a harsh reality:

If your business model is "exchanging time for income," then your income ceiling is already locked by the laws of physics. There are only 24 hours in a day, and even if you work all year round, the limit on hourly billing is still there.

· Fund manager's salary: ¥1.5 million/year ≈ ¥720/hour (based on 2080 working hours)

· Consulting partner's salary: ¥2 million/year ≈ ¥960/hour

· Top finance KOL annual income: ¥3 million/year ≈ ¥1440/hour

Seems high? But this is already the limit of the manpower model.
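The hourly figures above come from dividing annual income by 2080 working hours. A minimal sketch of that arithmetic follows; the function name `hourly_rate` and the dictionary layout are only illustrative, and the article rounds the results to ¥720, ¥960, and ¥1440.

```python
# 2080 working hours per year, the figure the article uses (52 weeks x 40 hours).
WORKING_HOURS_PER_YEAR = 2080

def hourly_rate(annual_income_cny: float, hours: int = WORKING_HOURS_PER_YEAR) -> float:
    """Convert an annual income in CNY into an implied hourly rate."""
    return annual_income_cny / hours

# Annual incomes quoted in the article.
salaries = {
    "Fund manager": 1_500_000,
    "Consulting partner": 2_000_000,
    "Top finance KOL": 3_000_000,
}

for role, annual in salaries.items():
    print(f"{role}: about CNY {hourly_rate(annual):.0f}/hour")
```

However high the annual figure, the divisor stays fixed, which is the ceiling the manpower model cannot escape.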

The rationale for agentization is completely different: Your income is no longer determined by working hours, but by the operational efficiency of the system.

A real turning point

On a Friday night at 11 PM in January 2026, I was still at my computer organizing the market data from that day.

The US market had plunged, and I needed to:

· Read through 50+ important news articles

· Analyze after-hours performance of 10 key companies

· Update my investment portfolio strategy

· Write a market interpretation article

I calculated that I would need at least 3 more hours. And the next day at 8 AM, I would have to repeat the same process.

At that moment, I suddenly realized: I was not spending my time on the thinking and decision-making of investment analysis; I was just moving data around.

Decisions that truly required my judgment probably only accounted for 20% of my time. The remaining 80% was all about repetitive information collection and organization.

This was the starting point for my decision to agentize.

My investment research agent system now automatically processes:

· 20,000+ global finance news articles

· Updates from 50+ companies' earnings reports

· 30+ macroeconomic indicators

· 10+ industry research reports

If done manually, this would require a 5-person team. My cost is: $500 a month for API calls + 1 hour of review time each day.

This is the essence of agentization: Using algorithms to replicate your judgment framework, replacing manpower costs with API costs.

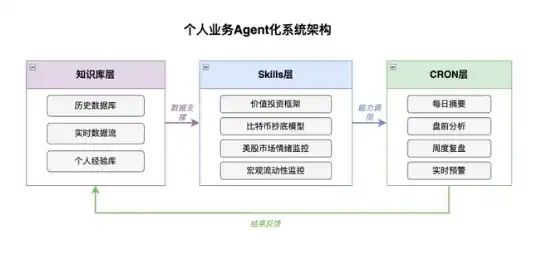

01 Deconstruct Your Business: The Three Layers from Human to System

Any knowledge work can be dismantled into three layers:

The First Layer: Knowledge Base

This is the "memory system" of the agent.

Taking investment research as an example, my approach was to build a knowledge base containing the information and data I needed for investing, which includes:

1. Historical Database

· Macroeconomic data from the past 10 years (Federal Reserve policy, CPI, nonfarm payrolls)

· Earnings report data from the Top 50 US companies

· Recap notes of significant market events (2008 financial crisis, 2020 pandemic, 2022 rate hike cycle)

2. Important Indicators and News

· Major financial media and information channels I follow

· Federal Reserve policies and the dates major companies release earnings reports

· 50 Twitter accounts I follow (macro analysts, fund managers)

· Important macroeconomic indicators

· Important industry research and data tracking

3. Personal Experience Base

· Records of my investment decisions over the past 5 years

· Reviews of the accuracy of each judgment

A specific case: Market crash in early February 2026

In early February, the market suddenly crashed: gold and silver plummeted, cryptocurrencies sold off heavily, and US and Hong Kong equities dropped sharply.

The interpretations in the market mainly consisted of several points:

· Anthropic's legal AI is too powerful, software stocks crashed

· Google's capital expenditure guidance was too high

· The incoming Federal Reserve chairman, Warsh, is hawkish

My agent system issued a warning 48 hours before the crash because it had picked up:

· A sudden rise in Japanese bond yields and a significant narrowing of the US2Y-JP2Y yield spread

· A high TGA (Treasury General Account) balance, with the Treasury continuously draining liquidity from the market

· CME raising margin requirements for gold and silver futures six times in a row

All of these were clear signals of tightening liquidity. My knowledge base contained a complete recap of the market turbulence caused by the unwinding of yen carry trades in August 2022.

The agent system automatically matched historical patterns and provided a "tight liquidity + high valuation → reduce position" suggestion before the crash.

This warning helped me avoid at least a 30% drawdown.

This knowledge base holds over 500,000 structured data points and gets updated automatically with 200+ new entries daily. Maintaining this manually would require 2 full-time researchers.

The Second Layer: Skills (Decision Framework)

This layer is the easiest to overlook but the most critical.

Most people use AI in the following way: open ChatGPT → input a question → get an answer. The issue with this approach is that AI does not know your judgment criteria.

My approach is to break down my decision logic into independent skills. Taking investment decisions as an example:

Skill 1: US Stock Value Investment Framework

(The following skills are examples only. They do not represent my actual investment criteria, which I update continuously.)

markdown

Input: Company earnings report data

Judgment criteria:

- ROE > 15% (sustained for over 3 years)

- Debt ratio < 50%

- Free cash flow > 80% of net profit

- Moat assessment (brand/network effects/cost advantages)

Output: Investment rating (A/B/C/D) + Reason
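As a rough illustration, the Skill 1 criteria could be encoded as a function like the one below. This is a sketch under assumptions: the `Fundamentals` fields, the reading of "sustained for over 3 years" as "the last three reported years", and the mapping from the number of criteria passed to an A/B/C/D rating are all hypothetical, since the skill does not specify them.

```python
from dataclasses import dataclass

@dataclass
class Fundamentals:
    roe_by_year: list[float]   # annual ROE as fractions, oldest first (0.18 = 18%)
    debt_ratio: float          # total liabilities / total assets
    free_cash_flow: float
    net_profit: float
    has_moat: bool             # outcome of a separate, qualitative moat assessment

def rate_company(f: Fundamentals) -> tuple[str, list[str]]:
    """Return a rating and the list of criteria that passed."""
    checks = {
        "ROE > 15% over the last 3 years":
            len(f.roe_by_year) >= 3 and all(r > 0.15 for r in f.roe_by_year[-3:]),
        "Debt ratio < 50%": f.debt_ratio < 0.50,
        "Free cash flow > 80% of net profit":
            f.net_profit > 0 and f.free_cash_flow > 0.8 * f.net_profit,
        "Has a moat": f.has_moat,
    }
    passed = [name for name, ok in checks.items() if ok]
    # Hypothetical mapping: the skill does not say how criteria map to A/B/C/D.
    rating = {4: "A", 3: "B", 2: "C"}.get(len(passed), "D")
    return rating, passed

rating, reasons = rate_company(Fundamentals([0.18, 0.20, 0.22], 0.35, 90.0, 100.0, True))
print(rating, reasons)
```

Returning the passed criteria alongside the rating mirrors the "rating + reason" output format, so a reviewer can see why a company landed where it did.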

Skill 2: Bitcoin Bottom-Buying Model

markdown

Input: Bitcoin market data

Judgment criteria:

- K-line technical indicators: weekly RSI < 30 (oversold)

- Trading volume: Shrinking transaction volume post-panic sell-off (below the 30-day average)

- MVRV ratio: < 1.0 (market cap lower than realized market cap, holders at an overall loss)

- Social media sentiment: Twitter/Reddit panic index > 75

- Miner shutdown price: Current price close to or below mainstream miner shutdown price (e.g., S19 Pro cost line)

- Long-term holder behavior: LTH supply ratio rising (bottom-buying signal)

Trigger conditions:

- Meet 4 or more indicators → Partial build-up signal

- Meet 5 or more indicators → Heavy bottom-buying signal

Output: Bottom-buy rating (strong/medium/weak) + Suggested position ratio
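The trigger logic of Skill 2 is essentially "count how many indicators are met". A minimal sketch follows, assuming the RSI and MVRV criteria are read as "below 30" and "below 1.0", and simplifying "close to or below the shutdown price" to "at or below". The `BtcSnapshot` fields are hypothetical names, and the position-ratio output is left out because the skill does not define it.

```python
from dataclasses import dataclass

@dataclass
class BtcSnapshot:
    weekly_rsi: float
    volume: float
    volume_30d_avg: float
    mvrv: float
    panic_index: float            # social media panic index, 0-100
    price: float
    miner_shutdown_price: float
    lth_supply_ratio: float       # long-term holder supply ratio, current
    lth_supply_ratio_prev: float  # same ratio at the previous observation

def evaluate(s: BtcSnapshot) -> dict[str, bool]:
    """Evaluate each of the six indicators independently."""
    return {
        "weekly RSI oversold": s.weekly_rsi < 30,
        "volume below 30-day average": s.volume < s.volume_30d_avg,
        "MVRV below 1.0": s.mvrv < 1.0,
        "panic index above 75": s.panic_index > 75,
        # Simplification: "close to or below" is treated as "at or below".
        "price at or below miner shutdown price": s.price <= s.miner_shutdown_price,
        "long-term holder supply ratio rising": s.lth_supply_ratio > s.lth_supply_ratio_prev,
    }

def bottom_signal(indicators: dict[str, bool]) -> str:
    """Apply the trigger conditions: 4 met is partial, 5 or more is heavy."""
    met = sum(indicators.values())
    if met >= 5:
        return "heavy bottom-buying signal"
    if met >= 4:
        return "partial build-up signal"
    return "no signal"
```

Keeping `evaluate` separate from `bottom_signal` means an individual threshold can be changed without touching the trigger rule.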

Skill 3: US Stock Market Sentiment Monitoring

markdown

Monitoring indicators:

- NAAIM Exposure Index: Equity exposure of active investment managers

· Value > 80 and median reaching 100 → Warning that institutions have little room left to add positions

- Institutional stock allocation ratio: Data from large custodial institutions like State Street

· At historical extremes since 2007 → Contrarian warning signal

- Retail net buying amount: Daily retail fund flow tracked by JPMorgan

· Daily average buying amount > 85% of historical level → Overheated sentiment signal

- S&P 500 forward P/E ratio: Monitor whether approaching historical valuation peak

· Approaching 2000 or 2021 levels → Fundamentals deviate from stock prices

- Hedge fund leverage ratio: Crowded positions in a high-leverage environment

· Leverage ratio at historical highs → Potential volatility amplifier

Trigger conditions:

- More than 3 indicators simultaneously warning → Reduce position signal

- All 5 indicators warning → Major reduction or hedging

Output: Sentiment rating (extremely greedy/greedy/neutral/panic) + Position suggestion

Skill 4: Macroeconomic Liquidity Monitoring

markdown

Monitoring indicators:

- Net liquidity = Federal Reserve total assets - TGA - ON RRP

- SOFR (overnight financing rate)

- MOVE Index (US Treasury volatility)

- USDJPY + US2Y-JP2Y yield spread

Trigger conditions:

- Net liquidity single-week drop > 5% → Warning

- SOFR rises above 5.5% → Reduce position signal

- MOVE Index > 130 → Risk asset stop-loss
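The net liquidity formula and the three trigger conditions of Skill 4 translate fairly directly into code. The sketch below assumes all balance-sheet inputs are in the same unit (for example, billions of dollars) and that SOFR is given in percent. The function names and alert strings are illustrative, and the USDJPY and yield-spread indicators are omitted because no thresholds are given for them.

```python
def net_liquidity(fed_total_assets: float, tga: float, on_rrp: float) -> float:
    """Net liquidity = Federal Reserve total assets - TGA - ON RRP."""
    return fed_total_assets - tga - on_rrp

def liquidity_alerts(net_liq_now: float, net_liq_week_ago: float,
                     sofr_pct: float, move_index: float) -> list[str]:
    """Check the three trigger conditions and return any alerts raised."""
    alerts = []
    weekly_change = (net_liq_now - net_liq_week_ago) / net_liq_week_ago
    if weekly_change < -0.05:          # single-week drop of more than 5%
        alerts.append("warning: net liquidity fell more than 5% in one week")
    if sofr_pct > 5.5:
        alerts.append("reduce position: SOFR above 5.5%")
    if move_index > 130:
        alerts.append("stop-loss on risk assets: MOVE Index above 130")
    return alerts

# Hypothetical inputs, for illustration only.
now = net_liquidity(fed_total_assets=6600, tga=900, on_rrp=700)
week_ago = net_liquidity(fed_total_assets=6650, tga=800, on_rrp=350)
print(liquidity_alerts(now, week_ago, sofr_pct=5.3, move_index=135))
```

Each condition is checked independently, so several alerts can fire on the same day.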

The essence of these skills is: to make my judgment criteria explicit and structured, allowing AI to work according to my thought framework.

The Third Layer: CRON (Automated Execution)

This is the key to making the system run effectively.

I have set up the following automation tasks:

Now my mornings look like this:

7:50 AM, wake up and check my phone while brushing my teeth. The agent has already pushed the overnight global market summary:

· US stocks slightly rose last night, led by tech shares

· Bank of Japan maintained interest rates, yen depreciated slightly

· Oil prices rose 2% due to geopolitical tensions

· Today's focus: US CPI data, Nvidia earnings report

8:10 AM, having breakfast, I open my computer to see the detailed analysis. The agent has already generated the strategy for today:

· CPI is expected to come in line with market consensus, neutral impact on the market

· Key focus on AI chip order guidance in Nvidia earnings report

· Suggestion: Maintain tech stock positions, look for opportunities in the energy sector

8:30 AM, work begins, and I only need to make the final decision based on the agent's analysis: whether to adjust positions and how much.

The whole process takes 30 minutes.

I no longer need to frantically flip through the news every morning; AI has done the pre-reading for me.

More importantly, my investment decisions are no longer easily swayed by emotion. They follow a complete investment logic and clear judgment criteria, and they are reviewed, summarized, and iterated on based on actual performance. This is the right path for investing in the AI era, rather than continuing to hire a bunch of interns to work overtime updating Excel earnings forecast sheets, or betting with 50x leverage and waiting for a miracle.
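The CRON layer boils down to "run a given task at a given time". The sketch below shows one way to decide which tasks are due, using only the standard library. The job names and times are hypothetical, chosen so that the summary is ready before 7:50 AM and the strategy before 8:10 AM; the article does not list the actual schedule.

```python
from datetime import datetime, time

# Hypothetical schedule: the actual tasks and times are not given in the article.
JOBS = [
    (time(7, 30), "push overnight global market summary"),
    (time(8, 0), "generate today's strategy analysis"),
]

def due_jobs(last_run: datetime, now: datetime) -> list[str]:
    """Return jobs scheduled in the window (last_run, now], assuming both fall on the same day."""
    return [name for at, name in JOBS if last_run.time() < at <= now.time()]

# A runner that wakes up periodically would call due_jobs with its previous wake-up time.
print(due_jobs(datetime(2026, 2, 2, 7, 0), datetime(2026, 2, 2, 7, 45)))
```

In practice the same schedule could be expressed as crontab entries that start each task directly; the function only makes the timing logic explicit.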

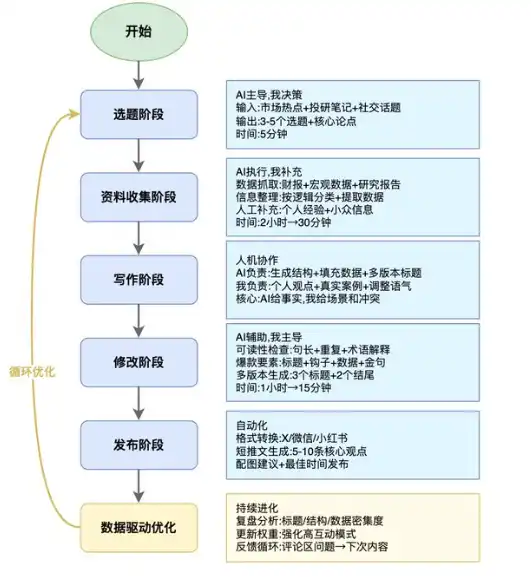

02 Agentization of Content Production: From Craft Workshop to Production Line

My second main business is content creation, currently mainly on Twitter, as well as exploring YouTube and other video formats.

Previously, my typical process for writing an article was:

· Find a topic (1 hour)

· Research (2 hours)

· Writing (3 hours)

· Editing (1 hour)

· Publishing + interaction (1 hour)

In total, 8 hours for one article, with quality being inconsistent.

I reflected on the main problems with my published articles, which included:

· Topics were too broad, lacking a focal point

· Content was too theoretical, lacking specific examples

· Titles were not engaging enough

· Publication timing was poorly chosen

Agentizing content production, however, is a systems engineering project!

Therefore, my agentization transformation in content involves three steps:

Step 1: Build a Hit Content Knowledge Base

I did something that many people overlook: systematically researching the patterns of hit articles.

Specific approach:

1. Scraped the top 200 hit articles in finance and technology on X from the past year

2. Used AI to analyze their commonalities: title structure, opening methods, argument logic, ending design

3. Extracted reusable "hit formulas"

Here are a few examples:

Title Formulas:

· Numerical impact type: "After my assets shrunk by 70%, I realized..."

· Counterintuitive type: "The internet is dead, agents live forever"

· Value promise type: "Save you... No need to buy on second-hand platforms"

Opening Formulas:

· Specific event entry: "In January 2025, I made a decision..."

· Extreme contrast: "If you continue at the current pace... but 6 months later..."

· Deconstruct and reconstruct: "The interpretations in the market mainly consist of several points... I think they are all incorrect."

Argument Structure:

· Viewpoint → Data support → Case validation → Counter-argument

· Clear layering with 1/2/3 numbering

· Professional terms + layman explanations

I have organized these patterns into a "hit content framework library" to feed to AI.

Step 2: Human-AI Collaborative Content Production Line

Now my content production process has become an efficient human-AI collaborative production line, with clear roles in each stage.

Topic Selection Stage (AI-led, I make decisions)

Every Monday morning, my agent automatically pushes 3-5 topic suggestions.

Input sources:

· This week’s global market hotspot events (automatically captured)

· My investment research notes and latest thoughts

· High-frequency discussion topics on social media

· Frequently asked questions in the readers' comment sections

AI output format:

markdown

Topic 1: The liquidity logic behind Bitcoin surpassing 100,000 dollars

Core argument: It is not driven by demand, but is the result of dollar liquidity expansion

Potential viral hook: Data-dense + counterintuitive viewpoint

Estimated engagement rate: High

Topic 2: Why AI companies are losing money, but stock prices are still rising

Core argument: The market is pricing future cash flows, not current profits

Potential viral hook: Resolving public confusion

Estimated engagement rate: Medium to high

Topic 3: Retail sentiment indicators at all-time highs, is it time to sell the top?

Core argument: Sentiment indicators need to be assessed in conjunction with the liquidity environment

Potential viral hook: Practical tools + methodologies

Estimated engagement rate: Medium

I choose the topic that best matches current market sentiment and where I have unique insights.

Data Collection Stage (AI executes, I supplement)

After selecting a topic, the agent automatically initiates the data collection process:

1. Data scraping (automation)

Latest earnings data from relevant companies

Historical trends of macroeconomic indicators

Core points from industry research reports

Representative viewpoints on social media

2. Information organization (AI processes)

Classifying scattered information according to argument logic

Extracting key data and citation sources

Generating a preliminary argument framework

3. Manual supplementation (my value)

Adding my personal experiences and cases

Supplementing niche information sources that the agent cannot find

Highlighting which viewpoints need to be emphasized in arguments

This stage has been shortened from 2 hours to 30 minutes.

Writing Stage (Human-AI collaboration)

This is the most critical stage, where the division of labor between me and AI is very clear:

AI is responsible for:

· Generating article structure based on hit frameworks

· Filling in data and factual content

· Generating multiple title and opening versions for selection

· Ensuring the completeness of argument logic

I am responsible for:

· Injecting personal viewpoints and value judgments

· Including real cases and details

· Adjusting tone and expression

· Deleting AI-generated "correct but unnecessary" content

Editing Stage (AI-assisted, I lead)

After the first draft is completed, I let the agent handle several tasks:

1. Readability check

Are sentences too long (sentences over 30 words are highlighted in red)

Are there any repetitive expressions

Do professional terms need explanations

2. Hit factors check

Does the title conform to high interaction rate models

Do the first 3 paragraphs have hooks

Is there specific data support

Are there quotable one-liners

3. Multi-version generation

Generate 3 different styles of titles

Generate 2 different perspectives for conclusions

I choose the most suitable version

This stage has been shortened from 1 hour to 15 minutes.
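Two of the readability checks above, flagging sentences over 30 words and spotting repeated expressions, are mechanical enough to sketch in a few lines. The version below assumes English text with whitespace-separated words and treats "repetitive expressions" as repeated three-word phrases; both are simplifications, and the function names are illustrative.

```python
import re
from collections import Counter

MAX_WORDS = 30  # threshold mentioned in the article

def long_sentences(text: str, max_words: int = MAX_WORDS) -> list[str]:
    """Return sentences longer than max_words, splitting on ., ! and ?."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if len(s.split()) > max_words]

def repeated_phrases(text: str, n: int = 3, min_count: int = 2) -> list[str]:
    """Return n-word phrases that occur at least min_count times."""
    words = re.findall(r"[a-z']+", text.lower())
    ngrams = Counter(" ".join(words[i:i + n]) for i in range(len(words) - n + 1))
    return [phrase for phrase, count in ngrams.items() if count >= min_count]

draft = "The market is falling and the market is rising."
print(long_sentences(draft))
print(repeated_phrases(draft))
```

Checks like "do professional terms need explanations" or "is there a hook" need judgment rather than counting, which is where the language model comes in.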

Publishing Stage (automation)

After finalizing the article, the agent automatically executes:

· Converting to formats suitable for various platforms (X/WeChat official account/Xiaohongshu)

· Generating image suggestions (confirmed by me before generation)

· Automatically publishing at optimal times (based on historical data analysis)
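The article does not say how the "optimal time" is derived from historical data. One simple assumption is to pick the hour of day with the highest average engagement across past posts, as in the sketch below; the `(hour, engagement_rate)` input format and the sample numbers are hypothetical.

```python
from collections import defaultdict
from statistics import mean

def best_posting_hour(history: list[tuple[int, float]]) -> int:
    """history holds (hour_of_day, engagement_rate) pairs for past posts.

    Returns the hour with the highest average engagement rate.
    """
    by_hour: dict[int, list[float]] = defaultdict(list)
    for hour, rate in history:
        by_hour[hour].append(rate)
    return max(by_hour, key=lambda h: mean(by_hour[h]))

# Hypothetical engagement history, for illustration only.
history = [(8, 0.02), (8, 0.04), (12, 0.01), (20, 0.05), (20, 0.07)]
print(best_posting_hour(history))
```

With only a handful of posts per hour the averages are noisy, so a real system would probably want a minimum sample size before trusting any hour.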

Step 3: Data-Driven Continuous Optimization

Key insight: A content agent is not a one-time setup, but a continuously evolving system.

I run a weekly review:

· Which aspects did the agent perform well in?

· Which aspects still require human intervention?

· How to adjust skills to make the agent conform more closely to my standards?

Step 4: Commercialization (to be completed this year)

Once your agent system is running stably, consider:

· Is this method valuable to peers?

· If so, how much are they willing to pay?

· Can you package it as a product?

If the answer is yes, congratulations, you have found a new business model.
