Imagine this scenario.

You owe three months of utility bills, you went to the hospital last month, and you dutifully file your taxes every year. These things seem unrelated.

But inside a certain AI system, these three pieces of information are pulled together to generate a red dot on a map. That dot marks your location, and a law enforcement order aimed at you.

This is not science fiction. In early 2026, U.S. Immigration and Customs Enforcement (ICE) launched a large-scale roundup operation in Minneapolis using exactly this logic. The AI system involved comes from a data company called Palantir, which folds immigrants' medical records, utility bills, and tax information into its algorithms and coldly flags each target for arrest.

AI is being weaponized. This is not the future; it is happening now.

The question is no longer whether it will happen, but who has the right to decide what it can and cannot do.

On February 27, 2026, this question received a troubling answer.

On that day, Anthropic, the developer of the conversational AI product Claude and a direct competitor to ChatGPT, was officially barred from use by the U.S. federal government. The reason: it refused to allow its AI to be used for surveillance of U.S. citizens or to autonomously decide on kill targets.

It was labeled a "national security risk" for saying its AI "cannot be used this way."

What a Company Preparing for an IPO Needs Most

Before asking why Anthropic did not compromise, we need to understand where it stands today.

Anthropic is one of the most highly valued AI companies in the world: it is valued at $380 billion, with projected annual revenue of $14 billion in 2025. Its largest investor is Amazon. Its AI model, Claude, is one of the fastest-growing AI products in enterprise procurement.

By any standard, this is a company ready to go public. In fact, Anthropic is preparing for an IPO this year, which would let ordinary investors buy its stock.

What does a company preparing to go public need most? The answer is obvious: stability, predictability, and no regulatory trouble. Any negative incident could shake investor confidence and drag down the IPO price.

Instead, events moved in exactly the opposite direction.

In July 2025, the Pentagon signed contracts, each worth up to $200 million, with Anthropic, OpenAI (the developer of ChatGPT), Google, and Elon Musk's xAI, aiming to integrate the most advanced AI into the U.S. military. This was the largest government procurement in AI history.

Notably, Anthropic's contract contained a detail that the other three did not. It set out two explicit usage restrictions: Claude cannot be used for mass surveillance of U.S. citizens, and it cannot be used in autonomous weapon systems that operate without human involvement.

OpenAI, Google, and xAI all agreed that the military could use their AI for all lawful purposes, with no additional restrictions. Only Anthropic drew two red lines in its contract.

These two red lines became the source of all the trouble that followed.

Ultimatum

In February 2026, the Pentagon decided to put pressure on Anthropic.

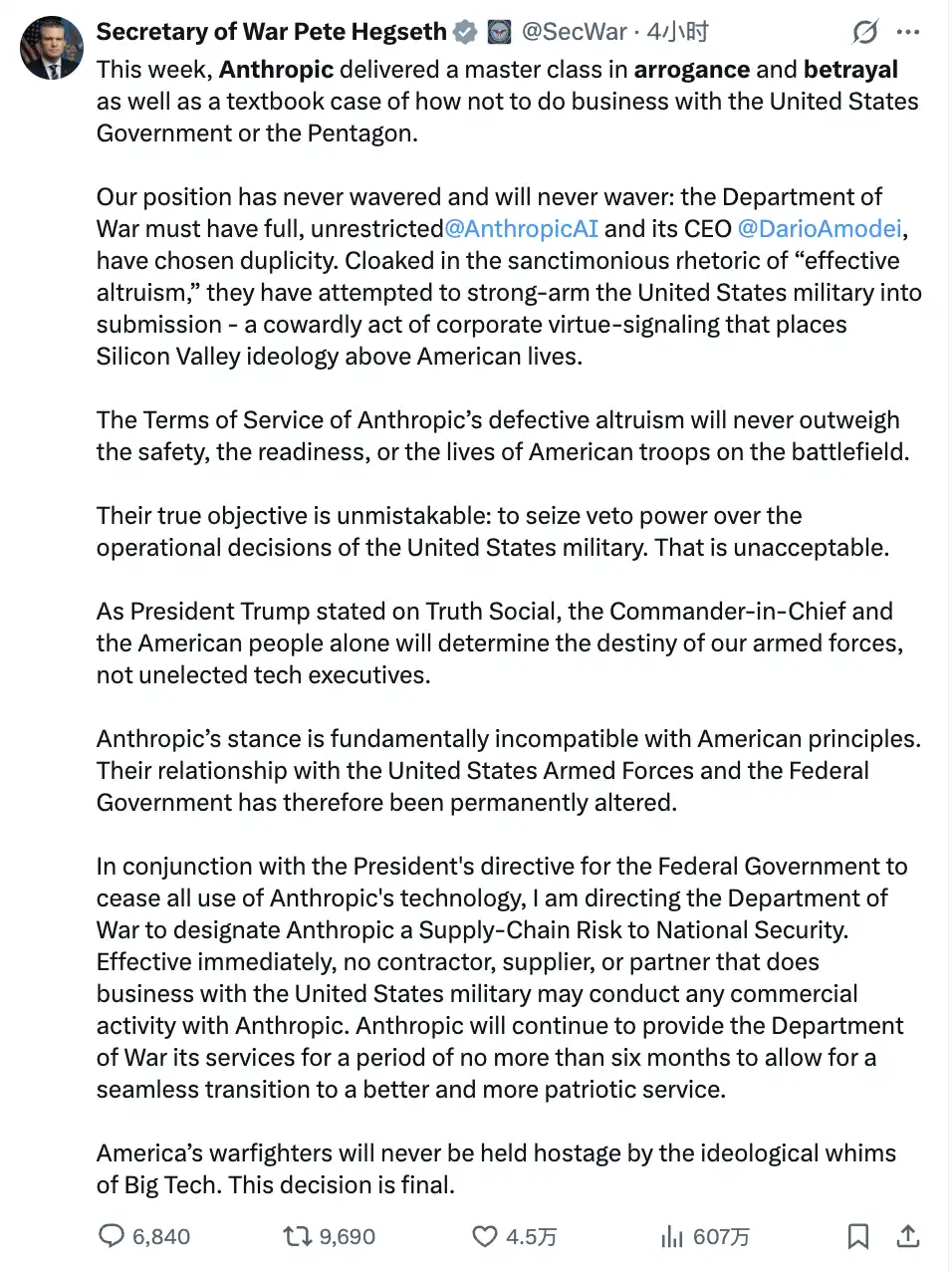

Defense Secretary Pete Hegseth issued an ultimatum to Anthropic’s CEO Dario Amodei: remove the usage restrictions in the contract and allow Claude to be used for all lawful purposes. Otherwise, the Pentagon would cancel the contract and classify Anthropic as a "supply chain risk." The deadline was set for the afternoon of February 27, local time.

Dario's response was: No.

In a public statement, he wrote: "We understand that military decisions are made by the government, not private companies. But in rare cases, we believe that AI might undermine rather than defend democratic values. We cannot in good conscience accept their demands."

The Pentagon's technology chief, Emil Michael, immediately took to social media, calling Dario a fraud who "had a god complex" and was "trying to control the U.S. military, putting national security at risk."

Then Trump intervened personally. On his social platform, Truth Social, he wrote that Anthropic was a "radical left woke company" that had "made a catastrophic mistake," and he ordered all federal agencies to immediately stop using Anthropic's products and to complete the transition within six months.

The Pentagon then formally designated Anthropic a "supply chain risk."

This label had previously been reserved for companies with ties to foreign adversaries such as China; it had been applied to Huawei and Semiconductor Manufacturing International Corporation (SMIC). Now it was applied to an American AI company, headquartered in San Francisco and valued at $380 billion.

The $200 Million Is Trivial; the Real Trouble Lies Here

Many people's first reaction is that losing a $200 million government contract is small change for Anthropic, a company with $14 billion in annual revenue. The contract amounts to roughly 1.4% of that figure.

The arithmetic is right, but it misreads the situation.

The real threat comes from the "supply chain risk" label itself. It means that any company that works with the U.S. military must prove that no part of its business involves Anthropic. This is not soft moral pressure but a hard compliance requirement.

Follow that logic, and the potential fallout looks far less contained.

Amazon Web Services (AWS) is Claude's main operating infrastructure and also the largest cloud provider to the U.S. government, and the two relationships are deeply intertwined. The data analytics company Palantir has integrated Claude into the services it provides to the U.S. military and intelligence agencies. The defense technology company Anduril uses Claude for data processing in Pentagon-related projects.

These clients now face a choice: keep using Claude and absorb the pressure of working with a "supply chain risk" company, or replace Claude and rip out a tool deeply embedded in their workflows.

Either way, Anthropic loses. This is the real risk it has to manage, and it matters far more than the $200 million contract.

And all of this trouble arrived just before the IPO.

So Why Did They Still Refuse?

This is where the question gets interesting.

A company racing toward an IPO, at precisely the moment it can least afford trouble, chose to stand firm against government pressure. Is this a business misjudgment or a calculated gamble?

Place the two options side by side, and the logic becomes clearer.

If it compromises, it keeps the contract, preserves its government relationships, and the immediate trouble disappears. But the core brand narrative Anthropic has built over the past three years, "the most responsible AI company" and "safety first," would crack at that very moment.

The greater risk lies further out. If Claude were actually used for mass surveillance or autonomous weapons decisions and something went wrong, the company would lose not just a contract but the entire logic behind its valuation. The premium investors paid for the "responsible AI" story would evaporate overnight.

If it refuses, it loses $200 million, triggers the supply chain risk label, and is effectively shut out of the government market. But its "safety first" narrative receives the most expensive public endorsement ever. No public relations budget could buy this effect: being banned by the government for refusing to let AI monitor its own citizens.

One line in Dario's statement deserves attention: "Our valuation and revenue will only increase after this stance."

Is this a public relations line or a business judgment? Probably both, and the two are not in conflict.

There is a useful counterexample. Palantir, the data company mentioned earlier that helped ICE track targets with AI, took the opposite route. It cooperated closely with every government need, accepting every request for immigration tracking, intelligence analysis, and military targeting. As a result, for nearly twenty years it was shunned by mainstream investors who prized social responsibility; it often struggled to raise money and was turned away by large funds.

Then in 2025, as the AI-military narrative became the market's dominant story, its stock price rose about 150% over the year, and its market value surpassed $400 billion.

Two paths, two fates, each with its own logic. Palantir traded twenty years of patience for today's boom. Anthropic is betting on a different valuation logic: as competition among AI companies grows increasingly homogeneous, "the safest AI" is not just a moral stance but a real business moat.

Which gamble is bigger remains to be seen. But Anthropic clearly believes that the cost of compromising is higher than the cost of refusing.

Can Dario's Bet Win?

The answer depends on several variables that have not yet played out.

First, how enterprise clients actually respond. Will Amazon, Palantir, and Anduril proactively drop Claude because of the "supply chain risk" label, or will they wait and see how the situation unfolds? So far, none of these companies has made a public statement. If they wait, Anthropic's actual loss may amount to little more than the $200 million government contract.

Second, how hard it will actually be for the government to replace Claude. Pentagon departments that use Claude acknowledge that it is currently among the most capable AI models available, and that Musk's Grok still trails it technically. Replacing Claude means a downgrade. Who will bear that cost?

Finally, what unexpected chain reactions the episode may trigger. On the same day Anthropic was banned, OpenAI CEO Sam Altman stated publicly that OpenAI and Anthropic "share the same red lines" and hold consistent positions on the limits of military AI use.

In other words, if the government wants an alternative willing to comply fully, with no restrictions, it has far fewer options than one might think.

The weaponization of AI will not slow down because of this incident. Musk's Grok has already entered the Pentagon's classified networks, other AI companies have no shortage of military contracts, and AI-assisted weapon systems keep iterating.

Anthropic's choice cannot change that direction. All it has changed is where Anthropic stands in relation to it.

But one thing is certain: this is the first time in the history of the AI industry that a mainstream company has publicly resisted government pressure on clearly stated principles, even at the cost of being banned. However this bet turns out, the precedent has been set.

That leaves the reader with one final arithmetic problem: a company valued at $380 billion gave up the entire government market for two principles written into a contract, and was then labeled a national security risk.

As the weaponization of AI accelerates, how that account is settled depends on what you believe will be most valuable in the future.

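Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is provided for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author is unrelated to this platform. If any article or image published on this page infringes your rights, please send proof of rights and proof of identity by email to support@aicoin.com, and the platform's staff will review it.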