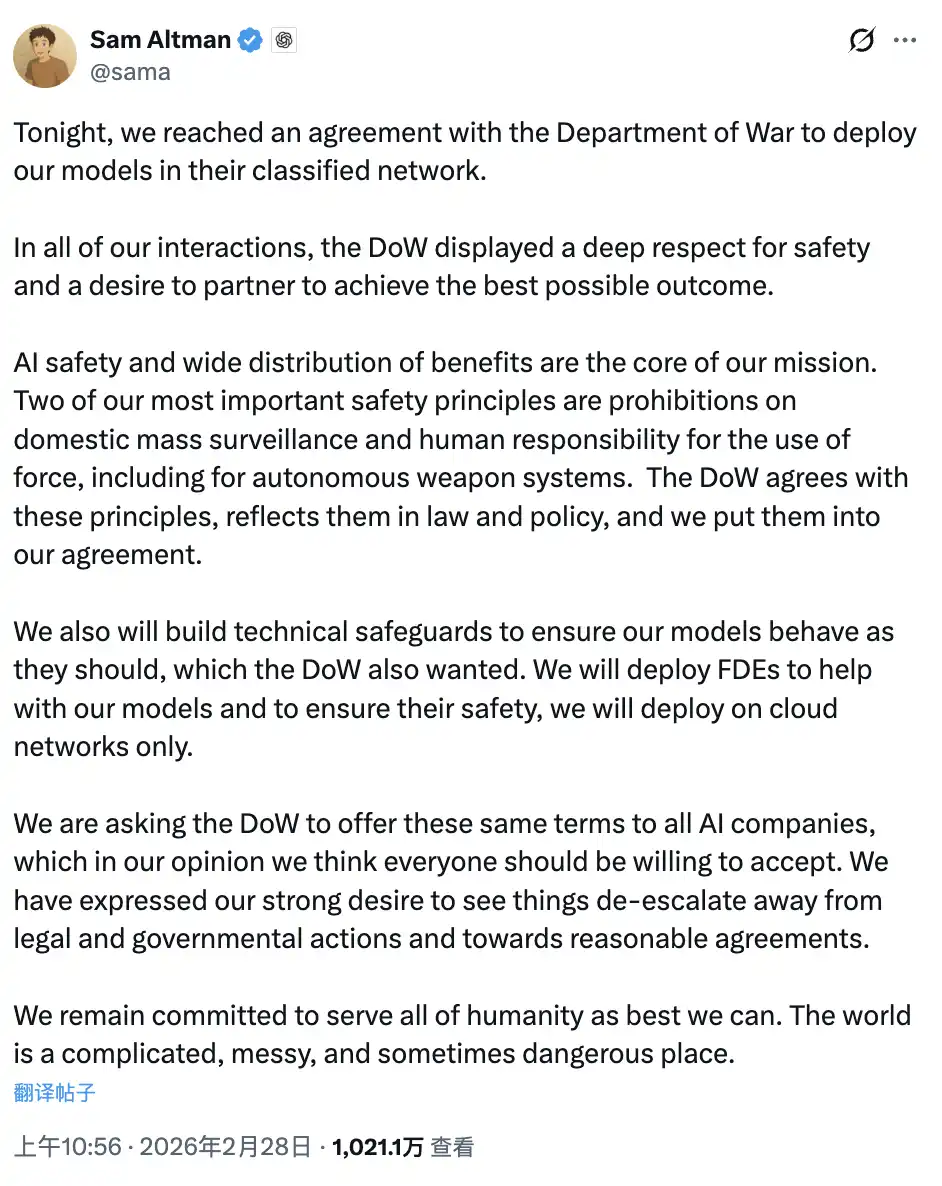

On February 28, Beijing time, Sam Altman tweeted: "Tonight, we reached an agreement with the US Army to deploy our models into their secure network."

Rewind about twelve hours, to the evening of February 27, Beijing time. The same man appeared on CNBC's Squawk Box and calmly stated: "For Anthropic, despite our many differences, I basically trust that company. I think they really care about safety." He also said: "I don't think the Pentagon should threaten these companies using the Defense Production Act."

In less than twelve hours, the same person made two very different statements. What happened in between deserves a closer look.

Two Similar Terms, Two Different Outcomes

Let’s line up the core contents of the two contracts side by side.

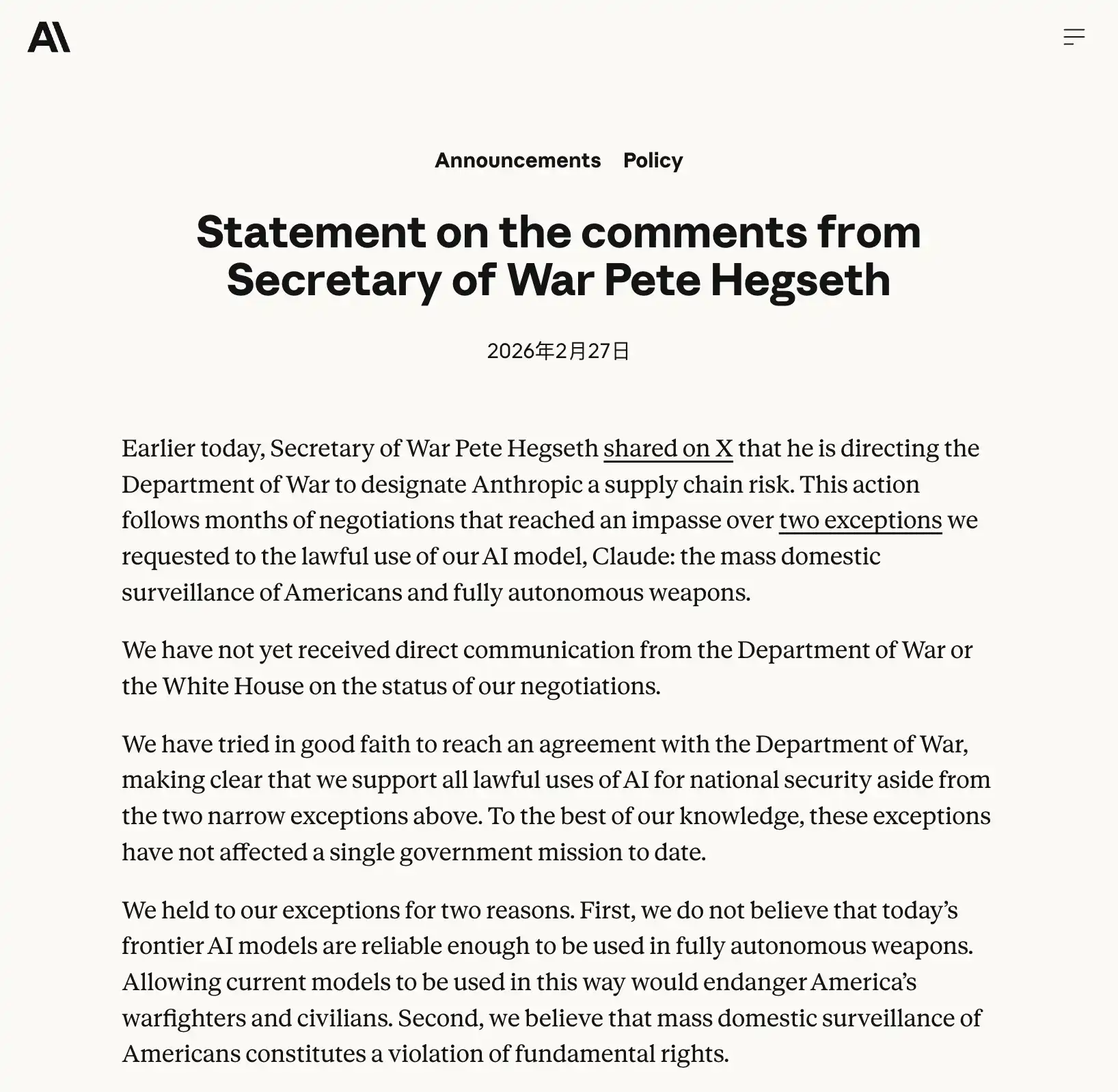

Anthropic's requirements: Claude must not be used for mass surveillance of American citizens, nor for autonomous weapon systems without human intervention.

When Altman announced the agreement in the tweet, he cited the same two principles: "We have long believed that AI should not be used for mass surveillance or autonomous lethal weapons, and humans should always be present in high-risk automated decision-making." He also wrote: "The Pentagon agrees with these principles and will reflect them in law and policy; we have written them into the contract."

The wording is almost identical.

One company was banned, labeled a "supply chain risk," and personally denounced by Trump on Truth Social as a "radical leftist woke company." The other secured a contract and entered the Pentagon's secure network, and Sam Altman described it in his tweet as having "reached an agreement": calm and businesslike, as if a routine B2B transaction had just closed.

This is the question the entire article seeks to answer: Why do similar terms lead to completely different outcomes?

The answer does not lie in the terms themselves, but in the logic behind them.

One background fact needs to be clarified first: of the four companies involved (the other three being OpenAI, Google, and xAI), Anthropic is the only one that had been allowed onto the Pentagon's secure network. OpenAI's original contract covered only unclassified, everyday office scenarios. This negotiation was essentially about OpenAI seeking access to the secure network, with the Pentagon setting the entry condition: the controversial "for all lawful purposes" clause. Anthropic was already inside, but the Pentagon was demanding that it dismantle the safeguards it had put in place when it entered.

The Pentagon Cares Less About What Is Written and More About Who Calls the Shots

To understand this matter, one needs to grasp what Anthropic's Dario Amodei really said in that open letter.

He wrote: "Anthropic understands that military decisions are made by the Pentagon, not private companies. We have never objected to specific military actions, nor have we attempted to restrict the use of our technology on an ad hoc basis."

Then he shifted his tone: "But in rare cases, we believe AI can undermine rather than uphold democratic values. Threats will not change our position: we act with a clear conscience, and we cannot accept their demands."

What does this mean in contractual language? Anthropic demands that the principles be written into the contract terms as a hard constraint. If the Pentagon violates them, Anthropic has the right to refuse to continue service.

What did the Pentagon hear? A private company telling the government's military: In certain cases, I can choose not to execute your orders, and I will define the boundaries.

This is unacceptable to any military. Not because it genuinely wants mass surveillance, but because "who has the decision-making power" is the most sensitive nerve in the military command system. Defense procurement expert Jerry McGinn put it clearly: military contractors typically do not have the authority to dictate to the Pentagon how their products can or cannot be used, "otherwise every contract would require discussing specific use cases, which is unrealistic."

OpenAI gave a completely different response.

Altman told employees in a memo that OpenAI would propose building a "safety stack" for the Pentagon: a multilayered protection system composed of technical controls, policy frameworks, and human review, sitting between the AI models and their actual use. OpenAI also said it could send security-cleared researchers into the secure network to continuously monitor AI behavior, and that the models would be deployed only in the cloud and would not enter edge systems such as drones.

In plain terms: come and look, come and supervise, witness everything that happens. If something goes wrong, we share the responsibility together; you do not owe me an explanation.

"Rules written in stone, I execute," versus "I embed myself in, you supervise," are two completely different power dynamics, and the Pentagon only accepts the latter.

What OpenAI Excels At is Exactly What the Pentagon Wants Most

There is an uncomfortable irony that needs clarification.

OpenAI's promises of "technical transparency" and "continuous monitoring" towards the Pentagon have already been practiced on its own users.

In August 2025, OpenAI disclosed a new monitoring mechanism in an official blog post about user mental health crises: when the system detects that a user is "planning to harm others," the conversation is routed to a dedicated channel handled by a trained team of human reviewers, who are authorized to report the situation to law enforcement. OpenAI published this voluntarily, but it was buried in a mid-length article about mental health and drew little reaction.

In February 2026, just before this contract was signed, OpenAI launched an advertising system and updated its privacy policy, clarifying one thing: free and basic paid users would have their conversations analyzed for context, displaying related ads based on conversation topics. For example, if you are discussing recipes, you might see ads for meal delivery services. OpenAI emphasized that the conversation content itself would not be shared with advertisers, but the analysis would occur in real time. Ads began testing on February 9.

In November 2025, OpenAI's third-party analytics provider Mixpanel experienced a breach, leaking certain API users' names, emails, approximate geographic locations, operating systems, and browser information. OpenAI subsequently ended its partnership with Mixpanel, and litigation has been initiated. This incident primarily affected API developers, while ordinary ChatGPT users were impacted only if they had submitted help desk tickets through the platform.

This is the company that promised the Pentagon "technical transparency, continuous monitoring, and letting you see everything happening."

What it excels at is precisely allowing others to look in, because it has become accustomed to treating its own users this way.

Anthropic believes rules can constrain the user, while OpenAI believes embedding their people is more effective than any terms. The former is an idealistic compliance logic, while the latter is a realistic influence logic. The Pentagon chose the latter because it is more familiar and controllable for them.

What Happened in Those In-Between Hours?

Go back to 5:01 PM Eastern Time on February 27, which is early morning on February 28 in Beijing.

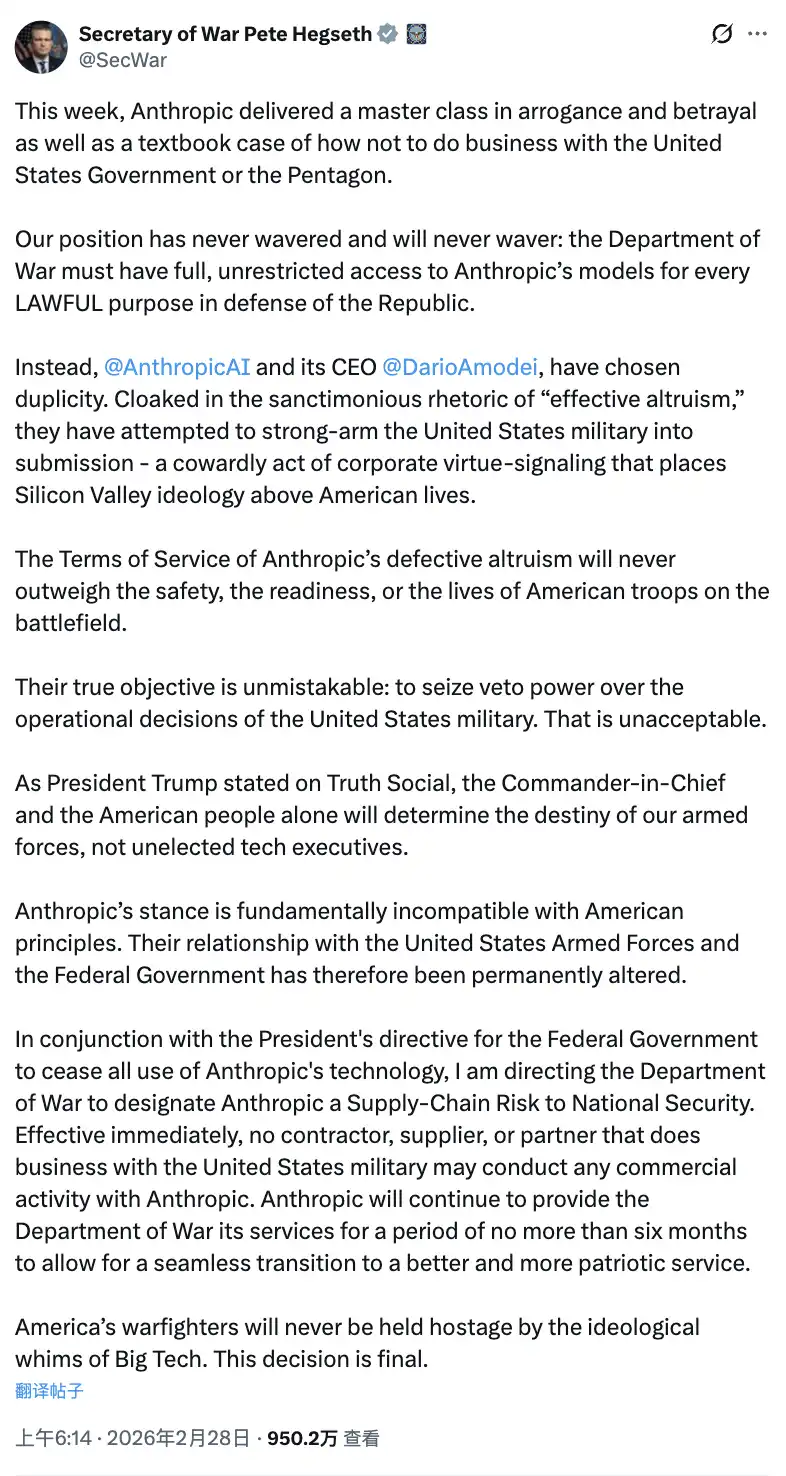

The deadline of the ultimatum issued to Anthropic arrived. Dario did not compromise. Trump announced the ban on Truth Social, Hegseth posted on X labeling Anthropic a "supply chain risk," and Anthropic announced it would respond through legal means.

There is a noteworthy line in Hegseth's statement: "Anthropic's position is fundamentally incompatible with American principles." Yet in the same statement he noted that Anthropic could continue to provide services to the Pentagon "for no more than six months for a smooth transition." In other words, the Pentagon designated a company a national security risk while continuing to use its products. This contradiction was never directly explained.

A few hours later, Altman tweeted.

Looking back at his earlier statements during an all-hands meeting that day: he said he hoped OpenAI could "help cool the situation" and find a solution that could "set a framework for the entire industry." This was not the tone of a person passively waiting.

This is not the first time Silicon Valley has witnessed such maneuvers.

In 2023, OpenAI's nonprofit board fired Altman on the grounds of "not being candid enough," claiming he was moving too quickly and there were internal communication issues. Five days later, Altman returned backed by a letter of collective support from employees, and the board dissolved. Subsequently, he led the company's restructuring from a nonprofit to a for-profit entity, and the previously constraining nonprofit mission was wrapped into a new legal framework.

This time, it was called "finding a common framework."

What Did Dario Lose?

Jerry McGinn, director of the Center for Strategic and International Studies' Industrial Base Program, gave a calm assessment of the situation: "This is excellent public relations for Anthropic, and they do not even need that $200 million."

This judgment is financially accurate. Anthropic is projected to have $14 billion in total revenue in 2025, with a valuation of $380 billion. Amazon, its largest shareholder, is unlikely to reconsider its investment logic because of a government ban. Legal avenues may offer a turnaround. The IPO valuation is unlikely to suffer substantial harm; the narrative of "refusing government pressure while upholding safety principles" is probably something no public relations budget could buy.

However, there is one thing that Dario lost.

The actual usage standards for AI in the military domain will be set by OpenAI within the Pentagon, not by Anthropic. The safety stack embedded in the secure network, the security-cleared OpenAI researchers, the monitoring system continuously tracking AI behavior: these will evolve into de facto industry standards in the coming years.

Anthropic preserved its position by adhering to principles, but lost its seat at the table of rule-making.

And the one who took that seat is the same person who completed the transformation from "support" to "signing" in less than twelve hours.

The most ironic part is that the company taking AI safety most seriously was expelled from the place where AI safety needed the most serious attention.

The company that replaced it had, just weeks earlier, launched a mechanism that feeds user conversation topics into its advertising system; three months earlier, it had suffered a third-party data breach; and before that, it had quietly disclosed a system that could report users to law enforcement.

In Silicon Valley, what Altman pulled off in less than twelve hours has a name. It's not called backstabbing; it's called timing.
