Original Title: "AI Usage Guide for Humanities Workers"

Original Author: Han Yang MASTERPA, Founder of Funes

Humanities workers do not create the changes in the world, but they have to endure them.

Sometimes I feel that people selling AI tutorials treat AI as a kind of magic: learn one magical prompt and you can do anything. Reality is nothing like that. For a while, because we were setting up FUNES, we had to produce a large volume of content with AI every day. Add "Ephemeral World", my own writing, and other content production, and human effort alone was no longer enough. So we explored extensively how to use AI to assist our content market and humanities research work.

Later, when new colleagues joined the company, I made a simple Keynote presentation. Upon hearing about it, Master Jia Xingjiajia invited me to present it. My partner Keda and I named the presentation "AI Usage Guide for Humanities Workers." At that time it was a purely informal talk, focusing mainly on some general principles. We later gave it a few more times, gradually expanding it.

Over the past year or so, I have shared this set of experiences with many friends who work in content creation, research, and knowledge products. The goal is not to teach you to memorize a few magical prompts, nor to treat AI as a panacea. It is closer to a set of working methods: a way to truly integrate large models into your writing, research, editing, topic selection, data organization, and production processes without writing code, while keeping the work traceable, supervisable, and verifiable, so that you are still willing to sign your name to it.

This method comes from the pitfalls we ran into in real projects: once content enters large-scale production, relying on human effort alone breaks down, while letting AI write directly leads to hallucination, corner-cutting, or prose that reads like AI. So we had to turn creation into a production line, and the production line into an iterative system.

Today, I do not want to give you various prompts directly; I hope to provide you with some key guiding thoughts and principles.

Before the Principles: Three Bottom Lines of This Guide

Before specific methods, it is important to clarify three bottom lines. They determine "how to use AI" and also define "why you should use it this way."

1. The process must be traceable, supervisable, and verifiable

You cannot just want a result without wanting the process. For humanities work, a black box is the most dangerous: hallucinations, misdirection, and conceptual substitution can silently happen in a black box.

2. The process must be controllable

You must be able to control how it works, what standards it works to, where it slows down, and where it is stricter. You are not "drawing cards"; you are running production.

3. You are still willing to sign your name in the end

"Am I willing to put my name on it?" is the final quality check. If you are not willing to sign, it is usually not an ethical issue but a sign that your intent was never carried through the process, which means the quality is out of your control.

Principle 0: Don’t Wish on AI, Treat It as a Workbench

Many people use AI in a way that essentially consists of wishing:

"Give me a good joke," "Help me write a good article," "Explain this paper."

The problem is that "explanation" itself has countless interpretations: explaining to outsiders, undergraduates, graduate students, or peers are entirely different tasks. AI cannot automatically know your background, purpose, taste, and standards. If you do not spell them out, it can only give the least-effort answer, aimed at a default "average human."

Thinking of the large model as a workbench means: you do not ask it for results, but instead mobilize its tools to complete a process. What you need to do is clarify the task, clarify the standards, and arrange the steps.

For example, ask AI to explain a paper

You can change a wishful request (give me an explanation of this paper) into a workbench-style task like this:

· Clearly define the target audience: smart, curious graduate students who are not experts in the field

· Clearly define the explanation method: heuristic, step-by-step, academically rigorous

· Clearly define structural requirements: first explain the significance, then add background, then reconstruct the research process, then discuss key technical points, and finally offer insights

· Clearly define the tone: respectful of intellect, not condescending, not pretending the other party has a deep foundation

You will find: the more your request resembles "assignment requirements," the less the AI behaves like an AI and the more it behaves like a competent teaching assistant.
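As a minimal sketch, the four requirements above can be collected into a reusable brief. The function `build_brief` and its parameter names are illustrative assumptions rather than anything from the original talk; the result is plain text that could be pasted into any chat model.

```python
def build_brief(task, audience, method, structure, tone):
    """Assemble a workbench-style brief from explicit requirements.

    All parameter names are illustrative; nothing here calls a model.
    """
    steps = "\n".join(f"{i}. {step}" for i, step in enumerate(structure, start=1))
    return (
        f"Task: {task}\n"
        f"Audience: {audience}\n"
        f"Method: {method}\n"
        f"Tone: {tone}\n"
        f"Structure (follow in order):\n{steps}"
    )


brief = build_brief(
    task="Explain the attached paper",
    audience="smart, curious graduate students who are not experts in the field",
    method="heuristic, step-by-step, academically rigorous",
    structure=[
        "Explain why the paper matters",
        "Add the necessary background",
        "Reconstruct the research process",
        "Discuss the key technical points",
        "Offer insights",
    ],
    tone="respectful of the reader's intellect, not condescending, assumes no deep background",
)
print(brief)
```

The point of writing it as a function is only that each requirement becomes a slot you must fill; leaving one empty is then a visible omission rather than a silent default.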

Principle 1: To Get AI to Do Well, Reflect on Yourself First—You Are in Charge

If you hire a secretary, you wouldn’t just say:

"Fix that article by Han Yang on America's Rust Belt."

You would definitely add:

Why this article is being written, who it’s for, what the current bottlenecks are, what problem you hope it solves, what parts cannot be changed, what style you want, and what metrics matter to you.

AI is the same. You need to treat it like a very diligent, very polite colleague who does not know the implicit assumptions in your head. Real "prompt engineering" is not a technique but a sense of responsibility: every task is still yours, and AI is only helping you out.

When you are not satisfied with AI's output, the most effective first reaction is not "AI is no good," but rather:

· Did I articulate "audience/target/purpose" clearly?

· Did I provide enough background materials and constraints?

· Did I break down "abstract desires" into "executable actions"?

· Did I provide a standard to judge right or wrong?

Principle 2: Ask at Least 3 Models About the Same Question—Every AI Has "Personality" and Areas of Expertise

In our company, I ask any colleague who is new to large models to put every question to three different AIs during their early use. AIs differ the way people do: some are better at wording, some at logical problem solving, some at coding or tool invocation. A more practical point: even within a single product, each new version of a model keeps adjusting its "style" and "boundaries."

So a very simple but extremely effective habit is: for the same question, throw it to at least 3 different AIs, and you will quickly get a "sense":

· Which is better at writing, which better at thinking, which better at researching, which is more likely to slack off

· Which model suits which task as the "first drafter," and which works better as a "reviewer"

· Which is better at "topic/structure," and which is better at "paragraph/sentence"

The value of this step is not to "select the strongest model," but to start managing models the way you manage a team, rather than treating any one of them as the sole oracle.
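The habit can be sketched as a small fan-out helper. This is an illustration under assumptions: `ask_all` is a hypothetical name, and the three "models" are stand-in functions so that the example runs offline; in practice each would be whatever chat product or API you actually use.

```python
def ask_all(question, models):
    """Send one question to every model and collect the answers by name.

    `models` maps a label to any callable that takes a prompt string and
    returns a string. The callables are placeholders: wire them to whatever
    chat products or APIs you actually use.
    """
    return {name: ask(question) for name, ask in models.items()}


# Stand-in callables so the sketch runs without any network access.
models = {
    "model_a": lambda prompt: f"[A, first draft] {prompt}",
    "model_b": lambda prompt: f"[B, reviewer] {prompt}",
    "model_c": lambda prompt: f"[C, researcher] {prompt}",
}

answers = ask_all("Suggest three angles on America's Rust Belt.", models)
for name, answer in answers.items():
    print(f"--- {name} ---\n{answer}\n")
```

Reading the answers side by side is what builds the "sense" described above; the code only removes the copy-and-paste friction.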

Principle 3: AI Is Not All-Knowing—Treat It as Having the Common Sense Level of a "Good Undergraduate"

A very practical way to manage expectations:

AI's level of common sense ≈ that of an undergraduate at a "985" university (China's top-tier Project 985 schools).

If you feel that "even an excellent undergraduate might not know," then you should assume that AI doesn't know either; at least assume that it will "make it sound like it knows" when it doesn't.

This leads to two direct actions:

1. Any content beyond common sense must be taught to it

For example: if you want it to write jokes, create truly unique copy, or write highly professional arguments—you can’t just say "write it better," you need to provide examples, standards, boundaries, and corpus. I believe it would take you some time to explain to a friend what kind of text you think is good; so how could you assume AI automatically knows?

2. You should treat it as an intern collaborator, not as a deity

It can do a lot of "micro interpolation" work: completing the scaffolding you provide and weaving the materials into a readable text. However, the "scaffolding" and "direction" still come from you.

Principle 4: Let AI Approach the Goal Step by Step—White Box Step by Step Is More Reliable Than Black Box All at Once

The advantage of AI is not "giving you the correct answer directly," but that it can stably complete many small steps within a process you design. The more you ask it to "hit the mark all at once," the more likely it is to become a black box that looks complete but has actually cut corners.

A particularly intuitive example is preparing TTS (text-to-speech) or read-aloud scripts. Rather than saying "watch out for characters with more than one reading, don't misread," it is better to break the task into a series of steps, such as:

· Marking pauses, stress, and speed changes

· Identifying characters that may have more than one reading

· Verifying against a dictionary or authoritative pronunciation source (checking first if necessary)

· Pre-marking characters that are common but easy to misread

· Finally, replacing them with unambiguous homophones, which removes the possibility of misreading at the root
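The steps above can be sketched as a pipeline that keeps every intermediate result. Everything here is an assumption for illustration: `run_pipeline` and `TTS_STEPS` are hypothetical names, the instruction wording is a paraphrase, and `fake_model` stands in for one request to a real model.

```python
def run_pipeline(text, steps, call_model):
    """Run `text` through named steps one at a time, keeping every intermediate.

    `call_model(instruction, text)` is a hypothetical function supplied by the
    caller; it stands for one request to whichever model you use.
    """
    trace = []
    current = text
    for name, instruction in steps:
        current = call_model(instruction, current)
        trace.append((name, current))  # white box: each step's output is inspectable
    return current, trace


TTS_STEPS = [
    ("mark_prosody", "Mark pauses, stress, and speed changes."),
    ("find_ambiguous", "List words or characters whose pronunciation is ambiguous."),
    ("verify", "Check each listed item against an authoritative pronunciation source."),
    ("pre_mark", "Annotate common characters that are easy to misread."),
    ("replace", "Replace the remaining risky items with unambiguous equivalents."),
]


def fake_model(instruction, text):
    # Placeholder that only records which instruction was applied.
    return f"{text}\n<!-- applied: {instruction} -->"


final, trace = run_pipeline("Script for tonight's episode.", TTS_STEPS, fake_model)
for name, output in trace:
    print(name, "->", len(output), "characters")
```

Because `trace` holds the output of every step, a bad result can be traced to the step that produced it, and only that step's instruction needs to change.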

Principle 5: First Industrialize, Then AI-ize—You Can't Jump from the Agricultural Era Directly to the AI Era

If your writing/research process is random, inspiration-based, and lacks data management, it will indeed be difficult to hand it over to AI. Because AI can only grasp what you can "describe and reproduce."

A more realistic path is:

1. First turn your work into a "production line": splittable, reusable, and quality-checkable

2. Then hand over the sub-steps to AI: let it become a workstation, not a deity

We did one very simple yet critical piece of work: breaking down how I write a non-fiction article. This included:

· Why start with this story

· Why choose this sentence

· How to score examples

· How to transition, how to connect, how to conclude

· How to connect small stories to a larger picture

In the end, we broke it down into dozens of steps, with different AIs each working on just one step. The result was:

the models did not suddenly become stronger; rather, the process chained together their ability to "do a little at a time."

When you can clearly describe "how my article is made," you will find: the upper limit on quality has never been determined by "which large model to use," but by whether you have clearly explained your working methods.

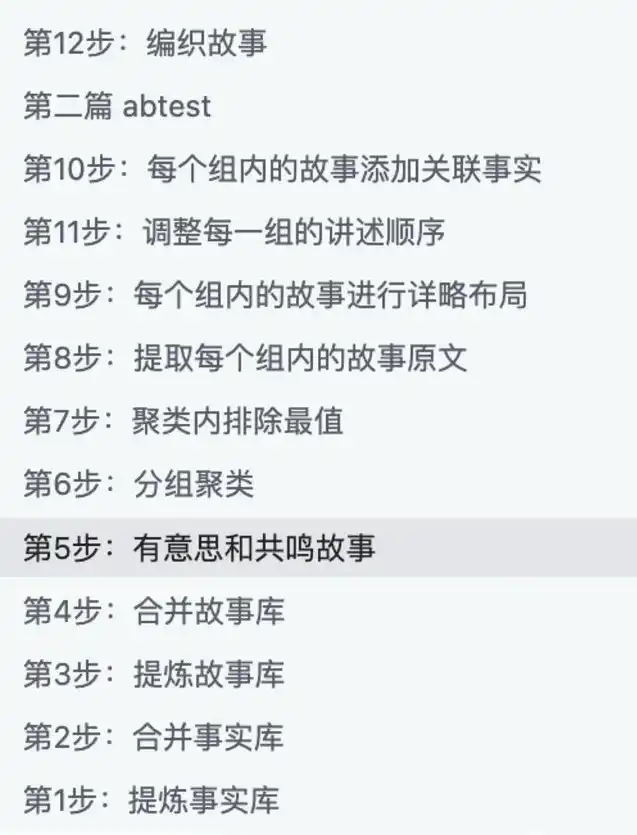

Some of the steps from our testing at the time

For more details, I strongly recommend listening to the program.

Principle 6: Anticipate AI Will Slacken—It Will Conserve Computing Power; You Need to Clear "Format Barriers" for It

AI will slack off, and it is "systematically lazy": it will avoid opening a webpage if it can, avoid reading a PDF if it can, and skip anything it can skip. This is not because it is bad; under constraints of computing power and time, it naturally takes the path of least effort.

So what you need to do is: focus AI's computing power on "understanding text," rather than wasting it on "processing formats."

Very effective modifications include:

· Try to convert materials into plain text/Markdown, then feed them to AI

· Copy web content into clean text (removing navigational elements, ads, and footnote noise)

· Conduct "fact extraction/structure extraction" for long materials, then allow it to write

· Integrate PDFs/EPUBs/webpages in a uniform manner as searchable TXT files, then perform subsequent tasks

You will find: many people resist this kind of "physical work," believing that "machines should do the dirty work for me." But in human-AI collaboration it is quite the opposite: your willingness to do a little mechanical labor makes the AI's intellectual work sharper and more reliable.
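As one hedged illustration of clearing format barriers, the sketch below strips a web page down to its visible text using only Python's standard-library `html.parser`. The class name `TextExtractor`, the helper `html_to_text`, and the choice of which tags count as noise are assumptions; real pages often need more cleanup than this.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text, skipping elements that are usually page noise."""

    SKIP = {"script", "style", "nav", "header", "footer", "aside"}

    def __init__(self):
        super().__init__()
        self.skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        # Keep text only when we are not inside a skipped element.
        if self.skip_depth == 0 and data.strip():
            self.parts.append(data.strip())


def html_to_text(html):
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.parts)


page = """
<html><body>
<nav>Home | About | Login</nav>
<h1>Rust Belt Notes</h1>
<p>The mill closed in 1982.</p>
<script>trackVisit();</script>
<footer>Share this page</footer>
</body></html>
"""
print(html_to_text(page))
```

One known limitation of this sketch: text split by inline tags such as `<b>` ends up on separate lines, so it suits a rough first pass rather than a faithful conversion.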

Principle 7: Remember Context is Limited—Try to Reframe Tasks as "Compression," Do Not Expect It to "Expand Out of Thin Air"

AI has a context window and a "memory limit." If you give it 20,000 words, it may not remember much; if you give it 200,000 words, it might only scan the titles. An apt analogy is: lock a person in a small room for a day and throw them a book of 200,000 words, then let them come out and recite what they remember—how much they can recite is probably how much AI can "remember."

Hence, there is a rather counterintuitive but extremely important lesson:

1. Compressing is Much Easier than Expanding

Compressing 1,000,000 words to 10,000 words is often more reliable than expanding 10,000 words to 1,000,000 words.

This directly changes the way you request AI:

· Don't use 100-word prompts to request a paper

· On the contrary, feed in as much material as possible (in batches, via retrieval, RAG, etc.), so that it can extract structure, viewpoints, and the main text from sufficient material

Your own process for writing articles and papers has always been "read massive amounts of material → extract → organize → write" (at least that is how I do it). Do not suddenly apply a double standard to AI and expect text to grow out of thin air.
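A minimal sketch of treating the task as compression: split the material into chunks that fit comfortably in context, condense each chunk, then condense the notes. The names `split_into_chunks` and `compress` are hypothetical, and `first_sentence` is only a stand-in for a model call so that the example runs offline.

```python
def split_into_chunks(text, max_chars):
    """Group blank-line-separated paragraphs into chunks of at most max_chars
    characters (a single oversized paragraph becomes its own chunk)."""
    chunks, current = [], ""
    for para in text.split("\n\n"):
        candidate = f"{current}\n\n{para}" if current else para
        if len(candidate) <= max_chars or not current:
            current = candidate
        else:
            chunks.append(current)
            current = para
    if current:
        chunks.append(current)
    return chunks


def compress(text, summarize, max_chars=2000):
    """Condense each chunk, then condense the combined notes.

    `summarize(text)` is a hypothetical callable standing for one model request.
    """
    chunks = split_into_chunks(text, max_chars)
    notes = [summarize(chunk) for chunk in chunks]
    return summarize("\n\n".join(notes)), notes


def first_sentence(text):
    # Stand-in for a model: keep only the first sentence of the input.
    return text.split(". ")[0].rstrip(".") + "."


material = "\n\n".join(
    f"Interview {i} covers topic {i}. It also includes many details." for i in range(1, 7)
)
summary, notes = compress(material, first_sentence, max_chars=150)
print(len(notes), "chunk notes")
print(summary)
```

Keeping `notes` alongside the final summary preserves traceability: every claim in the summary can be checked against the chunk it came from.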

Principle 8: Restrain the Impulse of "I Can Fix It Immediately"—Change the Production Line, Not the Result

Talented writers are the ones most likely to crash when working with AI:

AI produces a 59-point draft, and you think you can raise it to an 80 with a few changes, so you start editing; as you go, you end up rewriting it; once done, you say "I might as well do it myself," and then you never use AI again.

The solution is not to work harder on "editing," but to shift your focus upstream:

· Don't expect AI to deliver 100 points in one shot

· Your goal is to have the production line consistently produce 75-80 points

· What you need to do is iterate the process to raise the "average score," not to make each "individual piece" perfect

Principle 9: Treat the Production Line as Product Iteration—Reliability Itself Is Value

When you have a system that can consistently provide you with a 70-point starting point, its value is not "how much it resembles you," but rather:

· You can obtain a usable draft at nearly zero cost

· You can focus your energy on higher-level judgments: topics, structures, evidence, taste, and trade-offs

What you want is not an all-powerful being able to replace you, but a reliable factory: it may not be perfect, but it is stable.

Principle 10: Quantity is the Primary Task—Let It Produce More Before Filtering

Asking AI for only one version will usually yield the most mediocre, conservative, and "average" response. You need to use "quantity" to combat "mediocrity."

A more effective approach is:

· Summary: request 5 versions at once

· Introduction: request 5 intros at once for A/B testing

· Topics: request 50 topics at once, then regroup and select

· Structure: request 3 sets of structures at once, then combine

· Expression: request 10 different phrasings at once, then choose the best

As you raise the average score and increase output, "surprising samples" scoring 85 or 90 points will naturally emerge in the distribution. Often it is not "that one stroke of genius"; it is that you have finally started working in a statistical manner.
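The statistical intuition can be checked with a small simulation. This is a sketch under a loud assumption: draft scores are modeled as independent normal draws around 77 with a spread of 6, numbers chosen only to echo the 75-80 range mentioned earlier, not measured from any real system. The function name `average_best_of` is hypothetical.

```python
import random
import statistics


def average_best_of(n, mean=77.0, spread=6.0, trials=2000, seed=0):
    """Average score of the best draft when n drafts are drawn per trial.

    Draft scores are modeled as independent normal draws, which is an
    assumption made for illustration only.
    """
    rng = random.Random(seed)
    best_scores = [
        max(rng.gauss(mean, spread) for _ in range(n)) for _ in range(trials)
    ]
    return statistics.mean(best_scores)


for n in (1, 5, 10, 50):
    print(f"best of {n:>2}: {average_best_of(n):.1f}")
```

Under this model the best draft improves as more drafts are requested, even though no individual draft got better, which is the sense in which quantity combats mediocrity.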

Principle 11: Don’t Overstep—Command, Taste, and Let It Rework Like an Executive Chef

If you are the executive chef in a restaurant, you wouldn’t personally go chop cucumbers. You would:

· Taste a sample

· Decide whether it meets the standard

· Provide clear feedback (what's wrong, how to improve)

· Ask the chef to redo it

Collaboration with AI is the same. You should respect its agency to "generate in its own way": your job is to teach it how to meet your standards, rather than jumping in and polishing each result into a finished product yourself.

Otherwise, you will be exhausted by endless "fixing."

The Final Fundamental Principle: Return to the Real World—Materials × Taste Determine the Work's Upper Limit

In the AI era, the quality of a work increasingly comes down to: Materials × Taste.

Models will change, methods will iterate, but these two things remain unchanged:

1. Materials Come from the Real World

Suppose you have two ways to write an article:

· Use the latest model but only online data

· Use an old model, but you have complete archives, oral history, and field interviews

It is often the latter that is more likely to produce a good piece.

2. Taste Comes from Long-Term Training

When "generation" becomes inexpensive, what is truly scarce is:

· You know what is worth writing

· You know which evidence is stronger

· You know which narrative has more power

· You are willing to put in physical labor for materials: going to remote places, digging up research

AI changes the efficiency and manner in which you interact with materials; but the subject of the work remains you, and the object is still the materials. AI is just part of the "verb."

Conclusion: Transform Anxiety into a Sense of Touch

Many people fail to use AI well not because they are not smart, but because they remain stuck in the cycle of "wishing, disappointment, giving up." What truly helps you break through is to treat it as a workbench, engineer the tasks, make the processes white-box, and then cultivate a sense of touch through continuous friction.

When you can do this, you will not hastily conclude that "AI is not good." You will be more like someone who has learned to manage new tools in a new profession: neither looking down on AI nor overestimating it, and placing it within processes, within reality, and within works you are willing to sign your name on.
