Author: Luo Yihang, Silicon-based Stance

The token you once could see only if you believed in it is now visible without belief. It is the next one after Watt, Ampere, and Bit.

In January 2009, an anonymous individual invented something called a "token." You invest computing power and obtain tokens, which then circulate, are priced, and are traded within a consensus network. An entire cryptocurrency economy was born. More than a decade later, people are still debating whether this token has any value.

In March 2025, a man in a leather jacket redefined another thing called a token. You invest computing power and produce tokens, which are consumed immediately in AI inference: thinking, reasoning, coding, making decisions. The entire AI economy accelerated as a result. No one debates whether this token has value, because you used millions of them just this morning.

Two types of tokens, same name, same underlying structure: computing power goes in, valuable things come out.

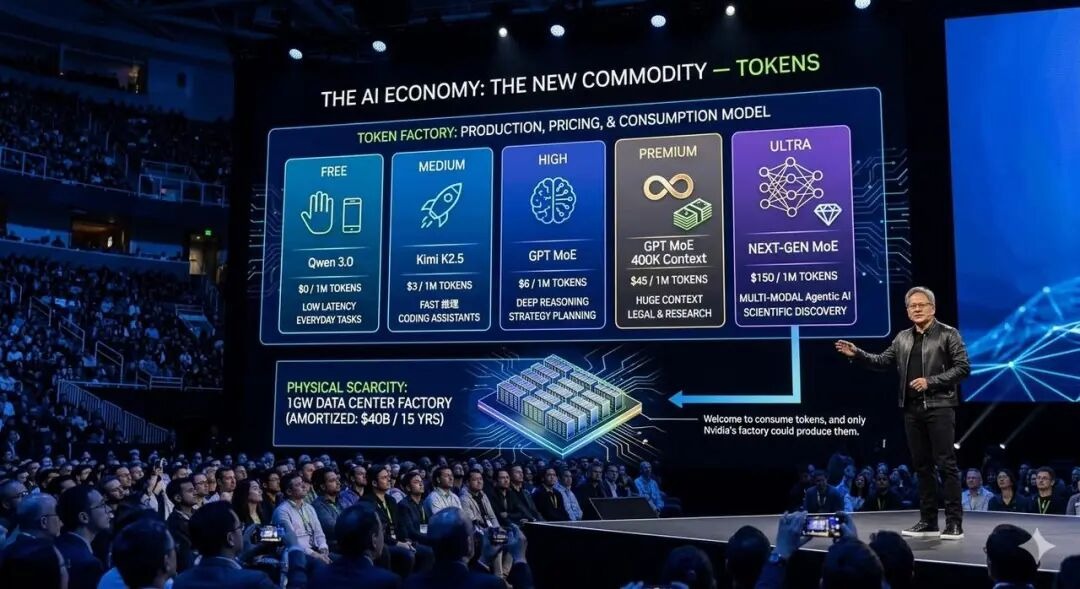

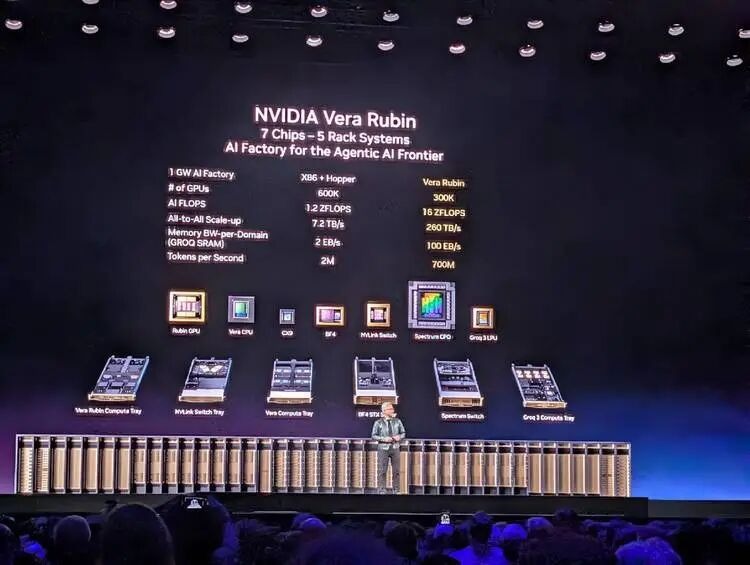

In March 2026, I sat in the NVIDIA GTC conference hall, listening to Jensen Huang give a keynote that barely pitched any products. Yes, he released Vera Rubin, a product that combines a CPU and a GPU. But this time he did not talk about chip specifications or manufacturing processes; he talked about a complete economics of token production, pricing, and consumption:

Which model corresponds to which token speed; which token speed corresponds to which pricing range; what level of hardware is needed to support a given pricing range.

He even prepared a computing power allocation plan for the CEOs and decision-makers holding the corporate checkbook in the audience: 25% for the free tier, 25% for the mid-tier, 25% for the high-end, and 25% for the high premium tier.

This time he did not pitch a specific GPU the way he did with Blackwell two years ago. He was selling something larger. After two hours, I felt that the sentence he most wanted to convey was: you are welcome to consume tokens, and only NVIDIA's factories can produce them.

At that moment, I realized that this man and the anonymous individual who mined the first token 17 years earlier were doing structurally the same thing.

The Same Set of Transformation Rules

The anonymous individual known as "Satoshi Nakamoto" wrote a nine-page white paper in 2008 and designed a set of rules: invest computing power, complete a mathematical proof (Proof of Work), and obtain crypto tokens as a reward.
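The rule described above (spend computation, produce a proof that anyone can check cheaply) can be illustrated with a toy sketch. This is not Bitcoin's actual block format or difficulty encoding: the function names, the `block_data:nonce` payload layout, and the `difficulty_bits` parameter are all hypothetical, chosen only to show the shape of the idea. The one element borrowed from Bitcoin is hashing with SHA-256 applied twice and comparing the result against a target.

```python
import hashlib


def pow_hash(block_data: str, nonce: int) -> bytes:
    """Double SHA-256 of a toy payload (hypothetical layout: 'data:nonce')."""
    payload = f"{block_data}:{nonce}".encode()
    return hashlib.sha256(hashlib.sha256(payload).digest()).digest()


def mine(block_data: str, difficulty_bits: int) -> tuple[int, str]:
    """Try nonces in order until the hash, read as an integer, is below the target."""
    # A smaller target means fewer acceptable hashes, hence more expected work.
    target = 1 << (256 - difficulty_bits)
    nonce = 0
    while True:
        digest = pow_hash(block_data, nonce)
        if int.from_bytes(digest, "big") < target:
            return nonce, digest.hex()
        nonce += 1


def verify(block_data: str, nonce: int, difficulty_bits: int) -> bool:
    """Checking a proof costs one hash, no matter how long the search took."""
    target = 1 << (256 - difficulty_bits)
    return int.from_bytes(pow_hash(block_data, nonce), "big") < target


if __name__ == "__main__":
    nonce, digest = mine("toy block", 12)  # about 4,096 attempts on average
    print(nonce, digest, verify("toy block", nonce, 12))
```

The asymmetry is the point: finding the nonce takes many hashes, while verifying it takes one, which is why participants do not need to trust whoever did the work.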

The brilliance of this rule is that no one needs to trust anyone: once you accept the rules, you automatically become a participant in this economy. The rule clearly works; after all, it brought so many untrustworthy people together.

And Jensen Huang did structurally the same thing on the GTC 2026 stage.

He showcased a graph highlighting the relationship and tension between reasoning efficiency and token consumption: the Y-axis is throughput (how many tokens are produced per megawatt of power), and the X-axis is interactivity (the token speed perceived by each user). Then he marked five pricing tiers below the X-axis: Free using Qwen 3, $0/million tokens; Medium using Kimi K2.5, $3/million tokens; High using GPT MoE, $6/million tokens; Premium using GPT MoE 400K context, $45/million tokens; and Ultra, $150/million tokens.
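To see how such a price ladder turns into revenue, here is a minimal sketch of a blended price per million tokens. The tier prices are the ones listed above; the token-volume mix is purely hypothetical. Note that it is a share of tokens sold, not the share of computing power allocated to each tier: higher tiers yield fewer tokens per megawatt, so the two would differ in practice.

```python
# Prices in USD per million tokens, as listed on the chart described above.
TIER_PRICE_USD = {
    "Free": 0.0,
    "Medium": 3.0,
    "High": 6.0,
    "Premium": 45.0,
    "Ultra": 150.0,
}


def blended_price(token_share: dict[str, float]) -> float:
    """Average revenue per million tokens for a given token-volume mix.

    token_share maps tier name -> fraction of all tokens sold in that tier.
    """
    total = sum(token_share.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"shares must sum to 1, got {total}")
    return sum(TIER_PRICE_USD[tier] * share for tier, share in token_share.items())


# Hypothetical mix for illustration only: equal token volume in four tiers.
example_mix = {"Free": 0.25, "Medium": 0.25, "High": 0.25, "Premium": 0.25}

if __name__ == "__main__":
    print(blended_price(example_mix))
```

Under this assumed mix, nearly all of the blended revenue comes from the Premium tier, which is one way to read why the upper end of the ladder matters so much to whoever builds the factory.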

This graph could almost serve as the cover of Jensen Huang's "token economics" white paper.

Satoshi defined what valuable computation is: finding a SHA-256 hash that falls below a difficulty target is valuable. And Jensen defined what valuable inference is: producing tokens at specific speeds for specific scenarios under given power constraints is valuable.

Neither Satoshi nor Jensen directly produced tokens; they both defined the rules for token production and pricing mechanisms.

One sentence Huang spoke on stage could almost be written directly into the abstract of a token economics white paper:

"Tokens are the new commodity, and like all commodities, once it reaches an inflection, once it becomes mature, it will segment into different parts."

Tokens are the new commodity. Once commodities mature, they naturally segment. He is not describing the current state; he is anticipating a market structure and then precisely laying out his hardware product line across each layer of this structure.

The production processes of the two types of tokens even share a semantic symmetry: producing one is called mining, and producing the other is called inference.

The essence of mining and inference is the same: turning electricity into money. Miners spend electricity to mine crypto tokens and then sell them; inference models and AI agents spend electricity to produce AI tokens, which are priced per million and sold to developers. The middle of the process differs, but both ends are the same: on the left is the electricity meter, on the right is the revenue.

Two Ways of Writing Scarcity

Satoshi's most important design decision was not Proof of Work, but the cap of 21 million bitcoins. He created artificial scarcity with code—no matter how many mining machines flood in, the total number of bitcoins will never exceed 21 million. This scarcity is the value anchor of the entire cryptocurrency economy.
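The cap is not stored anywhere as a number; it falls out of the issuance schedule. The sketch below adds up that schedule using parameters from Bitcoin's consensus rules rather than from this article: a 50 BTC initial block reward, halved every 210,000 blocks, counted in integer satoshis (1 BTC = 100,000,000 satoshis). The function name is made up for this example.

```python
SATOSHIS_PER_BTC = 100_000_000
BLOCKS_PER_HALVING = 210_000
INITIAL_SUBSIDY_SAT = 50 * SATOSHIS_PER_BTC


def total_supply_satoshis() -> int:
    """Sum every block reward until integer halving drives the reward to zero."""
    total = 0
    subsidy = INITIAL_SUBSIDY_SAT
    while subsidy > 0:
        total += BLOCKS_PER_HALVING * subsidy
        subsidy >>= 1  # halve with integer division; fractions of a satoshi are dropped
    return total


if __name__ == "__main__":
    supply = total_supply_satoshis()
    print(supply / SATOSHIS_PER_BTC)  # slightly below 21 million because of rounding
```

The series is geometric, so the total converges: no amount of extra mining hardware changes the sum, only who collects each reward.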

Jensen, on the other hand, created natural scarcity using physical laws. He stated:

"You still have to build a gigawatt data center. You still have to build a gigawatt factory, and that one gigawatt factory for 15 years amortized... is about $40 billion even when you put nothing on it. It's $40 billion. You better make for darn sure you put the best computer system on that thing so that you can have the best token cost."

A 1GW data center will never become 2GW. This is not a code limitation; it is a physical law.

Land, electricity, heat dissipation—each has a physical limit. The number of tokens a factory you built for $40 billion can produce over its 15-year lifecycle entirely depends on what computing architecture you put into it.
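The arithmetic behind this can be sketched in a few lines. The $40 billion, 15 years, and 1 GW come from the quote above; everything else is an assumption made for illustration: that the facility draws full power around the clock, that the $40 billion covers only the empty facility, and the `tokens_per_sec_per_mw` throughput values, which are hypothetical placeholders rather than measured figures.

```python
HOURS_PER_YEAR = 8760  # 365 days; leap days ignored


def fixed_cost_per_mwh(capex_usd: float, years: float, capacity_mw: float) -> float:
    """Facility cost spread over every megawatt-hour it can deliver (assumes 100% utilization)."""
    return capex_usd / (years * HOURS_PER_YEAR * capacity_mw)


def fixed_cost_per_million_tokens(
    capex_usd: float, years: float, capacity_mw: float, tokens_per_sec_per_mw: float
) -> float:
    """Facility cost embedded in each million tokens at a given throughput per megawatt."""
    tokens_per_mwh = tokens_per_sec_per_mw * 3600  # one MW running for one hour
    return fixed_cost_per_mwh(capex_usd, years, capacity_mw) / tokens_per_mwh * 1_000_000


if __name__ == "__main__":
    # Figures from the quote: $40 billion, 15 years, 1 GW (1,000 MW).
    print(fixed_cost_per_mwh(40e9, 15, 1000))  # roughly $304 per MWh
    # Hypothetical throughputs, only to show the scaling.
    for throughput in (1_000, 2_000, 4_000):
        print(throughput, fixed_cost_per_million_tokens(40e9, 15, 1000, throughput))
```

Because the facility cost per megawatt-hour is fixed, doubling the tokens produced per megawatt halves the facility cost carried by each token. That is the sense in which the architecture inside the building determines "the best token cost."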

Satoshi's scarcity can be forked. If you dislike the cap of 21 million, fork a new chain, change it to 200 million, call it Ether or whatever, go ahead, and publish a white paper while you're at it. And people have indeed done so, relishing the process.

However, the scarcity created by Jensen cannot be forked. After all, you cannot fork the second law of thermodynamics, you cannot fork the capacity of a city's power grid, and you cannot fork the physical area of a piece of land.

But whether it comes from Satoshi or from Jensen, the scarcity leads to the same result: a hardware arms race.

The history of mining runs CPU → GPU → FPGA → ASIC. Each generation of specialized hardware makes the previous one obsolete. The history of AI training and inference is replaying it: Hopper → Blackwell → Vera Rubin → Groq LPU. It starts with general-purpose hardware and ends with specialized hardware locking in. Huang's Groq LPU, released after acquiring Groq, is a deterministic dataflow processor: static compilation, compiler-driven scheduling, no dynamic scheduling, 500 MB of on-chip SRAM. Architecturally, it is in effect an ASIC for inference. It does one thing, but does it to perfection.

Interestingly, GPUs have played a key role in both waves of this trend.

Around 2013, miners found that GPUs were better suited than CPUs to mining crypto tokens, and NVIDIA graphics cards sold out. Ten years later, researchers found that GPUs were the best tools for training AI models and running inference on them, and NVIDIA data center cards sold out again. GPUs, as a class of processors, have served two successive generations of the token economy.

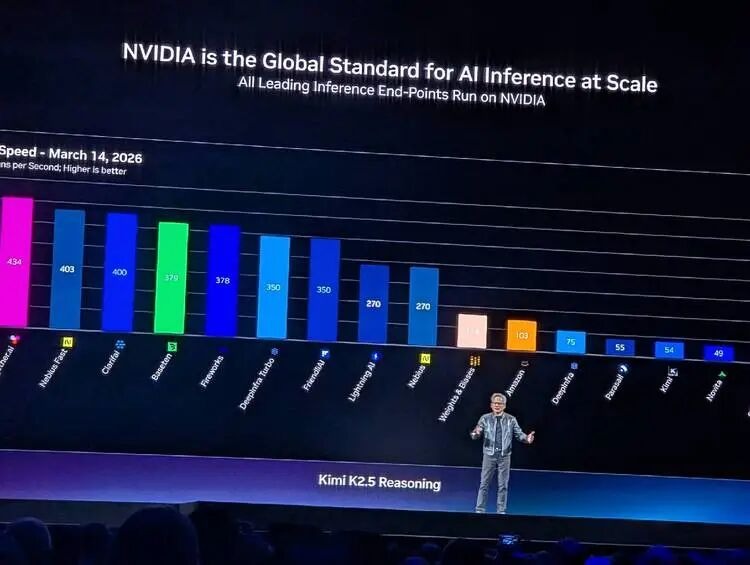

The difference is that in the first wave, NVIDIA was a passive beneficiary and nothing more. In the second wave, when the battlefield for AI computing power shifted from pre-training to inference, NVIDIA quickly seized the opportunity and actively designed the entire game, becoming the author of the rules of AI.

The World's Most Profitable Shovel

In the gold rush, the most profitable were not the prospectors but the merchants who sold them supplies, such as Levi Strauss: the proverbial shovel sellers. In the mining frenzy, the most profitable were not the miners, but Bitmain and Jihan Wu, who sold mining machines. In the AI pre-training and inference waves, the most profitable are not the base models and agents, but NVIDIA, which sells GPUs.

Honestly, the roles of Bitmain and NVIDIA in their respective industries are no longer comparable.

- Bitmain only sells mining machines, while NVIDIA was once a supplier to Bitmain. Once you buy a mining machine, what coin to mine, which pool to use, and at what price to sell, have nothing to do with Bitmain. It is a pure hardware supplier, earning one-time equipment profits.

- NVIDIA is different. It does not just sell hardware. Especially since inference-side AI took off in 2025, it has gone deep into defining which GPUs to "mine" with, how tokens are priced, to whom they are sold, and how data centers should allocate computing power. All of this is in Huang's slides: he segmented the market into five tiers, each with its own models, context lengths, interaction speeds, and prices. NVIDIA has standardized and formatted the coming inference-driven AI market.

Around 2018, global hash power was concentrated in a few major mining pools (F2Pool, Antpool, BTC.com) that competed for share, while the supply of mining machines was highly concentrated in Bitmain.

NVIDIA today looks much the same: 60% of its revenue comes from competing "hyperscalers" such as AWS, Azure, GCP, Oracle, and CoreWeave, while 40% comes from a dispersed set of AI-native companies, sovereign AI projects, and enterprise clients. Large "mining pools" contribute the majority of revenue, while small "miners" provide resilience and diversification.

The two ecosystems are structured in the same way. But Bitmain later faced competitors: Whatsminer, Innosilicon, and Canaan Creative all eroded its share. Mining machines are relatively simple ASIC designs, so followers have a chance. Shaking NVIDIA, however, seems increasingly difficult: a 20-year CUDA ecosystem, hundreds of millions of installed GPUs, six generations of NVLink interconnect, and the decoupled inference architecture that followed the Groq integration. NVIDIA's technical complexity and ecosystem barriers have blunted most competitive tools.

This might continue for 20 years.

The Fundamental Fork Between Two Types of Tokens

What fundamentally distinguishes cryptocurrency tokens from AI training and inference tokens is the motivation and psychology of their usage.

The demand side for crypto tokens is speculation. No one "needs" Bitcoin to complete work. All white papers claiming blockchain tokens can solve problems are fraudulent. You hold crypto because you believe someone will buy it from you at a higher price in the future. Bitcoin's value comes from a self-fulfilling prophecy: as long as enough people believe it has value, it has value. This is a faith economy.

The demand side for AI tokens, on the other hand, is productivity. Nestlé needs tokens to make supply chain decisions: its supply chain data now refreshes every 3 minutes instead of every 15, and costs fell by 83%. This value maps directly to the P&L. 100% of NVIDIA's engineers already rely on tokens to write code rather than writing it by hand; research teams need tokens for scientific research. You do not need to believe tokens have value; you only need to use them, and the value proves itself through use.

This is the essential difference between the two types of tokens. Crypto tokens are produced to be held and traded; their value lies in not being used. AI tokens are produced to be consumed immediately; their value lies in the moment of use.

One is digital gold, becoming more valuable as it's hoarded; the other is digital electricity, produced to be burned.

This distinction means the AI token economy will not be as bubble-prone as the crypto token economy. Bitcoin fluctuates wildly because the prices of speculative products are driven by emotion. The price of AI tokens, however, is driven by usage and production costs. As long as AI remains useful, so long as people are still using Claude Code to write code, ChatGPT to write reports, and agents to run business workflows, the demand for tokens will not collapse. It does not rely on faith; it relies on necessity.

- In 2008, the Bitcoin white paper needed to repeatedly emphasize why a decentralized electronic cash system is valuable. Seventeen years later, people are still debating.

- In 2026, token economics did not trigger any debate; it even became a consensus without needing proof. When Huang stood on the GTC stage and said "tokens are the new commodity," no one questioned it. Because every person in the audience had used up millions of tokens with Claude Code or ChatGPT that morning. They did not need convincing about the value of tokens—their credit card bills had already proven it.

In this sense, Huang is indeed a replica of Satoshi, but one who also monopolizes the production of mining machines, defines in Satoshi's place the use cases and specifications of the tokens, and hosts an annual show at the SAP Center in San Jose to demonstrate how powerful the next generation of "mining machines" for AI training and inference will be.

Satoshi has a restrained kind of allure; he designed the rules, handed them to the code, and then disappeared. This is the romance of the cypherpunks. Huang, however, is more businessman than scientist; he designs the rules, maintains them personally, and keeps building and deepening his moat.

You once saw that token because you believed in it; you can now see it without belief. It is the next one after Watt, Ampere, and Bit.
