Author: Deep Thought Circle

Most people think AI is just a chatbot. You open ChatGPT to help you revise an email, and it works like magic. You close the page satisfied, feeling like you understand what AI is about. But that is like swiping a credit card at a restaurant and thinking you understand how Visa makes money: you used the product, but you didn't see the system.

Investor Anish Moonka recently published an in-depth article that systematically analyzes the value chain of the AI industry. He spent nearly a year figuring out how money flows through the AI sector. He admits frankly that he took many detours, focusing on products you can see and touch, such as ChatGPT, Claude, and Gemini, while $700 billion quietly flowed into infrastructure whose names he couldn't even pronounce: chips you've never heard of, packaging technologies that sound made up, cooling systems, and power plants. Concrete is being poured in Texas, Iowa, and Hyderabad.

This article inspired me greatly. It made me realize that our understanding of AI may have been misaligned from the start. What we see is just the tip of the iceberg, while true wealth creation happens quietly beneath the surface.

The Five-Layer Cake: Why No One Talks About the Bottom Four Layers

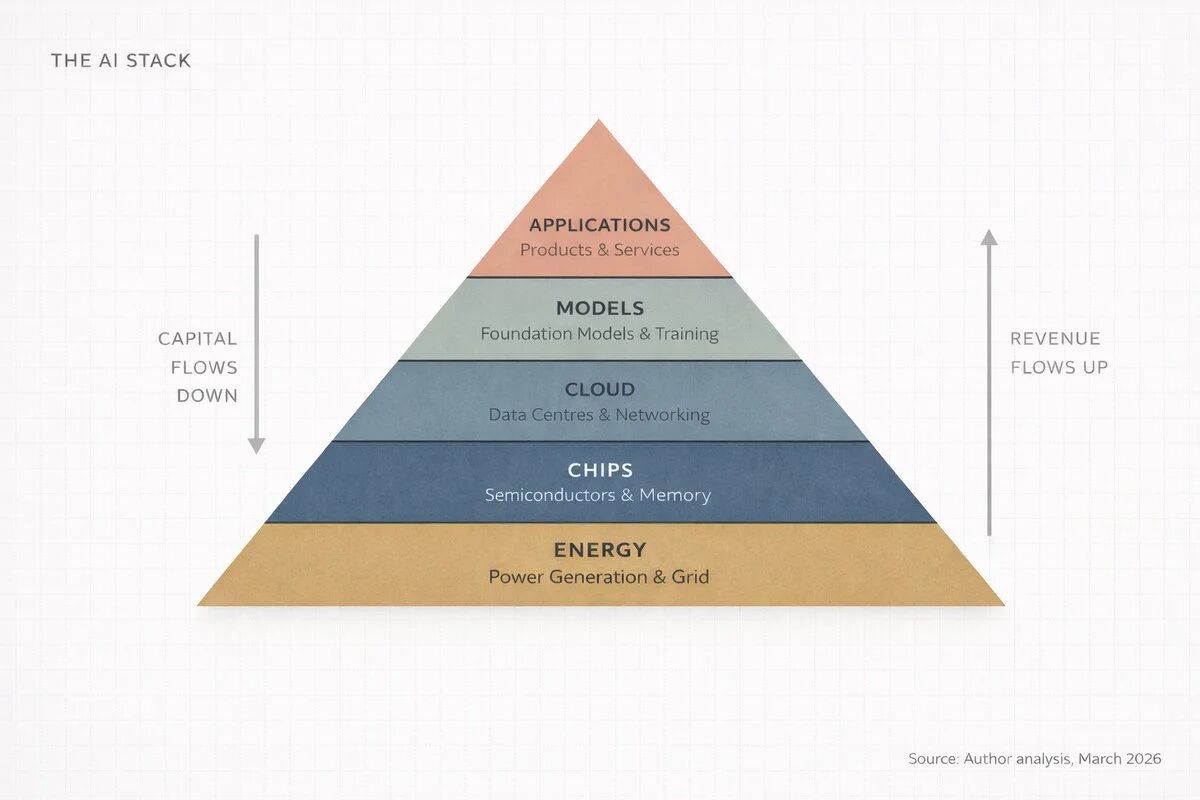

Nvidia CEO Jensen Huang described AI as a five-layer system at the Davos Forum in January 2026: energy, chips, cloud computing, models, applications. He referred to the entire system as "the largest infrastructure build in human history." Anish Moonka calls this framework the AI Stack and points out that each layer supports the layers above it, with capital flowing bidirectionally between these layers.

This five-layer structure is actually quite easy to understand. The energy layer provides electricity; AI data centers consume an astonishing amount of power, with a single large training run using as much electricity as an entire town does in a year. The chip layer provides dedicated processors built for massive mathematical computation, which are not the chips you find in ordinary laptops. The cloud computing layer consists of gigantic warehouses filled with these chips and connected by ultra-fast networks. The model layer is the actual AI software, the "brain" that learns patterns from data. The application layer is where people actually use the products, such as ChatGPT, Google Search, and bank fraud detection systems.

I found it interesting that almost all discussions about AI focus on the fifth layer, the application layer. That's because it's what we can see, touch, and use. But Anish points out a critical fact: focusing solely on the fifth layer ignores 80% of the picture. For investors, entrepreneurs, or anyone wanting to understand where the world is heading, what truly matters is how money flows between these layers. It concentrates, compounds, and aggregates, and right now it is concentrating in places that most people are not even watching.
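To make the stack concrete, here is a minimal sketch of the five layers as a small data structure. The `Layer` class, the `AI_STACK` list, and the `supporting_layers` helper are hypothetical names I made up for illustration; only the layer names and roles come from the article. One possible reading of the 80% figure, and it is only my assumption, is simply that four of the five layers sit below the applications.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    level: int  # 1 = bottom of the stack, 5 = top
    name: str
    role: str


# Layer names and roles follow the article; the structure itself is illustrative.
AI_STACK = [
    Layer(1, "Energy", "supplies electricity to data centers"),
    Layer(2, "Chips", "dedicated processors for large-scale math"),
    Layer(3, "Cloud computing", "warehouses of chips joined by fast networks"),
    Layer(4, "Models", "the AI software that learns patterns from data"),
    Layer(5, "Applications", "products people actually use"),
]


def supporting_layers(level: int) -> list[str]:
    """Return the names of every layer that sits beneath the given level."""
    return [layer.name for layer in AI_STACK if layer.level < level]


if __name__ == "__main__":
    below_apps = supporting_layers(5)
    # Share of the stack that an applications-only view leaves out.
    print(below_apps, len(below_apps) / len(AI_STACK))
```

Calling `supporting_layers(5)` lists everything an application such as ChatGPT depends on, which is the part of the picture the article says most discussions skip.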

Think about the meaning of the word "infrastructure." Roads, power grids, water supply systems: these are the things that keep civilization running, yet no one thinks about them until they break down. AI is becoming one of those things: unseen, essential, and extremely costly to build. This also explains why no one discusses data center cooling systems or grid capacity at cocktail parties, and that very silence is a sign that real money is flowing there.

Where the Money Went: A Counterintuitive Truth

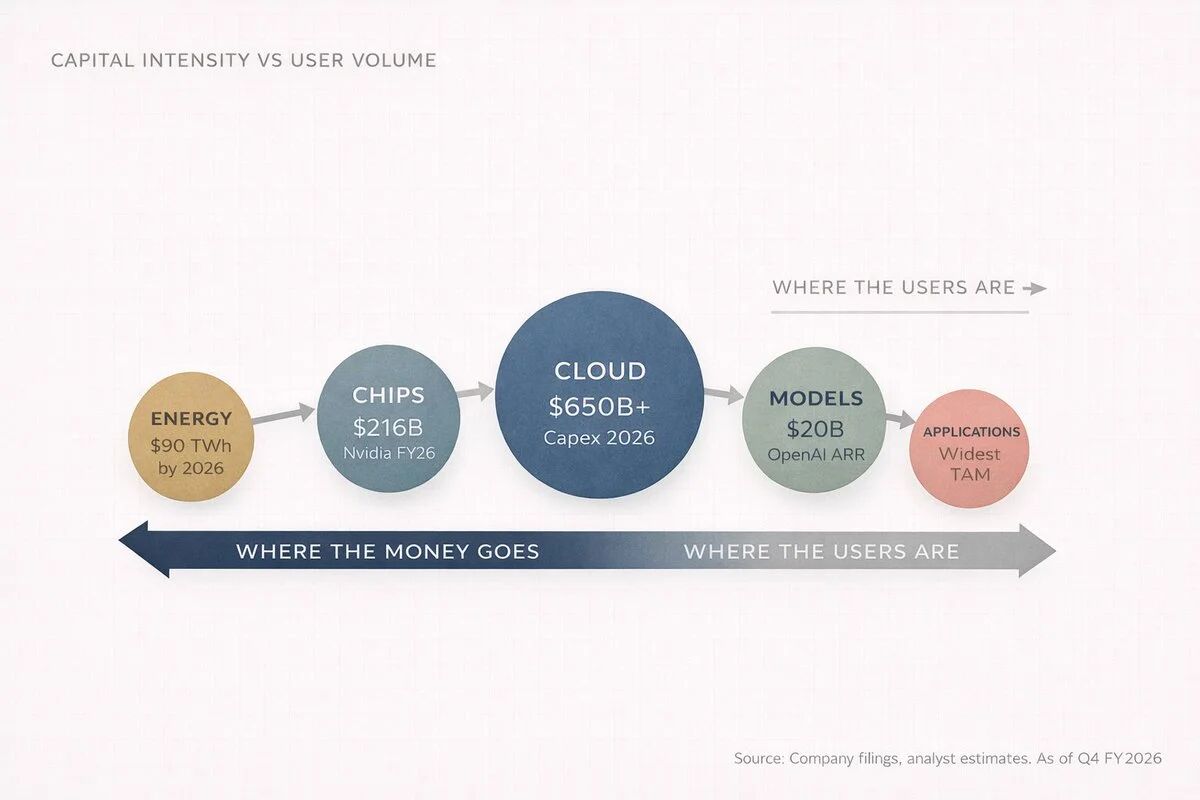

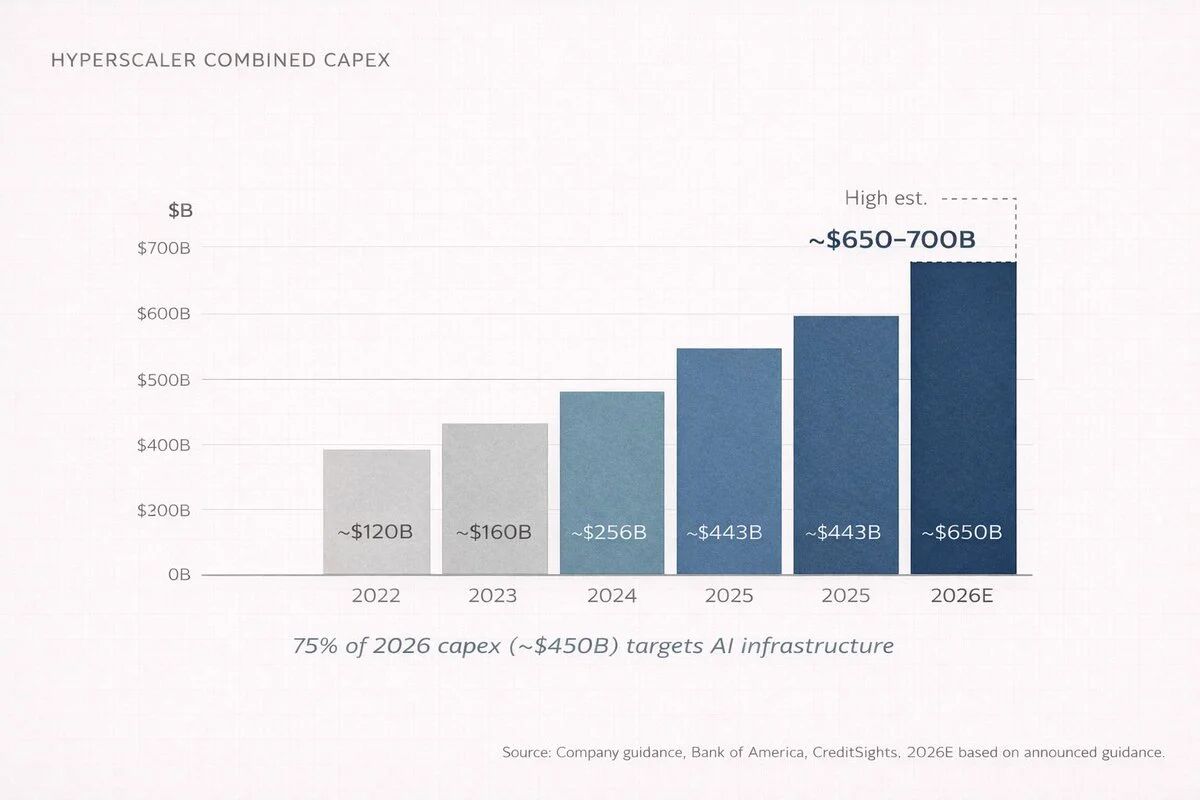

Anish revealed a set of astonishing numbers in his article. In 2026, the four major cloud computing companies (Amazon, Microsoft, Google, and Meta) are expected to spend between $650 billion and $700 billion on capital expenditures (capex). What does that mean? It is roughly equivalent to Switzerland's GDP for a year. About 75% of that, which the article puts at roughly $450 billion, will go directly into AI infrastructure: not chatbots, not applications, but buildings, chips, cables, and cooling systems.
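A quick check of the arithmetic is worthwhile here, because the two figures quoted for AI infrastructure (75% of capex and $450 billion) do not line up exactly. The sketch below uses only the numbers from the paragraph above; the constant and function names are my own, and the suggestion that the two figures come from different base estimates is an assumption, not something the article states.

```python
# All amounts are in billions of US dollars, as quoted in the article.
CAPEX_LOW_BN = 650.0
CAPEX_HIGH_BN = 700.0
AI_SHARE = 0.75             # "about 75%" of total capex
AI_INFRA_QUOTED_BN = 450.0  # dollar figure quoted alongside the 75%


def ai_infra_range(low: float, high: float, share: float) -> tuple[float, float]:
    """AI infrastructure spend implied by applying the share to the capex range."""
    return low * share, high * share


def implied_share(amount: float, low: float, high: float) -> tuple[float, float]:
    """Share of capex implied by a dollar amount, at the high and low ends of the range."""
    return amount / high, amount / low


if __name__ == "__main__":
    print(ai_infra_range(CAPEX_LOW_BN, CAPEX_HIGH_BN, AI_SHARE))
    print(implied_share(AI_INFRA_QUOTED_BN, CAPEX_LOW_BN, CAPEX_HIGH_BN))
```

Applying 75% to the quoted range gives roughly $490 billion to $525 billion, while $450 billion corresponds to about 64% to 69% of that range. Either way, the order of magnitude and the point of the argument are unchanged.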

This figure made me rethink the entire logic of the AI industry. Before anyone uses ChatGPT, someone has to build a data center the size of a shopping mall, fill it with thousands of dedicated processors, connect them with network equipment worth more than the total value of most companies, and then provide the entire system with enough electricity to power a small city every day. This is what is happening from the first layer to the third layer—the invisible layers where serious capital is being deployed at scale.

But here lies a deeper contradiction. Everyone thinks companies like OpenAI are making big money, and they indeed are. OpenAI reached an annual recurring revenue (ARR) of $20 billion by the end of 2025, up from $6 billion a year earlier and $2 billion two years prior. A tenfold growth in two years; historically, no company has been able to scale from such a base at this speed.
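The growth claim is easy to verify from the three ARR figures quoted above. This is a minimal sketch: the year labels are inferred from "end of 2025", "a year earlier", and "two years prior", and the function names are hypothetical.

```python
# Year-end annual recurring revenue in billions of US dollars, per the article.
ARR_BN = {2023: 2.0, 2024: 6.0, 2025: 20.0}


def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def annualized_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate over the given number of years."""
    return (end / start) ** (1 / years) - 1


if __name__ == "__main__":
    print(growth_multiple(ARR_BN[2023], ARR_BN[2025]))
    print(annualized_growth(ARR_BN[2023], ARR_BN[2025], years=2))
```

The two-year multiple is exactly tenfold, which works out to a compound rate of a little over 216% per year, or slightly more than tripling each year.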

However, Anish revealed a key fact: OpenAI burned about $9 billion in cash in 2025, and its cash burn is expected to reach $17 billion in 2026. Its inference costs, meaning what it actually costs to run the AI when you ask it questions, reached $8.4 billion in 2025 and are expected to hit $14.1 billion in 2026. The company expects to achieve positive cash flow by 2029 or 2030.

Where did all that burned cash go? Anish provided the answer: it flows downward through the entire tech stack. It goes to Microsoft Azure (OpenAI is obliged to pay Microsoft 20% of its total revenue until 2032), to Nvidia for chip purchases, to companies that build and equip data centers, and to power companies generating electricity. There is an almost circular pattern here: Microsoft invests in OpenAI, OpenAI spends on Azure, Azure generates more revenue to buy more Nvidia chips, Nvidia reports record earnings, and everyone is celebrating. Cash keeps flowing downwards.

I think this reveals a fundamental cognitive gap: most users are at the top of the tech stack, while most profits are at the bottom. This disconnect is at the core of the investment logic. In Anish's words, this is the first lesson of the AI value chain: revenue flows up, capital flows down. As investors or observers, we often get attracted by revenue growth while overlooking that capital accumulation is the real moat.

History Repeats Itself: Lessons from the Power Revolution

Anish made a brilliant historical analogy in his article. If you want to understand what's happening with AI, look at what happened during the power revolution from 1880 to 1920. When Thomas Edison built the first commercial power station on Pearl Street in Manhattan in 1882, people thought electricity was just a novelty, a fancy way to light up a room. Why do we need this when gas lamps work just fine?

But in just 40 years, electricity reshaped every industry on Earth—manufacturing, transportation, communication, healthcare, and entertainment. The winning companies weren't the ones that invented light bulbs, but rather those that built power plants, laid copper wires, and manufactured generators: General Electric, Westinghouse Electric, utility companies, copper miners, and builders.

The same pattern is playing out in the AI space, only compressed from decades to years. Anish refers to this phenomenon as "Infrastructure Gravity." Whenever a new computing platform emerges, initial wealth creation happens in "picks and shovels." Applications may come later, getting all the media attention, but infrastructure captures all the profit margins.

The numbers alone show the force of this argument. Nvidia reported $215.9 billion in revenue for the 2026 fiscal year (ending January 2026), a 65% increase from the previous year. Its data center division alone brought in $62.3 billion in the last quarter, up 75% year-over-year. This single division now accounts for over 91% of Nvidia's total revenue. This is a company generating $68 billion in a quarter, with nine-tenths of it coming from a single line of business.
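The Nvidia figures above can be cross-checked against each other. The sketch below, with variable and function names of my own choosing, derives the data center share of quarterly revenue and the prior-year revenue implied by a 65% increase. It assumes the $68 billion and $62.3 billion figures refer to the same quarter, which is how I read the paragraph.

```python
# Figures in billions of US dollars, as quoted in the article.
DATA_CENTER_QUARTER_BN = 62.3
TOTAL_QUARTER_BN = 68.0
FY2026_REVENUE_BN = 215.9
YOY_GROWTH = 0.65


def segment_share(segment: float, total: float) -> float:
    """Fraction of total revenue contributed by one segment."""
    return segment / total


def implied_prior_year(current: float, growth: float) -> float:
    """Prior-year revenue implied by the current figure and its growth rate."""
    return current / (1 + growth)


if __name__ == "__main__":
    print(round(segment_share(DATA_CENTER_QUARTER_BN, TOTAL_QUARTER_BN), 3))
    print(round(implied_prior_year(FY2026_REVENUE_BN, YOY_GROWTH), 1))
```

The share comes out at about 91.6%, consistent with "over 91%", and the implied prior-year revenue is roughly $131 billion.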

TSMC, which actually manufactures Nvidia chips and nearly all other mainstream chips, held nearly 70% of the global wafer foundry market in 2025, generating $122.5 billion in sales. The nearest competitor, Samsung, had only 7.2%. Anish commented that this dominance would make even Standard Oil uneasy.

I particularly agree with Anish's point: if you ask anyone what the internet revolution was about, they'll say Google, Amazon, and Facebook. But if you ask where the early money was actually made, the answer is Cisco, Corning, and the companies that laid fiber optics. The same story, different decade. Infrastructure always wins first; the question is just how long this window remains open.

The Investor's Map: Layering Opportunities

Anish spent considerable space in the article breaking down investment opportunities by layer. I find this section particularly valuable, as it turns abstract concepts into actionable investment frameworks.

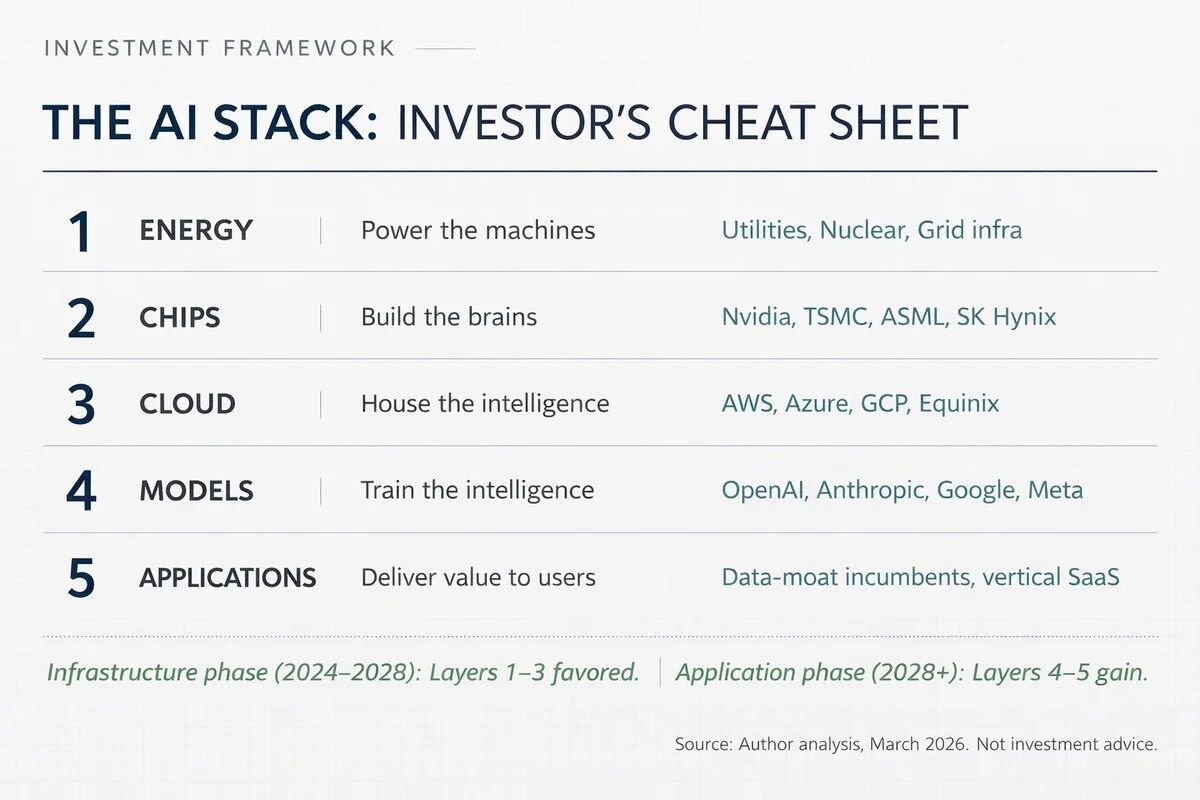

First layer: Energy. The power consumption of AI data centers is astonishing, projected to reach about 90 terawatt-hours annually by 2026, growing nearly tenfold from 2022 levels. This creates a direct investment argument: any company that can generate, transmit, and provide reliable power to data centers will benefit. Jensen Huang's comment in October 2025 is particularly illustrative: "The speed at which data centers generate their own power may far exceed that of accessing the grid." This means tech companies are becoming their own utility providers, bypassing traditional power grids. This trend leads me to think that investment opportunities in energy infrastructure may be closer to the tech industry than most people imagine.

Second layer: Chips. This is the layer most people have heard of because of Nvidia. But Anish points out that the chip layer is far more complex than any one company. It has its own sub-layers: designers (Nvidia, AMD, Broadcom, etc.), manufacturers (TSMC dominates with a 70% market share), equipment suppliers (ASML is the only company on Earth that makes extreme ultraviolet (EUV) lithography machines), memory suppliers (SK Hynix, Samsung, Micron), and packaging technology providers.

The concentration here is striking. Nvidia holds about 92% of the AI data center GPU market. TSMC manufactures chips for nearly all major chip designers. ASML is the sole supplier of EUV lithography machines. One company designs, one company builds, and one company makes the machines that do the building. Anish notes that this concentration is both an investment thesis and a geopolitical risk. I think this observation is crucial: such extreme concentration means high profits coexist with high risks.

Third layer: Cloud computing and data centers. The market is dominated by three cloud computing giants: Amazon Web Services (31% market share), Microsoft Azure (24%), and Google Cloud (11%). But this layer is much more than just these giants. Foxconn currently assembles about 40% of AI servers worldwide, Arista Networks and Credo Technology build network infrastructure, Vertiv handles liquid cooling, data center REITs (real estate investment trusts) own the land and buildings, and someone even has to pour the concrete.

Anish mentioned a shocking number: according to Bank of America, cloud computing giants will allocate 90% of their operating cash flow to capex in 2026, up from 65% in 2025. Morgan Stanley estimates these companies will borrow over $400 billion this year to fund construction, more than double the $165 billion borrowed in 2025. That is $400 billion in bond issuance in a single year, just to build warehouses full of computers. The scale is unprecedented.
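To see how tight this squeeze is, here is a small sketch using the Bank of America and Morgan Stanley figures quoted above. The helper names and the illustrative $100 of operating cash flow are my own assumptions; only the percentages and borrowing totals come from the article.

```python
# Capex as a share of operating cash flow, and borrowing in billions of USD.
CAPEX_SHARE = {2025: 0.65, 2026: 0.90}
BORROWING_BN = {2025: 165.0, 2026: 400.0}


def cash_left_after_capex(operating_cash_flow: float, capex_share: float) -> float:
    """Operating cash flow remaining once capex has been paid for."""
    return operating_cash_flow - operating_cash_flow * capex_share


def borrowing_multiple(previous: float, current: float) -> float:
    """How many times larger current borrowing is than the previous year's."""
    return current / previous


if __name__ == "__main__":
    for year, share in CAPEX_SHARE.items():
        # Per hypothetical $100 of operating cash flow.
        print(year, cash_left_after_capex(100.0, share))
    print(round(borrowing_multiple(BORROWING_BN[2025], BORROWING_BN[2026]), 2))
```

For every $100 of operating cash flow, about $35 was left after capex in 2025 and only about $10 would be left in 2026, while borrowing rises to roughly 2.4 times the previous year's level. That gap is why debt is filling in.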

Fourth layer: Models. This is the "brain" layer, including OpenAI (GPT series, ARR over $20 billion), Anthropic (Claude, reportedly around $19 billion in annualized revenue at the start of 2026), Google DeepMind (Gemini), Meta AI (Llama), and others. Anish's assessment of this layer is apt: it is both the most hyped and the least profitable. The problem with the business model is structural: models improve as you spend more on compute, but that spending grows faster than revenue. It's a bit like running a restaurant where each dish requires more expensive ingredients than the last, but customers expect prices to remain the same. Profit margins are constantly being squeezed.

Fifth layer: Applications. This is the level we see every day, including ChatGPT, Google Search, Microsoft Copilot, etc. This is the widest and most crowded level, and while it will ultimately become the largest total addressable market, it is also currently the thinnest in profits and the most uncertain in competition. Anish points out that the differentiating factor at this level is data. Companies with unique proprietary data will build lasting advantages—Salesforce has enterprise CRM data, Bloomberg has financial data, and Epic has medical records.

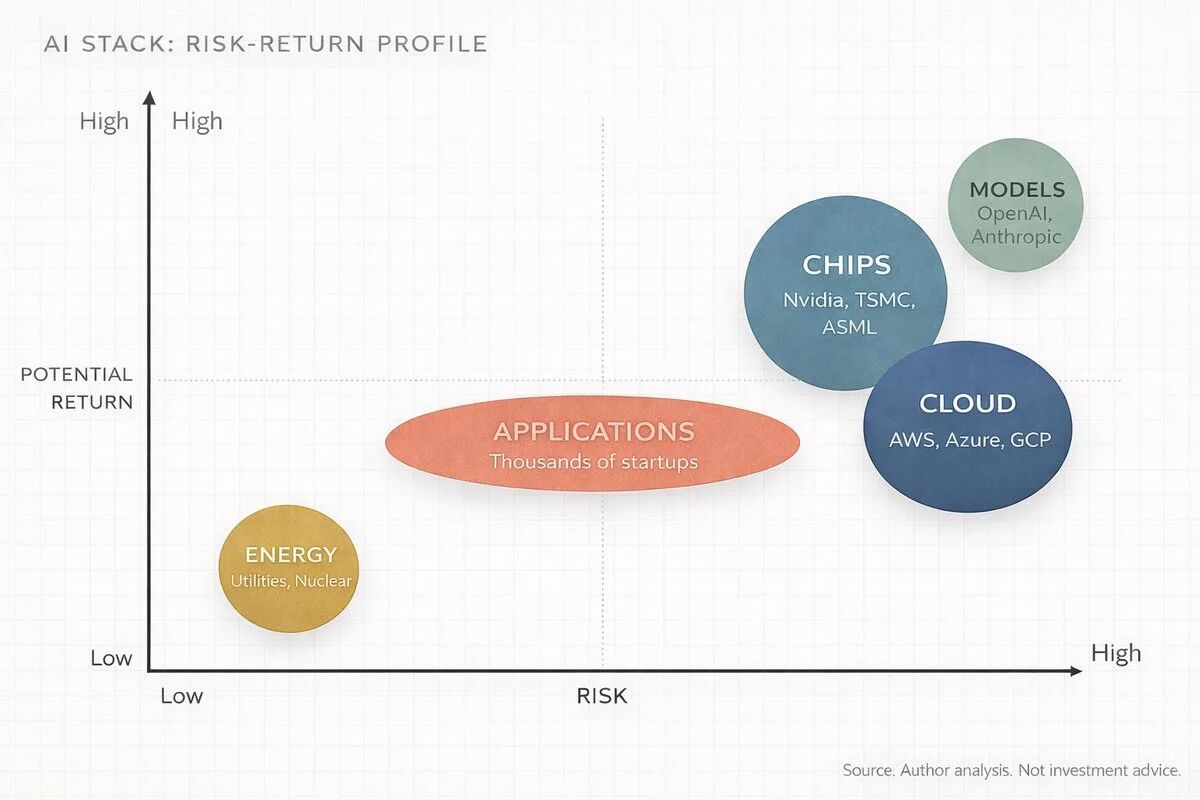

I strongly agree with Anish's judgment: the best returns over the next 3 to 5 years may come from investing in infrastructure now and in applications later. The smartest capital has already positioned itself accordingly. The companies that will truly win at the application level are those sitting on data that others cannot access, and most of them don't even call themselves AI companies.

Is This a Bubble: A Question That Must Be Faced

Anish directly addressed a core question in his article: "Isn't this just a repeat of the internet bubble? Massive infrastructure spending, no profits, and everyone is swept up in the hype?" His answer is quite persuasive.

The distinction lies in the timing of demand. During the internet bubble, companies were building infrastructure for demand that had yet to materialize. Fiber networks and servers were in place, but users were still dialing in to connect. Demand didn't truly explode until 5 to 7 years later, and the companies caught in between were wiped out.

In 2026, AI demand is already here and growing rapidly. Nvidia can't produce chips fast enough, TSMC's advanced packaging capacity is sold out, cloud computing rental prices are rising instead of falling, and OpenAI added 400 million weekly active users from March to October 2025 alone. Models are being used, compute is being consumed, and customers are paying.

However, Anish also honestly pointed out three major risks. The first is capital misallocation: if AI service revenue does not materialize quickly enough to justify the more than $650 billion being spent, some companies will face severe profit compression, and even Amazon's free cash flow could turn negative this year. The second is concentration: TSMC holds nearly 70% of the global foundry market, ASML is the sole supplier of EUV machines, and Nvidia designs 92% of AI data center GPUs, so any geopolitical disruption or natural disaster could ripple through the entire tech stack. The third is the DeepSeek problem: in January 2025, Chinese AI lab DeepSeek achieved near-frontier performance at a fraction of the training cost, challenging the assumption that "higher spending equals better AI."

I think Anish's honesty about the risks makes his analysis more credible. He doesn't shy away from these issues but lays them out clearly. Yet even considering these risks, McKinsey estimates that global data center investments could reach $6.7 trillion by 2030, while PwC estimates that AI could contribute $15.7 trillion to global GDP by 2030. Even if these numbers are wrong by 50%, we are still talking about the largest tech-driven economic shift since the internet.

Anish said something with which I strongly agree: "It's fine to be skeptical of the model, to be skeptical of the timelines, but don't be ignorant of the supply chain. Those are different things. One is a healthy intellectual stance, the other will cost you money."

Playing the Game at the Right Level

Anish used a gaming metaphor to summarize investment strategies. Imagine AI as a video game with five levels, each with different difficulties and rewards. The energy layer is the tutorial stage, providing low-risk, steady returns. The chip layer is the boss battle, with the highest profits but played on the hardest difficulty setting. The cloud computing layer is a multiplayer server where giants take a cut of everything. The model layer is the PvP arena, where brutal competition eliminates most players. The application layer is the open world, filled with infinite possibilities but no guaranteed loot.

His meta strategy is simple: you don't have to play all five levels. Most people try to play the fifth level because it's the most obvious, but smart money is grinding on the second and third levels because that's where the highest XP is right now.

I believe the value of this framework lies in how it clarifies that your position in the tech stack determines what you should focus on. If you are non-technical, you don't need to understand how GPUs work; you need to understand that someone has to manufacture them, someone has to house them, and someone has to power them, and that these "someones" are publicly traded companies. If you are technical, you already know models are advancing, but you may be underestimating how fast physical constraints are becoming bottlenecks. If you are an investor, the AI value chain consists of five distinct trades, each with a different risk and return profile; treating "AI" as a single industry is as naive as treating "tech" as a single industry back in 1998.

Anish finally pointed out that this infrastructure advantage won't last forever. At some point, infrastructure building will mature, the application layer will consolidate, and value will shift up the tech stack, just as it did during the internet era—where the value ultimately captured by Amazon, Google, and Facebook exceeded that of fiber companies and server manufacturers. But that moment has not yet arrived; we are still in the infrastructure phase, the picks and shovels phase. And picks and shovels are printing money.

After reading Anish's lengthy article, my biggest takeaway is understanding a simple yet profound truth: consumers look at products, investors look at supply chains, and the best investors see supply chains long before products ship. Five years from now, the names that win this cycle will feel obvious; they always do. The game is to see the structure before others catch on.

Ten years from now, understanding the AI tech stack will be as fundamental as understanding balance sheets. Learn the tech stack, map the layers, and follow the capital. That's the game.
