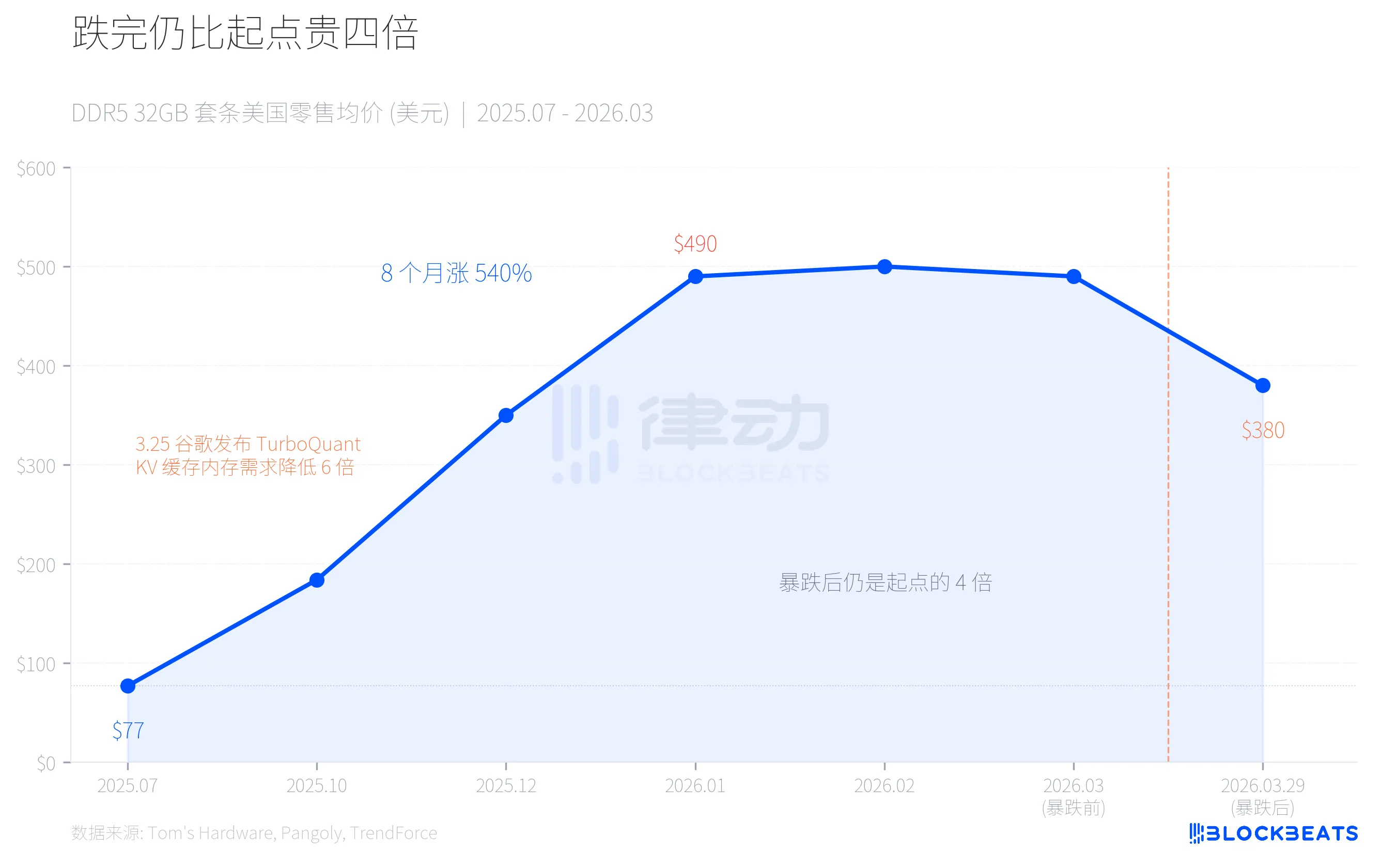

On March 29, memory prices fell off a cliff simultaneously in Huaqiangbei, Shenzhen's electronics market, and in U.S. retail. Corsair's 32GB DDR5-6400 kit fell from $490 to $380, a single-day decline of 22%. In the Chinese market, 32GB high-frequency DDR5 kits plunged by 800 yuan in just one week as channel dealers dumped stock in a panic. Some distributors said, "It dropped more than a hundred in a single day."

Over a longer timeline, however, the picture looks completely different: even after the drop, DDR5 still costs more than four times what it did in July 2025. What happened was a supply-demand mismatch running through the AI industry chain, in which the same force first created a shortage and then triggered a panic over excess.

Roller Coaster: 540% Increase in Eight Months, 22% Decrease in One Month

In July 2025, a mainstream 32GB DDR5-6000 kit in the U.S. retail market cost only $77. By January 2026, the price of the same kit had soared to $490, an increase of roughly 540% in eight months.
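The percentages quoted in this article can be checked with a few lines of arithmetic. The sketch below uses only the retail prices cited in the text ($77, $490, $380). It assumes, for illustration, that the DDR5-6000 and DDR5-6400 kits can be compared on one price series, which the article does not establish.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new (positive means an increase)."""
    return (new - old) / old * 100.0

# Retail prices in USD as quoted in the article.
JULY_2025 = 77.0
PEAK = 490.0
AFTER_DROP = 380.0

rise = pct_change(JULY_2025, PEAK)    # run-up from July 2025 to the peak
drop = pct_change(PEAK, AFTER_DROP)   # decline from the peak
multiple = AFTER_DROP / JULY_2025     # post-drop price relative to July 2025

print(f"rise: {rise:.0f}%")
print(f"drop: {drop:.1f}%")
print(f"post-drop multiple: {multiple:.1f}x")
```

On these inputs the rise comes out at about 536%, which the article rounds to 540%, and the post-drop price is a little under five times the July 2025 level.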

The price increase was not caused by consumers suddenly rushing to upgrade their computers. According to TrendForce data, DRAM contract prices in the first quarter of 2026 rose by 90%-95% quarter-on-quarter, with PC DRAM up more than 100%, the largest quarterly increase on record. The driving force was the insatiable demand from AI infrastructure for a specialized type of memory.

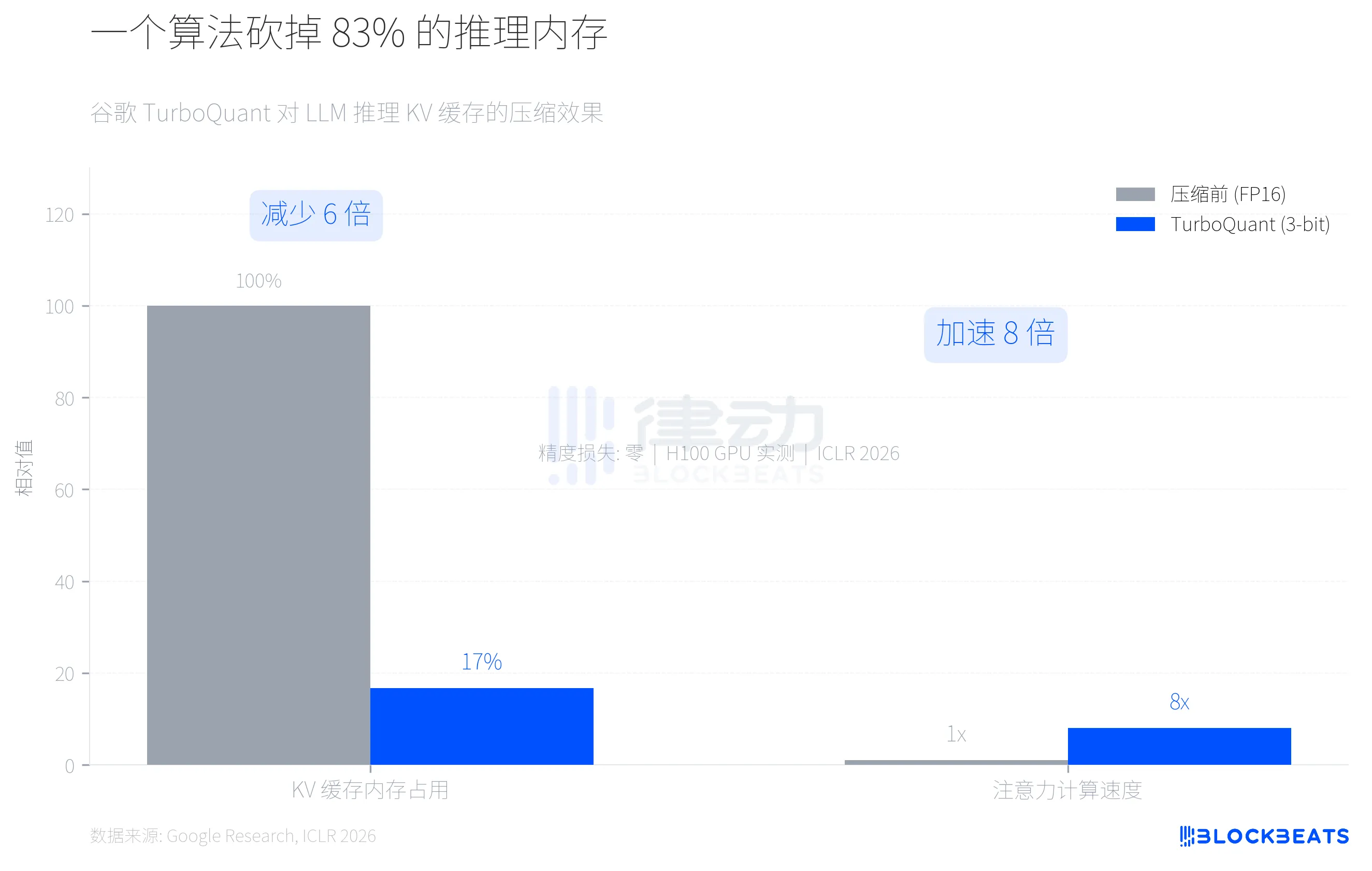

Then, on March 25, Google released a compression algorithm called TurboQuant. Four days later, memory prices collapsed.

Where Did the Capacity Go? HBM Consumed Your Memory Modules

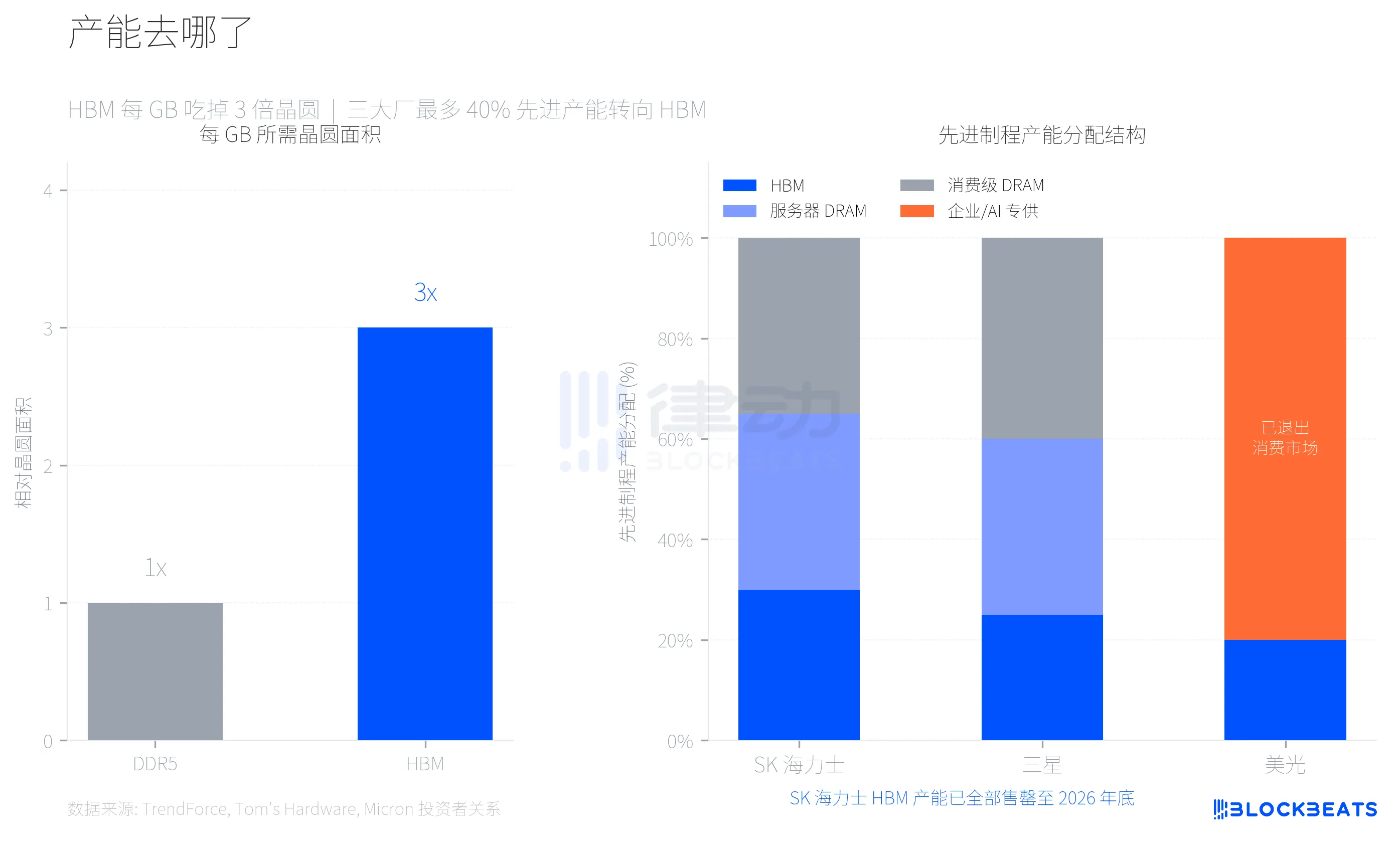

To understand this round of price increases, one key technical parameter matters. HBM (High Bandwidth Memory, the stacked memory used in AI accelerators such as NVIDIA's GPUs) consumes three times the wafer area per GB of regular DDR5. According to Tom's Hardware, this means that the same wafer yields only one-third as much capacity when it is used for HBM instead of DDR5.

Faced with HBM's high profit margins, the three major memory manufacturers, Samsung, SK Hynix, and Micron, made a rational choice and shifted up to 40% of their advanced-process wafer capacity to HBM production. According to TrendForce data, the profit margin of DDR5 is expected to exceed that of HBM3e for the first time in the first quarter of 2026, a sign of how severely consumer-grade memory supply has been squeezed.
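A rough way to see what this shift does to supply is to express output as a fraction of an all-DDR5 baseline. The sketch below is a simplification under stated assumptions: it takes the 3x area ratio and the 40% share from the text, assumes every shifted wafer would otherwise have produced DDR5, and ignores yield and process differences. The function name `output_after_shift` is hypothetical.

```python
HBM_AREA_RATIO = 3.0  # wafer area per GB of HBM relative to DDR5, as cited in the article

def output_after_shift(hbm_share: float, area_ratio: float = HBM_AREA_RATIO):
    """Return (ddr5_gb, hbm_gb) as fractions of an all-DDR5 baseline.

    Assumes every wafer moved to HBM would otherwise have made DDR5.
    """
    if not 0.0 <= hbm_share <= 1.0:
        raise ValueError("hbm_share must be between 0 and 1")
    ddr5_gb = 1.0 - hbm_share        # wafers still producing DDR5
    hbm_gb = hbm_share / area_ratio  # same wafers yield 1/area_ratio as many GB
    return ddr5_gb, hbm_gb

ddr5, hbm = output_after_shift(0.40)
print(f"DDR5 output: {ddr5:.0%} of baseline")
print(f"HBM output:  {hbm:.1%} of baseline GB")
print(f"Total GB:    {ddr5 + hbm:.1%} of baseline")
```

Under these assumptions, moving 40% of wafers to HBM cuts DDR5 output to 60% of the baseline and total gigabytes produced to roughly 73%, which illustrates why the squeeze on consumer memory is described as structural.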

Micron's choice was the most aggressive. In December 2025, the company announced it would close its consumer brand Crucial, which had operated for 29 years, completely exiting the consumer memory and storage market and fully transitioning to enterprise and AI customers. According to Micron's investor relations announcement, its total revenue for fiscal year 2025 was $37.38 billion, with data centers and AI applications accounting for 56% of total revenue. The consumer market was no longer worth pursuing.

SK Hynix's HBM capacity is already fully sold out until the end of 2026. Samsung plans to increase its monthly HBM production capacity from 170,000 wafers to 250,000 wafers by the end of 2026. The new fabs (Samsung P4L and SK Hynix M15X) will not achieve mass production until at least 2027-2028. In other words, the supply gap of consumer-grade DRAM is structural and cannot be alleviated in just one or two quarters.

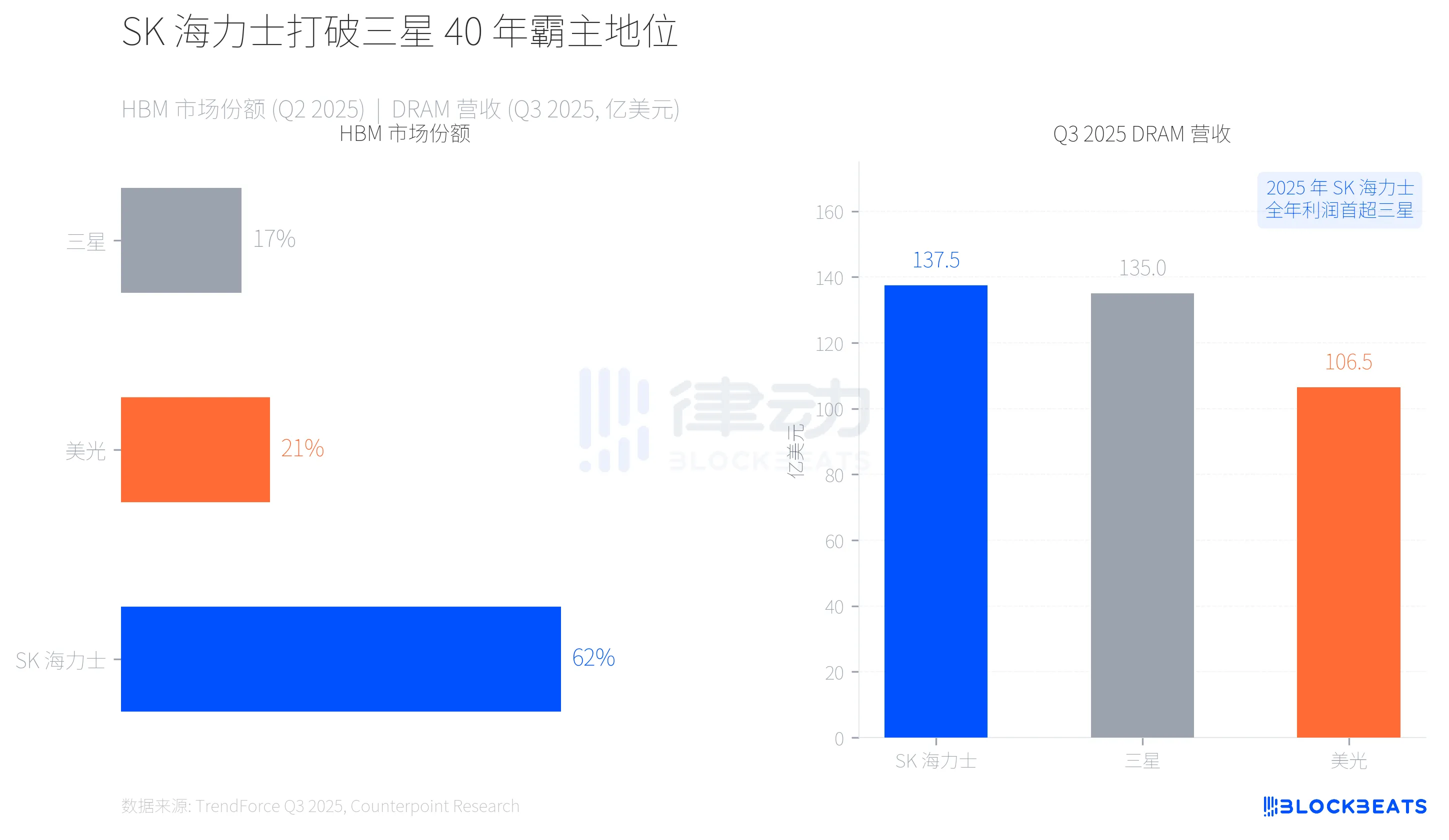

Power Shift: SK Hynix Breaks Samsung's 40-Year Dominance

This shift in capacity has also rewritten the power landscape of the memory industry. According to TrendForce data, in the second quarter of 2025, SK Hynix secured 62% of the HBM market share due to its deep ties with NVIDIA, while Samsung held only 17% and Micron 21%.

More significantly, the ranking reversed on the revenue front. According to TrendForce's Q3 2025 report, SK Hynix topped the list for the first time with $13.75 billion in quarterly DRAM revenue, while Samsung followed closely with $13.50 billion. The gap was only $250 million, but it marked the first time in nearly 40 years that Samsung had lost the top position in memory revenue. According to CNBC, SK Hynix's operating profit for the full year 2025 also surpassed Samsung's for the first time.

Its early-mover advantage in HBM gave SK Hynix considerable leverage, but the race is far from over. Samsung is pushing hard to catch up on HBM4 mass production, while Micron, despite having exited the consumer market, is posting the fastest revenue growth in the enterprise and AI sectors among the three major manufacturers (Q3 quarter-on-quarter growth of +53.2%).

How One Algorithm Shook the Pricing Logic

On March 25, Google presented the TurboQuant algorithm at ICLR 2026. The algorithm does one thing: it compresses the KV cache (key-value cache, the largest consumer of memory during large language model inference) from FP16 precision down to 3 bits, cutting memory usage by a factor of at least 6 while speeding up attention computation on H100 GPUs by up to 8 times. According to Google's research blog, there was zero accuracy loss on five long-context benchmarks, including Needle-in-a-Haystack.
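To get a feel for the scale involved, the size of a KV cache can be estimated from the model shape and the number of bits stored per element. The sketch below is a back-of-the-envelope estimate, not TurboQuant itself: the model shape (32 layers, 8 KV heads, head dimension 128, 131,072 tokens) is hypothetical, and the calculation ignores any scale factors or other metadata a real quantizer stores. It shows only the raw bit-width ratio of 16/3, about 5.3x; the "at least 6 times" figure is Google's reported result and is not derived here.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bits, batch=1):
    """Bytes needed to hold keys and values for every layer and token."""
    # Factor of 2 covers the separate key and value tensors.
    elements = 2 * layers * kv_heads * head_dim * seq_len * batch
    return elements * bits / 8

# Hypothetical model shape, chosen only for illustration.
SHAPE = dict(layers=32, kv_heads=8, head_dim=128, seq_len=131_072)

GIB = 2**30
fp16 = kv_cache_bytes(bits=16, **SHAPE)
q3 = kv_cache_bytes(bits=3, **SHAPE)

print(f"FP16 cache:  {fp16 / GIB:.1f} GiB")
print(f"3-bit cache: {q3 / GIB:.1f} GiB")
print(f"raw ratio:   {fp16 / q3:.2f}x")
```

For this hypothetical shape, a single 131,072-token sequence needs 16 GiB of cache at FP16 and 3 GiB at 3 bits, which is the kind of saving the market extrapolated to inference-side DRAM demand.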

The market quickly did the math. If TurboQuant or similar algorithms are widely adopted by mainstream AI companies, the incremental demand for DRAM from AI inference will drastically shrink. The core narrative supporting the rising memory prices over the past six months was precisely that "AI infrastructure consumed too much memory capacity."

Four days later, channel confidence collapsed.

It should be noted that TurboQuant targets the KV cache on the AI inference side, not HBM demand on the training side. The supply-demand balance for HBM will not change in the short term because of a single inference optimization. However, the market does not always distinguish between the two. According to Sina Finance, before the crash, speculators from outside the industry had flooded Chinese channels to hoard stock as prices rose. High prices cut retail sales by more than 60%, and once cash flow tightened, a chain of sell-offs amplified the decline.

An entire AI industry chain simultaneously created both a shortage of memory and a panic of excess. The physical capacity pressure of HBM left consumer-grade memory in short supply, while the efficiency breakthrough of TurboQuant caused expectations for AI memory demand to plummet. The same force manufactured both price increases and the collapse.
