By Sleepy.md

Unfortunately, in this era, the more earnestly and unreservedly you work, the faster you are distilled into a skill that AI can replace.

In recent days, trending lists and news feeds have been flooded with "Colleague.skill." As the topic keeps fermenting across the major social platforms, public attention has, almost predictably, been swept up in the grand anxieties of "AI layoffs," "capital exploitation," and "the digital immortality of workers."

These are indeed worrying, but what worries me most is a single line in the project's README:

"The quality of raw materials determines the quality of the skill: it is recommended to prioritize collecting long articles they voluntarily write > decision-making replies > daily messages."

The people the system can distill most completely, and reproduce down to the pixel, are precisely those who work the most diligently.

They are the ones who still sit down to write a retrospective after every project wraps up; the ones who, when a disagreement arises, will spend half an hour typing a long message in the chat box, sincerely laying out the logic behind their decision; the ones so responsible that they meticulously record every detail of their work in the system.

Seriousness, once the most revered workplace virtue, has now become a catalyst for rapidly transforming workers into AI fuel.

Exploited Workers

We need to reconceptualize a term: context.

In everyday contexts, "context" is the background for communication. But in AI, especially in the world of rapidly growing AI Agents, context is the fuel for the engine's roar, the blood that sustains the pulse, and the only anchor point that allows the model to make precise judgments amidst chaos.

An AI stripped of context, no matter how impressive its parameter count, is nothing but an amnesiac search engine. It cannot recognize who you are, cannot fathom the undercurrents beneath the business logic, and cannot know the prolonged tug-of-war and trade-offs you went through, within a web of resource constraints and interpersonal maneuvering, before you made a decision.

"Colleague.skill" has caused such a stir precisely because it coldly and accurately locks onto the richest deposit of high-quality context there is: modern enterprise collaboration software.

Over the past five years, the Chinese workplace has undergone a quiet yet bone-deep digital transformation. Tools like Feishu, DingTalk, and Notion have turned into massive corporate knowledge bases.

Taking Feishu as an example, ByteDance has publicly stated that the number of documents generated internally each day is enormous, and these densely packed characters faithfully encapsulate every instance of brainstorming, every heated meeting confrontation, and every strategic compromise made by more than one hundred thousand employees.

This degree of digital penetration far exceeds that of any previous era. Knowledge once had warmth: it lay dormant in the minds of seasoned employees and drifted through casual chat in the breakroom. Now all of that human wisdom and experience has been wrung dry and left to settle in cold server arrays in the cloud.

In this system, if you don't write documents, your work cannot be seen and new colleagues cannot collaborate with you. The efficient operation of the modern enterprise is built on every employee "offering up" context to the system, day after day.

Diligent workers, full of effort and goodwill, expose their trains of thought on these cold platforms without reservation. They do so to help the team's gears mesh more smoothly, to prove their value to the system, and to find their place within an intricate business behemoth. They are not willingly surrendering themselves; they are merely adapting, clumsily and earnestly, to the survival rules of the modern workplace.

But it is precisely this context left for interpersonal collaboration that becomes AI's perfect fuel.

Feishu's admin console has a feature that lets super administrators batch export members' documents and communication records. This means that the project retrospectives and decision-making logic you spent three years crafting, across countless late nights, can be packaged into a soulless compressed file in a few minutes with a single API call.

When Humans Are Reduced to APIs

As "Colleague.skill" exploded in popularity, deeply unsettling derivatives began to appear in GitHub Issues and across social platforms.

Some people created "Ex-Partner.skill," feeding old WeChat chat records into an AI so that it could keep arguing, or keep expressing affection, in that familiar tone. Some built "White Moonlight.skill," reducing an unattainable crush to a cold interpersonal sandbox in which they rehearse probing conversations over and over, cautiously searching for the emotionally optimal move. Others produced "Dad Flavor Boss.skill," pre-chewing their boss's oppressive, manipulative (PUA-style) phrases in digital space to build themselves a sad kind of psychological defense.

These skills have left the realm of work efficiency entirely. Without quite noticing, we have grown adept at using the cold logic we reserve for tools to dissect and objectify flesh-and-blood human beings.

The Austrian-born philosopher Martin Buber proposed that human relationships take two fundamentally different forms: "I and thou" and "I and it."

In the encounter of "I and thou," we set prejudice aside and regard the other as a complete and dignified being. This bond is open without reservation and alive with unpredictability, and its very sincerity makes it fragile. Once a relationship falls into the shadow of "I and it," however, a living human is reduced to an object that can be dismantled, analyzed, and labeled. Under this thoroughly utilitarian gaze, the only thing we care about is, "What use is this thing to me?"

The emergence of products like "Ex-Partner.skill" marks the complete invasion of the instrumental rationality of "I and it" into our most private emotional realms.

In a real relationship, a person is three-dimensional, full of wrinkles, constantly flowing with contradictions and rough edges. A person’s reaction can vary drastically depending on specific contexts and emotional interactions. Your ex may react completely differently to the same statement upon waking in the morning versus after working late at night.

However, when you distill a person into a skill, what you extract is merely the part of them that was "useful" to you, the part that could "generate utility" within that particular bond. The warm, self-aware individual is drained of their soul in this cruel purification and alienated into a "functional interface" that you can plug in, unplug, and call upon as you please.

It must be admitted that AI did not conjure this chilling harshness out of thin air. Before the emergence of AI, we had already become accustomed to labeling others, accurately measuring the "emotional value" and "network weight" of each relationship. For instance, we quantify people’s attributes into charts on dating markets; we categorize colleagues at work as "capable" or "slackers." AI merely makes this implicit functional extraction between people explicit.

Humans have been flattened, leaving only a single cross-section: "What use is this to me?"

Electronic Patina

In 1958, Hungarian-British philosopher Michael Polanyi published "Personal Knowledge." In this book, he proposed a compelling concept: tacit knowledge.

Polanyi famously stated, "We can know more than we can tell."

He illustrated this with the example of learning to ride a bicycle. A skilled rider keeps perfect balance through every shift of weight, yet cannot convey that moment-to-moment intuition to a beginner through physics formulas or plain words. They know how to ride but cannot articulate how. This knowledge, which cannot be codified or verbalized, is tacit knowledge.

The workplace is full of such tacit knowledge. A seasoned engineer troubleshooting a system failure might pinpoint the problem with one glance at the logs, yet struggle to document an "intuition" built on thousands of rounds of trial and error; an outstanding salesperson may suddenly fall silent at the negotiating table, and no sales manual can capture the weight and timing of that silence; an experienced HR professional can sense a candidate's dishonesty from half a second of averted eyes.

"Colleague.skill" can only extract those explicit forms of knowledge that have already been written down or spoken. It can capture your retrospective documents but cannot grasp the angst you felt while writing them; it can replicate your decision replies but cannot replicate the intuition that drove your decision-making.

What is distilled by the system is always just a shadow of a person.

If the story ended here, it would merely be another crude imitation of humanity by technology.

Yet, when a person is distilled into a skill, this skill does not remain static. It will be used to reply to emails, write new documents, make new decisions. In other words, these AI-generated shadows begin to produce new contexts.

These AI-generated contexts will then settle in Feishu and DingTalk, becoming training materials for the next round of distillation.

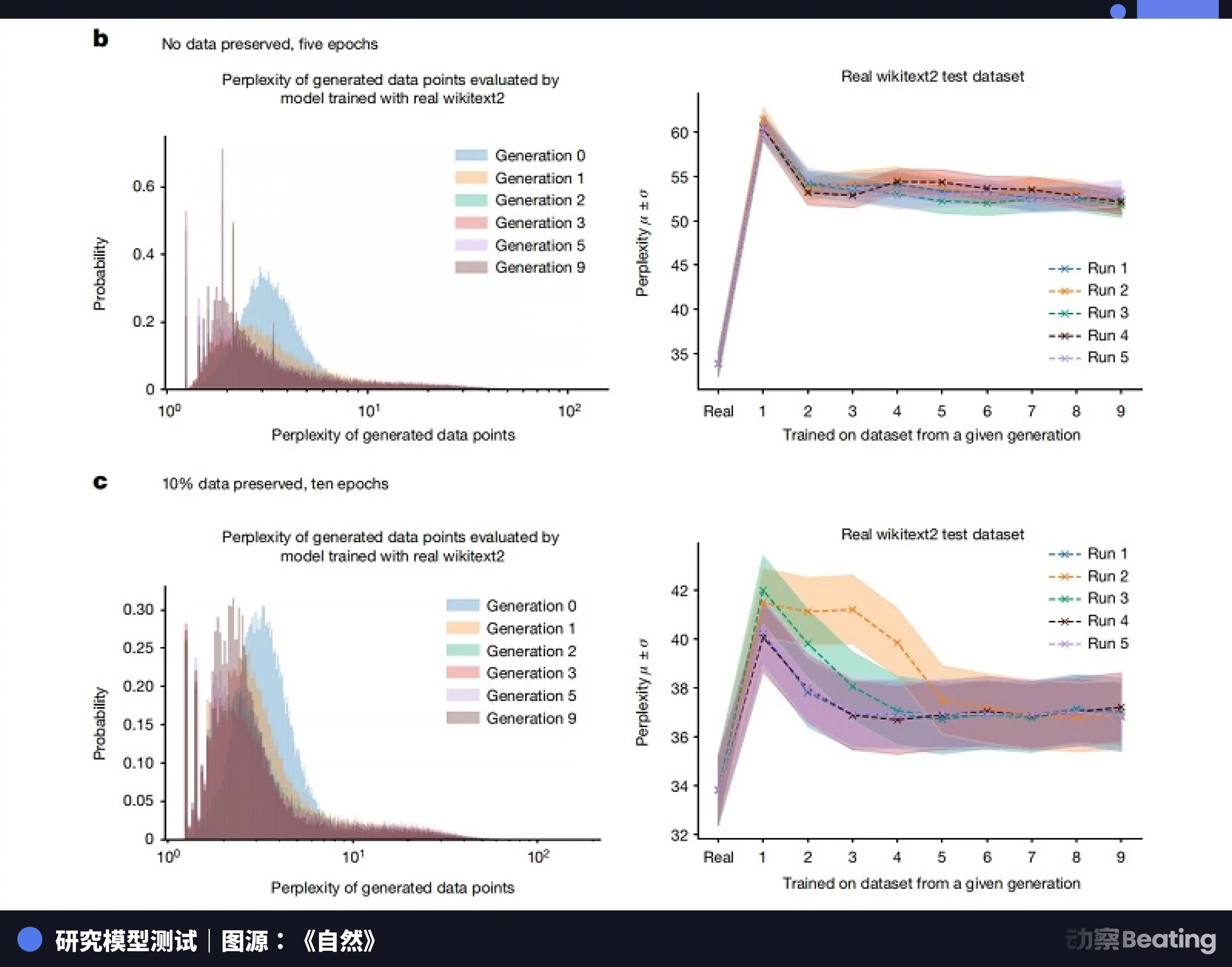

As early as 2023, a research team from Oxford University and Cambridge University jointly published a paper on "model collapse." The research indicated that when AI models are iteratively trained using data generated by other AIs, the distribution of data becomes increasingly narrow. Rare, marginal, but strikingly real human traits are quickly erased. After merely a few generations of training on synthetic data, models completely forget those long-tail, complex, real human data, defaulting to producing extraordinarily mediocre and homogenized content.

In 2024, "Nature" also published a research paper pointing out that training future generations of machine learning models with AI-generated datasets will severely contaminate their outputs.
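The narrowing described above can be illustrated with a deliberately crude toy model. The sketch below is not the method used in either paper; it only assumes that each "generation" can reproduce nothing except what appeared in the previous generation's output, which is modeled here as sampling with replacement. The function name `simulate_collapse` and the `trait_N` labels are hypothetical, chosen purely for illustration.

```python
import random


def simulate_collapse(population, generations, seed=0):
    """Toy model of recursive training on synthetic data.

    Each generation is produced by sampling, with replacement, from the
    previous generation's output. Returns the number of distinct items
    that survive in each generation (index 0 is the original data).
    """
    rng = random.Random(seed)
    current = list(population)
    distinct_counts = [len(set(current))]
    for _ in range(generations):
        # The new "model" can only emit items it saw in its training data.
        current = rng.choices(current, k=len(current))
        distinct_counts.append(len(set(current)))
    return distinct_counts


if __name__ == "__main__":
    # Hypothetical stand-in for 200 distinct human "traits".
    human_traits = [f"trait_{i}" for i in range(200)]
    for generation, count in enumerate(simulate_collapse(human_traits, 30)):
        print(generation, count)
```

Because an item that drops out of one generation can never reappear in a later one, the count of surviving traits can only stay flat or fall. Real models are far more complicated, but the one-way loss of the long tail is the same intuition.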

It is like the meme images that circulate online: what began as a high-definition screenshot gets compressed and forwarded countless times. Each hop loses a few pixels and adds a little noise, until the image ends up blurred and coated in an electronic patina.

When authentic human context, rich in tacit knowledge, has been drained, and the system can only train itself on these patina-coated shadows, what will ultimately remain?

Who Is Erasing Our Traces

All that remains is perfectly correct nonsense.

When the river of knowledge dries up and AI is left endlessly chewing over the output of other AI, everything the system digests will inevitably become extremely standardized, extremely safe, and hopelessly hollow. You will see countless perfectly structured weekly reports and countless faultless emails, yet none of them will carry the breath of a living person or a single truly valuable insight.

This great collapse of knowledge does not come from human brains growing dull; the real tragedy lies in our outsourcing the right to think, and the responsibility of leaving context behind, to our own shadows.

A few days after "Colleague.skill" exploded in popularity, a project called "anti-distill" quietly appeared on GitHub.

The author of this project did not try to attack large models, nor did he write any grand manifesto. He simply offered a small tool that helps workers automatically generate long texts in Feishu or DingTalk that look reasonable but are in fact useless and full of logical noise.

The purpose is simple: to hide your core knowledge before the system can distill it. Since the system likes to capture "voluntarily written long texts," the tool feeds it a pile of filler with no nutritional value at all.

This project did not take off the way "Colleague.skill" did; it even looks small and powerless. Fighting magic with magic still means moving within the rules of a game set by capital and technology. It cannot change the broader trend of a system that relies more and more on AI and pays less and less attention to real humans.

But that does not prevent this project from being the most tragically poetic and deeply metaphorical scene in the whole absurd play.

We strive extremely hard to leave traces in the system, writing detailed documents, providing rigorous decisions, attempting to prove that we once existed within this colossal modern enterprise machine, proving our value. Yet, we do not realize that these exceptionally serious traces will ultimately become the eraser that wipes us away.

However, thinking from another angle, this may not be a complete dead end.

Because what that eraser wipes away is always just "the past you." A skill packed into a document, no matter how ingenious its capturing logic, is essentially just a static snapshot. It is locked in that exported moment, able only to revolve endlessly within established processes and logic, relying on outdated nutrients. It does not possess the instinct to face the unknown chaos, nor the ability for self-evolution in the face of real-world setbacks.

As long as we keep probing outward, constantly breaking and rebuilding the boundaries of our cognition, handing over those highly standardized, established experiences actually frees our hands. The shadow living in the cloud will only ever be able to trail behind our backs.

Humans are fluid algorithms.
