Large models keep getting bigger, and the mainstream view holds that the more parameters a model has, the closer it comes to human thinking. A paper published by a Zhejiang University team in Nature Communications on April 1 presents a different perspective (original link: https://www.nature.com/articles/s41467-026-71267-5). The team found that as models (mainly SimCLR, CLIP, and DINOv2) scale up, their ability to recognize specific objects keeps improving, but their ability to understand abstract concepts does not improve and may even decline. When the parameter count increased from 22.06 million to 304.37 million, performance on specific concept tasks rose from 74.94% to 85.87%, while performance on abstract concept tasks fell from 54.37% to 52.82%.
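To put those figures side by side, the short calculation below works out how much the parameter count grew and how many percentage points each task type moved. It uses only the numbers quoted above; the variable names are illustrative and do not come from the paper.

```python
# Figures quoted in the article: parameters in millions, scores in percent.
params_small, params_large = 22.06, 304.37
specific_small, specific_large = 74.94, 85.87
abstract_small, abstract_large = 54.37, 52.82

# How many times larger the big model is, and the change in percentage points.
scale_factor = params_large / params_small
specific_delta = specific_large - specific_small
abstract_delta = abstract_large - abstract_small

print(f"parameter growth: {scale_factor:.1f}x")                # 13.8x
print(f"specific concept change: {specific_delta:+.2f} points")  # +10.93 points
print(f"abstract concept change: {abstract_delta:+.2f} points")  # -1.55 points
```

In other words, a roughly 14-fold increase in parameters bought about 11 points on specific concepts and cost about 1.5 points on abstract ones.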

Differences in Thinking between Humans and Models

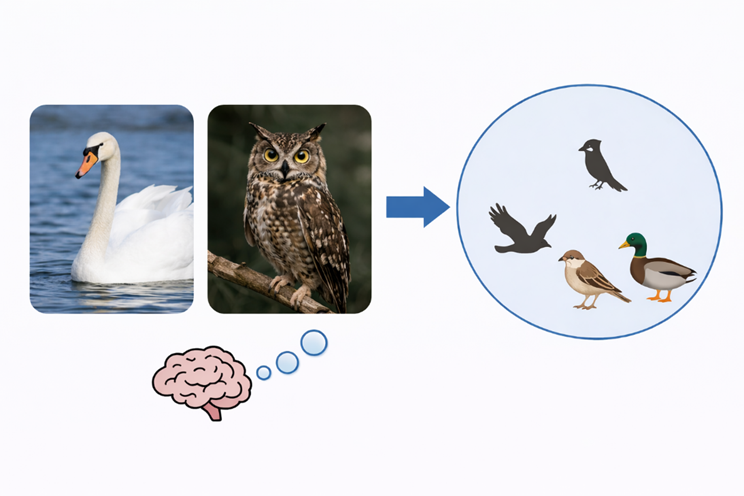

When the human brain processes concepts, it first builds a hierarchy of categories. Swans and owls look different, yet people still classify both as birds. Birds and horses, in turn, fall under the broader category of animals. When people see something new, they tend to ask what it resembles in their past experience and which category it might belong to. Humans keep learning new concepts, organizing their experience, and using these category relationships to recognize new things and adapt to new situations.

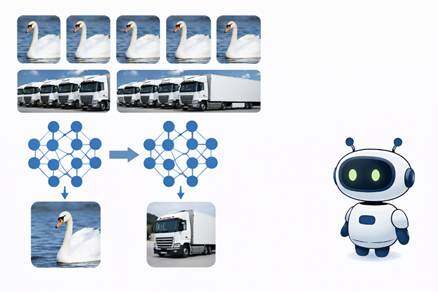

Models also classify, but they arrive at their categories differently. They rely mainly on patterns that recur in large-scale data: the more often a specific object appears, the easier it is for the model to recognize it. Broader categories are harder, because the model has to extract what multiple objects have in common and group them on that basis. Existing models still fall well short here. As parameters keep increasing, performance on specific concept tasks improves, while performance on abstract concept tasks sometimes declines.

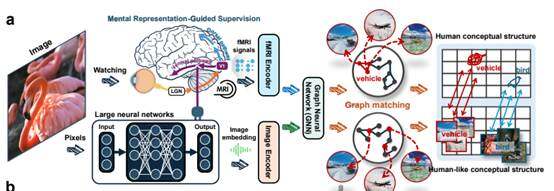

What the human brain and models share is that both form a set of classification relationships internally. The emphasis differs, however. The higher visual areas of the human brain naturally sort things into broad classes such as biological and non-biological. A model can separate specific objects but struggles to form such broad classes in a stable way. This difference makes it easier for the human brain to apply old experience to new objects, so people can quickly categorize unfamiliar things. Models, by contrast, lean more heavily on what they have already seen and are more likely to fixate on surface features when they encounter new objects. The method proposed in the paper is built around this difference: it uses brain signals to constrain the model's internal structure so that it moves closer to the way the human brain classifies.

Zhejiang University Team's Solution

The team's solution takes a different route: instead of continuing to pile on parameters, it uses a small amount of brain signal data for supervision. These signals are recordings of brain activity taken while people look at images. The paper describes this as transferring human conceptual structures to DNNs (deep neural networks), meaning that the way the human brain categorizes, generalizes, and groups similar concepts is taught to the model as far as possible.

The team ran experiments with 150 known training categories and 50 unseen test categories. As training progressed, the distance between the model's representations and the brain's representations kept shrinking. The change appeared in both the training categories and the unseen ones, which indicates that the model was not just memorizing isolated samples but had begun to learn a form of conceptual organization closer to that of the human brain.
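The article does not say how the distance between model and brain representations is measured. One common way to compare two representation spaces is representational similarity analysis: compute the pairwise dissimilarities between items in each space, then check how well the two sets of dissimilarities correlate. The sketch below follows that idea under stated assumptions. The function names, the Euclidean dissimilarity, the `1 - Pearson` distance, and the toy feature vectors are all illustrative choices, not the paper's method or data.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    std_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    std_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (std_x * std_y)

def pairwise_dissimilarities(features):
    """Euclidean distance for every pair of items (upper triangle, flattened)."""
    return [
        math.dist(features[i], features[j])
        for i in range(len(features))
        for j in range(i + 1, len(features))
    ]

def representational_distance(model_features, brain_features):
    """0 when both spaces arrange the items identically; larger when they differ."""
    return 1.0 - pearson(
        pairwise_dissimilarities(model_features),
        pairwise_dissimilarities(brain_features),
    )

# Hypothetical 2-D features for four items: swan, owl, horse, hammer.
brain = [[1.0, 0.0], [0.9, 0.1], [0.6, 0.4], [0.0, 1.0]]
model_before = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [0.5, 0.5]]  # owl far from swan
model_after = [[2.0, 0.0], [1.8, 0.2], [1.2, 0.8], [0.0, 2.0]]   # same layout, rescaled

distance_before = representational_distance(model_before, brain)
distance_after = representational_distance(model_after, brain)
print(f"before alignment: {distance_before:.3f}")
print(f"after alignment:  {distance_after:.3f}")
```

Because the measure compares relationships between items rather than raw coordinates, it can be evaluated on categories that were never used in training, which is the kind of check the 50 unseen categories make possible.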

After this training, the model learned markedly better from few samples and performed better in new situations. In a task that provided only a few examples and asked the model to distinguish the abstract concepts of biological and non-biological, the model improved by an average of 20.5%, surpassing a control model with far more parameters. The team also ran 31 additional specialized tests, in which the various models improved by nearly 10%.
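The article does not describe the few-shot protocol in detail. A common baseline for this kind of task is a nearest-prototype classifier: average the feature vectors of the few labeled examples in each class, then assign a new item to the class with the closest average. The sketch below illustrates that baseline only; the function names and the toy feature vectors are hypothetical and are not taken from the paper.

```python
import math

def centroid(vectors):
    """Component-wise mean of a list of equal-length feature vectors."""
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]

def fit_prototypes(support_set):
    """support_set maps each label to the few example vectors available for it."""
    return {label: centroid(vectors) for label, vectors in support_set.items()}

def predict(prototypes, features):
    """Return the label whose prototype is nearest in Euclidean distance."""
    return min(prototypes, key=lambda label: math.dist(prototypes[label], features))

# Hypothetical features from a model's final layer, two examples per class.
support_set = {
    "biological": [[0.9, 0.1], [0.8, 0.3]],
    "non-biological": [[0.1, 0.9], [0.2, 0.7]],
}
prototypes = fit_prototypes(support_set)

print(predict(prototypes, [0.7, 0.2]))  # biological
print(predict(prototypes, [0.3, 0.8]))  # non-biological
```

With so few examples, the classifier can only succeed if the model's features already place biological and non-biological items in separate regions, which is why a task like this is a reasonable probe of how well a model organizes abstract concepts.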

Over the past few years, the AI industry's default path has been to build ever larger models. The Zhejiang University team chose a different direction, moving from ‘bigger is better’ to ‘structured is smarter.’ Scaling up is useful, but it mainly improves performance on familiar tasks. The abstract understanding and transfer ability found in humans matter just as much for AI, and achieving them will require bringing AI's conceptual structures closer to those of the human brain. The value of this direction is that it turns the industry's attention from sheer scale back to cognitive structure itself.

Neosoul and the Future

This raises a larger possibility: AI may not evolve only during model training. Training can determine how an AI organizes concepts and forms higher-quality judgment structures. Once the AI is in the real world, a second layer of evolution begins: how the judgments of AI agents are recorded and verified, and how the agents keep growing in real competitive environments, learning and evolving as humans do. This is what Neosoul is currently working on. Neosoul does not just have AI agents produce answers; it places them in a system of continuous prediction, verification, settlement, and selection, so that they keep optimizing themselves against the gap between predictions and results, retaining better structures and eliminating poorer ones. The Zhejiang University team and Neosoul ultimately point toward the same goal: AI that does more than solve problems, that has well-rounded thinking abilities and keeps evolving.

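Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is provided for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author is unrelated to this platform. If any article or image published on this page involves infringement, please email the relevant proof of rights and proof of identity to support@aicoin.com, and the platform's staff will investigate.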