Original Title: The Great GPU Shortage – Rental Capacity – Launching our H100 1 Year Rental Price Index

Original Author: Daniel Nishball, Jordan Nanos, Cheang Kang Wen, etc.

Translator: Peggy, BlockBeats

Editor's Note: As AI transitions from being a "tool" to a "workflow infrastructure," GPU rental prices are entering an accelerated upward range, with supply continuing to tighten.

The price of H100 one-year rentals has risen by nearly 40%, with computing power being locked in until the second half of 2026, and AI labs continuously securing supply through long-term contracts and renewal mechanisms. The operational logic of the GPU market has fundamentally changed: prices are no longer primarily determined by hardware costs, but shaped collectively by token consumption, model capabilities, and production efficiency.

Changes on the demand side are particularly crucial. New paradigms such as multi-agent systems, native content generation, and AI programming tools are driving the use of tokens into an exponential growth phase. The core judgment of the report is also gradually becoming clear: the return on investment for AI tools has been validated, with returns of 5–10 times making it difficult for computing prices to effectively constrain demand for a considerable period of time.

The tension formed by this situation is increasingly clear: the real-world computing market is showing overall scarcity and a shift in pricing power, while the capital market remains in the expectation of "eventual oversupply and commoditization." This misalignment between expectation and reality is reshaping the valuation logic of the AI infrastructure sector.

As computing power becomes a new means of production, its pricing mechanisms, supply structures, and capital returns are undergoing a deep reconstruction.

Here is the original text:

Demand for Anthropic's Claude 4.6 Opus and Claude Code has soared. Anthropic's annual recurring revenue (ARR) has jumped in just one quarter, from $9 billion at the end of last year to over $25 billion today, nearly tripling. At the same time, open-source models such as GLM and Kimi K2.5 have driven rapid expansion of the application scenarios built on them. Companies including Anthropic, OpenAI, and several Neolabs continue to secure funding, further intensifying demand for GPU resources.

This turning point signifies a sharp increase in demand in a short period, leading to a GPU buying spree among hyperscalers and emerging cloud service providers.

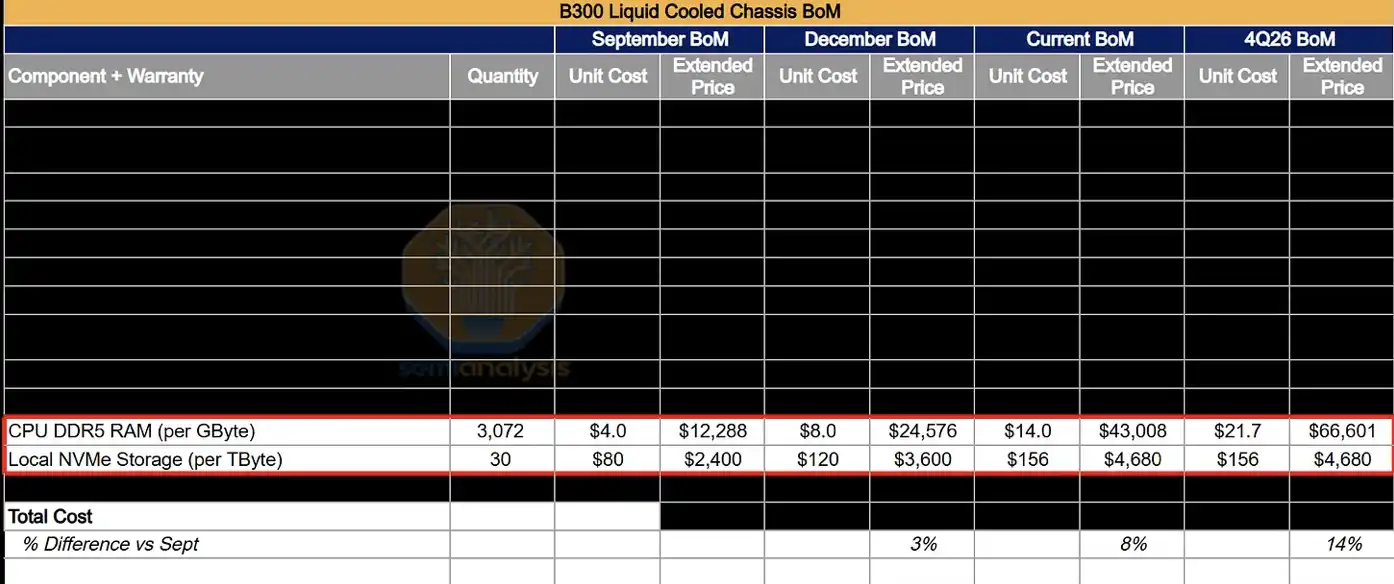

This additional demand is raising prices throughout the supply chain, from DRAM and NAND storage to fiber optic cables, data center hosting, and even infrastructure like gas turbines; almost all related products and services are experiencing price hikes.

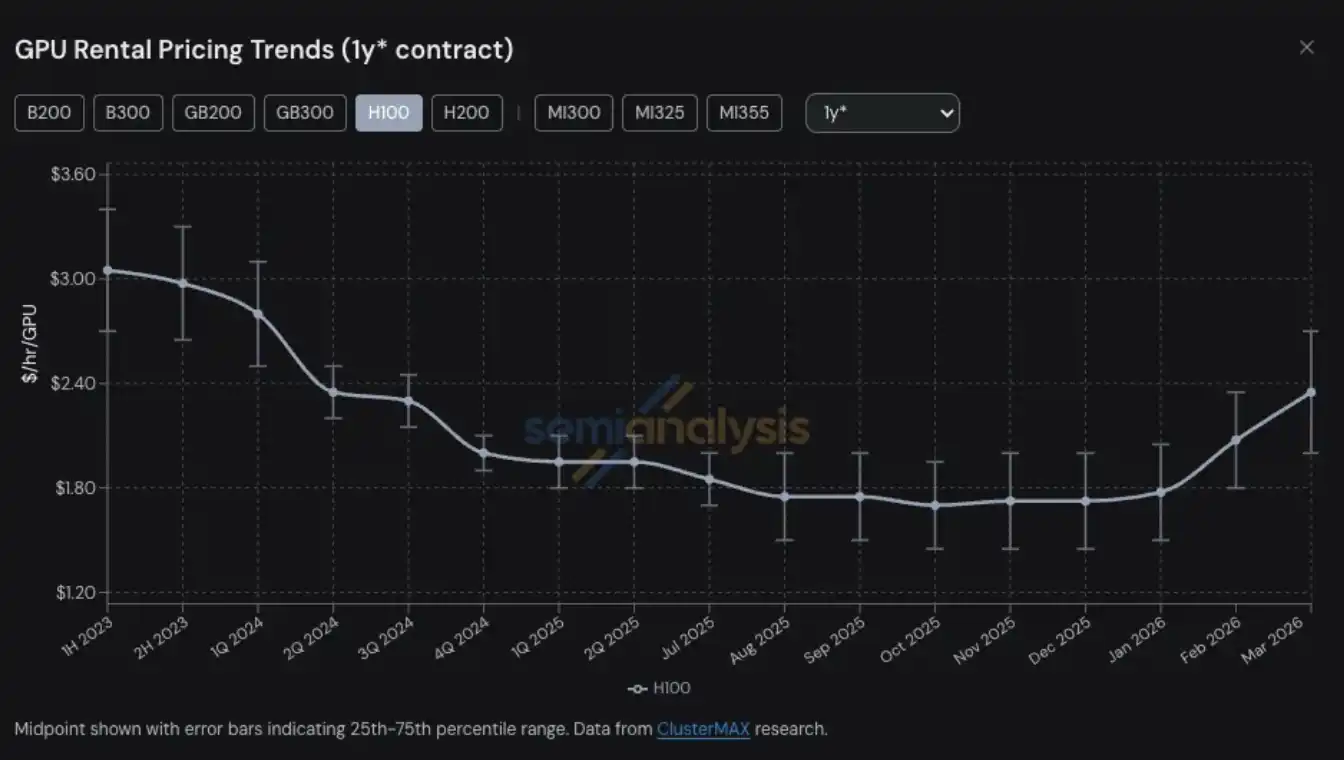

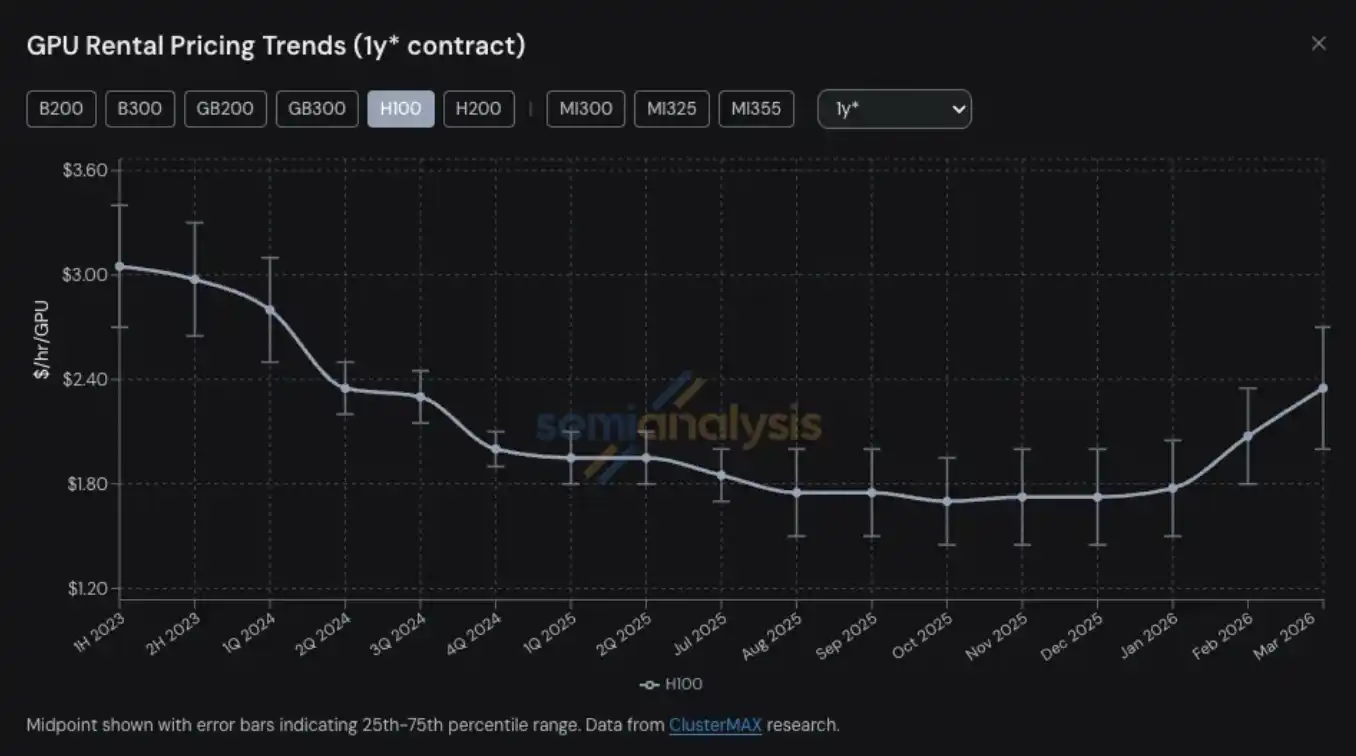

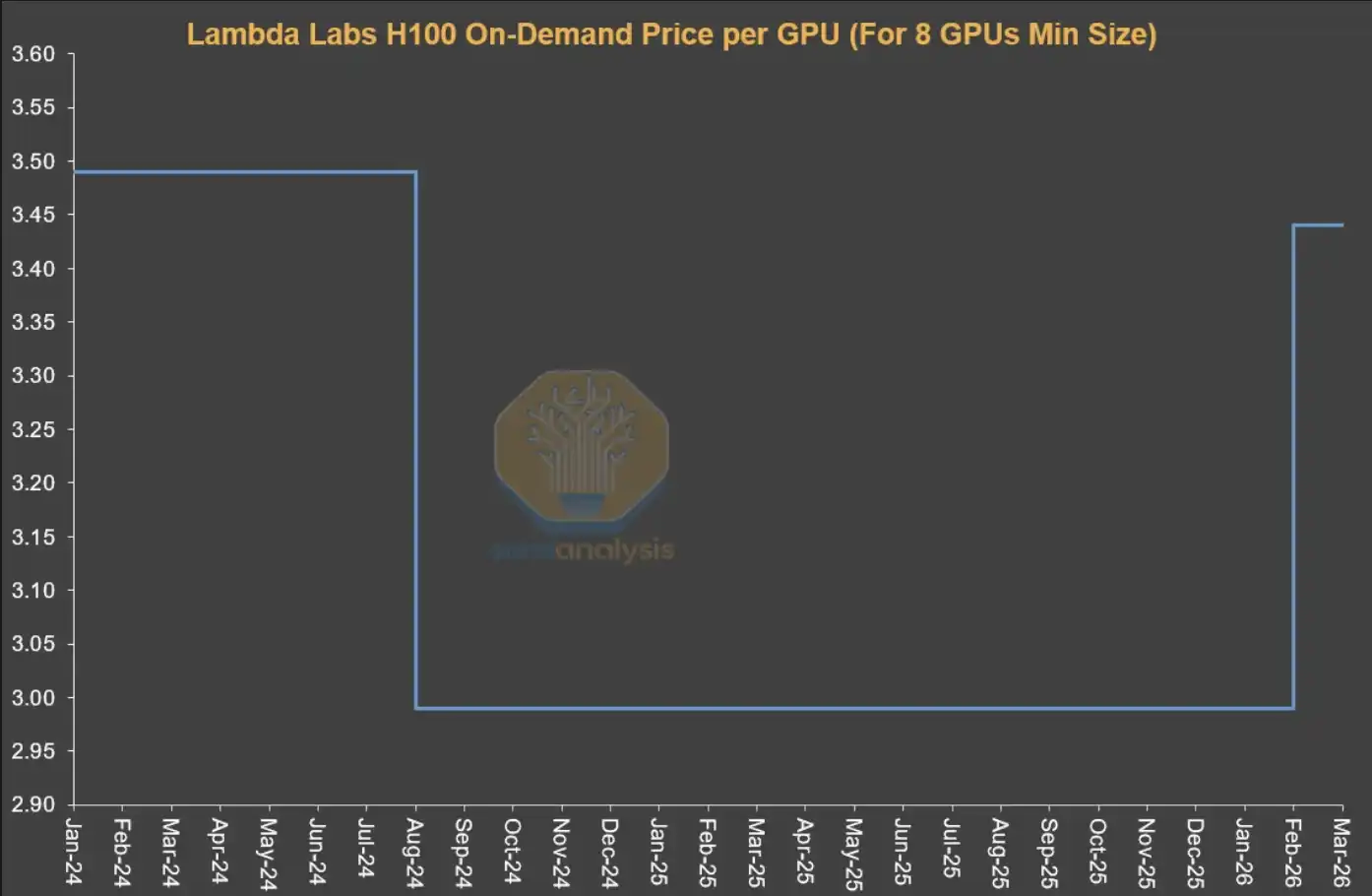

GPU rental prices have become the latest field to experience supply tightness and price leaps among numerous computing-related products and services. The one-year rental contract price for H100 GPUs has risen from a low of $1.70 per GPU per hour in October 2025 to $2.35 in March 2026, an increase of nearly 40%.
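The "nearly 40%" figure can be checked directly from the two prices quoted above. The short sketch below does that arithmetic; the annualized cost line assumes a GPU billed for every hour of a 365-day year, which is an illustrative assumption and not a figure from the report.

```python
# Percentage change in the H100 one-year contract price, using the two
# prices quoted in the text (in $ per GPU per hour).
low_oct_2025 = 1.70
price_mar_2026 = 2.35


def pct_change(old: float, new: float) -> float:
    """Return the relative change from old to new, in percent."""
    return (new - old) / old * 100.0


increase = pct_change(low_oct_2025, price_mar_2026)
print(f"Increase: {increase:.1f}%")

# Assumption for illustration only: the GPU is billed for every hour of
# a 365-day year.
HOURS_PER_YEAR = 24 * 365
annual_low = low_oct_2025 * HOURS_PER_YEAR
annual_high = price_mar_2026 * HOURS_PER_YEAR
print(f"Per GPU per year: ${annual_low:,.0f} -> ${annual_high:,.0f}")
```

The result is about 38.2%, consistent with the "nearly 40%" stated in the text.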

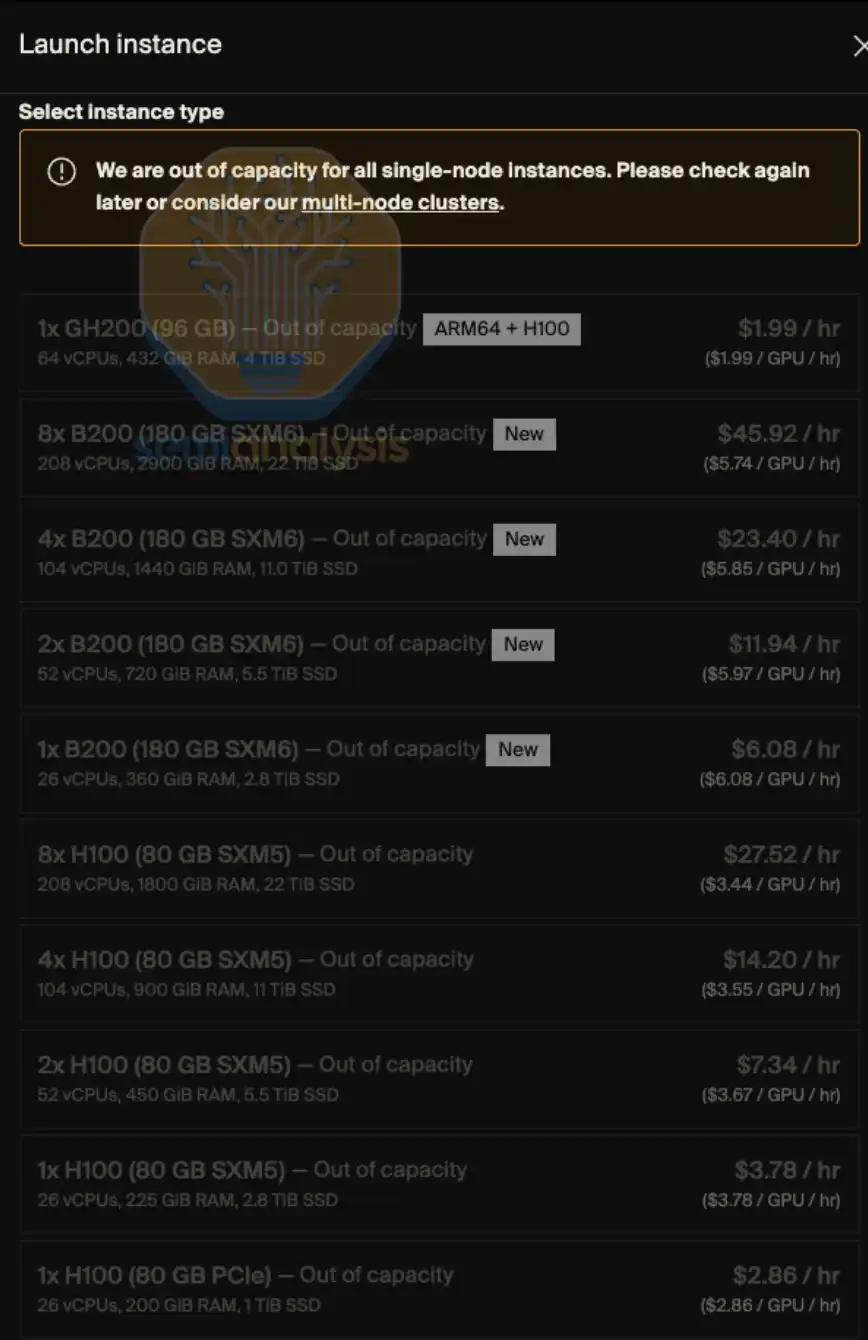

On-demand GPU rental capacity is nearly sold out across all models—users who have locked in on-demand instances are unwilling to release computing power back into the market even after prices have risen. By early 2026, finding GPU computing power was almost like trying to grab a ticket for "the last flight": high prices and nearly no availability. If a more fitting analogy were to be used, it might be more akin to "finding a channel to buy medicine."

At SemiAnalysis, we have long been tracking various trends and key issues in the Neocloud and hyperscale cloud ecosystem, including GPU rental prices. This capability derives from our ongoing research and practice in projects like ClusterMAX, InferenceX, and AI cloud total cost of ownership (TCO).

At the same time, we have invested a great deal of effort in helping various AI labs connect with Neocloud service providers, searching for GPU rental resources on the market, and continuously communicating the trends in GPU rental price changes with nearly all participants in the ecosystem.

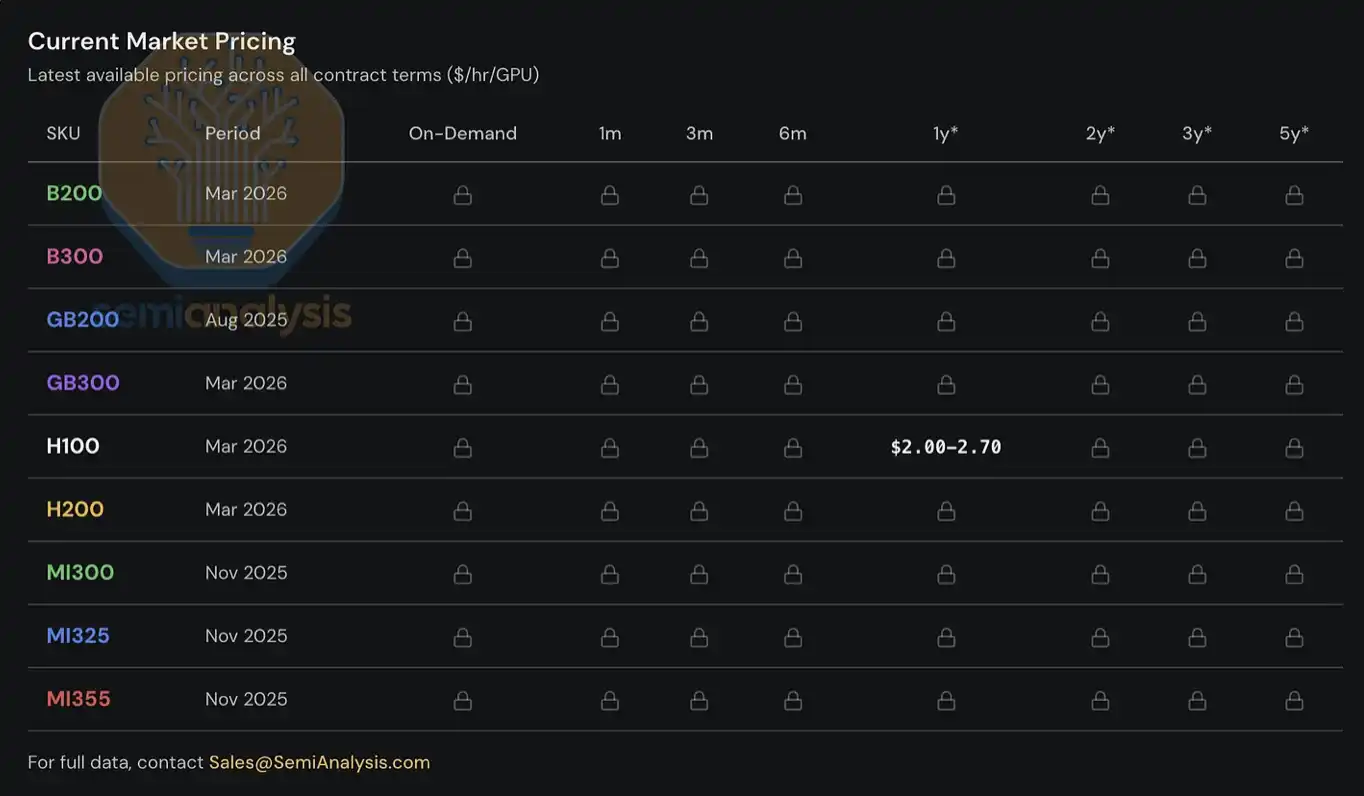

Since 2023, we have established and maintained a GPU rental price index system for our clients, covering mainstream GPU models (such as H100, H200, B200, B300, GB200, GB300, MI300, MI325, MI355) and spanning different rental periods, from on-demand rentals to short-term one-month leases, up to long-term contracts as long as five years. This index is built on survey data from multiple Neocloud service providers and computing power buyers, cross-validated through actual transaction data and the negotiation outcomes we participated in.

Today, we open the H100 one-year rental price index to the public, hoping to provide more data and insights for the industry. This index will be updated monthly, and we will continue to publish the latest trend interpretations and market observations through X and LinkedIn. As for complete pricing data covering different lease structures and other mainstream GPU models, it is currently only available to institutional users subscribed to our AI cloud TCO model.

This report will focus on the latest trends in the GPU rental market, frontline market observations, and key data, analyzing how we understand the overall market structure and making preliminary judgments on future rental price trends.

GPU Rental Market Enters "Dynamic Pricing" Stage

Looking solely at the H100 one-year rental price curve is insufficient to fully reflect the market's tension—our real-world experiences in acquiring computing power, along with feedback from market participants, reflect a much more severe situation.

Current demand comes from multiple highly heterogeneous use scenarios, and there is almost no "one-size-fits-all solution." For example, on the inference side, large-scale mixture of experts (MoE) models are more suitable for running on the latest large-scale systems like the GB300 NVL72; whereas on the training side, the H100 still possesses cost-performance advantages, keeping demand for even relatively "old-generation" GPUs quite high.

Customers are even competing to pay $14 per GPU per hour for AWS p6-b200 spot instances; some leading Neocloud service providers have stopped selling single nodes; some H100 contracts are, unexpectedly, being renewed at the same prices at which they were signed two to three years ago; and some H100 contracts have been renewed through 2028, resulting in a rental period of four years. Finding even eight nodes (64 GPUs) of H100 or H200 capacity is no longer easy: half of the service providers we contacted are completely sold out, and most responses indicate that no Hopper-architecture GPUs will be freed up as upcoming contracts expire.

We have even heard that some computing power lessees are starting to split their rented clusters for subleasing, almost like breaking up an apartment for short-term rentals during the Monaco Grand Prix. Whether we will see so-called "Neocloud sublandlords" emerging is likely no longer just a joke.

Supply for Blackwell is also extremely tight. We have learned that due to strong demand for open-source weight models and ongoing explosive inference demand, the delivery cycle for the new batch of Blackwell clusters has now extended to June and July. Moreover, most of these upcoming clusters have also been locked in advance. In fact, looking at the entire market, new capacities won't be available until August or September 2026, and almost all of it has already been reserved.

GPU Rental Prices: Making a Comeback

But how did the market reach this point? Just six months ago, most market observers remained skeptical about the terminal value of GPUs and generally believed that GPU rental prices would inevitably keep declining over time. At that time, Neocloud or hyperscale cloud providers that used a six-year depreciation cycle for GPU computing assets in their financial models would even be criticized by financial analysts. Before discussing future trends, let's quickly review how things evolved to this point.

Until the second half of 2025, the mainstream expectation of the entire ecosystem was that with the large-scale deployment of Blackwell, whose unit computing cost is significantly lower, rental prices for Hopper (i.e., H100 and H200) would see a marked reduction. But the reality was the exact opposite. By the second half of 2025, the demand for H100 had not weakened, but rather further strengthened in many scenarios. The rapid proliferation of open-source weight models and the sustained acceleration of inference demand were the earliest signals of this almost limitless wave of computing demand.

By January 2026, the computing market reached another turning point: following several quarters of rapid increases, pricing for DRAM and NAND storage began to enter a nearly "parabolic" surge phase. According to our storage model, the contract prices for LPDDR5 and DDR5 in the first quarter of 2026 saw year-on-year increases of approximately four and five times, respectively.

In order to cope with the margin risks brought about by sharply rising component costs, OEM manufacturers began to raise the prices of AI servers, and the increment was notably higher than the price increases of the underlying components themselves. This made capital expenditure decisions for clusters more complex: increased server procurement costs compressed projected returns, forcing some operators to slow down deployment paces or even abandon projects altogether. As a result, a portion of new supply that could have gone live has been postponed or shelved, further exacerbating the tightness in the rental market.

In this procurement chaos sparked by "AI server pricing going out of control," demand for GPU rentals has grown significantly, and the previously remaining computing capacity was almost completely consumed in January and February. By March, whether it was H100, H200, or B200, virtually no available capacity could be found for any rental period. One-year rental prices had already surpassed $2 per GPU per hour by the end of January and saw another increase of 15%–20% in mid-February compared to the end of January, with expectations of another 15%–20% rise by the end of March.

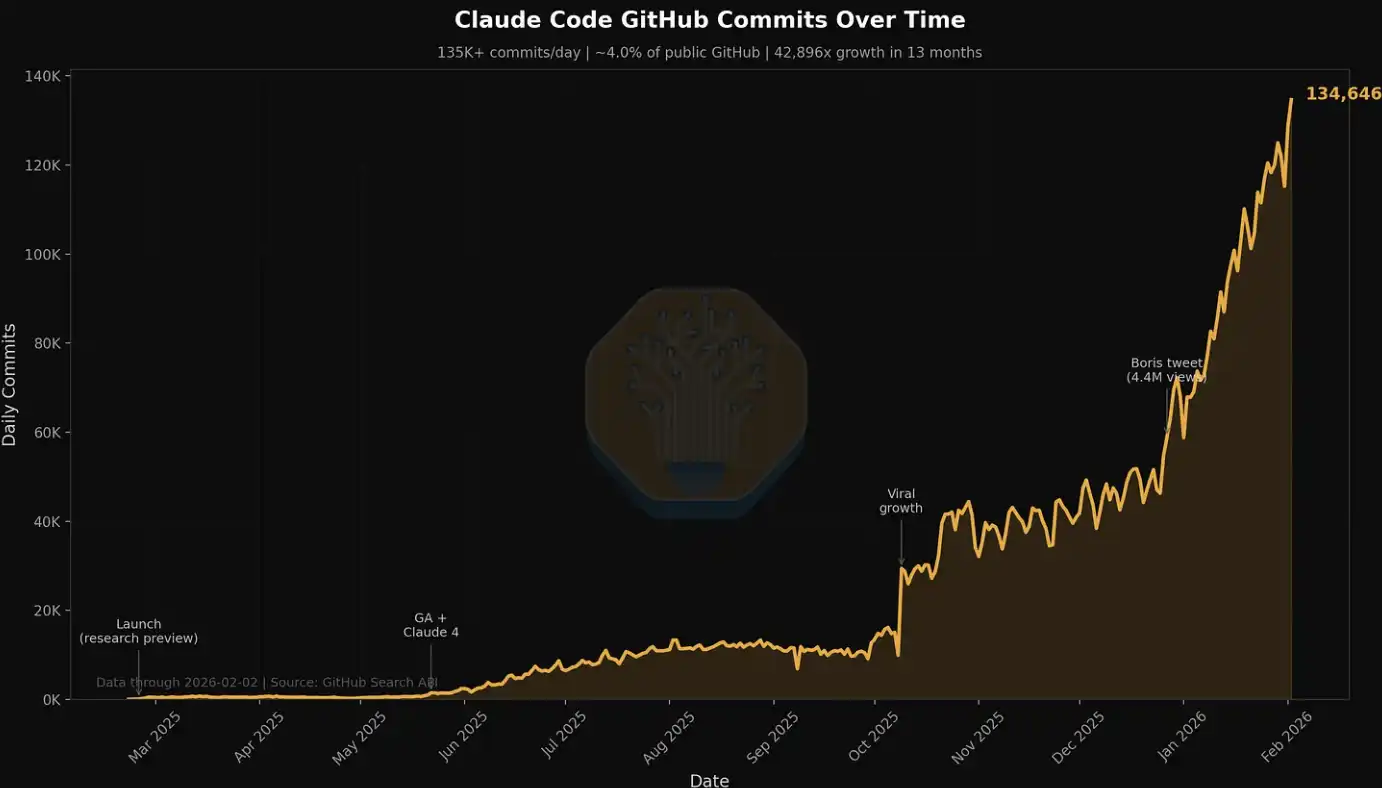

One of the major drivers of demand at the beginning of this year was native media generation. Applications like Seedance and Nano Banana are driving users to generate and iterate on images and videos at large scale, significantly raising token throughput. But the more important and more visible source of demand is the rise of multi-agent workloads: these systems execute multi-step processes and iterate continuously in high-concurrency environments, leading to "exponential" growth in token consumption and computing demand.

This trend is particularly evident in the related data of Claude Code, which we have previously mentioned in several articles. For example, in just the past week at SemiAnalysis, the company consumed billions of tokens internally, with an average cost of about $5 per million tokens. However, the time savings, workflow extensions, and capability improvements resulting from this consumption far exceed the cost itself. Now, SemiAnalysis has embedded an entire suite of AI tools into multiple workflows, no longer limited to simple search and summarization, but extending to data dashboards, automated scraping, large-scale data processing, and agent-based financial modeling scenarios.
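To give a sense of scale for the cost side, the sketch below converts token volume into dollars at the quoted average of about $5 per million tokens. The text says only "billions of tokens," so the weekly volumes used here are hypothetical placeholders, not SemiAnalysis figures.

```python
def token_cost_usd(tokens: int, usd_per_million: float = 5.0) -> float:
    """Cost in USD of a token volume at a blended $/million-token rate.

    The default of $5 per million tokens is the average cost quoted in
    the text.
    """
    return tokens / 1_000_000 * usd_per_million


# Hypothetical weekly volumes (the text does not give an exact number).
for billions in (1, 2, 5):
    tokens = billions * 1_000_000_000
    print(f"{billions}B tokens/week -> ${token_cost_usd(tokens):,.0f}")
```

At that rate, every billion tokens costs roughly $5,000.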

We are also tracking this explosive growth in demand through metrics like Claude Commits Daily. Based on current trends, we expect that by the end of 2026, Claude Code will account for over 20% of all code submissions. It can be said that while you may not have noticed, AI has begun to "consume" the entire software development process. For institutional clients wishing to obtain this dataset, please contact our API team. A hint: this submission volume is already significantly higher than when we first released it.

In our circle, almost everyone is a heavy user of Claude Code. But we also understand that this circle is deeply immersed in AI and semiconductor fields, essentially just a "small fraction of the front line."

For many Fortune 500 companies and the general public, Claude Code and the "agent world" are merely a slightly novel niche topic, occasionally appearing in Facebook feeds or NPR podcasts. They hardly realize that a wave of productivity and structural disruption driven by agents is approaching.

As more participants from the real economy gradually realize the remarkable return on investment that AI tools deliver and join this "computing power wave," token consumption will continue to rise step by step. The discussion about the ROI of AI is essentially settled: the value created by using AI tools is often an order of magnitude greater than their cost. Against this background, the continued rightward shift of the token demand curve is a strong and (at this stage) relatively inelastic force pushing GPU rental prices upward.

In simple terms, if the return on investment from using AI tools can reach 5–10 times, then GPU rental prices still have considerable upward space before they can truly constrain demand. We do not rule out that further increases in rental prices will continue to transmit upward, driving up the costs of servers and core components.
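The headroom argument can be made concrete with a simple sketch. It assumes, purely for illustration, that the value created by the tool stays fixed and that any increase in GPU rental prices is passed through in full to the price the user pays; neither assumption comes from the report, and real pass-through is likely to be partial.

```python
def roi_after_price_increase(current_roi: float, price_multiplier: float) -> float:
    """ROI multiple after the tool's cost is scaled by price_multiplier.

    Simplifying assumptions (not from the report): value created is
    unchanged, and the whole price increase reaches the end user.
    """
    return current_roi / price_multiplier


for roi in (5.0, 10.0):
    for multiplier in (1.4, 2.0, 3.0):
        remaining = roi_after_price_increase(roi, multiplier)
        print(f"ROI {roi:.0f}x, cost x{multiplier}: {remaining:.2f}x")
```

Under these assumptions, even a doubling of cost leaves a 5x return at 2.5x, which is why price alone may not constrain demand for some time.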

SemiAnalysis H100 One-Year Rental Price Index Release

Today, we are opening the SemiAnalysis H100 one-year rental contract price index to the public for free, aiming to enhance market awareness and transparency regarding GPU rental price trends.

This index is built on monthly survey data from over 100 market participants (including Neocloud service providers, computing buyers, and sellers) to determine a representative range of GPU rental prices (25th to 75th percentile). We also cross-validate this using actual transaction data, and directly participate in certain transactions to further calibrate price levels.
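As a rough illustration of how a 25th to 75th percentile band summarizes survey responses, the sketch below computes one from a small set of quotes. The quotes are invented for the example, and the interpolation method is an assumption; the report does not describe the exact calculation SemiAnalysis uses.

```python
import statistics

# Hypothetical survey quotes in $ per GPU per hour (not SemiAnalysis data).
quotes = [2.10, 2.20, 2.25, 2.30, 2.35, 2.40, 2.45, 2.60, 3.10]


def representative_range(prices: list[float]) -> tuple[float, float]:
    """Return the (25th percentile, 75th percentile) band of the quotes."""
    q1, _median, q3 = statistics.quantiles(prices, n=4, method="inclusive")
    return q1, q3


low, high = representative_range(quotes)
print(f"Representative range: ${low:.2f} to ${high:.2f} per GPU-hour")
```

A percentile band of this kind is less sensitive to a single outlier quote (such as the 3.10 above) than a simple minimum-to-maximum range.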

Since 2023, we have been continuously tracking the contract prices of GPUs, including H100, H200, B200, B300, GB200, and GB300, for different rental periods ranging from three months to five years; we have also included data related to the AMD series (MI300, MI325, MI355).

Compared to existing GPU indices in the market, SemiAnalysis's H100 one-year contract price index has several key differences:

First, many GPU rental indices are based on spot/on-demand quotes or publicly listed prices, but in reality the vast majority of GPU rental transactions are completed via long-term contracts, typically lasting more than six months. These prices are often formed through bilateral negotiations and do not appear in any public database. Most large Neocloud service providers prefer to sign leases of at least one year, with two to three years being better still, and will secure a five-year agreement if possible. SemiAnalysis's H100 one-year rental index focuses specifically on this "contract market," the segment with the most actual transaction volume. Because the index points to a specific rental period, it is also easier for users to understand which part of the market it covers and to compare it with their own observations.

Second, publicly disclosed prices do not represent actual transaction prices. The prices published by hyperscale cloud providers and Neocloud serve more as directional references rather than actual transaction levels. These prices are often lagging behind the changes in the contract market and are usually adjusted only after the demand for computing power has already shifted. Especially in the on-demand market, prices are often set at a relatively fixed level, and actual supply-demand changes are reflected through utilization or resource occupancy rates, with sporadic adjustments made only when necessary. This market mechanism will be elaborated further in the later sections of this article.

Third, although there are numerous indices on the market capable of handling large-scale quotes, prices, and transaction data, with advantages in trend analysis, our approach emphasizes direct interaction with market participants. Every quote and every transaction is backed by its specific context and decision logic. We hope to present quantitative data while supplementing it with qualitative information and frontline observations, in order to reconstruct the true structure of the GPU rental market more completely.

For institutional subscription users, we also provide complete term structure data covering almost all mainstream GPU rental markets.

While releasing the H100 one-year contract price index, we also launched the SemiAnalysis Tokenomics Dashboard for institutional-level Tokenomics model subscribers, designed to track and understand the frontier AI model landscape. This dashboard allows users to customize comparisons across dimensions like coding, inference, mathematics, and agent evaluations, compare different models and service providers' API pricing, and view key data disclosed by major AI labs, including token usage, revenue, valuation, and customer scale.

Current Structure of the GPU Rental Market

Before the second half of 2025, the pricing environment in the GPU rental market was relatively more competitive. At that time, operators had a more abundant inventory of GPUs, while end-user demand was just beginning to accelerate. Therefore, competition among Neocloud service providers was fierce, commonly using more attractive prices to grab customers. Their core goal was to improve utilization and "squeeze" the value of existing computing power as much as possible before the next round of GPU iteration cycles arrived.

However, the market landscape has since undergone a 180-degree shift. Neocloud and hyperscale cloud providers now hold the initiative completely: they can demand higher prepayments, better pricing, and longer contract durations, and can even choose the contract start and end dates to match their inventory and capacity plans. Time is also on the suppliers' side: they can proceed with deployments at their own pace, gradually selecting the best mix of customers in a continuously rising price environment.

Structurally, the GPU rental market can be roughly divided into three major segments, with different segments corresponding to different types of customer demands:

Short-term rentals: on-demand, spot, and contracts of less than three months

Mid-term contracts: contracts ranging from three months to over three years

Long-term offtakes: contracts of four to five years, with five years being the most common

Short-term Rentals: On-demand, Spot, and Contracts of Less Than Three Months

Short-term rentals occupy the front end of the overall rental structure and often correspond to "spare capacity." However, some providers (such as Runpod and Lambda) specialize in offering significant and flexible on-demand or spot computing power.

It's noteworthy that the pricing mechanism in the on-demand market significantly differs from that of other contract markets. Typically, providers will set a relatively fixed price level for on-demand resources and will only adjust it under very rare circumstances. In other words, the prices in the short-term market are not entirely driven by real-time supply and demand but are more reflective of changes in resource utilization.

Providers generally adjust prices all at once based on resource utilization: when utilization is low, they will cut prices to stimulate demand; and when utilization approaches full load, they will raise prices, as demand can still maintain a high level even at higher price points.

This also explains why, from a time series perspective, the on-demand prices published by Neocloud often remain unchanged for long periods before suddenly experiencing a "discontinuous" rise or fall. For the on-demand market, what truly reflects demand changes frequently is not the price, but the resource utilization rate.
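The "flat, then discontinuous" price pattern described above can be sketched as a simple threshold rule. The thresholds, step size, starting price, and utilization series below are all hypothetical; the report does not specify how any provider actually sets on-demand prices.

```python
def reprice_on_demand(price: float, utilization: float,
                      low: float = 0.60, high: float = 0.95,
                      step: float = 0.15) -> float:
    """Return the posted price after one pricing review.

    The price is left unchanged while utilization is inside [low, high],
    cut by `step` below the band and raised by `step` above it.
    All thresholds are illustrative assumptions.
    """
    if utilization < low:
        return round(price * (1 - step), 2)
    if utilization > high:
        return round(price * (1 + step), 2)
    return price


price = 3.00  # hypothetical posted price in $ per GPU per hour
history = []
for utilization in [0.70, 0.80, 0.90, 0.94, 0.97, 0.93]:
    price = reprice_on_demand(price, utilization)
    history.append(price)
print(history)
```

In this toy series utilization climbs steadily while the posted price stays flat, then the price jumps once utilization crosses the upper threshold, which is the pattern the text attributes to published on-demand prices.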

Source: Lambda Labs, SemiAnalysis

Mid-term Contracts

From an economic perspective, the more critical aspect is actually the "contract market," as the vast majority of GPU rental transaction values occur in this segment. Among these, one-year contracts are particularly important—they reflect the marginal demand of non-AI lab customers and also embody overflow demand from large clients, making them the most sensitive indicators for assessing market tightening.

AI native companies and mid-sized AI labs mainly operate in the one to three-year range. However, a noticeable trend recently has been that these organizations are beginning to try locking in computing power resources through longer-term contracts—many have extended to over four years and are even willing to pay more than 20% in advance, which has not been common in contracts longer than four years.

Long-term Offtakes

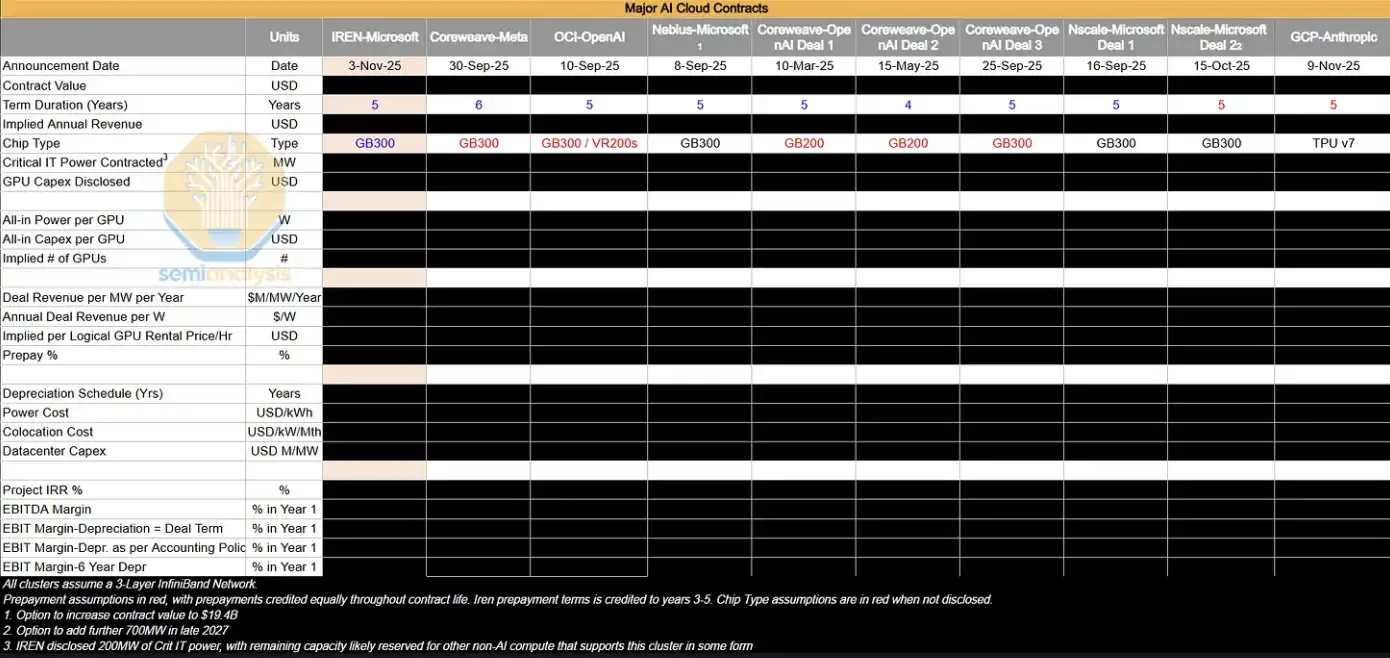

In the longer-term four to five-year market, the dominant forces are large AI labs that secure large-scale computing power resources early on. Such transactions typically correspond to clusters of 50MW, 100MW, or even larger scales, roughly equivalent to about 24,000 to 48,000 GB300 NVL72 GPUs. Overall, these long-term offtake agreements have occupied a significant share of the Neocloud GPU rental market.
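The cluster sizes quoted above imply a power budget per GPU that can be worked out directly. The sketch below does so and also converts GPU counts into NVL72 racks (72 GPUs per rack). The text does not say whether the megawatt figures refer to IT load or total facility power, so the per-GPU number should be read as an all-in implied value only.

```python
import math

GPUS_PER_NVL72_RACK = 72  # an NVL72 rack holds 72 GPUs


def implied_kw_per_gpu(cluster_mw: float, gpu_count: int) -> float:
    """Cluster power divided by GPU count, in kW per GPU."""
    return cluster_mw * 1000.0 / gpu_count


def racks_needed(gpu_count: int) -> int:
    """Whole NVL72 racks needed to host gpu_count GPUs."""
    return math.ceil(gpu_count / GPUS_PER_NVL72_RACK)


# Cluster sizes and GPU counts as quoted in the text.
for mw, gpus in [(50, 24_000), (100, 48_000)]:
    kw = implied_kw_per_gpu(mw, gpus)
    print(f"{mw} MW / {gpus:,} GPUs: {kw:.2f} kW per GPU, "
          f"{racks_needed(gpus)} racks")
```

Both quoted pairs work out to roughly 2.1 kW per GPU, so the two figures in the text are consistent with each other.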

AI labs favor these contracts because they can lock in large-scale computing power all at once to meet rapidly growing end-user demand. Additionally, these organizations typically engage deeply in cluster design, covering critical aspects like storage, networking, and CPU configurations. These transactions are often delivered in **bare metal** form because AI labs have sufficient engineering capabilities to customize the technology stack at a lower level, achieving the best TCO (total cost of ownership) and performance.

For Neocloud service providers, these transactions are also attractive. On one hand, they can concentrate sales resources on a few large orders and earn the same revenue without dealing with a large number of small-scale clients; on the other hand, long-term contracts allow them to raise debt on better terms. By matching financing terms with contract terms, they can effectively reduce maturity mismatch and price fluctuation risks and, in most cases, lock in an internal rate of return (IRR) of several percentage points.

Moreover, hyperscale cloud providers often play the role of "backstop" in these arrangements—they act as direct purchasers, sourcing computing power from Neocloud and then reselling it to AI labs. This structure is a win-win for all parties: Neocloud can obtain better financing conditions based on AAA-rated purchasers, while hyperscale cloud providers can benefit from project earnings without expanding their balance sheets by providing credit backing.

The following table lists some of the large-scale offtake agreements we are tracking. We will conduct in-depth analyses of these transactions to reverse-engineer their implied GPU hour prices ($/hr/GPU), as well as key profitability indicators like project IRR and EBIT margins.

In the current market environment, the vast majority of large AI clusters that are expanding are actually being "internally absorbed" by AI labs. However, these organizations will still enter the four-year or shorter contract market to supplement their computing power while renewing existing H100 and H200 clusters, indirectly preventing supply from re-entering the market. As GB200 and GB300 large-scale clusters gradually go online, how the supply-demand relationship of one to three-year contracts evolves will become a key variable to monitor moving forward.

"Where The Puck is Going"

What stands out most at present is the apparent divergence between the underlying reality and market sentiment. Although signals such as tightening supply and rising prices should benefit Neocloud providers (through margin expansion and longer asset lifetimes), the public market has become increasingly pessimistic about companies like CoreWeave, Nebius, and Iris Energy, whose stock prices have remained at low levels over the past 6 to 12 months.

The market is still dominated by the narrative of "ultimate oversupply and commoditization of computing power," and the aforementioned changes have not truly alleviated investor concerns about the long-term value of GPUs. However, from frontline observations, persistent supply tension and enhanced pricing power imply that almost all computing power is being "absorbed" by demand—even when performance differs, there is still a shortage under the current extreme scarcity environment.

Three Future Observation Points

To determine whether GPU rental prices will maintain high levels, three variables can be closely monitored:

1. The pace of GB300 cluster expansion (2026)

The key is the relative speed between new capacities and token demand—whether supply eases tension or demand continues to surpass supply. This will directly affect whether AI labs remain involved in the four-year or shorter market, and the pricing trends in that interval.

2. Whether the chip shortage further deteriorates

Any fluctuations in key manufacturing execution areas including TSMC's N3 process capacity, HBM, DRAM, NAND, etc., could further tighten supply.

3. The growth rate of AI lab revenues (ARR) and token consumption

The commercialization and scalability of AI will determine the intensity of end-user demand, which is also a core variable driving computing demand.

Prices Move Upward Unidirectionally, and ROI Rises Accordingly

Overall, a relatively clear conclusion is that the likelihood of GPU rental prices continuing to rise is greater than that of any decline.

This process is clearly self-reinforcing: when Neocloud providers observe tightening supply and rising prices, they lock in more hardware in advance, further compressing market supply and driving prices upward. This is similar to the GPU shortage cycle of 2023–2024, when tight supply enabled OEMs to achieve significant profit expansion and drove server prices up substantially (although the market is more mature this time, so the process may not replay exactly).

Meanwhile, the renewed rise in GPU rental prices is also improving Neocloud's return on invested capital (ROIC):

On one hand, it has increased the profit margins of deployed assets.

On the other hand, it has extended the economic usage cycle of GPUs, allowing capital to generate cash flow for a longer time.

Who are the Current Biggest Beneficiaries?

The most direct beneficiaries are computing power providers with the following characteristics:

· Primarily short-cycle contracts (can be repriced quickly)

· Possessing a large stock of H100 devices

· New capacities coming online in the short term

Neocloud providers with short-term rental structures can roll off old contracts and re-sign at higher prices more quickly, achieving rapid profit expansion. At the same time, hyperscale cloud providers and Neocloud providers that have secured next-generation computing power (under multi-year contracts) will also benefit in future cycles.

So the question arises: will this time really be "different"?
