One vulnerability sat hidden in the OpenBSD codebase for 27 years. Another went unnoticed in FFmpeg for 16 years, in code that had been called more than 5 million times before the flaw was discovered. Neither was unearthed by top researchers on a bug bounty platform, nor by Google's Project Zero. Both were found by a yet-to-be-released model from Anthropic, codenamed Claude Mythos Preview.

On April 7, Anthropic announced Project Glasswing. The action itself is simple: give a whitelist of organizations access to Mythos Preview. The whitelist includes AWS, Apple, Google, Microsoft, NVIDIA, Broadcom, Cisco, CrowdStrike, JPMorgan Chase, the Linux Foundation, and Palo Alto Networks, plus about 40 organizations responsible for critical infrastructure. Anyone outside the list cannot access the model. Anthropic stated clearly that it does not plan to release this model publicly in the short term.

This is the first time a frontier lab has deliberately locked away its strongest asset.

Over the past two years, releasing has been almost a reflex. Each generational leap of GPT, Gemini, and Claude has followed the pattern of "release, observe, patch." Anthropic's own "Responsible Scaling Policy" (RSP) is essentially a commitment framework: when a model reaches a certain capability threshold, the corresponding mitigations are put in place, and the release goes ahead. Glasswing is not the next step in this framework; it is the first exception to it. A model that Anthropic has already judged "not suitable for release through the normal process" has been set apart and given only to defenders.

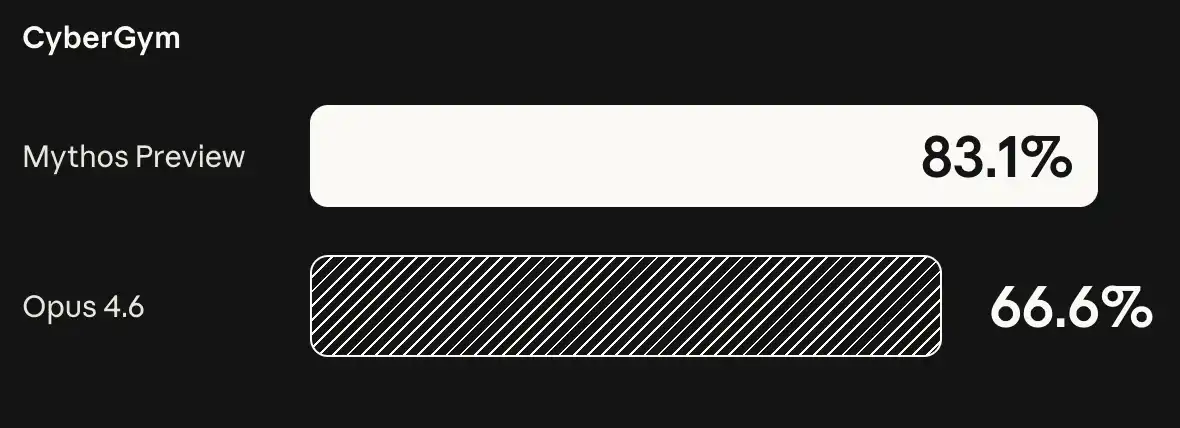

What has Mythos Preview achieved? The official statement is "thousands of zero-day vulnerabilities, covering every mainstream operating system and every major browser." The range of capabilities says more than the numbers. The success rate of Claude Opus 4.6 on autonomous exploit development tasks is close to zero, meaning that six months ago Anthropic's own strongest publicly available model could not do this work at all. Mythos can string multiple unrelated vulnerabilities together into a complete attack chain; a four-step browser exploit is already a demonstrated example. The jump from "almost zero" to a "four-vulnerability chain" is not an ordinary generational advance but a step change.

Maintainers have already sensed this. Greg Kroah-Hartman of the Linux kernel and Daniel Stenberg, the author of curl, have both said the same thing publicly in recent months: over the past year, AI-generated security reports have shifted from "spam level" to "real, high-quality, must-read." Open-source projects are receiving more reports, and better ones, but the number of maintainers available to handle them has not grown. Defenders have been living with this strain for a long time. Anthropic's move has merely turned a vague anxiety into an explicit issue.

The whitelist itself is worth a closer look. It has three major clouds (AWS, Google, Microsoft), three hardware companies (Apple, NVIDIA, Broadcom), two network equipment manufacturers (Cisco, Palo Alto Networks), one endpoint security company (CrowdStrike), one open-source infrastructure organization (the Linux Foundation), and exactly one bank: JPMorgan Chase.

This is not a random allocation of slots. Anthropic is mapping out the organizations whose collapse would bring the sky down with them. The vast majority of the world's code runs on the stacks of these companies, and a vast amount of money flows through the accounts of one of them. The logic of the whitelist is not "who needs it the most," but "whose collapse would hit everyone else first." Outside the list, Anthropic has also allocated $4 million to open-source security organizations. The money provides manpower and the model provides capability, and together they send one message: give maintainers months of lead time.

Anthropic's own wording is more direct than the list. The company stated, "Given the pace of AI development, such capabilities will not remain with participants focused on secure deployment for a long time." It followed with, "Defending global network infrastructure may take years."

Read together, these two sentences show Anthropic's judgment: the window before the model leaks or is replicated is short, while the window defenders need to patch vulnerabilities is long. The entire significance of Glasswing lies in the gap between these two timelines. It trades a controlled advantage for a patch window of months to a year.

There is also a Washington dimension. Anthropic is in ongoing communication with the U.S. government about the capabilities of Mythos Preview. At the same time, it is in an unresolved dispute with the U.S. Department of Defense over the scope of military AI use. The same company that refuses to let its model be used for certain military applications is actively sending that model to the security teams of the Linux Foundation and Apple. The two positions are not contradictory; they are two sides of the same judgment. Anthropic is defining "what this model can be used for" itself, rather than leaving that definition to the users.

The most unusual aspect of Glasswing is not what it does, but when it does it. In the past, AI companies proved themselves through releases. Now Anthropic is choosing to prove itself by not releasing. A frontier lab is deliberately locking up its strongest product and saying that the reason is not commercial, not incomplete alignment, and not regulatory pressure, but its own calculation that the timeline for release and the timeline for fixes no longer line up.

In the coming months, the thing to watch is not Mythos Preview itself, but how many of the vulnerabilities surfaced by the 50 or so organizations on the whitelist actually get patched. The next thing to watch is whether other frontier labs follow suit. If they do, an industry that has moved to the rhythm of "open, iterate, open" will, for the first time, see a move of "lock it up and say more later." If they do not, Anthropic will be the one standing at the door, holding the key, and watching the clock.
