Original | Odaily Planet Daily (@OdailyChina)

Author | Azuma (@azuma_eth)

On April 8, Anthropic, the AI company behind Claude, officially announced a new initiative called "Project Glasswing," which it will carry out jointly with leading companies and organizations including Amazon, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, Nvidia, and Palo Alto Networks.

Anthropic described this as an urgent initiative to protect the world's most critical software: participants will use Mythos Preview to identify and fix latent defects in the systems the world depends on today.

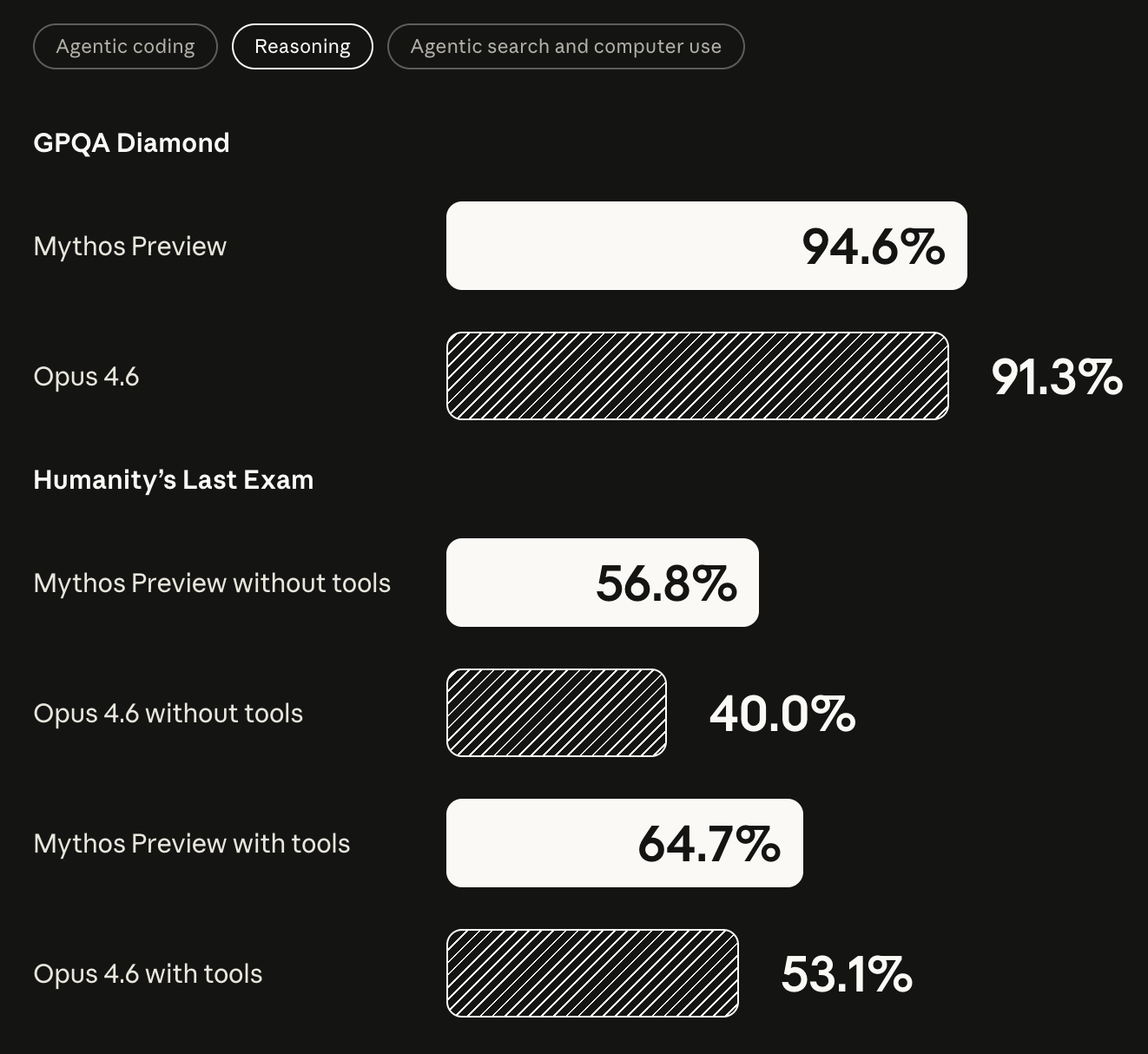

Mythos is the next-generation AI model under development at Anthropic. It is described as the first model ever to exceed one trillion parameters (by contrast, mainstream models on the market today range from hundreds of billions up to around one trillion parameters), with training costs reaching an astonishing $10 billion. Compared with Claude Opus 4.6, currently Anthropic's most powerful model, Mythos scores significantly higher on software coding, academic reasoning, and cybersecurity benchmarks.

Rumors about Mythos had already circulated in the market last week, raising widespread concern about whether a model with specialized cybersecurity capabilities would upset the current balance between attackers and defenders, and whether, if maliciously exploited, it could lead to larger-scale security incidents. At the time, Odaily covered the issue and spoke with security expert and SlowMist founder Yu Xian about the potential impact on security offense and defense in the cryptocurrency industry (see the interview: "Odaily Interview with Yu Xian: How Will Anthropic's Nuclear-Level New Model Leakage Impact Cryptocurrency Security Offense and Defense?"). However, Anthropic had not yet publicly acknowledged the existence of Mythos, so information remained limited.

With the announcement of "Project Glasswing" on April 8, Anthropic also revealed more details about Mythos. Judging from the real-world test cases Anthropic released, the company has not overstated Mythos's capabilities. In fact, it held back from releasing the model publicly for fear that hacker groups would misuse it, and instead plans to let leading companies use it through "Project Glasswing" to audit their systems and fix vulnerabilities preemptively.

Mythos Flexes Its Muscles: Thousands of "Zero-Day Vulnerabilities" Discovered in Just Weeks

Discussing the power of Mythos, Anthropic was blunt: the model's arrival means a harsh new reality is already here. AI coding capabilities have reached a level at which, when it comes to finding and exploiting software vulnerabilities, models can outperform all but the most skilled humans.

According to Anthropic, within just a few weeks it used Mythos to identify thousands of zero-day vulnerabilities (flaws previously unknown even to the software's own developers), many of them high-severity, spanning every major operating system and mainstream browser as well as a range of other critical software.

Anthropic provided several representative cases:

- Mythos discovered a 27-year-old vulnerability in OpenBSD, an operating system known for its "extreme security" and widely used for firewalls and other critical infrastructure; the flaw allows an attacker to crash the system remotely;

- In FFmpeg, the widely used video processing library, Mythos found a 16-year-old vulnerability; the faulty code had been exercised more than 5 million times by automated tests without the bug ever being caught;

- Mythos was also able to automatically chain together multiple vulnerabilities in the Linux kernel, escalating from ordinary user privileges to full control of the server.

Even more concerning, Anthropic stated that Mythos discovered most of these vulnerabilities and constructed the exploitation paths for them autonomously, with almost no human intervention. This may indicate that AI has already begun to acquire automated offensive and defensive capabilities comparable to those of top hacker teams.

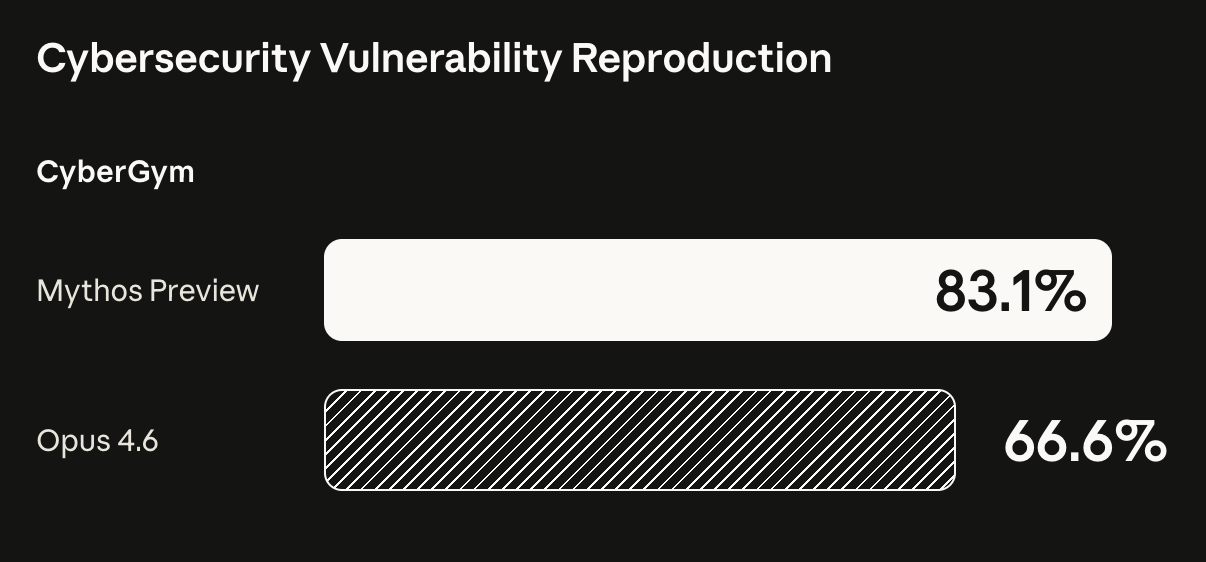

On evaluation benchmarks, Mythos shows a dramatic leap over Opus 4.6. On the cybersecurity vulnerability reproduction benchmark, for example, Mythos scored 83.1% versus 66.6% for Opus 4.6, and it also scored significantly higher on multiple coding and reasoning benchmarks.

Perhaps precisely because Mythos's capabilities are so overwhelming, Anthropic chose not to release the model openly, launching "Project Glasswing" first so that the internet as a whole can harden its defenses in advance.

Through this initiative, Anthropic will give participants early access to Mythos Preview so they can find and fix vulnerabilities and weaknesses in their foundational systems, with a focus on tasks such as local vulnerability detection, black-box testing of binaries, endpoint security hardening, and system penetration testing.

Anthropic also committed to providing participants with a total of $100 million in model usage credits to cover usage throughout the research preview. After that, Mythos Preview will be available to participants at $25 per million input tokens and $125 per million output tokens (participants can also access the model through the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry). Beyond the usage credits, Anthropic will also donate $2.5 million to Alpha-Omega and OpenSSF through the Linux Foundation, and $1.5 million to the Apache Software Foundation, to help open-source maintainers cope with the changing security landscape.
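To make the quoted rates concrete, here is a minimal Python sketch of the cost arithmetic. It assumes billing is simply linear in tokens at the two rates above and ignores any caching discounts, batch pricing, or minimums the announcement does not mention; the function name `mythos_preview_cost` and the token counts in the example are hypothetical.

```python
# Mythos Preview rates as quoted in the announcement (USD per million tokens).
# Assumption: cost is purely linear in token counts; no discounts or minimums.
INPUT_PRICE_PER_M = 25.0
OUTPUT_PRICE_PER_M = 125.0


def mythos_preview_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of a workload at the quoted per-million-token rates.

    Hypothetical helper for illustration only; not an Anthropic API.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


if __name__ == "__main__":
    # Hypothetical audit job: 2M tokens of source code in, 400k tokens of findings out.
    # 2 * $25 + 0.4 * $125 = $50 + $50
    print(f"${mythos_preview_cost(2_000_000, 400_000):,.2f}")
```

Because output tokens cost five times as much as input tokens, a job that reads a large codebase but writes a short report is dominated by input cost, while one that generates long exploit write-ups is dominated by output cost.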

Anthropic plans to gradually expand participation in "Project Glasswing" and keep the initiative running for months, sharing what it learns as widely as possible so that other organizations can apply those lessons to their own security work. Within 90 days, Anthropic will publicly report interim results, including vulnerabilities that have been fixed and security improvements that can be disclosed.

Technology Will Continuously Upgrade, But There Is No Need for Excessive Worry

AI is irreversibly changing the world we know, including the cybersecurity field this article focuses on. As the barriers to discovering and exploiting vulnerabilities drop sharply, there is inevitable concern that AI could become a double-edged sword in the hands of malicious actors and upset the existing cybersecurity balance. (PS: This concern is especially acute for cryptocurrency users, who have real money at stake in wallet systems and on-chain protocols.)

In response, Anthropic believes "we still have reason to remain optimistic." AI models are dangerous precisely because they can cause harm in the wrong hands, but AI is also immensely valuable for discovering and fixing critical software flaws and for building new, safer software.

AI capabilities will foreseeably keep evolving rapidly in the coming years, but as new attack methods emerge, new defenses will emerge alongside them. Technological upgrades are inevitable, yet that does not mean the risks will necessarily spiral out of control: as long as defensive systems evolve in step, defenders can even use AI to build a stronger security moat.

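Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is provided for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author is unrelated to this platform. If any article or image published on this page infringes your rights, please email proof of rights and proof of identity to support@aicoin.com, and the platform's staff will review it.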