Author: Claude, Deep Tide TechFlow

Deep Tide Guide: Amazon announced on Monday that it will invest up to an additional $25 billion in Anthropic ($5 billion of it available immediately), while securing Anthropic's commitment to spend more than $100 billion on AWS over the next decade.

This is the second time in two months that Amazon has written a check worth tens of billions of dollars to a leading AI lab; shortly before this, it invested $50 billion in OpenAI.

Anthropic's annualized revenue has surpassed $30 billion, but a compute bottleneck is degrading the user experience; the core goal of this deal is to resolve that capacity crunch.

Amazon is now betting on two major AI labs at once, and the stakes keep rising.

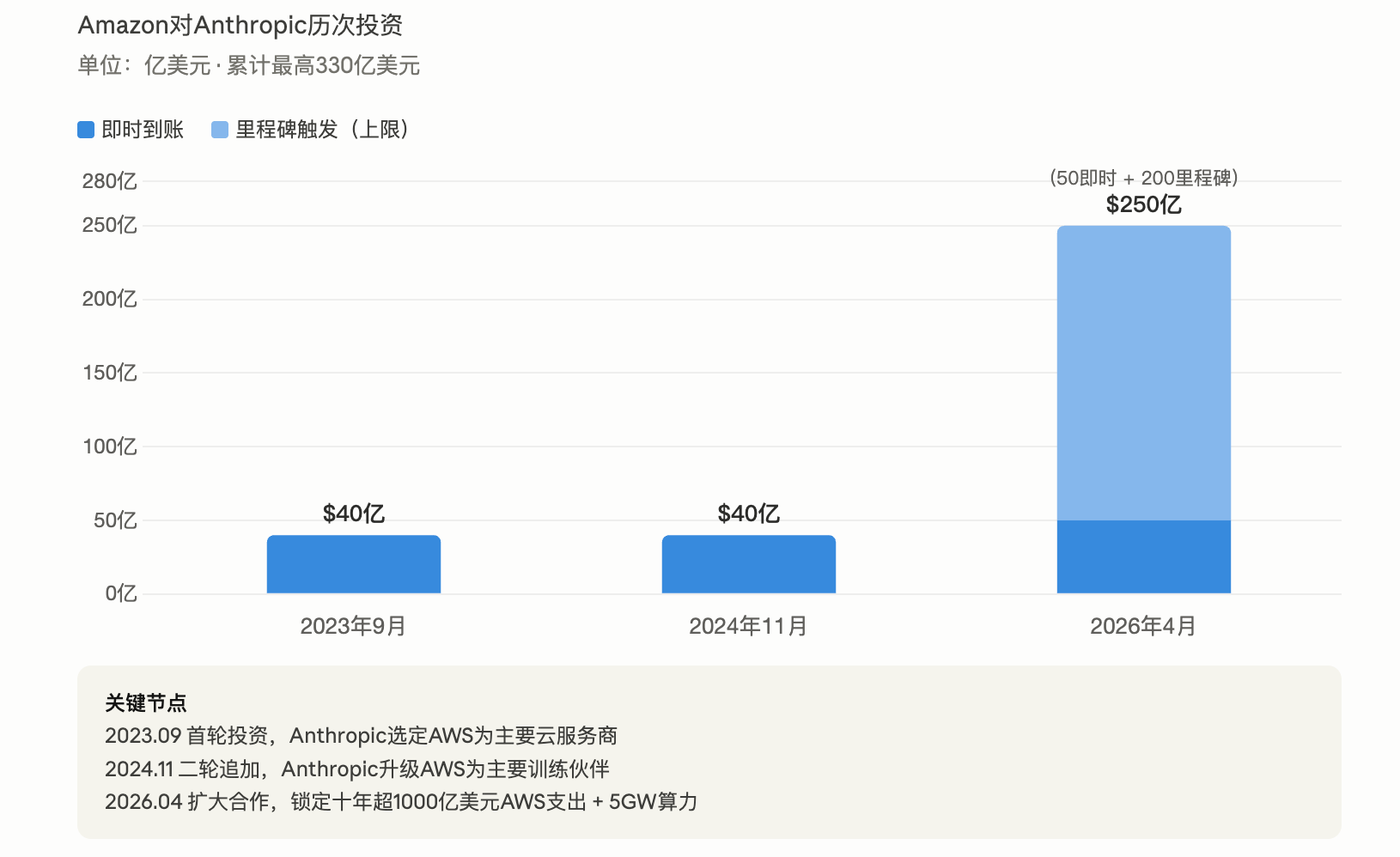

According to reports on April 20 from multiple outlets, including CNBC and Bloomberg, Amazon announced an additional investment of up to $25 billion in Anthropic: $5 billion is available immediately, and the remaining $20 billion is tied to specific commercial milestones. The investment is priced at the $380 billion valuation set in Anthropic's Series G round in February. Added to the $8 billion it had already invested, this brings Amazon's total investment commitment to Anthropic to $33 billion.

Two months ago, Amazon invested $50 billion in OpenAI, Anthropic's main competitor, and struck a cloud services agreement of similar scale. Amazon CEO Andy Jassy said in a press release that Anthropic's commitment to run its large language models on AWS Trainium for up to ten years "reflects the progress we have made in custom chips."

Amazon shares rose about 2.5% in after-hours trading following the announcement.

A $100 billion cloud commitment for 5 gigawatts of computing power, answering OpenAI's "insufficient compute" accusation

At its core, this transaction is not just an equity investment but a deeply binding infrastructure agreement.

Anthropic has committed to spending more than $100 billion on AWS technology over the next decade, covering Amazon's custom Trainium AI chips (Trainium2 through Trainium4 and future generations) and tens of millions of Graviton CPU cores. In exchange, Anthropic will receive up to 5 gigawatts of computing capacity for training and deploying its Claude models. According to Anthropic's blog, the company currently uses more than 1 million Trainium2 chips to train and serve Claude, and plans to bring nearly 1 gigawatt of Trainium2 and Trainium3 capacity online by the end of 2026.

This expansion of computing power directly answers OpenAI's recent criticism. In an internal memo last week, OpenAI Chief Revenue Officer Denise Dresser wrote that Anthropic had made a "strategic mistake by failing to acquire enough computing power," and predicted that OpenAI would have 30 gigawatts of computing power by 2030, while Anthropic would have only 7 to 8 gigawatts by the end of 2027. In Monday's announcement, Anthropic acknowledged that demand for Claude among enterprises and developers is accelerating and that consumer usage has also seen a "sharp rise," placing "inevitable pressure" on its infrastructure and affecting reliability and performance at peak times.

Anthropic CEO Dario Amodei stated in a press release: "Users tell us that Claude is becoming increasingly important to the way they work, and we need to build infrastructure to keep up with the rapidly growing demand."

Amazon writes two checks worth tens of billions of dollars to two AI labs within two months

Amazon's investment strategy is now very clear: bet on both leading players in the AI race at the same time.

In February of this year, Amazon announced an investment of up to $50 billion in OpenAI, likewise paired with a $100 billion AWS cloud services commitment. The structure of the Anthropic deal is nearly identical: an investment of up to $25 billion plus more than $100 billion in locked-in cloud spending. According to GeekWire, Amazon is running "the same script" with both labs.

Both AI companies are also competing to prove their strength to investors. According to CNBC, Anthropic and OpenAI are each preparing for IPOs that could come as early as this year. OpenAI's latest funding round valued it at more than $850 billion, compared with $380 billion for Anthropic. Anthropic says its annualized revenue has surpassed $30 billion (up from about $9 billion at the end of 2025), while OpenAI alleged in a memo that this figure is inflated by about $8 billion because Anthropic books revenue from its cloud partnerships with Amazon and Google on a gross rather than net basis.

Microsoft is also betting on both sides. Having previously invested more than $13 billion in OpenAI, it agreed in November 2025 to invest up to $5 billion in Anthropic, which in turn committed to purchasing $30 billion of Azure computing capacity.

The Claude platform comes to AWS in a battle for more than 100,000 customers

Beyond the investment, product integration between the two companies is also deepening.

According to the announcement, Anthropic's native Claude platform will be embedded directly in AWS, letting users access the full Claude console through their existing AWS accounts, access controls, and billing systems, with no separate registration or new contract required. This goes a step beyond the previous arrangement of offering Claude through Amazon Bedrock. Amazon disclosed that more than 100,000 organizations currently run Claude models on Amazon Bedrock.

Anthropic also emphasized in its blog that Claude is the only frontier AI model available on all three major cloud platforms (AWS Bedrock, Google Cloud Vertex AI, Microsoft Azure Foundry). This multi-cloud strategy lets enterprise customers choose the deployment path that fits their needs, and it is one of Anthropic's differentiating advantages in its competition with OpenAI.

On the customer side, Lyft cut its average customer service resolution time by 87% after using Claude, via Amazon Bedrock, to power its customer service AI assistant. Pfizer uses Claude to help scientists search drug development documents by voice, saving roughly 16,000 hours of search time each year.

AI infrastructure race: Amazon’s capital expenditure expected to reach $200 billion this year

The broader backdrop to this deal is the AI infrastructure arms race among the cloud computing giants.

Amazon said in February that it expects capital expenditures of around $200 billion in 2026, the vast majority of it directed toward AI infrastructure. An earlier collaboration, Project Rainier (a massive computing cluster with nearly 500,000 Trainium2 chips), is one of the largest AI computing clusters in the world, and Anthropic is using it to train and deploy current and future versions of Claude.

Earlier this month, Anthropic also expanded its partnership with Google and Broadcom, securing "multiple gigawatts" of computing capacity expected to come online in 2027. Together with this 5-gigawatt agreement with Amazon, this means Anthropic is expanding its compute reserves on several fronts at once.

Amazon's custom chip business is also accelerating. Jassy recently disclosed that the business's annualized revenue has exceeded $20 billion, double the roughly $10 billion reported earlier this year, and described it as "exceptionally hot."
