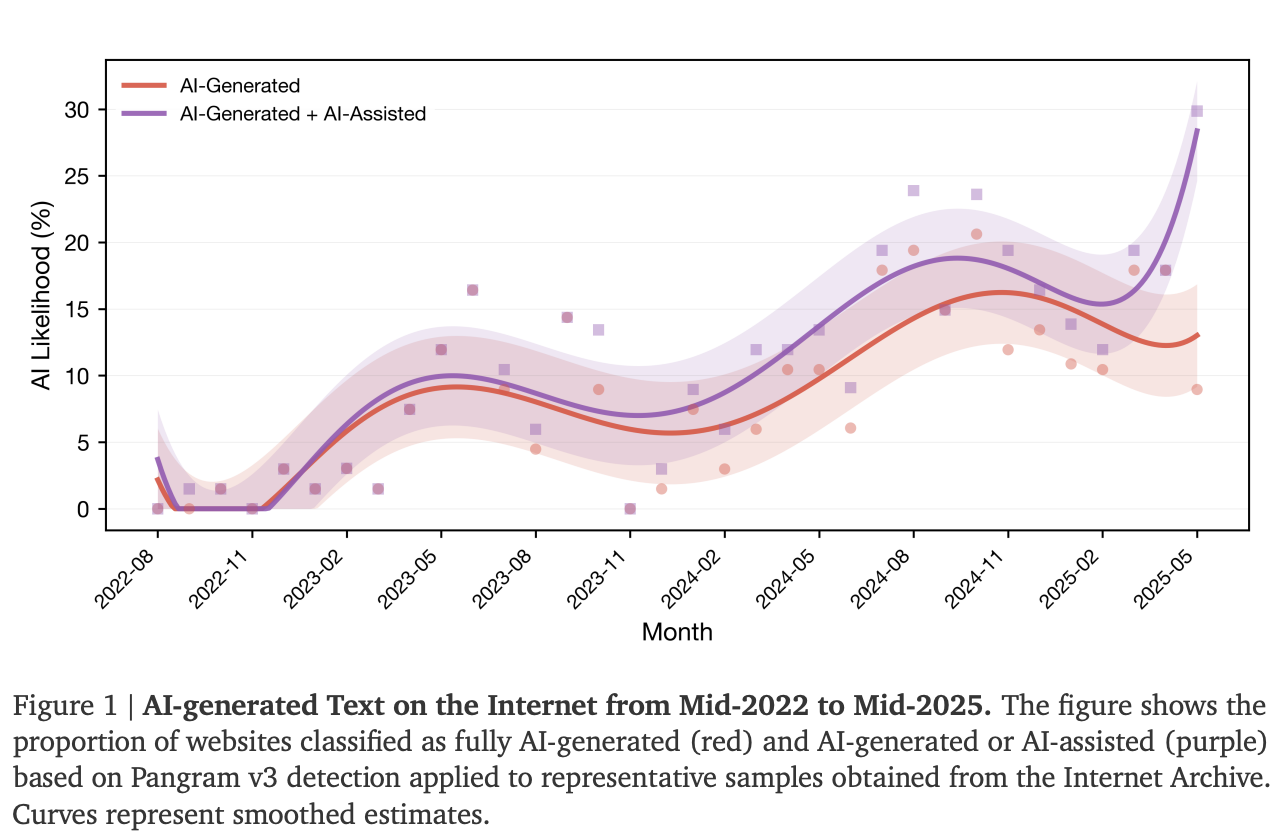

A new study puts a number on how much of the new web is AI-generated: 35%. That's the share of newly published websites classified as AI-generated or AI-assisted by mid-2025, according to research from Stanford University, Imperial College London, and the Internet Archive. The figure was essentially zero before ChatGPT launched in November 2022.

"I find the sheer speed of the AI takeover of the web quite staggering," Jonáš Doležal, researcher at Imperial College London and co-author of the paper, told 404 Media. "After decades of humans shaping it, a significant portion of the internet has become defined by AI in just three years."

The study, titled “The Impact of AI-Generated Text on the Internet,” drew on 33 months of website snapshots from the Internet Archive's Wayback Machine and used an AI text detector called Pangram v3 to classify each page.

The confirmed harms: vibes, not facts

Researchers tested six hypotheses about what AI content does to the web. Only two held up against the data.

The first: We’re turning into a horde of dumb NPCs who all act the same way. Or, put more scientifically, the web is becoming less semantically diverse.

AI-generated sites showed pairwise semantic similarity scores 33% higher than human-written ones. The same ideas keep getting expressed in nearly the same ways.
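For readers wondering what a "pairwise semantic similarity score" looks like in practice, here is a minimal sketch. The article doesn't say which embedding model or similarity measure the researchers used, so cosine similarity over page embeddings is an assumption here, and the vectors below are made-up toy values, not data from the study.

```python
import math
from itertools import combinations

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def mean_pairwise_similarity(vectors):
    """Average cosine similarity over every unordered pair of vectors."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)

# Hypothetical 2-D "embeddings" standing in for pages (illustrative only).
varied_pages = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # pages pointing in different directions
similar_pages = [[1.0, 0.1], [1.0, 0.2], [1.0, 0.0]]  # pages saying nearly the same thing

print(mean_pairwise_similarity(varied_pages))
print(mean_pairwise_similarity(similar_pages))
```

The second group scores higher because its vectors point in nearly the same direction. A corpus whose average pairwise score rises is, in this sense, one where pages are saying more similar things.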

The paper suggests the online Overton window may be narrowing, not through censorship or coordinated campaigns, but because language models optimize for outputs close to their training distribution.

The second: The web is getting aggressively cheerful.

AI content showed positive sentiment scores more than 107% higher than human content. Researchers tie this to the well-documented sycophantic tendencies of LLMs—trained on human approval signals, they produce text that feels sanitized, friction-free, and relentlessly upbeat.

An internet flooded with cheerful, homogenized content may marginalize human dissent at scale without anyone pulling a lever.

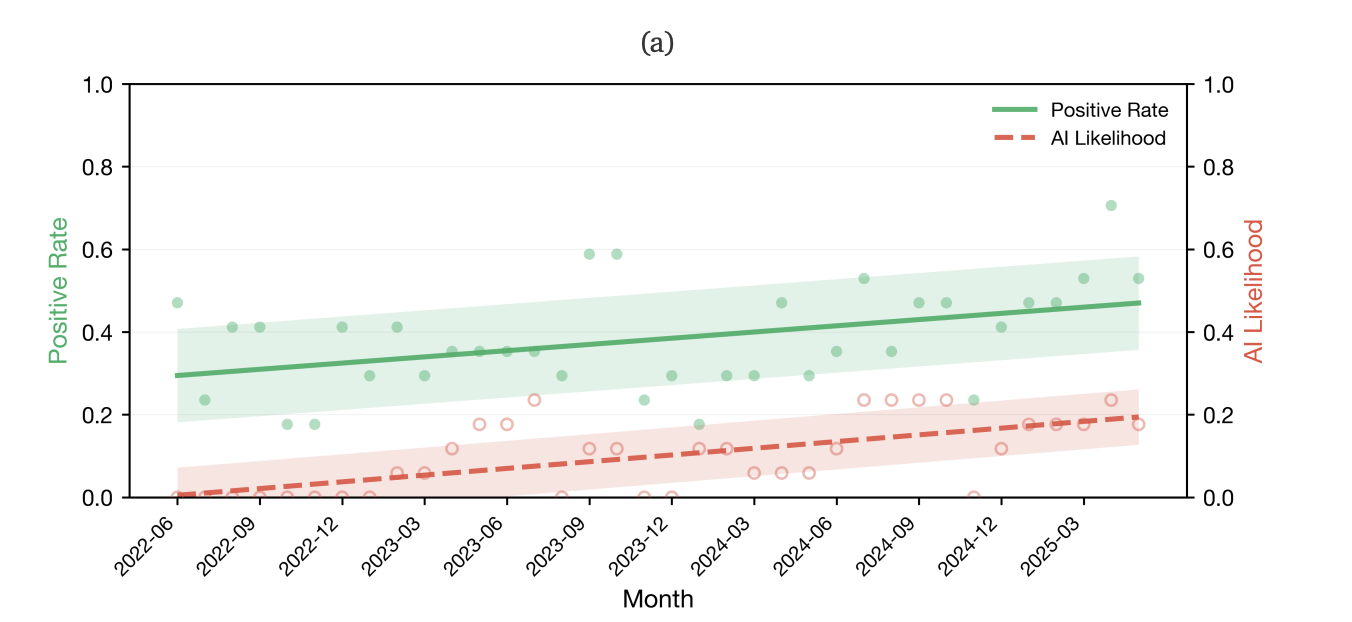

Despite widespread public belief to the contrary, the study found no statistically significant evidence that AI content is making the internet less factually accurate: there was no meaningful correlation between AI prevalence and factual error rate.

The stylistic monoculture hypothesis (AI flattening individual voices into a generic, uniform register) was the belief held most strongly by respondents to a U.S. survey conducted alongside the study: 83% agreed. The data didn't confirm it. Character-level analysis found no statistically significant increase in stylistic homogeneity tied to AI prevalence.

The model collapse problem just got real

The broader stakes go beyond discourse quality. At 35% AI prevalence, the theoretical risk of model collapse—where future models degrade after training on AI-generated data—shifts from academic concern to empirical reality. Future foundation models trained on contemporary web crawls will inevitably ingest data that is substantially AI-generated and measurably less semantically diverse.

The team is now working with the Internet Archive to turn the study into a continuous, live monitoring tool, tracking AI's share of the web in real time rather than as a one-off snapshot.

A U.S. survey conducted alongside the study found most Americans already believe all six negative hypotheses, including the ones the data doesn't support. People who use AI infrequently were 12% more likely to believe in the harms than frequent users. Dead Internet Theory believers, meet the data: The internet isn't dead, but 35% of what's new is probably zombie content in some way.
