A U.S. government institute published its verdict on China's most powerful AI: eight months behind, and the more time passes, the wider the gap gets. The internet read the methodology and started asking questions.

CAISI—the Center for AI Standards and Innovation, a unit inside NIST—released its evaluation of DeepSeek V4 Pro on May 1. The conclusion: DeepSeek's open-weight flagship "lags behind the frontier by about 8 months."

CAISI also calls it the most capable Chinese AI model it has evaluated to date.

The scoring system

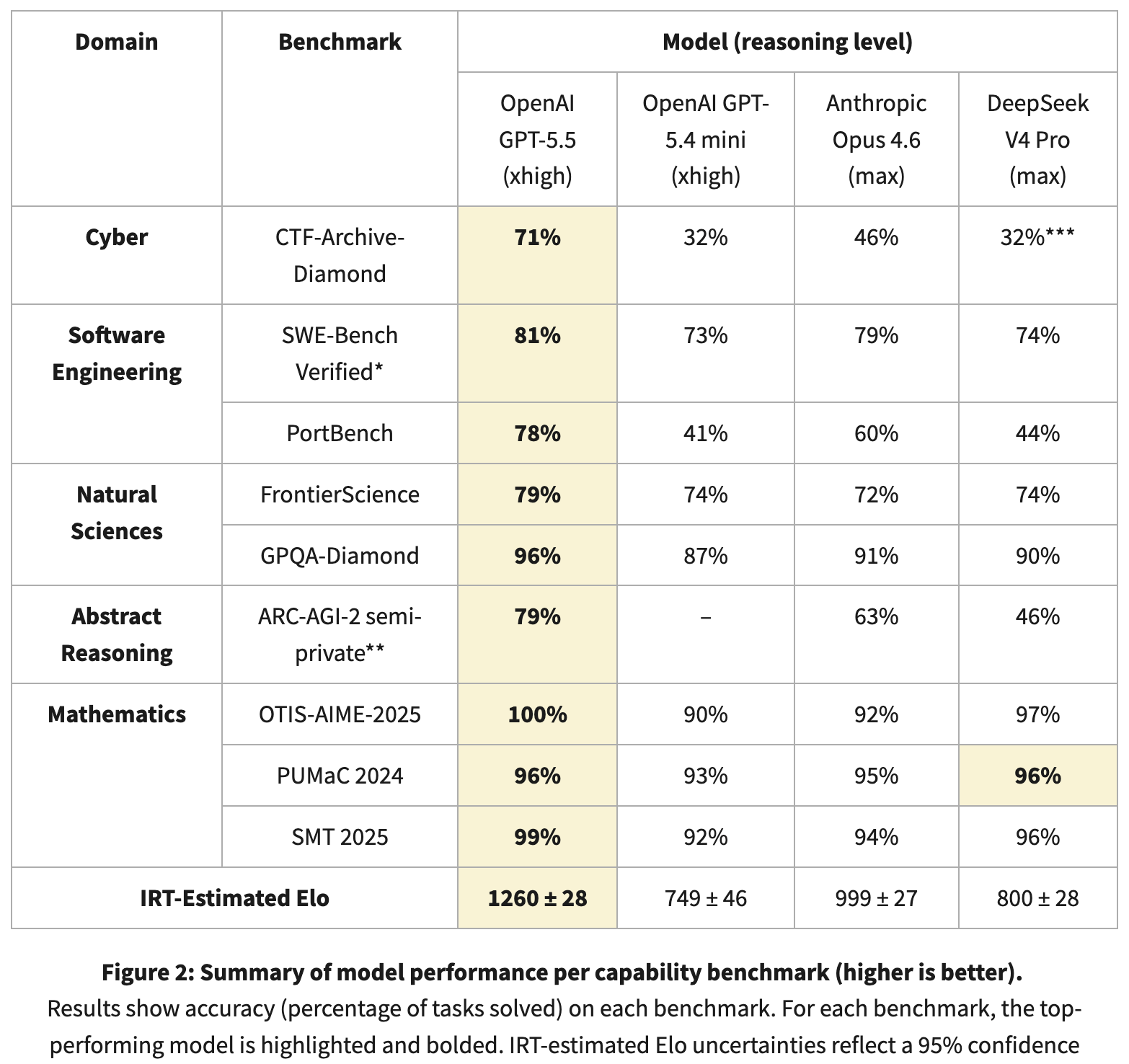

CAISI doesn't average benchmark scores like most evaluators do. Instead, it applies Item Response Theory—a statistical method from standardized testing—to estimate each model's latent capability by tracking which problems it solves and which it doesn't, across nine benchmarks in five domains: cybersecurity, software engineering, natural sciences, abstract reasoning, and math.

The IRT-estimated Elo scores: GPT-5.5 at 1,260 points, Anthropic's Claude Opus 4.6 at 999. DeepSeek V4 Pro scores around 800 (±28), which is very close to GPT-5.4 mini at 749. In CAISI's system, DeepSeek sits closer to the old generation of GPT mini than to Opus.

The points system in benchmarks score models the way standardized tests score students—not by raw percentage correct, but by weighting which problems they solve and which they miss, producing a points estimate that only means something relative to other models in the same evaluation. The more points, the better the model is in general terms, with the best model’s score becoming the reference point to see how capable a model is.

It’s impossible to reproduce CAISI’s results because two of the nine benchmarks are non-public, and in those two benchmarks is where the gap is widest. For example, GPT-5.5 scored 71% on CTF-Archive-Diamond, one of CAISI’s cybersecurity tests with DeepSeek registering around 32%.

On public benchmarks, the picture shifts. GPQA-Diamond—PhD-level science reasoning, scored as percentage correct—placed DeepSeek at 90%, one point behind Opus 4.6's 91%. Math olympiad benchmarks (OTIS-AIME-2025, PUMaC 2024, SMT 2025) put DeepSeek at 97%, 96%, and 96%. On SWE-Bench Verified—real GitHub bug fixes, scored as percentage resolved—DeepSeek scored 74% to GPT-5.5's 81%. DeepSeek's own technical report claims V4 Pro matches Opus 4.6 and GPT-5.4.

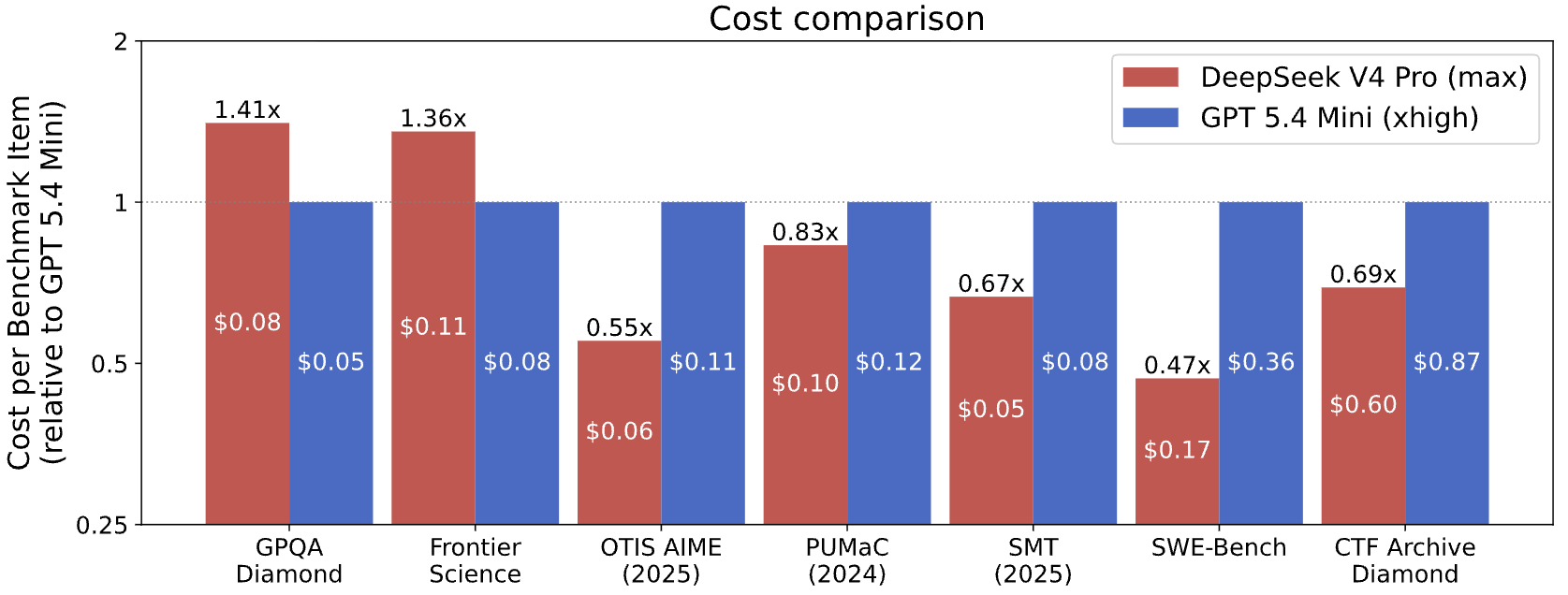

For cost comparison, CAISI filtered out any U.S, model that performed significantly worse or cost significantly more per token than DeepSeek. Only one model cleared the bar: GPT-5.4 mini. That's the entire U.S. frontier, filtered to a single entry.

DeepSeek came out cheaper on 5 of 7 benchmarks even beating OpenAI’s tiniest and least capable AI model.

The counterargument: Is the gap bigger or smaller?

Criticizing CAISI's methodology doesn't fully vindicate DeepSeek. The AI developer under the pseudonym Ex0bit pushed back directly: "There's no 'gap', and no one's 8 months behind. We've been trolled on every closed U.S drop and flexed on with open weights."

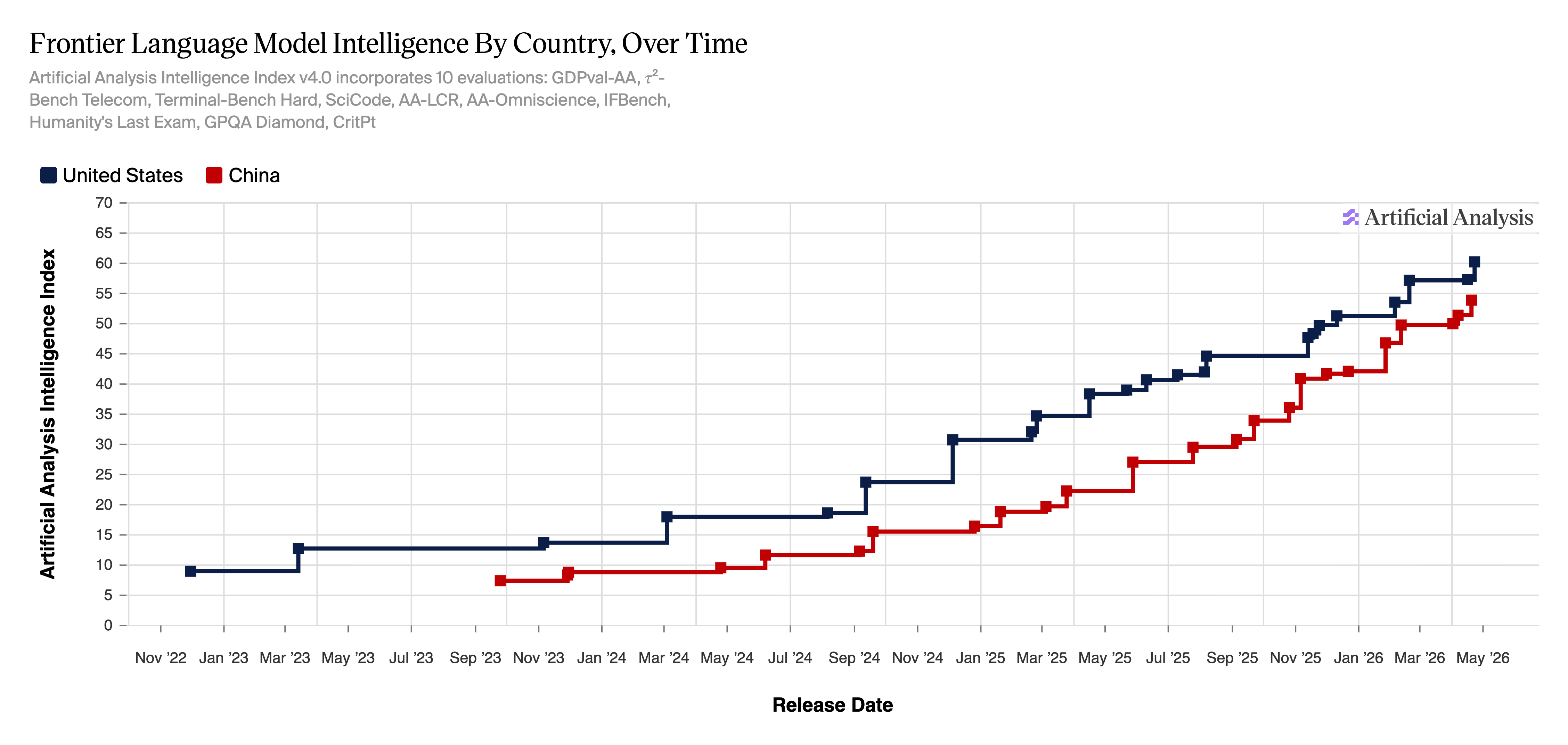

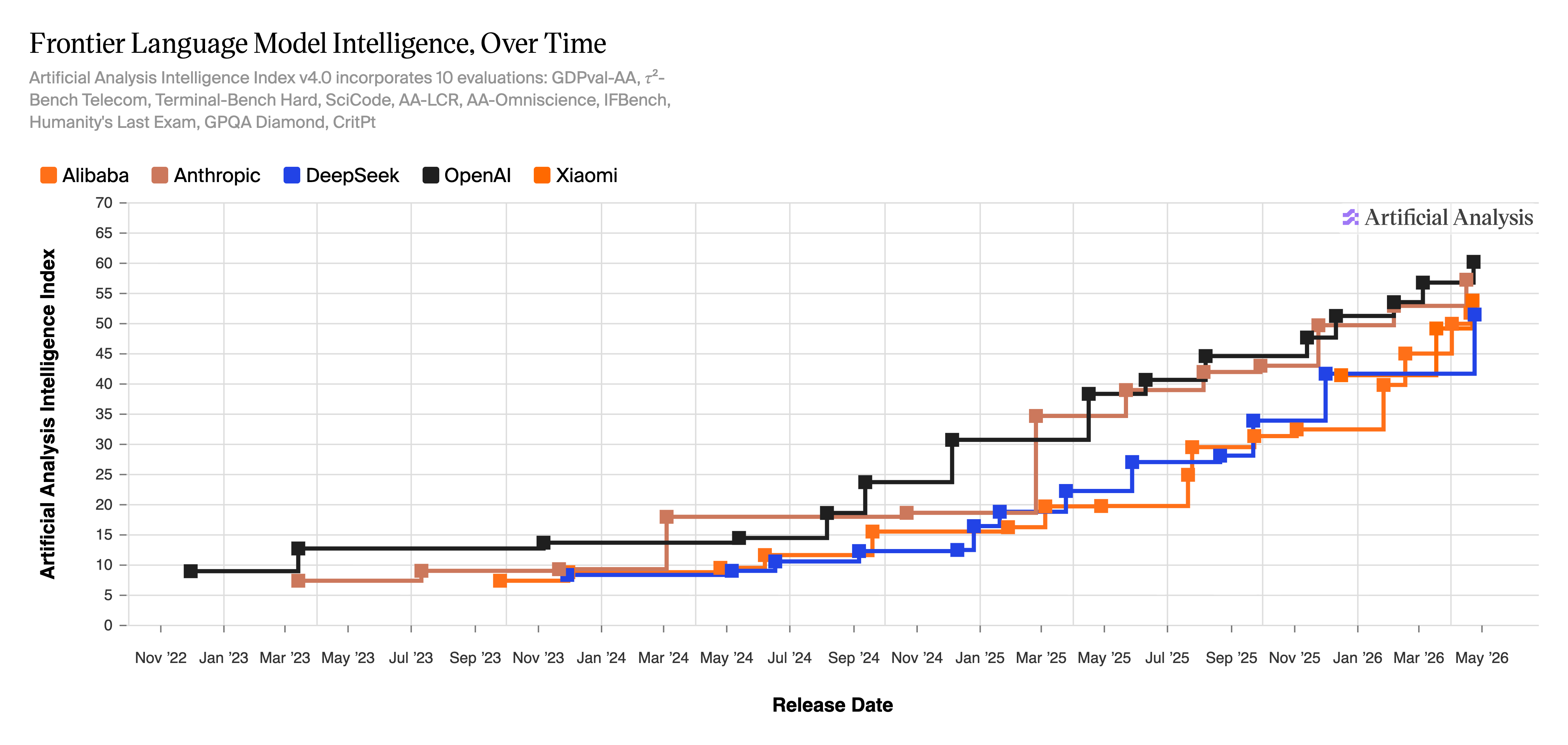

The Artificial Analysis Intelligence Index v4.0—a rating system tracking frontier model intelligence across 10 evaluations—shows OpenAI near 60 points and DeepSeek in the low 50s as of May 2026, compressed far tighter than a year ago.

Based on standardized benchmarks, their methodology shows the gap is actually getting smaller.

When DeepSeek first emerged in January 2025, the question was whether China had already caught up. U.S. labs scrambled to respond. Stanford's 2026 AI Index—released April 13—reports the Arena leaderboard gap between Claude Opus 4.6 and China's Dola-Seed-2.0 Preview is shrinking, separated now by only 2.7%.

CAISI plans to release a fuller IRT methodology write up in the near future.

免责声明:本文章仅代表作者个人观点,不代表本平台的立场和观点。本文章仅供信息分享,不构成对任何人的任何投资建议。用户与作者之间的任何争议,与本平台无关。如网页中刊载的文章或图片涉及侵权,请提供相关的权利证明和身份证明发送邮件到support@aicoin.com,本平台相关工作人员将会进行核查。