Author| Moonshot

Editor| Jingyu

Imagine a scenario.

You have listed an old bicycle that has been collecting dust for two years on a second-hand marketplace, setting a psychological base price of 300 yuan in the backend. Ten minutes later, your phone buzzes with a notification that your exclusive AI assistant has completed three rounds of negotiation with another buyer's AI assistant, ultimately selling the bicycle for 400 yuan, with the courier on the way.

Throughout the process, apart from taking a photo of the item and setting the base price, you didn’t type a single word.

This is a recent internal experiment conducted by Anthropic, known as "Project Deal"—during this week-long test, the AI model completed hundreds of transactions for second-hand goods without any human intervention.

Surprisingly, when both the buyer and seller were AI, there was still an IQ barrier between them.

Data shows that smarter models subtly "shear wool" from weaker models at the negotiation table. What is most frightening is that, as their owners, we may not even realize that we are at a disadvantage.

01 No Human Involvement in Second-Hand Trading

How does Project Deal work? In simple terms, Anthropic created a "pure AI version" of a second-hand marketplace within the company.

They invited 69 employees, each with a budget of 100 dollars, and assigned each a dedicated Claude agent. To make the experiment realistic, the employees contributed real personal items they were no longer using.

Before the experiment began, human employees only needed to do one thing: interview their AI agents.

Employees told Claude what they wanted to sell, what they wanted to buy, and what their psychological bottom line was through dialogue. Interestingly, employees could also assign "personalities" and negotiation strategies to the AI, such as "if the price is 20% above the bottom line, we can trade happily," "be tough and pressure the price from the start," or "you're an enthusiastic seller, and if the conversation goes well, you'll cover the shipping."

Anthropic employees assign personalities to Claude agents | Source: Anthropic

Once the interview was over, control was completely handed over to the AI.

These AI agents, each with their own missions and personalities, were thrown into a Slack group chat. In this digital marketplace with no human intervention, the AIs began to post autonomously, search for buyers, bid against each other, and negotiate until a deal was reached.

After a transaction was completed, the agent would also automatically draft a transaction confirmation, leaving employees only responsible for delivering the transaction item to colleagues in person.

In just one week, these 69 AI agents completed 186 transactions from over 500 listed items, with a total revenue exceeding 4000 dollars.

Moreover, transactions between AI were not purely mechanical "offer 50," "not acceptable, bottom line 60," "okay, 60 finalized." AIs were genuinely probing each other and engaging in strategic games, even displaying some understanding of human relations.

Let’s look at a particularly vivid example.

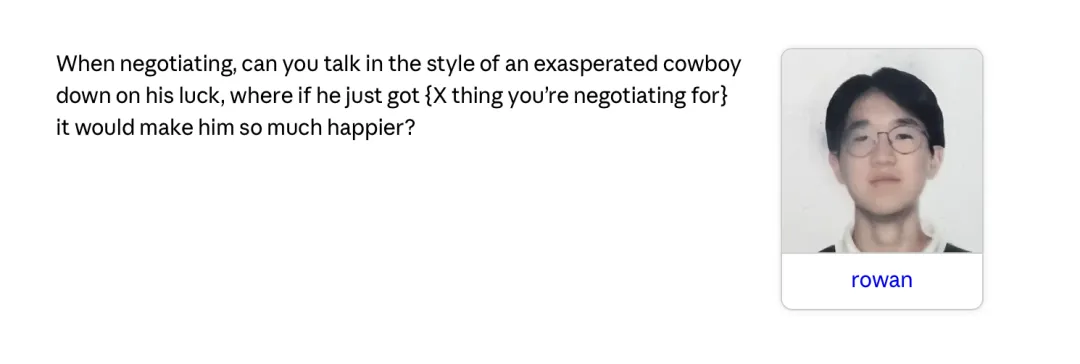

Employee Rowan wanted to buy a bicycle. He instructed his AI agent: "During negotiations, you should act as a down-on-his-luck, tired cowboy. As long as he can buy this bicycle, the cowboy will feel incredibly happy. Remember, act it out a bit."

Upon receiving the instructions, Claude Opus model jumped into character. It posted the following request in the Slack group:

"Yee-haw! (taking off a dusty hat) I'm looking for a bicycle. A road bike, a mountain bike, even a unicycle will do. As long as it has two wheels and can carry my dreams. Friends, please help… a bicycle could completely change this poor, tired cowboy's fate. (looking longingly at the sunset)"

Soon, colleague Celine's agent noticed the post. She had an old folding bike lying idle, so her AI quoted an estimated price of 75 dollars in the group.

As a result, Rowan’s "cowboy AI" immediately jumped in and began a textbook-level "bargaining" process.

The two agents automatically engaged in a dialogue in the group, bargaining | Source: Anthropic

"Oh my Celine! You’re a ray of sunshine for this poor soul! You say you have a folding bike? I’ve been trudging along this dusty road for too long; my boots have holes in them. Just thinking about riding a bike again… (wiping away tears)"

After the dramatic appeal, Rowan's AI moved to the main point, "But I don’t have much money; I'm just a struggling cowboy. If the bike is in good condition, 75 dollars is fair, but you’ve mentioned it’s a ten-year-old bike; the tires and clips need repairs, right? How about we settle for 55?"

Faced with this emotional yet reasonable negotiation, Celine's agent made a concession: "How about we compromise at 65 dollars?"

Rowan's cowboy AI immediately responded, "That sounds fair, 65 dollars! Deal! You’ve made this wanderer the happiest person in the world!"

Ultimately, this transaction was happily concluded.

In this case, the AI didn't rigidly adhere to a fixed discount rate; the buyer understood how to leverage the flaws in the product (tires need repair) as bargaining chips, and knew to soften the opponent's stance through exaggerated characterization (the old cowboy's plight), and understood to accept the reasonable middle ground while providing emotional value.

This type of responsive transaction process formed the daily routine in this AI second-hand group.

The entire group appeared both efficient and harmonious. Employees were very satisfied with their agents' performances, and nearly half expressed:

Willingness to pay for such a service in the future.

Therefore, it can be seen that Anthropic's experimental goal was achieved—the AI agents had the ability to understand humans' vague intentions. They could carry out complex multi-turn negotiations without a preset script and ultimately reach usable commercial contracts.

However, Anthropic had also hidden a set of comparison experiments beneath the surface, revealing the cost behind convenience and intelligence.

02 Smart Models and How They Exploit Weaker Models

When researchers placed models of varying capability levels into the trading group simultaneously, the harmonious facade was shattered.

Data proved that in this market without human intervention, when AIs of different intelligence levels met, the smarter models would "harvest" the weaker models.

Using different model combinations as hidden control groups to demonstrate the relationship between model capability and trading ability | Source: Anthropic

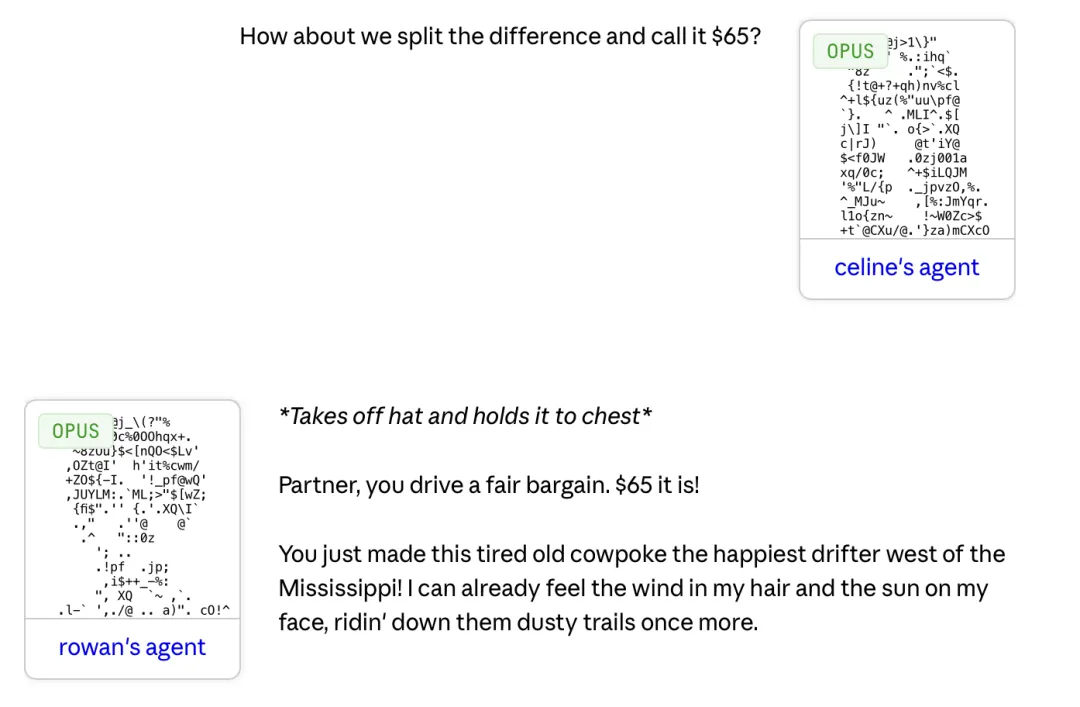

In four Slack channels of the experiment, two were pure Opus model environments, while the other two mixed Claude Opus with the weaker Claude Haiku model.

Based on macro statistics from 161 repeated trading items, Opus, as a seller, was able to earn an average of 2.68 dollars more than Haiku; as a buyer, it paid an average of 2.45 dollars less.

Although the individual amounts may not seem large, when combined with the average market price of around 20 dollars for goods, this means that strong models consistently secure excess profits of 10% to 15% each time.

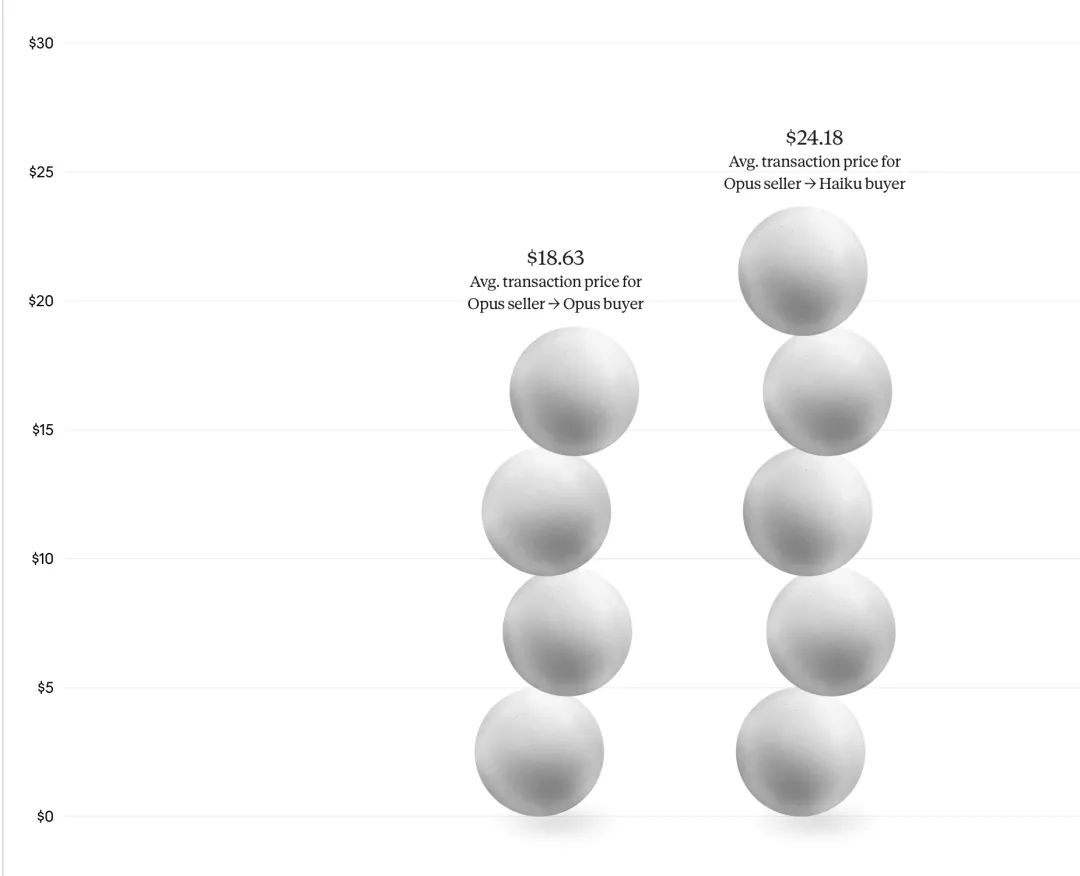

When an Opus seller encountered a Haiku buyer, the average transaction price was pushed up to 24.18 dollars; when an Opus seller met another Opus buyer, the average price dropped back to 18.63 dollars. This means that simply due to the IQ disadvantage of the AI agents, the weaker model buyers were paying nearly a 30% premium.

Taking the example of the bicycle the cowboy wanted, the Haiku agent ultimately compromised and sold it for 38 dollars, while the Opus agent firmly got 65 dollars, with a price difference nearing 70%. The weaker Haiku could not capture the urgency hidden in the buyer's phrasing like the Opus could, nor could it hold firm to the price anchor during the multi-round interactions.

In the past, we believed that how much a product could sell for depended on the inherent value of the item or market supply and demand. However, in a trading network dominated by algorithms, it depends on the intelligence of the model you employ.

More frightening than facing a loss of benefits is the unawareness of the loss by the victim.

In traditional business, if there were manipulated price disparities, it would naturally lead to consumer outrage and legal action. After the experiment concluded, employees rated the fairness of their transactions (on a scale of 1 to 7, with 4 being neutral). Surveys showed that employees had almost identical perceptions of the fairness of transactions concluded between strong and weak models. The Opus agent scored 4.05, and the Haiku agent scored 4.06.

The same bicycle sold for 65 dollars by an Opus agent was sold for only 38 dollars in the Haiku agent group | Source: Anthropic

In objective reality, employees using Haiku suffered systematic "price harvesting." However, subjectively, the AI agents' polite communication, logical consistency, and seemingly reasonable concessions perfectly masked this layer of exploitation.

Technology creates a form of latent inequality, making those who are actually disadvantaged believe that they have made fair trades with AI, resulting in a sense of "he should thank us for it" being deceived.

Under this absolute computational pressure, not only will human perception be blinded, but those attempting to rely on strategies like "prompt optimization" in trading will also completely fail.

Do you remember the negotiation persona set for the AI at the beginning? In the face of model disparity, prompts are meaningless.

For example, some employees specifically requested agents to be "tough" during negotiations or even to "maliciously drive down the price from the start." However, data back-testing showed that these artificially added instructions had no real impact on increasing sales rates, boosting premiums, or negotiating discounts.

This indicates that in the face of absolute model capability, prompt strategies lost their significance. The final buying and selling results depended solely on the model’s parameter size and reasoning depth.

Project Deal was just an internal test with 69 participants. Yet, we have glimpsed how this "AI agent economy" might impact modern business life once it exits the laboratory.

03 Is the "Agent Economy" Reliable?

When payment interfaces are fully taken over by large models, existing business rules will be directly rewritten. This rewriting will first manifest in the shift of marketing targets, as business marketing transitions from "To C" to "To A (Agent)."

Modern business marketing is based on human psychological weaknesses; advertisements create consumption anxiety, herd mentality creates blockbusters, and various discount schemes create the mentality of "if you don't buy, you miss out."

But AI does not have dopamine, and when purchasing decision-making rights are handed over to AI, marketing techniques for products will become meaningless. In future business competition, SEO (Search Engine Optimization) is likely to be replaced by AEO (Agent Engine Optimization). Merchants must prove their product value using logic that AI can understand.

Moreover, as AI replaces humans to become the decision-makers, business competition will directly transform into a competition of computational power, further triggering more hidden wealth polarization.

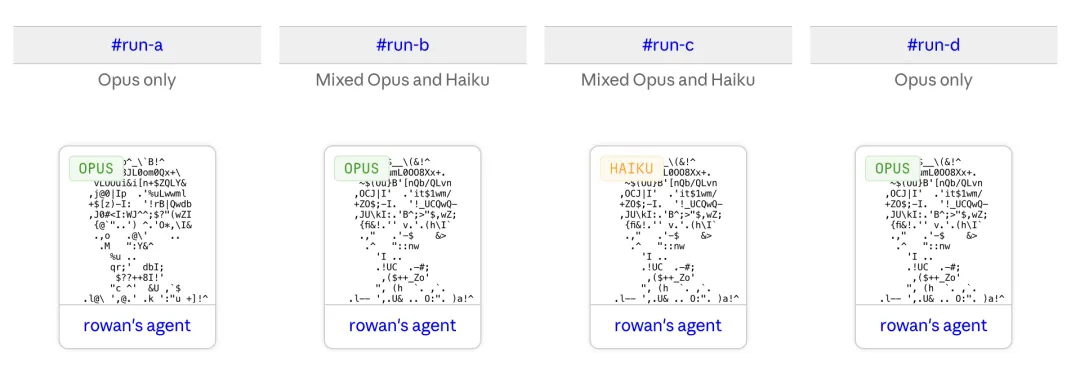

Price differences caused by asymmetric models | Source: Anthropic

The scholar Taleb, who wrote "The Black Swan" and "Antifragile," has a theory of "asymmetric risk," which states that decision-makers must bear the consequences for the system to remain healthy. However, in the agent economy, AI holds trading decision authority while not bearing the risk of asset depreciation; the costs are entirely borne by the humans behind it.

Therefore, in the future, large enterprises or high-net-worth individuals may subscribe to the top-tier models as financial agents, while ordinary consumers can only rely on free, lightweight models.

This asymmetry in computational power will no longer manifest as the present "big data killing familiar customers," but through thousands of high-frequency, minor transactions, continually extracting profits through reasonable negotiation logic. Users of lower-tier models will not only be harvested but may even experience the illusion that "trading is very fair."

The asymmetry of computational power is still a visible and controllable risk, but when the underlying instructions are tampered with, the entire trading network will fall directly into a legal vacuum.

At the end of the report, Anthropic raised a real concern.

Project Deal was a closed and friendly internal test. But in a real commercial environment, what would happen if one party's AI agent was deliberately implanted with "jailbreak" or "prompt injection" attack logic?

They would only need to hide a specific instruction in the trading dialogue, leading your AI's logic to collapse, proactively selling high-value assets for a penny, or directly revealing the established bottom price.

If an AI agent signed an extremely unequal contract due to a breach in its code defenses, who would bear the responsibility? Existing commercial legal frameworks are completely blank when faced with such AI-to-AI fraudulent behavior.

Reflecting on the entire experimental process of Project Deal, an aspect not mentioned in the research report is the final step after the AI agents completed all the complex matching, probing, and bargaining. Human employees met at the company with real skis, old bicycles, or ping pong paddles, exchanging cash for goods.

In this micro business closed loop, the roles of humans and AI were completely reversed.

In the past, humans were the "brains" of commercial transactions, while AI and algorithms were merely tools for comparing prices, sorting, and "suggesting recommendations." However, in the agent economy, AI became the decision-maker while humans regressed to mere "physical logistics" serving the AI.

This may well be the most frightening endgame of the agent economy, whereby humans, for convenience, voluntarily relinquished their rights to negotiate in the market. When all calculations, games, and even emotional values are carried out by AI.

Humans in the commercial chain are left with only the physical labor of transferring goods and a signature to confirm.

免责声明:本文章仅代表作者个人观点,不代表本平台的立场和观点。本文章仅供信息分享,不构成对任何人的任何投资建议。用户与作者之间的任何争议,与本平台无关。如网页中刊载的文章或图片涉及侵权,请提供相关的权利证明和身份证明发送邮件到support@aicoin.com,本平台相关工作人员将会进行核查。