Tether is building an AI platform that runs on your own hardware.

QVAC, the platform in question, provides a modular SDK for building AI micro-modules that can run on virtually any device. These modules connect and collaborate over an encrypted peer-to-peer network, with no centralized servers, API keys, or gatekeepers.

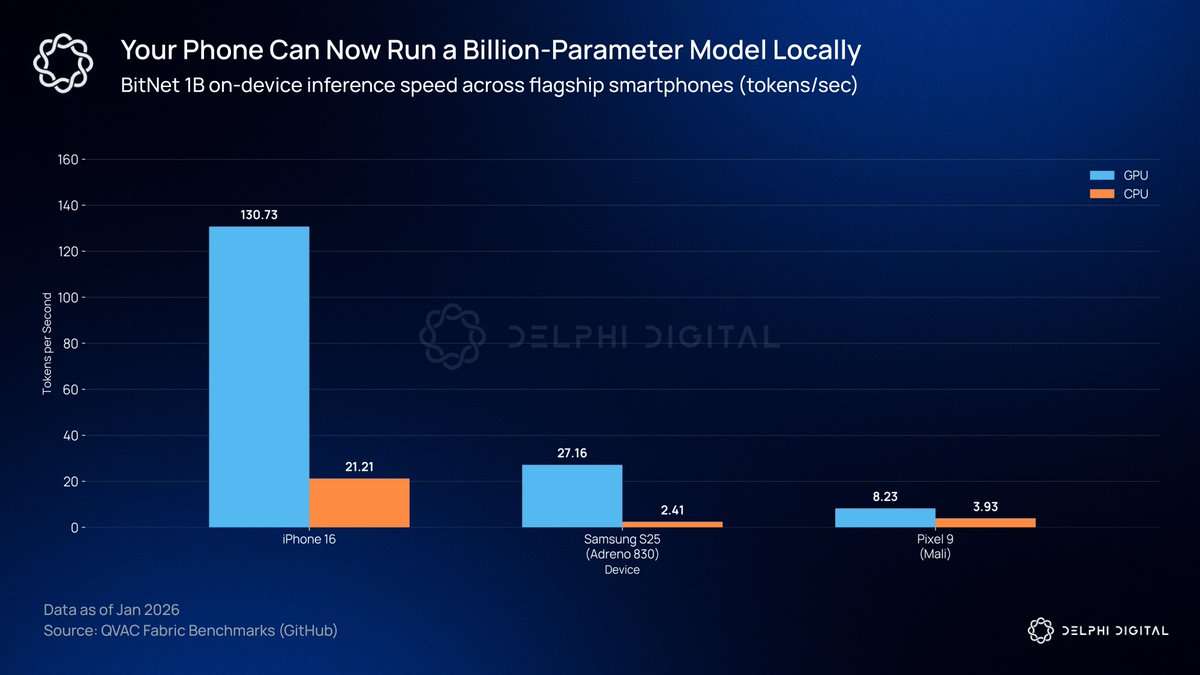

QVAC Fabric has added support for Microsoft's BitNet architecture, enabling LoRA fine-tuning and inference of 1-bit large language models directly on consumer devices. Work that previously required dedicated NVIDIA GPUs and expensive server infrastructure can now be done on everyday hardware.
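The article does not describe how Fabric implements this internally. As a rough, illustrative sketch of the two ideas it names, the Python below shows absmean ternary weight quantization as described for BitNet b1.58 (weights scaled by their mean absolute value, rounded, and clipped to {-1, 0, +1}) and a LoRA-style forward pass in which the quantized base weights stay frozen and only two small matrices, `A` and `B`, would be trained. The function names, matrix sizes, and values are hypothetical; this is plain Python for readability, not Fabric's API or kernels.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def absmean_ternary(w: Matrix, eps: float = 1e-6) -> Tuple[List[List[int]], float]:
    """Quantize a weight matrix to {-1, 0, +1} using one absmean scale (BitNet b1.58 style)."""
    flat = [abs(v) for row in w for v in row]
    gamma = sum(flat) / len(flat)  # mean absolute weight
    # Scale, round to the nearest integer, then clip into the ternary range.
    q = [[max(-1, min(1, round(v / (gamma + eps)))) for v in row] for row in w]
    return q, gamma


def matvec(m, x: List[float]) -> List[float]:
    """Plain matrix-vector product."""
    return [sum(a * b for a, b in zip(row, x)) for row in m]


def lora_forward(q, gamma: float, a: Matrix, b: Matrix, x: List[float],
                 alpha: float, rank: int) -> List[float]:
    """y = gamma * (Q x) + (alpha / rank) * B (A x); Q is frozen, only A and B would be trained."""
    base = [gamma * v for v in matvec(q, x)]  # frozen ternary path
    delta = matvec(b, matvec(a, x))           # low-rank adapter path
    scale = alpha / rank
    return [y + scale * d for y, d in zip(base, delta)]


# Hypothetical 2x3 layer with a rank-1 adapter.
w = [[0.4, -0.1, 0.9], [-0.7, 0.05, 0.3]]
q, gamma = absmean_ternary(w)
x = [1.0, 2.0, 3.0]
a = [[0.1, 0.0, 0.0]]   # down-projection A (1 x 3)
b = [[0.0], [0.0]]      # up-projection B (2 x 1), zero-initialized
print(q, lora_forward(q, gamma, a, b, x, alpha=2.0, rank=1))
```

Because `B` starts at zero, the adapted layer initially reproduces the quantized base layer exactly; fine-tuning then changes only `A` and `B`, which are tiny compared with the full weight matrix. In this sketch the adapter matrices are ordinary floats; how Fabric stores them is not stated in the article.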

Tether's benchmarks show BitNet models using up to 77.8% less VRAM than comparable 16-bit models, and GPU inference running 2x to 11x faster than CPU inference on mobile devices. Fabric has been released as open source.
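Most of the memory saving comes from weight storage. The back-of-the-envelope sketch below compares weights-only storage for a hypothetical 2B-parameter model at 16 bits per weight against ternary weights packed at 2 bits each and against the information-theoretic floor of log2(3), roughly 1.58 bits. The model size and packing scheme are assumptions for illustration, not figures from Tether's benchmarks.

```python
import math


def weight_bytes(num_params: int, bits_per_weight: float) -> float:
    """Storage needed for the weights alone, in bytes."""
    return num_params * bits_per_weight / 8


def reduction(baseline: float, candidate: float) -> float:
    """Fractional saving of candidate relative to baseline."""
    return 1 - candidate / baseline


n = 2_000_000_000  # hypothetical 2B-parameter model
fp16 = weight_bytes(n, 16)
packed_2bit = weight_bytes(n, 2)               # ternary values packed 2 bits each (assumed scheme)
ternary_ideal = weight_bytes(n, math.log2(3))  # information-theoretic floor, ~1.58 bits

for name, size in [("2-bit packed", packed_2bit), ("log2(3) bits", ternary_ideal)]:
    print(f"{name}: {size / 1e9:.2f} GB vs {fp16 / 1e9:.2f} GB fp16, "
          f"{reduction(fp16, size):.1%} smaller")
```

The weights-only saving this gives (roughly 87% to 90%) is larger than the reported 77.8%. A plausible explanation, though the article does not break the figure down, is that total VRAM also covers memory this arithmetic ignores, such as activations, the KV cache, any layers kept at higher precision, and LoRA adapter state during fine-tuning.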

AI development today depends on the same kind of centralized infrastructure that crypto was designed to move away from. Training and fine-tuning models still rely on NVIDIA hardware and cloud providers, which concentrates control in the hands of a small number of companies.

Fabric aims to change this by making consumer hardware a viable platform for real model development.

Tether is building several applications on QVAC. Translate handles offline transcription and translation across text, audio, and images. Health uses an on-device AI agent to track health data locally. Keet is integrating QVAC AI to enable on-device conversational features.

Tether's work on QVAC suggests the company is treating decentralized AI as a serious priority.
