Author: 0xai

Special thanks to @DistStateAndMe and their team for their contributions in the field of open-source AI models, as well as for the valuable advice and support provided for this article.

Why You Should Pay Attention to This Report

If "decentralized AI training" has shifted from impossible to possible, how undervalued is Bittensor?

At the beginning of 2026, there was a pervasive sense of fatigue throughout the entire Crypto circle.

The aftershocks of the last bull market had long since faded, and talent was flowing into the AI industry at an accelerating pace. Those who once talked about "the next 100x" are now discussing Claude Code and OpenClaw. You may have heard "Crypto is a waste of time" more than once.

But on March 10, 2026, a Bittensor subnet called Templar quietly announced something.

More than 70 independent participants from around the globe, with no central server, no coordination by a large company, and relying solely on Crypto incentives, collaboratively trained a 72-billion-parameter large language model.

The model and related papers have been published on HuggingFace and arXiv, with data that is publicly verifiable.

More critically: on several key benchmarks, this model outperformed LLaMA-2-70B, a comparably sized model that Meta trained with heavy investment.

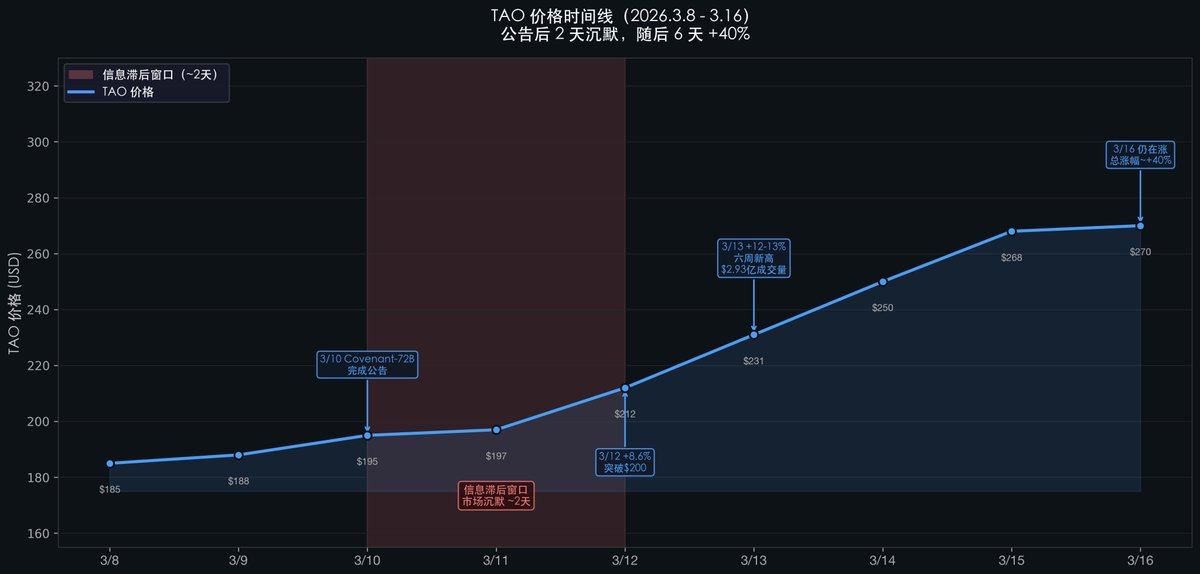

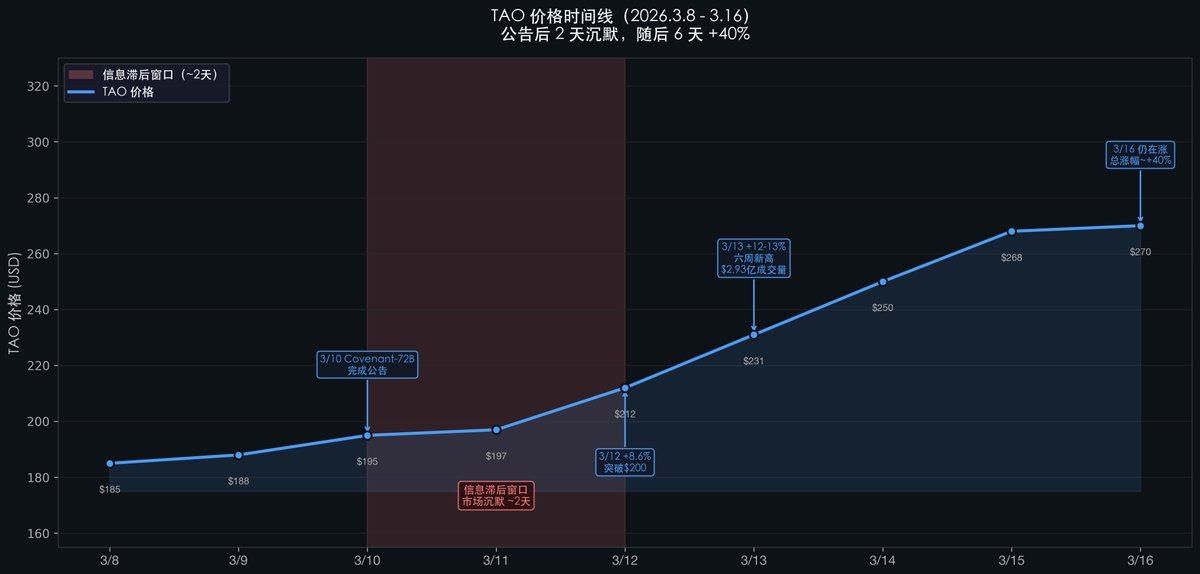

After the announcement, TAO's price barely moved for nearly two days. It only began to surge on the third day and was still rising six days later, up roughly 40% in total. Why the two-day delay?

The core argument of this report is this: Crypto investors see "just another open-source model" and assume it cannot compete with the GPT and Claude models they use every day, while AI researchers do not pay attention to crypto. The gap between the two circles is creating a cognitive arbitrage window.

Reading Framework

This report is divided into two logical parts:

Part I: Technological Breakthrough. Explains what SN3 Templar has accomplished and why it matters in the history of AI and Crypto.

Part II: Industry Significance. Explains why this suggests the Bittensor ecosystem is systematically undervalued, and why Bittensor can be called the hope of the entire Crypto village.

Part I: Breakthrough in Decentralized AI Training

1. What does SN3 do?

What is required to train a large language model?

The traditional answer: Build a giant data center, purchase thousands of top GPUs, spend hundreds of millions of dollars, led by a unified engineering team from one company. This is how Meta, Google, and OpenAI operate.

SN3 Templar's approach: let individuals scattered around the world each contribute one or more GPU servers, fitting their computing power together like puzzle pieces to collaboratively train a single complete large model.

However, this raises a fundamental problem: if participants are spread across the globe, do not trust each other, and face unstable network latency, how can the training results be guaranteed to be valid? How do you stop someone from slacking off or cheating? And how do you keep everyone contributing?

Bittensor's answer is to use the TAO token as an incentive. The more useful a participant's gradient (roughly, its "contribution to improving the model"), the more TAO that participant receives. The system scores contributions and settles rewards automatically, with no central authority needed to coordinate.
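The scoring idea can be illustrated with a toy sketch, assuming a validator that rates each submitted gradient by how much it lowers loss on held-out data. This is not Templar's validator or its Gauntlet mechanism; the quadratic `validation_loss`, the learning rate, and the `Submission`, `contribution`, and `distribute` names are all hypothetical. It shows only the core intuition: a fixed TAO emission is split in proportion to measured contribution, so lazy or harmful submissions earn nothing.

```python
# Hypothetical toy scorer for illustration only; not Templar's actual validator.
from dataclasses import dataclass


@dataclass
class Submission:
    miner: str
    gradient: list[float]  # the update direction the miner proposes


def validation_loss(weights: list[float], target: list[float]) -> float:
    # Toy stand-in for loss on held-out data: squared distance to a target.
    return sum((w - t) ** 2 for w, t in zip(weights, target))


def contribution(weights, target, gradient, lr=0.1) -> float:
    # Score = how much one gradient step lowers validation loss.
    # Zero or harmful gradients are clipped to a score of 0.
    stepped = [w - lr * g for w, g in zip(weights, gradient)]
    gain = validation_loss(weights, target) - validation_loss(stepped, target)
    return max(0.0, gain)


def distribute(weights, target, submissions, emission: float) -> dict[str, float]:
    # Split a fixed token emission in proportion to each miner's score.
    scores = {s.miner: contribution(weights, target, s.gradient) for s in submissions}
    total = sum(scores.values())
    if total == 0:
        return {miner: 0.0 for miner in scores}
    return {miner: emission * score / total for miner, score in scores.items()}


if __name__ == "__main__":
    weights, target = [0.0, 0.0], [1.0, 1.0]
    subs = [
        Submission("honest", [-2.0, -2.0]),   # the true gradient of the toy loss
        Submission("partial", [-1.0, -1.0]),  # right direction, half the effort
        Submission("lazy", [0.0, 0.0]),       # submits nothing useful
        Submission("malicious", [2.0, 2.0]),  # pushes the model the wrong way
    ]
    print(distribute(weights, target, subs, emission=1.0))
```

In this toy run the honest miner receives roughly 65% of the emission and the partial contributor the rest, while the lazy and malicious submissions receive nothing. A production validator would also need to deal with problems this sketch ignores, such as miners copying each other's gradients.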

This is Bittensor's SN3 (Subnetwork No. 3), codenamed Templar.

If Bitcoin proved that decentralized "money" is possible, SN3 is proving that decentralized "AI training" is also possible.

2. What were the achievements of SN3?

On March 10, 2026, SN3 Templar announced the completion of training a large language model named Covenant-72B.

What does "72B" mean?: 72 billion parameters. Parameters are the "knowledge storage units" of an AI model; generally, the more parameters a model has, the more capable it tends to be. GPT-3 has 175 billion, and LLaMA-2-70B (the largest version of Meta's open-source flagship, LLaMA-2) has 70 billion. Covenant-72B is in the same size class as LLaMA-2-70B.

How large was the training scale?: About 1.1 trillion tokens ≈ 5.5 million books (treating one token as roughly one word and assuming 200,000 words per book).
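The 5.5 million figure treats one token as one word. A small helper makes that assumption explicit and shows how sensitive the comparison is to it. The 0.75 words-per-token ratio below is a common rule of thumb for English text with GPT-style tokenizers, used here as an assumption; it is not a measured property of Covenant-72B's tokenizer or training data, and the function name is hypothetical.

```python
def tokens_to_books(tokens: float, words_per_token: float = 1.0,
                    words_per_book: int = 200_000) -> float:
    """Convert a training-token count into an equivalent number of books."""
    # words_per_token = 1.0 reproduces the article's implicit assumption;
    # other values are rough assumptions, not measured for Covenant-72B.
    return tokens * words_per_token / words_per_book


TOKENS = 1.1e12  # ~1.1 trillion training tokens reported for Covenant-72B

print(f"{tokens_to_books(TOKENS):,.0f} books at 1 word/token")
print(f"{tokens_to_books(TOKENS, 0.75):,.0f} books at 0.75 words/token")
```

Under the one-word-per-token assumption the result is 5.5 million books; at 0.75 words per token it drops to about 4.1 million. Either way, the order of magnitude the article describes holds.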

Who participated in the training?: More than 70 independent participants (miners) contributed computing power on a rolling basis, with at most about 20 nodes synchronizing in any given round. Training began on September 12, 2025, and lasted about 6 months, with no central server and no unified authority coordinating it.

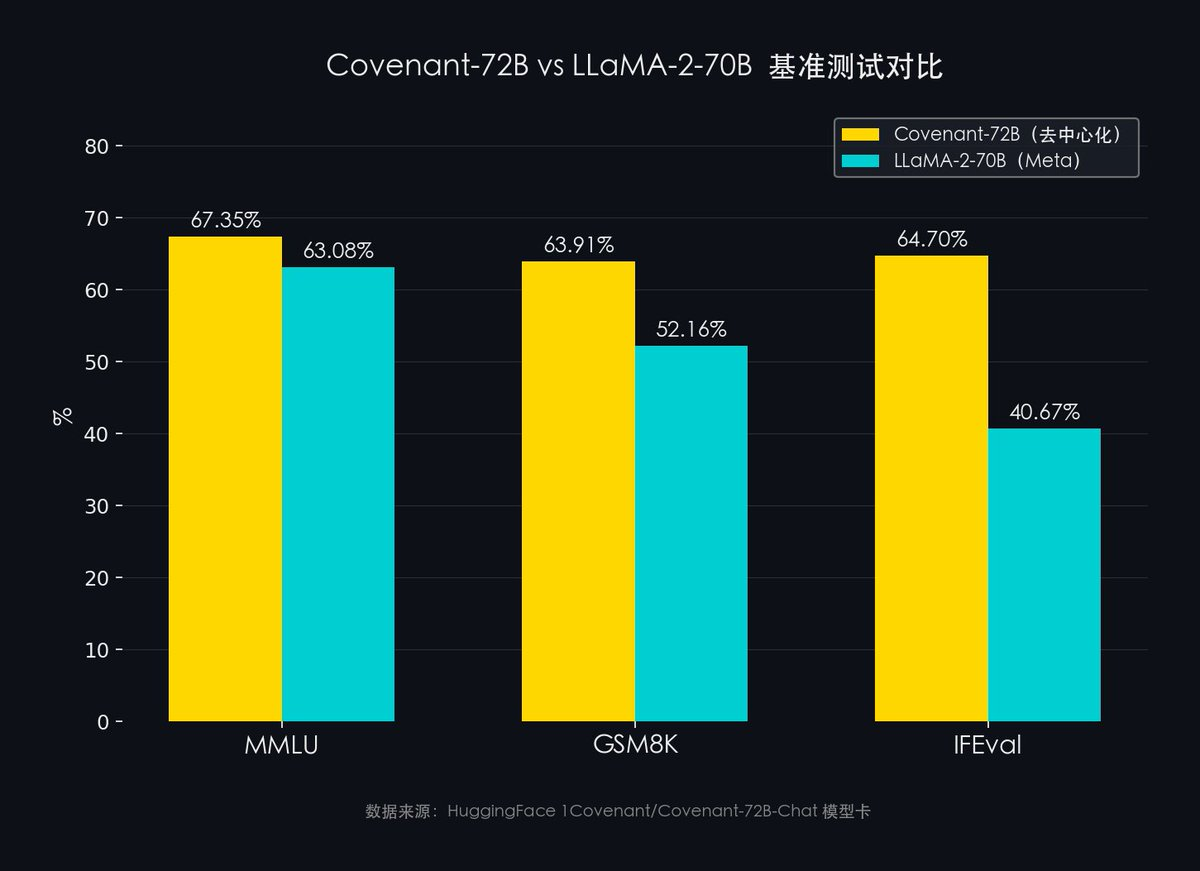

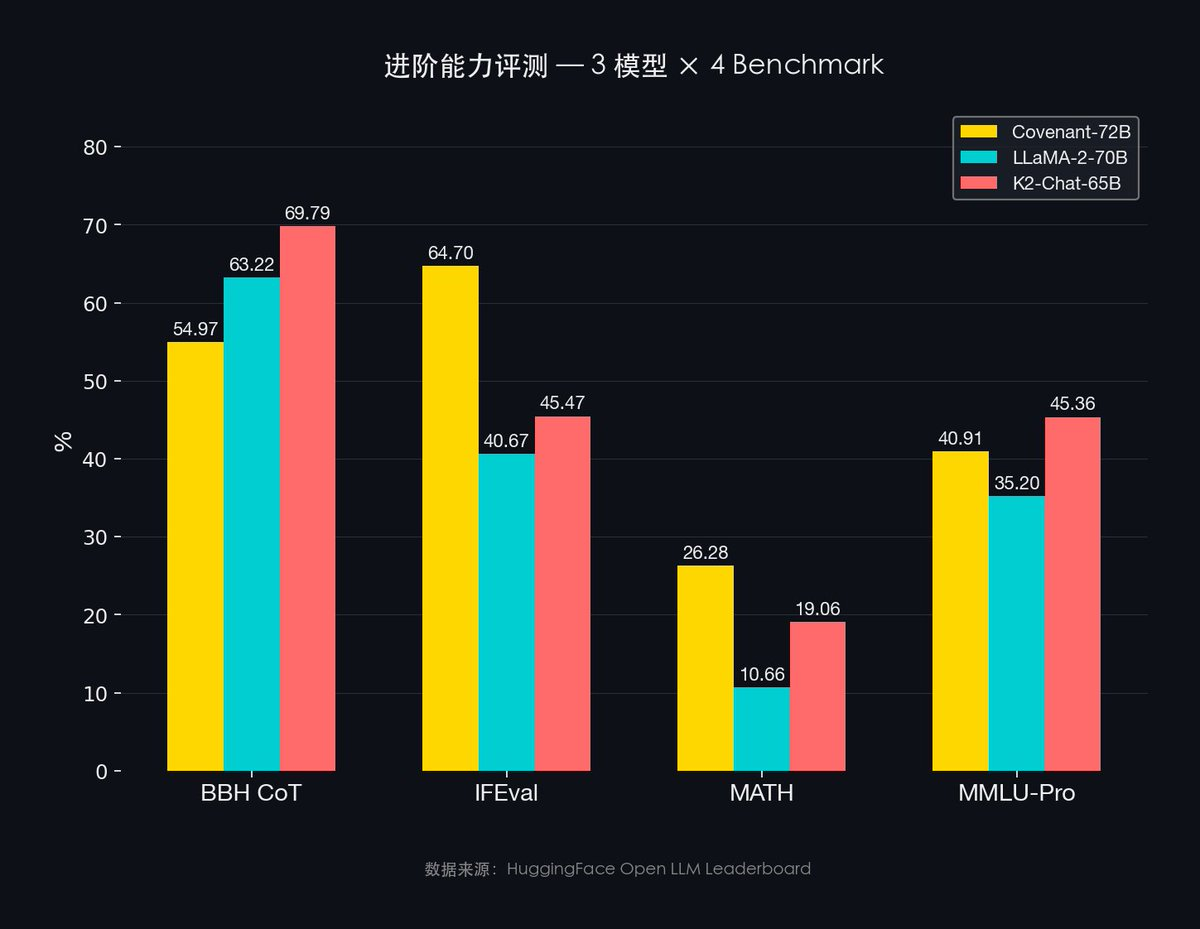

How did the model perform?: Here is how it compares on mainstream AI benchmarks:

Data source: HuggingFace 1Covenant/Covenant-72B-Chat model card

- MMLU (57 subjects comprehensive knowledge): Covenant-72B 67.35% vs Meta LLaMA-2 63.08%

- GSM8K (mathematical reasoning): Covenant-72B 63.91% vs Meta LLaMA-2 52.16%

- IFEval (instruction-following ability): Covenant-72B 64.70% vs Meta LLaMA-2 40.67%

Fully open-source: Released under the Apache 2.0 License. Anyone can download, modify, and use it commercially, subject only to the license's attribution and notice requirements.

Academic endorsement: The paper is available on arXiv (2603.08163), and the core technologies (the SparseLoCo optimizer and the Gauntlet anti-cheating mechanism) were presented at the NeurIPS Optimization Workshop.

3. What does this achievement signify?

For the open-source AI community: In the past, financial and computational barriers made training a 70B-class model the privilege of a few large companies. Covenant-72B shows for the first time that a community, without any centralized financial backing, can train a model of the same scale. This redraws the boundaries of who can take part in foundation model development.

For the AI power structure: The current landscape of AI foundation models is highly centralized; a few companies such as OpenAI, Google, Meta, and Anthropic control the strongest models. Workable decentralized training means this moat may not be insurmountable. The premise that "only large companies can build foundation models" has been shaken for the first time.

For the Crypto industry: This is the first time a crypto project has made a real technical contribution to AI, rather than just "riding the trend." Covenant-72B has a HuggingFace model, an arXiv paper, and publicly available benchmark data. This sets a precedent: Crypto incentive mechanisms can serve as foundational infrastructure for serious AI research.

For Bittensor itself: The success of SN3 transformed Bittensor from a "theoretically feasible decentralized AI protocol" into a "practically verified decentralized AI infrastructure." This is a qualitative leap from 0 to 1.

4. The historical status of SN3

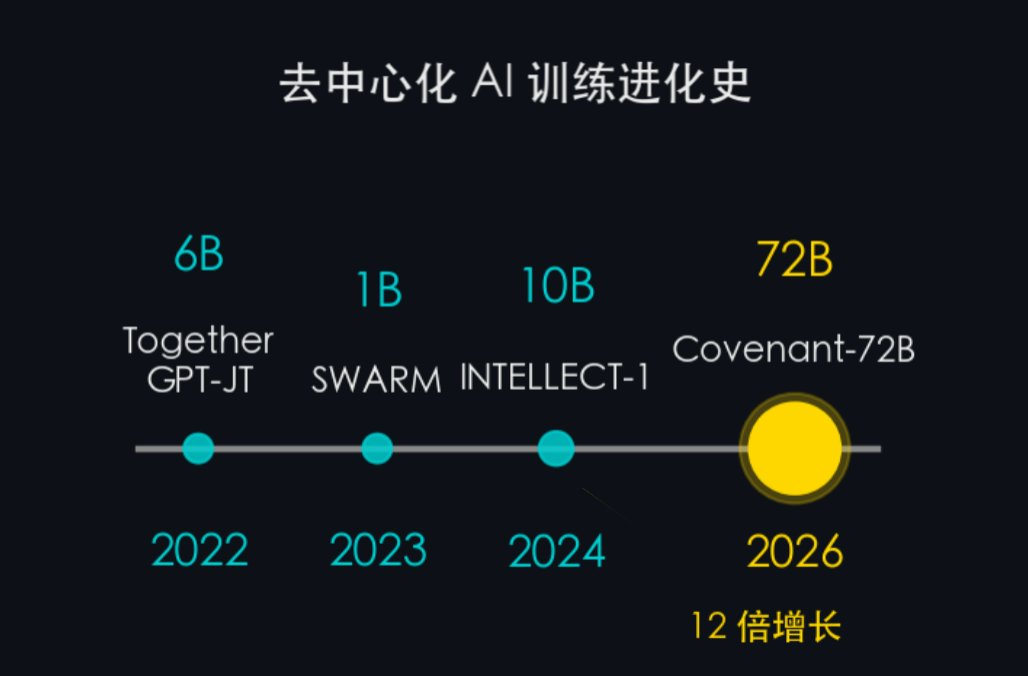

SN3 was not the first to attempt decentralized AI training, but it has gone further than any of its predecessors.

Evolutionary history of decentralized training:

- 2022, Together GPT-JT (6B): Early exploration showing that multi-machine collaboration is feasible

- 2023, SWARM Parallelism (~1B): Proposed a framework for collaborative training across heterogeneous nodes

- 2024, INTELLECT-1 (10B): Cross-institution decentralized training

- 2026, Covenant-72B / SN3 (72B): The first decentralized model to outperform a centralized model of comparable size (LLaMA-2-70B) on mainstream benchmarks

In 4 years, parameter counts grew from 6B to 72B, a 12-fold increase. But quality matters more than parameter count: earlier projects were mainly about "getting it to run," while Covenant-72B is the first decentralized large model to outperform a centralized model of comparable size on mainstream benchmarks.

Key technological breakthroughs:

- >99% compression (>146x): Each time a participant uploads its training results (gradients), data that would otherwise take gigabytes to transmit is compressed more than 146-fold by SparseLoCo. That is roughly like shrinking a 1 GB upload to about 7 MB, with minimal information loss.

- Only 6% communication overhead: Only about 6% of training time is spent communicating and synchronizing, while 94% goes to actual computation. This addresses one of the biggest bottlenecks in decentralized training.
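SparseLoCo's exact algorithm is not spelled out in this article. As a hedged illustration of the general family of techniques it belongs to, the sketch below implements top-k sparsification with error feedback: each participant transmits only the largest-magnitude entries of its update and carries the rest forward to the next round, so small signals are delayed rather than lost. Two back-of-the-envelope helpers relate the compression and overhead figures above. All function names, the 4-byte value and index sizes, and the 47-minute/3-minute timing split are assumptions for illustration, not Templar's code or measurements.

```python
import heapq


def topk_with_error_feedback(update, residual, k):
    """Send only the k largest-magnitude entries; carry the rest forward."""
    # Error feedback: re-add whatever was dropped in earlier rounds.
    corrected = [u + r for u, r in zip(update, residual)]
    keep = heapq.nlargest(k, range(len(corrected)), key=lambda i: abs(corrected[i]))
    sparse = {i: corrected[i] for i in keep}  # index -> value actually transmitted
    # Untransmitted entries become the residual for the next round.
    new_residual = [0.0 if i in sparse else v for i, v in enumerate(corrected)]
    return sparse, new_residual


def compression_ratio(n, k, value_bytes=4, index_bytes=4):
    # Dense payload: n values. Sparse payload: k (index, value) pairs.
    return (n * value_bytes) / (k * (value_bytes + index_bytes))


def comm_overhead(compute_seconds, comm_seconds):
    # Fraction of wall-clock time spent communicating rather than training.
    return comm_seconds / (compute_seconds + comm_seconds)


if __name__ == "__main__":
    sparse, residual = topk_with_error_feedback([0.25, -3.0, 0.5, 2.0], [0.0] * 4, k=2)
    print(sparse, residual)  # large entries are sent, small ones wait in the residual
    print(compression_ratio(292_000, 1_000))  # keeping 1 entry in every 292
    print(comm_overhead(47 * 60, 3 * 60))     # hypothetical 47 min compute, 3 min sync
```

Under these assumptions, keeping one entry in 292 (with each kept entry costing a value plus an index) gives exactly 146x compression, and 3 minutes of synchronization per 50-minute round gives 6% overhead. The real system's numbers depend on details this sketch does not model.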

5. Is decentralized training undervalued?

Let's look at the data before making a judgment.

Evidence of Being Undervalued

- MMLU 67.35% vs LLaMA-2 63.08%

- MMLU-Pro 40.91% vs LLaMA-2 35.20%

- IFEval 64.70% vs LLaMA-2 40.67%

On these benchmarks, the decentralized model has surpassed Meta's heavily funded LLaMA-2-70B.

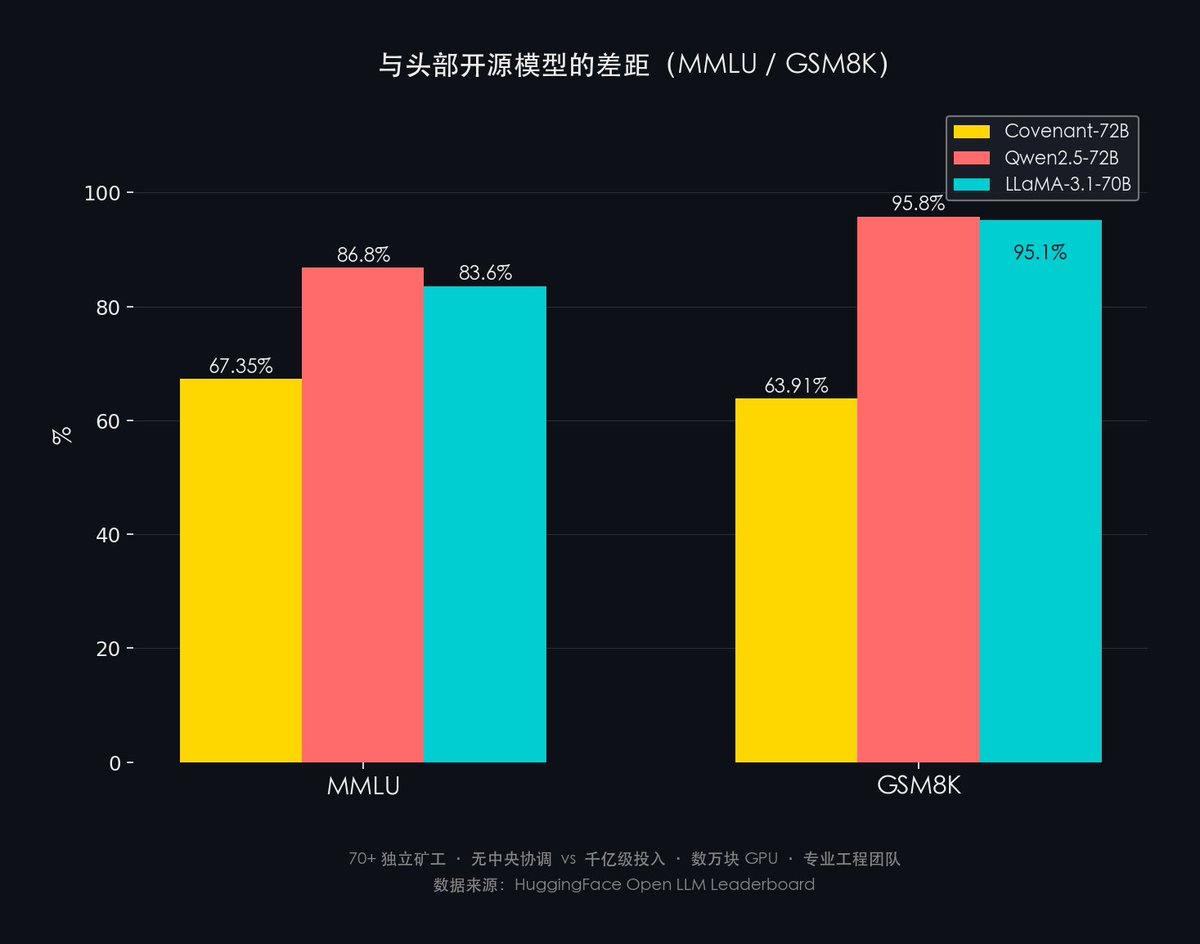

An Honest Look at the Gap with Today's Leading Open-Source Models:

- MMLU: Covenant-72B 67.35% vs Qwen2.5-72B 86.8% vs LLaMA-3.1-70B 83.6%

- GSM8K: Covenant-72B 63.91% vs Qwen2.5-72B 95.8% vs LLaMA-3.1-70B 95.1%

The gap is roughly 16 to 32 percentage points.
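The percentage-point gaps can be recomputed directly from the scores quoted in this article, both against LLaMA-2-70B and against the current leaders. The sketch uses only numbers already cited here; the dictionary layout and the `gaps` helper are illustrative. Keep in mind that published scores for different models may come from different evaluation setups (shot counts, prompt formats), so cross-model gaps are indicative rather than exact.

```python
# Scores as quoted in this article; benchmarks not quoted for a model are omitted.
COVENANT = {"MMLU": 67.35, "GSM8K": 63.91, "IFEval": 64.70}

REFERENCES = {
    "LLaMA-2-70B": {"MMLU": 63.08, "GSM8K": 52.16, "IFEval": 40.67},
    "Qwen2.5-72B": {"MMLU": 86.8, "GSM8K": 95.8},
    "LLaMA-3.1-70B": {"MMLU": 83.6, "GSM8K": 95.1},
}


def gaps(ours, reference):
    """Percentage-point difference on shared benchmarks (positive = Covenant ahead)."""
    return {b: round(ours[b] - reference[b], 2) for b in reference if b in ours}


if __name__ == "__main__":
    for name, scores in REFERENCES.items():
        print(f"Covenant-72B vs {name}: {gaps(COVENANT, scores)}")
```

Covenant-72B leads LLaMA-2-70B by about 4 to 24 points across these three benchmarks, and trails Qwen2.5-72B and LLaMA-3.1-70B by about 16 to 32 points on MMLU and GSM8K.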

However, how the comparison is framed is crucial: Covenant-72B's significance lies not in beating the SOTA but in proving that decentralized training is feasible. Qwen2.5 and LLaMA-3.1 are backed by billions of dollars in investment, tens of thousands of GPUs, and professional engineering teams; Covenant-72B relies on 70+ independent miners with no central coordination.

Trends are More Important than Snapshots:

- 2022: The best decentralized model had 6B parameters and was not even benchmarked separately on MMLU.

- 2026: 72B model, MMLU 67.35%, surpassing comparable models from Meta.

In 4 years, decentralized training has gone from a "conceptual experiment" to performance on par with a centralized model of the same size. The slope of this curve deserves more attention than any single benchmark figure.

Moreover, Covenant-72B's gap in deep reasoning already has a planned remedy: SN81 Grail handles post-training reinforcement learning (RLHF) to align the model and strengthen its capabilities. This is the kind of post-training step that turned GPT-3 into InstructGPT and helped make ChatGPT possible.

Heterogeneous SparseLoCo is the next milestone: Currently, SN3 requires all miners to use the same model of GPU. The next major technical breakthrough is Heterogeneous SparseLoCo, which will allow mixed hardware (B200 + A100 + consumer-grade GPUs) to participate in the same training task. Once realized, the computing power pool for the next round of training will expand significantly.

Decentralized training has crossed the feasibility threshold. The current gap in benchmarks is an engineering problem that continues to be optimized, not a fundamental theoretical barrier.

Part II: The Market Still Hasn't Understood This

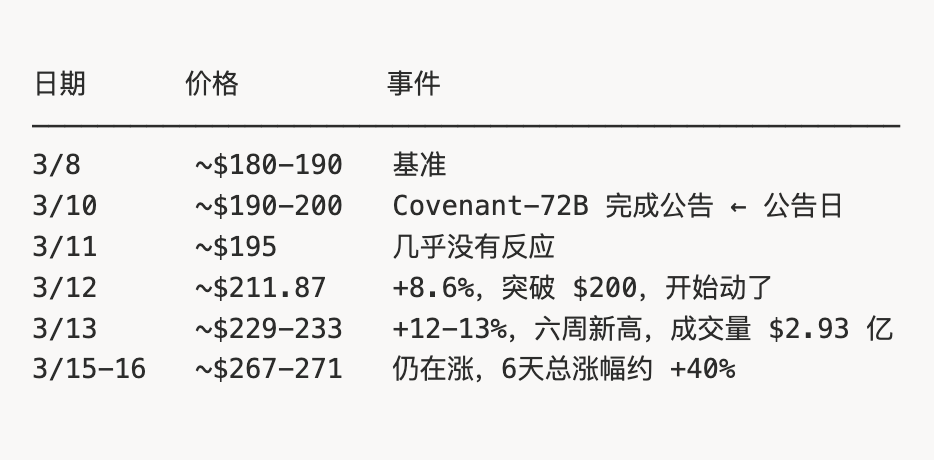

TAO Price Timeline

The price trend of $TAO after the SN3 announcement reveals this cognitive lag:

Note the two days of silence (3/10 → 3/12): After the announcement, the price barely moved.

Why was there a lag?

Crypto investors saw the news that "Bittensor SN3 has completed an AI model," but many may not grasp the technical significance of a 72B model, trained in a decentralized way, beating Meta's LLaMA-2-70B on MMLU.

AI researchers understand this technical significance, but they do not pay attention to crypto.

The cognitive gap between the two circles created a price lag window of about 2-3 days.

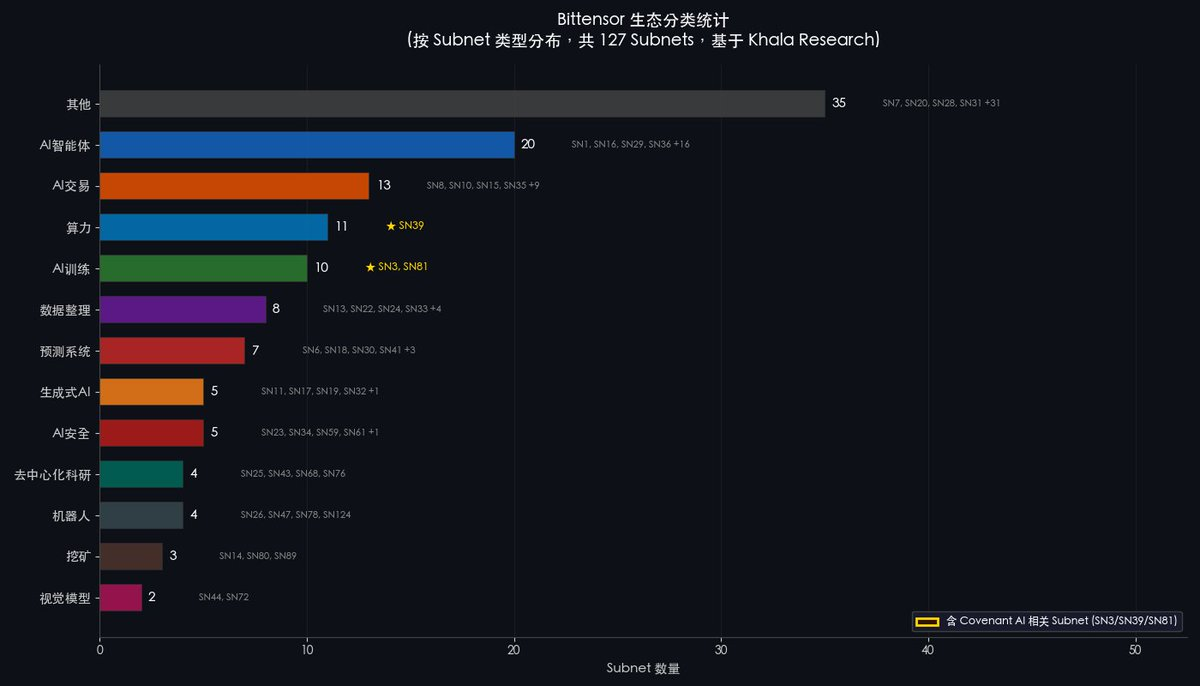

Moreover, most Crypto investors' understanding of Bittensor is stuck in the last cycle. Today, Bittensor has more than 79 active subnets, covering very different fields such as AI agents, computing power, AI training, AI trading, and robotics. When the market re-evaluates the breadth of the Bittensor ecosystem, this cognitive gap will close, and such corrections usually show up as a price surge.

Bittensor's Valuation Misalignment

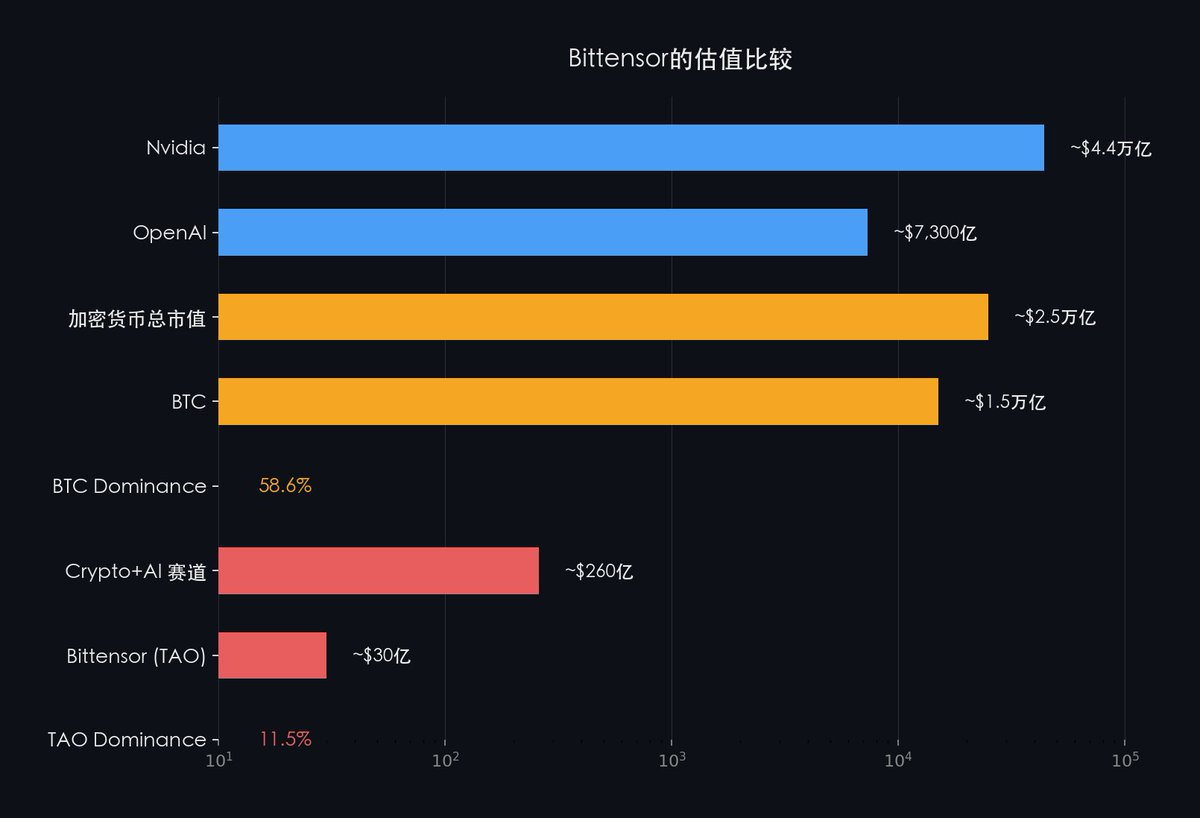

Placing Bittensor in a larger industry context:

SN3 has already proven: Bittensor can accomplish decentralized large model training.

If, in the future, AI requires an open, permissionless training network, then the only one already validated through practice is Bittensor.

The market is valuing an AI-infrastructure-level network as if it were an application-layer project.

Even within Crypto, the contrast is striking: Bitcoin has long held a 50-60% share of the entire Crypto market, while Bittensor accounts for only about 11.5% of the Crypto AI sector.

When the market re-understands Bittensor's position in AI infrastructure, this discrepancy is bound to be corrected.

Conclusion: Bittensor is the Hope of the Entire Crypto Village

If SN3 Templar's Covenant-72B proves one thing, it is:

Decentralized networks can coordinate not only capital but also computing power and cutting-edge AI research.

In the past few years, Crypto has mostly played a marginal role in AI narratives. Many projects rely on conceptual packaging, emotional hype, or capital narratives, but lack verifiable technological output. SN3 is a distinctly different case.

It has not launched a new token narrative or packaged an "AI + Web3" application layer product, but instead has accomplished something deeper and more difficult:

It trained a 72B-class large model without centralized coordination.

Participants come from around the globe, without needing to trust each other; the system relies on on-chain incentives and verification mechanisms to automatically coordinate training contributions and revenue distribution.

Crypto mechanisms have organized real productivity in the field of AI for the first time.

Many people have yet to grasp the historical significance of SN3, just as many did not realize at the time that what Bitcoin proved was not "better payments" but value consensus without centralized trust.

Today, many still see only benchmarks, model releases, or a round of price increases.

But the real change happening is that Bittensor is proving:

- Crypto can do more than just issue assets; it can also organize production

- Crypto can do more than just trade attention; it can also produce intelligence

The open-source community can contribute code and academia can contribute papers, but once the problem involves massive-scale training, long-term collaboration, cross-regional scheduling, anti-cheating, and revenue distribution, goodwill and reputation systems are nowhere near enough:

- Without economic incentives, there is no stable supply

- Without verifiable rewards and punishments, there is no long-term collaboration

- Without a tokenized coordination mechanism, it is impossible to form a truly global, permissionless AI production network

So, is Bittensor undervalued? The answer is not "possibly," but rather "significantly and systematically undervalued."

In the grand debate of "Does Crypto still have significant meaning," Bittensor is providing the most compelling answer in the entire industry.

And because of this: Bittensor is the hope of the entire Crypto village.

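Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is provided for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author has nothing to do with this platform. If any article or image published on this page involves infringement, please send the relevant proof of rights and proof of identity to support@aicoin.com, and the platform's staff will review it.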