Author: BayesCrest

The core dilemma of the AI era is not merely that technology is accelerating, but that every actor has simultaneously fallen into an open-ended prisoner’s dilemma: companies dare not stop for fear that competitors will complete AI-native restructuring first; employees dare not stop for fear that colleagues will complete skill distillation and agent migration first; investors dare not stop for fear of missing the next paradigm-level winner. As a result, everyone knows that excessive competition, excessive token spending, and excessive anxiety may not be optimal, yet the rational choice for each actor is still to keep accelerating.

Yesterday I read an article titled “Token-Maxxing for All: A Race Nobody Dares to Stop,” a set of Silicon Valley observations from Meng Xing, a partner at Five Sources Capital. It is more than a field report: it is a sample of the AI world's state transition, a record of the period in which AI moves from "tool efficiency enhancement" to "production function replacement / organizational structure rewriting / valuation system failure / social contract impact." The recurring keyword in the text is "keeping up": YC is not keeping up, company safety rules are not keeping up, token budgets are not keeping up, xAI management is not keeping up, researchers are not keeping up, computing power / electricity / data centers are not keeping up, DCF valuation frameworks are not keeping up, and social psychological resilience is also not keeping up.

The scenario described in the article records the on-site transition of AI from the "application revolution" to the "production function revolution." In other words, AI is no longer just a tool variable in the software industry but is becoming a common source of disturbance for enterprise production functions, talent structures, valuation terminal values, capital expenditures, and social order.

The most important thing about this article is not certain anecdotes themselves, but the state switch it reveals:

The core state is not that "AI is very strong," but rather: the old systems, old organizations, old valuations, old positions, and old VC rhythms were all designed for a low-speed world; now they confront the rapidly changing AI world, leading to systemic mismatch. Mapping this article to an AI World-State Migration Table:

The key signal of this article is that AI is no longer "software function upgrades," but is rewriting the production function of enterprises. However, it is not yet completely stable because on-call agents are hard to use, PMF is not synchronized, and there are significant conversion losses between token expenditure and revenue growth.

Key Insight: Token-Maxxing ≠ Productivity Realization

The author asks teams that claim "100-fold efficiency improvement":

Has revenue grown 100-fold with the 100-fold efficiency improvement?

The answer is clearly no. The observation given in the article is that many teams have indeed produced more things, but have not simultaneously formed PMF or achieved 100-fold revenue growth.

This can be abstracted into a new metric:

TTCR: Token-to-Truth Conversion Rate

Namely:

token consumption → product capability → user value → revenue / gross profit / retention / valuation conversion rate.

Many companies are now only doing:

Token Burn ↑↑

Feature Output ↑

PMF?

Revenue ↑ limited

Moat?

Valuation?

This means:

In the future, we cannot only look at AI adoption, but must look at AI absorption. That is, whether enterprises truly incorporate AI capabilities into the business loop, rather than just burning token budgets on upstream models and computing power suppliers.
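One hedged way to make TTCR concrete is to compare how much each downstream stage grew relative to token spend. The sketch below is illustrative only: the `Snapshot` fields, the ratio definition, and every figure are assumptions, not definitions or data from the article.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """One period of a hypothetical company's funnel (all fields are assumptions)."""
    token_spend: float   # money spent on model tokens
    features: float      # shipped features, a proxy for product capability
    active_users: float  # a proxy for user value
    revenue: float


def growth(before: float, after: float) -> float:
    """Growth multiple between two periods."""
    return after / before


def ttcr(before: Snapshot, after: Snapshot) -> dict[str, float]:
    """Growth of each downstream stage divided by growth in token spend.

    A value near 1.0 means the stage kept pace with token spend;
    a value near 0 means most of the spend was not absorbed.
    """
    spend_x = growth(before.token_spend, after.token_spend)
    return {
        "features": growth(before.features, after.features) / spend_x,
        "users": growth(before.active_users, after.active_users) / spend_x,
        "revenue": growth(before.revenue, after.revenue) / spend_x,
    }


# Made-up numbers: token spend up 100x, feature output up 10x, revenue up 1.5x.
before = Snapshot(token_spend=1_000, features=10, active_users=2_000, revenue=50_000)
after = Snapshot(token_spend=100_000, features=100, active_users=4_000, revenue=75_000)
ratios = ttcr(before, after)
for stage, ratio in ratios.items():
    print(f"{stage}: {ratio:.3f}")
```

Under these invented figures the ratio shrinks at every step of the chain, which is the adoption-versus-absorption gap in numeric form.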

Everyone is competing, afraid of lagging behind, and afraid of being eliminated.

This is a blind race without a visible finish line.

This stems from a deep-rooted, almost genetic human anxiety about an uncertain future: no one dares to stop, because stopping only lets the anxiety linger. I now sense that many people around me have become somewhat nihilistic.

And it is not ordinary anxiety.

It is the unique "open-ended anxiety" of the AI era: for the first time, humanity faces a technological leap that may keep self-accelerating and compressing the old order, yet offers no clear endpoint. This is entirely consistent with the recurring theme of "keeping up" in the article: YC is not keeping up, corporate safety rules are not keeping up, engineers are not keeping up, researchers are not keeping up, valuation frameworks are not keeping up, and social psychology is also not keeping up.

At the Core: This stems from Humanity's Genetic Fear of "Uncertain Futures"

The human brain was not designed for "open-ended exponential changes." The risks our ancestors faced were:

Is there food today?

Are there predators nearby?

Will the tribe abandon me?

Will winter pass?

Although these risks were terrifying, they usually had boundaries.

The risks of the AI era are different:

Will my skills be replaced?

Will my industry disappear?

Will my asset pricing become invalid?

Will the world my child grows up in still need humans?

Will the efforts made now be meaningful three years later?

These are not singular risks, but rather the instability of the world model itself.

Thus, the human brain enters a state of constant scanning:

Not because of the sight of danger, but because of the uncertainty of where danger will come from.

This is more tormenting than known dangers.

Why Does Everyone "Dare Not Stop"?

Because the current AI competition is a typical prisoner’s dilemma + arms race + identity defense battle. An individual rational person may know:

"I need to rest, I need to think, I need to clarify a bit."

But when they see others still running:

Others are using Claude Code

Others are opening 10 agents

Others are launching new products every day

Others are financing

Others are laying off to increase efficiency

Others are token-maxxing

Others are learning new tools

Others are rewriting workflows

Their psychological system will automatically translate this into: if I stop, I may be left behind by the times. So what drives them is not a love of progress but fear: no one dares to stop and wait. This distinction is critical. It indicates that the current AI competition is no longer just opportunity-driven but anxiety-driven.

This is a typical multi-layered prisoner’s dilemma. The traditional prisoner’s dilemma involves two players; the AI-era version is nested across many layers: company vs. company, employee vs. employee, investor vs. investor, nation vs. nation, model company vs. model company, startup vs. startup.

Every layer has the same structure:

So, the most fundamental paradox is:

Everyone knows that slowing down, thinking a little clearer, and being better organized might be healthier; but as long as others do not slow down, I cannot slow down.

This is the prisoner’s dilemma.

Corporate Level: Non-AI Native May Die, AI Native May Also Burn Out

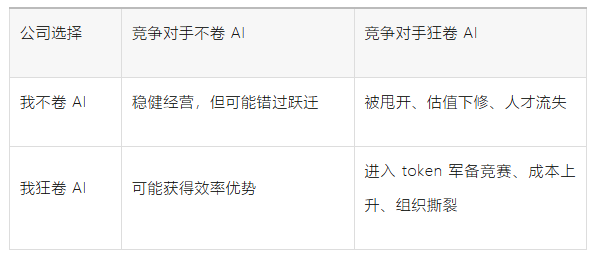

The payoff matrix faced by companies looks something like this:

So the rational choice for any single company is, regardless of whether others are racing, I must race. This is the dominant strategy.
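The dominant-strategy claim can be checked mechanically. The sketch below uses a hypothetical 2x2 payoff table; the numbers are assumptions that only encode the ordering described in the text (racing beats holding whatever the rival does), not figures from the article.

```python
# PAYOFF[(my_move, rival_move)] = my payoff. All values are hypothetical.
PAYOFF = {
    ("race", "race"): 1,  # both burn tokens, nobody gains an edge
    ("race", "hold"): 5,  # I complete AI-native restructuring first
    ("hold", "race"): 0,  # the rival restructures first and I am overtaken
    ("hold", "hold"): 3,  # both pace themselves
}
STRATEGIES = ("race", "hold")


def dominant_strategy(payoff):
    """Return the move that is strictly best against every rival move, or None."""
    for mine in STRATEGIES:
        alternatives = [s for s in STRATEGIES if s != mine]
        if all(
            payoff[(mine, rival)] > payoff[(alt, rival)]
            for rival in STRATEGIES
            for alt in alternatives
        ):
            return mine
    return None


best = dominant_strategy(PAYOFF)
both_hold_is_better = PAYOFF[("hold", "hold")] > PAYOFF[(best, best)]
print(best, both_hold_is_better)
```

With these assumed payoffs, racing is individually dominant even though both companies would be better off if both held back, which is the defining feature of a prisoner’s dilemma.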

But the overall result of the industry is:

token burn ↑

AI tool spending ↑

Redundant construction ↑

Safety rules lag ↑

Employee anxiety ↑

Acceleration of layoffs ↑

Risk that real PMF fails to keep pace ↑

In other words, at the company level, an AI-native arms race has formed.

The cruellest part is: if a company does not participate, it may be eliminated; if it does participate, it will not necessarily win, because the relationship between AI investment and business realization is not linear.

AI adoption ≠ AI absorption

Token spend ≠ Revenue growth

Agent count ≠ PMF

Code output ≠ Business truth

Being AI-native does not in itself earn a company its seat at the table; AI absorption does.

Employee Level: Not Learning AI Means Being Replaced, Learning AI May Also Mean Training One's Own Replacement

The prisoner’s dilemma for employees is even harsher.

Therefore employees will also reach the same conclusion: I cannot stop. But the problem is, the more employees try to AI-ify themselves, the more they may be helping the company complete two tasks:

1. Making their workflows explicit

2. Turning their capabilities into replicable skills / agents / templates

This is the cruellest aspect:

To avoid being replaced by AI, employees must enhance themselves with AI; but the process of enhancing oneself may accelerate their replacement by the system.

This is not ordinary internal competition but a self-distillation-style competition.

Previously, employees competed in: overtime, performance, degrees, experience, and connections.

Now employees compete in:

Who is better at prompting

Who is better at tuning agents

Who is better at building workflows

Who can turn their experiences into AI skills faster

Who can do the work of three people alone

But when one person can accomplish the work of three, companies will naturally ask: why do I still need three people? Thus, individual rational effort ultimately leads to collective job compression.

Deepest Paradox: AI Turns "Effort" into an Unstable Asset

In the past, effort had a relatively stable compounding logic:

Learn skills

→ Accumulate experience

→ Increase scarcity

→ Gain income / status / security

Now this chain has become:

Learn skills

→ Skills are quickly absorbed by AI

→ Scarcity decreases

→ Need to learn the next skill

→ Absorbed again

So many people’s sense of nihilism comes from here:

It's not that I am unwilling to put in effort, but rather I do not know where the effort is being deposited.

If the half-life of skills becomes shorter, people's psychology will change:

This is why many people feel nihilistic. It is not because they are lazy, nor is it because they are pessimistic, but because they feel:

They are running a game without save points, finish lines, or stable scoring rules.
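The "half-life of skills" idea can be put in numbers. The sketch below assumes, purely for illustration, that a skill's scarcity value decays exponentially; the decay form and the half-life values are assumptions, not claims from the article.

```python
def remaining_value(years: float, half_life_years: float) -> float:
    """Fraction of a skill's original scarcity value left after `years`,
    assuming exponential decay with the given half-life."""
    return 0.5 ** (years / half_life_years)


# Compare a slow-moving world (10-year half-life) with a fast one (2-year half-life).
for half_life in (10, 2):
    left = remaining_value(years=5, half_life_years=half_life)
    print(f"half-life {half_life}y: {left:.3f} of value left after 5 years")
```

Under this assumption, the same five years of accumulated experience retains most of its value in the slow world and very little in the fast one, which is one way to read the feeling that effort no longer compounds.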

Investor Level: Not Investing in AI Will Lose, Randomly Investing in AI Will Also Lose

VCs and secondary investors are also in the same predicament.

So the dominant strategy for investors has also become:

They must participate in AI, yet cannot know whether what they are backing is a winner or a bubble.

This results in:

Neo labs being overvalued

Congested trades in AI infra

Flood of vertical agents

SaaS being sold off

Rapid capital migration

Valuation frameworks losing their anchor

This is also a prisoner’s dilemma: each fund knows that many AI projects will go to zero, but fears missing the one that goes from zero to 100 if it does not invest. Thus, AI investment becomes a matter of buying not because of certainty, but because the relative risk of not buying is too great. This mirrors the employees' "not learning AI leads to anxiety" and the companies' "not adopting AI leads to anxiety."

National Level: AI is a National-Level Prisoner’s Dilemma

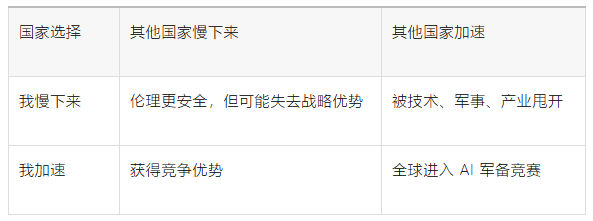

The same goes for countries.

Thus no country dares to truly stop, even though everyone knows about AI safety risks, employment impacts, energy pressures, social stratification, and model control risks. As long as one competitor continues to accelerate, the others cannot unilaterally slow down. This is why AI safety is very difficult to resolve through moral self-discipline.

It is essentially a global coordination failure issue.

This is not optimism but "Fear-Based Accelerationism"

In traditional technology cycles, everyone runs because they see wealth opportunities. Now it is more complex. Many people are running, not because they believe in a beautiful ending, but because:

Stopping is scarier.

This is what I would term Fear-Based Acceleration. Its psychological structure is: uncertainty rises → sense of control falls → anxiety rises → action numbs the anxiety → more action makes the world move faster → a faster world raises uncertainty → anxiety keeps rising. This is a self-reinforcing loop. Thus many people appear very busy, very AI-native, and very efficient on the surface, but what drives them at a deeper level is not certainty but fear.
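The self-reinforcing character of this loop can be shown with a toy simulation. Everything in the sketch below is an assumption made for illustration: the functional forms, the `feedback` parameter, and the starting value are not taken from the article.

```python
def simulate(steps: int, uncertainty: float = 1.0, feedback: float = 0.5) -> list[float]:
    """Toy model of the loop; returns the anxiety level at each step.

    Assumed forms:
      control = 1 / (1 + uncertainty)   more uncertainty, less sense of control
      anxiety = 1 - control             less control, more anxiety
      action  = anxiety                 action is taken to numb anxiety
      uncertainty += feedback * action  more action makes the world move faster
    """
    history = []
    for _ in range(steps):
        control = 1.0 / (1.0 + uncertainty)
        anxiety = 1.0 - control
        history.append(anxiety)
        uncertainty += feedback * anxiety  # action equals anxiety in this toy model
    return history


path = simulate(steps=5)
print([round(a, 3) for a in path])
```

With any positive `feedback`, anxiety rises at every step; setting `feedback=0.0` (action no longer speeds up the world) keeps it flat, which is the sense in which the loop, rather than any single shock, sustains the anxiety.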

Why Does Nihilism Arise?

Because AI does not just replace tasks; it shakes three deeper things.

First, the Meaning of Effort is Undermined

In the past, people believed: learning a skill → accumulating experience → forming professional barriers → gaining stable returns.

Now this chain has been interrupted.

People will ask:

Will what I learn today be useful two years from now? Will the capabilities I accumulated over ten years be compressed by an agent workflow? Am I chasing a future, or am I pursuing an ever-retreating target?

When the path from "effort → rewards" is unstable, naturally people will feel nihilistic.

Second, Identity is Undermined

Many people's self-worth comes from occupational identity:

I am an engineer

I am a researcher

I am an investor

I am a designer

I am a salesperson

I am an analyst

But AI will deconstruct these identities into:

What tasks can be automated?

What judgments still need humans?

What experiences have depreciated?

What capabilities can be distilled into skills?

This leads to a profound sense of loss:

It's not that I can't work anymore, but rather that "who I am" has become unstable.

Third, Future Narratives are Undermined

People need a story about the future. The past narrative was:

Study

Work

Buy a house

Get promoted

Accumulate wealth

Raise the next generation

Retire

The AI era shattered this story. Now many people's subtext is:

The world is changing too fast; I cannot model who I will be in five years. Since the future cannot be modeled, what is the meaning of my efforts in the present?

This is the source of nihilism. It is not that they really do not care; rather, they cannot find a stable meaning coordinate system.

The Essence of "Blind Racing": No Finish Line, No Referee, No Pause Button

The most terrifying aspect of this race is not the speed, but the lack of a clear ending. In the internet era, there were relatively clear endpoints:

Who captures users

Who captures traffic

Who establishes network effects

Who goes public

Who profits

The endpoints of the AI era are unclear:

Is AGI the endpoint?

Is ASI the endpoint?

Is model self-training the endpoint?

Is agent replacement of white-collar workers the endpoint?

Is the exhaustion of computing power the endpoint?

Is regulatory intervention the endpoint?

Is social backlash the endpoint?

No one knows. Therefore, everyone is not running towards a finish line, but running towards "not being eliminated." This is the cruelty of blind racing:

You cannot see the finish line, but you can hear the footsteps of everyone around you.

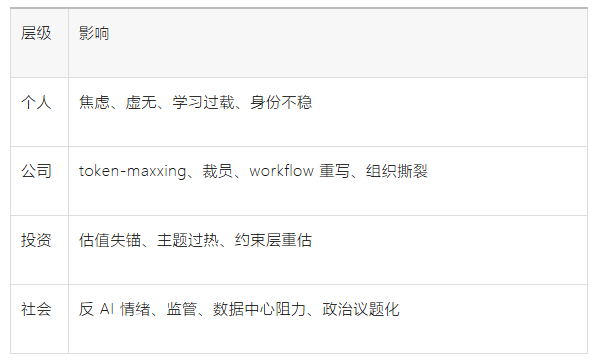

This is not an ordinary emotion; it is a macro psychological state variable. It will affect:

In Investment, This Nihilism Itself is Also a Signal

It is not pure emotional noise; this should be regarded as a Social Legitimacy / Reflexivity Signal.

When a large number of people begin to shift from "excitement" to "nihilism," it indicates that AI has moved into a later stage of its social cycle:

First stage: Awe

Second stage: Chasing

Third stage: Anxiety

Fourth stage: Backlash

Fifth stage: Institutional Reconstruction

We are now likely between the second and third stages, with some already beginning to enter the fourth stage.

The Narrative Will Continue to Strengthen

Because no one dares to stop, capital, companies, and individuals will continue to invest. This supports the continued upward demand for AI infra, computing power, token consumption, and agent toolchains.

But Bubbles and Over-investment Will Occur Simultaneously

Because many actions are not driven by rational ROI but by anxiety.

Thus we will see:

Ineffective agents

Excessive token consumption

Repeated entrepreneurship

AI wrappers flooding the market

Overvalued neo labs

Companies becoming "AI-native" just to appear AI-native

Social Backlash Will Become Increasingly Important

As anxiety spreads from Silicon Valley to ordinary white-collar workers, engineers, researchers, and outsourced service personnel, AI will no longer be just a technical topic, but will become a political issue.

This will bring:

Resistance to data centers

Regulation of AI-driven layoffs

Discussions on tax redistribution

Model security regulation

Antitrust actions

Employment protection policies

For Individuals: The Real Solution is Not to "Run Faster" but to Re-establish a Sense of Control

In this world, blind acceleration only leads to increased nihilism. Because without a judgment framework, the faster one runs, the more it feels like being dragged by the times.

A better approach is to change the question from:

How can I avoid being left behind by AI?

To:

How can I build a world model that can continuously update?

Not by predicting every future, but by establishing:

State recognition

Set of hypotheses

Evidence updating

Counter-evidence mechanisms

Action routing

Position discipline

Method post-evaluation

In other words:

Not eliminating uncertainty, but structuring uncertainty.

This is very important. Anxiety arises from an inability to model. The value of methodology is to make the uncontrollable world partially controllable.
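As a minimal sketch of what "set of hypotheses" plus "evidence updating" could look like in practice, the example below applies Bayes' rule to two competing hypotheses. The hypothesis names, priors, and likelihoods are all invented for illustration; the article does not specify a particular updating method.

```python
def bayes_update(prior: dict[str, float], likelihood: dict[str, float]) -> dict[str, float]:
    """Return the posterior over hypotheses after one piece of evidence.

    prior[h]      = belief in hypothesis h before the evidence
    likelihood[h] = probability of seeing this evidence if h were true
    """
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalized.values())
    return {h: p / total for h, p in unnormalized.items()}


# Hypothetical hypothesis set about a company, starting from an even prior.
belief = {"AI is absorbed into revenue": 0.5, "token burn without PMF": 0.5}

# Hypothetical evidence: token spend rose sharply while revenue barely moved.
# Assumed likelihoods of that observation under each hypothesis.
evidence = {"AI is absorbed into revenue": 0.2, "token burn without PMF": 0.8}

belief = bayes_update(belief, evidence)
for hypothesis, probability in belief.items():
    print(f"{hypothesis}: {probability:.2f}")
```

The point of the design is that counter-evidence moves the numbers just as mechanically as supporting evidence does, so the judgment is updated by observation rather than by the mood of the crowd.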

The Final Layer: The True Test of This Race is "Mental Structure"

The most scarce capability in the AI era may not be whether one can use tools.

But rather:

Can one maintain judgment amidst uncertainty

Can one keep one's own rhythm amid group racing

Can one maintain subjectivity under technological shock

Can one acknowledge change while not being consumed by it

Can one continue learning, but not turn oneself into an anxiety machine

This is where the true differentiation for the future arises. Ordinary people will be forced into:

Tool-chasing mode.

The strong will enter:

World model updating mode.

The stronger will enter:

Constraint recognition + value capture + method post-evaluation updating mode.

This is the core significance of building AI-based systems.

The greatest psychological impact of the AI era is not about machines replacing a specific job, but rather that humanity is facing an open-ended accelerating system for the first time without a clear endpoint, stable skill anchors, clear valuation terminal values, or a pause button. Thus actions shift from opportunity pursuit to anxiety relief, token-maxxing transforms from an efficiency tool to a psychological tranquilizer, and nihilism becomes the intermediate state after the old meaning systems are shattered and the new meaning systems have yet to be established.

Thus everyone, every company, and every investor is forced to make the same choice:

I do not know where I am running to, but I know stopping may be even more dangerous.

This is the collective psychological structure of the AI era. It is neither simple optimism nor a simple bubble; it is an open-ended prisoner’s dilemma driven by uncertainty, relative competition, identity fear, capital pressure, and technological self-acceleration.

The meaning lies here: while others relieve anxiety through racing, we must reduce anxiety through structured judgment; while others are forced to chase speed, we must identify the constraints, value capture, terminal values, and backlash behind that speed.

The real response is neither blind racing nor lying flat, but replacing instinctual anxiety with a structured world model, replacing group panic with evidence updates, and countering blind racing with rhythm discipline.

In fact, there is no need to be anxious. After all, everyone faces the same era and the same situation; we are all in the same position.
